This seems to be the year of long-awaited synths, some with gestation times extending to more than half a decade. Of these, one of the most eagerly anticipated is the Hartmann Neuron which, through what its German manufacturers rather fancily describe as a "nervous system endowed with artificial intelligence" promises a vast array of exotic new sounds. One of these is no less modestly described in the Neuron's promotional literature as a sonic event which "changes in size, growing to a towering spire of sound whose body — originally wood — gradually metallises. It picks up momentum, darting past my left ear, describing a great curve behind my back before bursting in a mist of mercurial droplets at the centre of my brain". I don't know what drugs they're taking in Ravensburg at the moment, but I strongly suggest that they stop. While such flowery hyperbole may appeal to some people, I doubt that it will excite many serious professionals with £3500 of disposable cash in their pockets.

On the other hand, wouldn't it be wonderful if Hartmann's grandiose and psychedelic claims proved to be justified? So let's see if they are...

The Basics

At first sight, the Neuron is a daunting synthesizer, overflowing with strange controls and even stranger parameter names. It's also an odd synthesizer. This is not altogether surprising since Axel Hartmann, the man behind the unconventional Waldorf Wave, the Q, and the Alesis Andromeda, designed the cosmetics. And the Neuron, with its one wooden end cheek, is certainly another unconventional design to add to his portfolio (though personally, I'd rather it had two end cheeks, or none). Another example of its non-standard design is that all the Neuron's connections, normally located on the rear of a synth, are on the non-wooden side panel, leaving the rear empty save for a huge, illuminated on/off switch. The I/O itself includes three stereo pairs of analogue outputs that you can also configure as a 5.1 surround system, plus a stereo headphone socket. Alongside these, you'll find two analogue inputs, 24-bit, 44.1kHz S/PDIF inputs and outputs, and inputs for three pedals: a continuous controller, a switch, and a sustain pedal. Finally, there are the ubiquitous MIDI In/Out/Thru and a USB socket for connection to a Mac or PC.

As the Neuron's connections are all located on its side, the rear panel contains only the stylish Hartmann logo, which doubles as the On switch. Photo: Mark Ewing

Of these, the most curious are the audio inputs. Despite references to them in Hartmann's promotional literature in the context of real-time processing of audio, mention of them is absent from the current draft of the manual (except in the specification), and they are currently inoperative. According to Hartmann, they will not become active until the Neuron's OS reaches version 2.0, which may take a while; the next planned revision is v1.3.

Having connected the Neuron's outputs to a suitable audio system, you're ready to switch on. Happily, the universal power supply accepts 100-240V, 50/60Hz, so you'll have no worries before doing so. The synth then takes about 40 seconds to boot, with a pyrotechnic display from its copious LEDs during the first half of this, and significant whirring from its high-powered cooling fan and internal hard disk drive throughout. To my mind, this makes the Neuron less than ideal for live work because the boot cycle is too long for comfort in the event of a power failure. Mind you, it's a lot better than waiting for Windows XP or Mac OS X to get their acts together. And, to the Neuron's credit, it didn't crash once during an extended and punishing review. This is more notable than it may seem, because the Neuron is based on PC architecture, and its stability is greater than could be expected of any conventional PC-based system.

Once running, the Neuron continues to make a lot of noise, most of which is contributed by the large fan mounted within the underside of the case. Hartmann implore you not to obstruct the air vents that permit the considerable airflow out of the synth, and I can see why. But there's a problem. The fan generates a level of noise that I would classify as annoying in a studio. Were this a piece of outboard equipment, you could shove it into the machine room, but as it's a performance synth, you'll have to live with the noise.

Initially, I found the Neuron's distinctive control panel rather ugly, but once I got to grips with it, I found that parts of the operating system are quick and simple to navigate. The most prominent features are the four bright orange X-Y joysticks. Three of these lie in the programming sections, and as soon as I started to think of them as vector synthesis controls, they made sense. The fourth is a pitch-bend/controller joystick, and, in my opinion, it's not well suited to this purpose; it's too short, and offers far too short a 'throw' in all directions for exact performance control.

Everything on the side; even mains power is provided via an IEC socket on the side of the Neuron. The all-important USB jack here is where the Modelmaker software is connected (via a suitable USB-to-Ethernet adaptor). Sadly, the two audio inputs are not yet functional. Photo: Mark Ewing

Programming the Neuron is achieved using the joysticks, plus a smaller, black joystick that moves you one step at a time up/down and left/right within the menu systems (there's no scrolling, sadly), numerous buttons, 'endless' wheels, 'endless' rotary knobs, an Edit knob that you can also press to confirm some changes, and a small 16 x 2 display (see right).

Some aspects of this user interface quickly gave me cause for concern. Even after making allowances for their velocity sensitivity, the wheels appear to be inconsistent in their response. The Confirm/Enter function accessed by pressing in the Edit knob also behaved inconsistently, but in a different way; in some menus you must press the knob to input a value, while in others doing so reverts to the previous value. To be fair, nothing on the Neuron failed to work throughout the review, but user-interface niggles like this can drive you mad. Happily, it would seem that Hartmann's programmers are aware of such matters; one of the planned improvements in the v2.0 Neuron OS upgrade is improved wheel and dial handling. Good.

There are no fewer than a dozen sound programming sections, although two of these — the so-called Resynators — are identical in form and function. The others are the Blender, the Shapers (1, 2 and 3), Mod, the Slicer, Silver, Effects, the Programmer, and the Controllers. Hang on a moment... Resynators, Blenders, Slicers, and a Silver module? For me, these odd labels cloud the true purpose of the Neuron's facilities, and I wish Hartmann had used simpler, more intuitive names, instead of trying to make their synth sound different with deliberately esoteric ones. OK, there are precedents for the use of 'Shaper' to describe an envelope, and 'Resynator' is a contraction of 'Resynthesizing Oscillator'. The 'Blender', which, in Hartmann's words, "arbitrates between the two Resynators", is also not unreasonable, because it's far more than merely a two-channel mixer. But as we shall see, some of the other names sound rather more impressive than the facilities they provide. The handbook — which in some places is written more like an advertisement than a manual — explains all of the sections in conventional terms, but you then have to ask why unconventional names were adopted on the synth itself.

Resynators

When reviewing any synth, no matter how it works, I usually start by taking a look at the oscillators (or, in the case of FM synths, the operators). For all its unconventional terminology, it's still possible to treat the Neuron's dual Resynators as oscillators which allow you to manipulate a sound using parameters similar in philosophy to those found on physical modelling synths such as the Korg Z1.

However, the Resynators are not conventional waveform generators, nor do they play back simple PCM samples. Instead, they draw upon 'models' of a sound derived from a sample or set of samples. Creating these models is called 'resynthesis', and for an explanation of this, I direct you to the 'What is Resynthesis?' box below.

The small joystick to the left of the LCD moves you through the Neuron's programming menus, while the Edit knob to the right of the display adjusts the displayed values, and may also be pressed to enter or confirm values, although this is not always necessary. Photo: Mark Ewing

In short, the act of turning a sample (or samples) into a model provides an opportunity for the resynthesis software to recognise prominent attributes within the sound, and assign a number of parameters to them. This is where the 'Neur...' in Neuron comes from; designer Stephan Sprenger claims that the Modelmaker software that performs this task is based upon neural-network technology derived from research into pattern recognition and artificial intelligence (for more on Neural Networks, see the box on the next page).

As the model is built, its parameters are divided into two subsets called Scape and Sphere. These are meaningless names, although Hartmann claim in the manual that they relate roughly to the excitation and resonant response of the models. This division represents the way that the real world works, and the parameters in physical-modelling synths such as the Yamaha VL1 and Korg Z1 are separated in exactly this fashion. Very broadly speaking, the excitation parameters (as the name suggests) usually relate to the way in which an instrument is energised (for example, blowing into a wind or brass instrument, strumming a guitar, hitting the keys on a piano or striking a drum). On the other hand, the resonant response parameters define what happens to that energy following the initial excitation (causing air to resonate within the body of the wind/brass instrument, exciting the strings and body of the guitar or piano, or causing the drum skin and shell to resonate inharmonically, to continue the previous examples).

Returning to the Neuron, each Scape and Sphere contains up to 12 parameters, distributed in three groups of four. Once a model is loaded into the Neuron itself, you move through these parameter sets using the Parameter Level buttons. Parameters placed diagonally across from one another are exclusive attributes (the manual cites Scape parameters of metal/wood and large/small as examples; see below) so you have two orthogonal pairs on each level.

You can edit the values within the parameter levels using the joysticks, which allow you to change the character of the sound in a dynamic and recordable fashion that Hartmann call 'stick animation'. For precise programming, you can fall back on the editing system (mini-joystick, screen and Edit knob) and for precise animation there are envelopes hard-wired to each Resynator. The Resynators also respond to conventional synthesis parameters including such time-honoured favourites as LFO pitch modulation, velocity sensitivity, key tracking and so on.

By the time I had fathomed all of this, I was becoming confident that I understood the fundamental nature of the Resynators. But I soon found that things did not react as I expected. For example, when I chose a sine wave as my basic PCM (model 511) and twiddled its Scape (roughly speaking, excitation) parameters, I expected that nothing would happen. After all, the fundamental nature of a sine wave is that it contains a single frequency... nothing more, nothing less. To my surprise, two of the three Scape levels changed the sound considerably, until it bore no resemblance whatsoever to a sine wave. The Sphere parameters also changed the sound, but this is to be expected; pass a sine wave into a physical resonator such as a soundboard or instrument body, and what you get out is far removed from the initial sound. Experiments with other models generated equally unexpected results; some pleasing, others less so. I therefore assume that the sub-divisions into Scape and Sphere are to some extent arbitrary, and not precisely related to conventional excitation/resonance models.

Modelmaker

Hartmann have now released Modelmaker, a piece of software that makes it possible for users to generate new Neuron models from monophonic 44.1kHz AIFF files. The current restriction to a single data format is a bit limiting, and it's in marked contrast to Hartmann's original claim that Modelmaker will recognise "any standard audio file format for analysis/conversion" but, hopefully, most potential users will be able to convert to mono AIFF format if need be.

By the time you read this there may also be a PC version of Modelmaker that works with WAV files (one is in preparation), but at the time of writing, the Mac reigned supreme in Neuronland. No matter... I ran the OS 9.x review copy within the Classic environment on my 1GHz G4 Titanium Powerbook, and encountered no operating problems. Strangely, Hartmann will only send you Modelmaker once you've registered the Neuron. This is odd, because the software is useless without the Neuron itself, and will remain so until the day comes when you can create a pirate copy of a hardware instrument using your PC.

An FTP client communicating with the Neuron.

The Neuron communicates with the Mac via FTP. At this point, if you are less than comfortable with configuring networks, you should turn away, because getting the Neuron, Modelmaker and a Mac up and running together is not like plugging two MIDI devices together. For one thing, you need to buy a USB-to-Ethernet converter. This could cost you anywhere between £20 and £50 in the UK depending on where you shop. You must then connect the USB end to the Neuron and the Ethernet end to the computer. Given that the Neuron costs £3500, I thought that Hartmann might have thrown in the converter, but there you are. Secondly, you need to be able to configure the computer's network software, give it a unique Ethernet IP address, and then run a suitable FTP client application. None is supplied with the Neuron, but you can download a shareware one from the Internet if you know where to look.

Since the Neuron is, at heart, a PC, I can see how a USB-USB communications link would be problematic (you can't have two USB masters, one at either end of a cable). But why didn't Hartmann use a simple Ethernet link? Well, I'm guessing here, but the adoption of USB-to-Ethernet allows the Neuron to fulfil all its communication and backup responsibilities through one connector, which is cheaper than providing both USB and Ethernet connectors on the Neuron. Happily, another email I received just as we were going to press informed me that a USB-to-USB link is also planned for when the Neuron receives its OS upgrade to version 1.3. This is welcome news.

The Neuron's 20GB internal drive contains two folders, 'ToNeuron' and 'FromNeuron', each of which contains four sub-folders named Models, SetUps, Software and Sounds (see screenshot above). Once you've set up the network between your computer and the Neuron, you can see the contents of the folders in your FTP client software. You place files in an appropriate folder to send data to the Neuron, but placing a file in the correct folder is not enough to load it; once it is there you must activate a Load routine from the front panel to get it into the Neuron so that it is available for use. Weird. The transfer procedure is painless, unless you're in a hurry. The factory set of just under 300 models and associated Sounds took 29 minutes to dump to my G4, even with the FTP client permanently in the foreground. The data occupied a hair under 1GB.
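For readers who would rather script the transfer than drag files around in an FTP client, here is a minimal sketch using Python's standard `ftplib`. Only the folder names come from the description above; the host address, the anonymous login and the file name in the usage note are assumptions for illustration, and nothing here is confirmed by Hartmann's documentation. The last function simply turns the dump time quoted above into a rough throughput figure.

```python
from ftplib import FTP
from pathlib import Path

# Sub-folder names as described in the review; everything else is assumed.
SUBFOLDERS = ("Models", "SetUps", "Software", "Sounds")


def remote_path(kind: str, filename: str, root: str = "ToNeuron") -> str:
    """Build the destination path for a file bound for the Neuron."""
    if kind not in SUBFOLDERS:
        raise ValueError(f"unknown sub-folder: {kind}")
    return f"{root}/{kind}/{filename}"


def upload(host: str, local_file: Path, kind: str) -> None:
    """Upload a single file over FTP.

    Assumptions: the Neuron accepts an anonymous login, and a single file
    is being sent (a real model is a folder of several files).
    """
    with FTP(host) as ftp:
        ftp.login()  # assumed: anonymous login
        with local_file.open("rb") as fh:
            ftp.storbinary(f"STOR {remote_path(kind, local_file.name)}", fh)


def throughput_mb_per_s(megabytes: float, minutes: float) -> float:
    """Average transfer rate in MB/s for a given size and duration."""
    return megabytes / (minutes * 60)
```

A call might look like `upload("192.168.0.10", Path("example.mod"), "Models")`, where both the address and the file name are hypothetical. Feeding roughly 1000MB and 29 minutes into `throughput_mb_per_s` gives a little under 0.6MB/s, and the front-panel Load routine is still needed after any upload.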

If you've got this far, you're ready to use Modelmaker, to load new factory sounds and models, and also to update the operating system if need be. But I fear that many potential users will shy away from this, simply because this method of interfacing with the Neuron is so involved. Sure, if SOS were a computer magazine, it would be reasonable of Hartmann to expect you to be comfortable with network configuration, IP addresses and running FTP clients. Judge for yourself, but I'm not convinced. More generally, I'm not happy that you must connect the Neuron to a computer to get full use from it. If you don't, you will be forever limited to using the models generated at the factory, and that would be a terrible waste.

Launching Modelmaker presents the screen shown above. Unfortunately, there's no context-sensitive Help file and only the skimpiest of manuals to guide you through its use, so what follows was largely discovered by trial and error.

Firstly, Modelmaker allows you to create zones across any part of the 61-note semi-weighted keyboard, and to create within each zone a two-part model derived from two samples: one that determines the nature of the model at high MIDI velocities, and one for low MIDI velocities. If we keep things at the simplest level, we can use a single zone, insert the same AIFF file into both the high- and low-velocity windows, and press the 'Process' button in the top centre of the screen to generate a model that is playable across the whole keyboard.

The Modelmaker startup screen.

Once you have done this, a new window appears (see right) and asks you some questions regarding the type of model you wish to generate. This is the moment at which you realise that the alleged intelligence of the Neuron (its promised ability to take a sample and create a unique model and unique parameters derived solely by its neural network) is not what it claims to be.

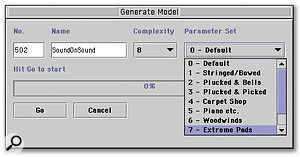

In the FAQs on their web site, Hartmann state: "Neuron analyses and recognises the sounds that are played into it. From that, it selects a set of specific parameters that characterise this sound". As things stand, this is not true. As you can see in the screenshot below, the model generator offers just a handful of options; 10 complexities, and 10 parameter sets, to be precise. What's more, the user decides which of these to use to process the samples, not the Neuron. The parameter sets (which become the Scape and Sphere parameters in the processed model) have been set up to be most suitable for particular types of sound: bowed instruments, plucked instruments, pianos, woodwind... and so on. The fact that you select these manually does mean that you can create models with, for example, a vocal sample manipulated using 'string' parameters, or a piano sample manipulated using woodwind parameters, but this is rather different from the claim that — for each model — the Neuron itself generates unique parameter sets derived from the source sample(s).

'Complexity' determines how accurately the sample is modelled. Hartmann suggest that maximum complexity produces an output identical to the input, whereas low complexities give interesting results. The former is not always true — the differences are plainly audible — and whilst I expected to find interesting side-effects from deliberately setting low complexities (much as you might deliberately sample at eight-bit resolution for creative reasons), this was not always evident either. Indeed, experiments with vocal samples (mine) and real flute samples (also mine, although you wouldn't pay to hear me produce them) demonstrated that the differences between complexities '1' and '10' could sometimes be very subtle, despite the significant differences in model sizes produced using these options (of which more in a moment).

Notwithstanding this, the combination of the Complexity and Parameter Set controls means that, for any given sample or set of samples, you can create 100 different models with subtly different characteristics. It's not as flexible as the Neuron's marketing materials lead you to expect, but you have to admit that it's more adaptable than the one set of voice parameters provided by all other synths (with the honourable exceptions of the Korg Z1 and a handful of other multi-model physical modelling instruments).

If you now press 'Go', and if everything works as it should, Modelmaker gives you a 'Successful' message, and you are then free to drag and drop the created folder (which contains the model and its associated files) into the appropriate folder in your FTP client, after which you can load it into the Neuron itself. It will then appear in the location you specified as its 'number'. There are 512 such locations, but beware... the number you specify in Modelmaker (which is '502' in the screenshot below) is where the model will appear, overwriting anything that previously existed in that location.

Selecting a Complexity and Parameter Set for the model.

Once loaded, your model is treated no differently from the ones that resided in the instrument when shipped from the factory. However... I encountered two significant problems with Modelmaker: one in the time domain, and one in the frequency domain.

Firstly, I could initially find no way to make the models continuous. Using short sounds as the basis for the models created short, one-shot models. I have no problem with this, because one could argue that sometimes the percussive nature of a sound is an important factor that should become part of the model. But when I offered Modelmaker extended samples, I expected to obtain models that I could set to sustain indefinitely. However, I was not able to create sustaining sounds in this way, and no solution was available on the Hartmann web site, in the Neuron manual, in any of Hartmann's promotional literature, or in Modelmaker itself. I contacted Hartmann, who explained that if you set loop points in your source AIFF(s) using a sample editor capable of doing this, such as BIAS Peak or TC Spark, these loop points are carried over into the model when Modelmaker does its construction work. Having learned this, I went to look at some sustaining sounds in the Neuron's factory set to see how they had been achieved, and discovered that many of the continuous models display prominent loop points, which are possibly discontinuities in the models that have been generated at the transition points of looped samples. To be fair, Hartmann say in their on-line FAQs that "If you want the model to sound perfect, the loop of the original sample has to be perfect as well".

The second problem lay in the way that the models work across the keyboard. I had expected that a model derived from a single sample would produce smoothly varying timbres with no discontinuities (which, by and large, it did) but also that models built from multisamples placed in multiple Modelmaker zones would interpolate smoothly from one note to the next as you play up and down the keyboard (which they do not). Indeed, building a complex model from a multisample showed that the Neuron is extremely prone to generating shocking discontinuities of tone and amplitude as you play from, say, C to C#, or from C# to D.

Thinking about it, I realise that this disassociation of one sample from the next in the model-making process allows you to place sounds with different characteristics on different notes within a single model (much like a multisampled drum map). But, if asked which facility is the more important (smooth modelling that creates a sound that is useable from the bottom of the keyboard to the top, or the ability to mimic multisampling), I would say the former. After all, if you want to place different sounds in different areas of the keyboard, you can always use the Neuron's four-part multitimbral Setup mode (of which more in a moment).

Building models at high complexities can take many, many times real time. Sample a couple of notes with a total duration of a few seconds, and you can wait minutes before Modelmaker finishes its deliberations. Only then can you undertake the tasks of FTPing, loading and using the model. What's more, the models are much larger than the original samples. To demonstrate this, I took a 268K AIFF file and processed it using the default parameter set at complexity '1' and complexity '10'. The resulting models filled 1.1MB and 3.0MB respectively! Of this, most of the space was occupied by the Spheres which, depending upon the nature of the sample and the model parameters used, required between 80 percent and 90 percent of the total data.

Given these figures, one could conclude that you will fill the Neuron's 20GB drive using models derived from just 2GB of sample data, which is a little over six hours of monophonic, 16-bit 44.1kHz audio. It may sound a lot, but it's not the 60 hours that a 20GB drive would initially suggest.
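The arithmetic behind these figures is easy to check. The sketch below assumes decimal units (1GB = 10^9 bytes, 1K = 10^3 bytes) and uncompressed mono 16-bit audio at 44.1kHz; the function names are mine, chosen for illustration.

```python
# Mono, 16-bit (2 bytes per sample) at 44.1kHz.
BYTES_PER_SECOND = 44_100 * 2
GB = 1e9  # assumed: decimal gigabytes


def hours_of_audio(num_bytes: float) -> float:
    """Hours of mono 16-bit 44.1kHz audio that fit in num_bytes."""
    return num_bytes / BYTES_PER_SECOND / 3600


def expansion_ratio(model_bytes: float, sample_bytes: float) -> float:
    """How many times larger a model is than its source sample."""
    return model_bytes / sample_bytes


raw_hours = hours_of_audio(20 * GB)    # whole drive as raw samples, about 63 hours
model_hours = hours_of_audio(2 * GB)   # sample data behind 20GB of models, about 6.3 hours
low = expansion_ratio(1.1e6, 268e3)    # complexity '1', roughly 4x
high = expansion_ratio(3.0e6, 268e3)   # complexity '10', roughly 11x

print(f"{raw_hours:.1f}h raw, {model_hours:.1f}h as models")
print(f"expansion: {low:.1f}x to {high:.1f}x")
```

The 2GB estimate corresponds to an expansion of about tenfold, which sits near the complexity '10' end of the measured range; models built at low complexity would stretch the drive somewhat further.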

The Blender

As already noted, the Blender (shown above) is far more than a two-channel mixer for the outputs of the dual Resynators: it also offers a range of configurations that allow you to apply the Scape of one Resynator to the Sphere of the other.

There are numerous options to control this, including the exotically named Mix Singlesphere, Chromophonic, Dual Sphered, Intermorph, Dynamic Transsphere... and others. To explain what each of these does would be to rewrite the manual, and there is neither time nor space for this. To summarise, they control which Scape or Scapes are passed to which Sphere or Spheres, and, where crossfades and blends are concerned, how long it takes for one configuration to evolve into another. One of the simpler examples of this is Dynamic Transsphere, which cross-fades Scape 1 to Scape 2 and passes the result through Sphere 2.

It took considerable time to get to grips with the possibilities offered by the Resynators and Blender, but it's actually rather simple when you become familiar with things. The difficulty is not in understanding the components, but in getting anything useful out of them. Just as early synthesists discovered that Moog Modulars and ARP 2500s offered infinite possibilities that yielded silence or — at best — unusable noises, so it is with the Neuron. These noises will do little for conventional musicians, who will find that it takes longer to coax anything they might find useful from the synth. On the other hand, strange noises may well excite those working in Hollywood's sound-effects suites no end!

Slicer & Mod

Next in the signal path lies the Slicer. This is an LFO with two modes called Vertical and 3D, and just two parameters: Depth/Spread and Rate. You would think that, with such limited options, it would be simple to describe this, but Hartmann have again chosen to be obscure in the manual, talking of Slicer generating updrafts and downdrafts which change the altitude of a 3D sonic cloud... To find out what was going on, I selected the sine wave for Resynator 1, removed all modulations within the sound, and defeated the Silver section and effects that we will discuss shortly. I now had a pure tone emitted by both channels, left and right. Switching the Slicer to 'Vertical', selecting the triangle waveform and dialling in an appropriate rate and depth, I achieved nothing more than amplitude modulation in each channel, but applied 180 degrees out of phase with one another: in other words, when the left channel was loudest, the right channel was quietest, and vice versa. This was rather disappointing; I had achieved nothing more nor less than LFO panning. Selecting some of the other models produced the same result, and selecting the sine and square waves in the Slicer produced amplitude modulation: in other words, tremolo. So much for updrafts and downdrafts!
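To see why anti-phase amplitude modulation amounts to nothing more than auto-panning, consider the following sketch. It models only what I heard in Vertical mode with the triangle wave; the gain law, the function names and the parameter ranges are my assumptions, not Hartmann's implementation.

```python
def triangle(phase: float) -> float:
    """Triangle wave in the range -1..+1; phase is measured in cycles."""
    p = phase % 1.0
    return 4.0 * abs(p - 0.5) - 1.0


def slicer_gains(t: float, rate_hz: float, depth: float) -> tuple[float, float]:
    """Left/right gains for a triangle LFO applied 180 degrees out of phase.

    Assumed behaviour: depth runs from 0 (no effect) to 1 (full modulation).
    """
    lfo = triangle(rate_hz * t)
    left = 1.0 - depth * 0.5 * (1.0 + lfo)
    right = 1.0 - depth * 0.5 * (1.0 - lfo)
    return left, right


# Sample one second of a 2Hz LFO at full depth.
gains = [slicer_gains(n / 100.0, rate_hz=2.0, depth=1.0) for n in range(100)]
sums = [left + right for left, right in gains]
```

Whatever the LFO is doing, the two gains always add up to the same value (2 minus the depth), so the sound simply slides from one speaker to the other and back again.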

The description of the 3D mode is equally pretentious, talking about clouds sweeping crossways through sonic soundscapes. What this appears to mean is that, if the Neuron is in Surround mode (of which more shortly), the LFO operates in three dimensions: left/right, front/back, and modulation depth. Performing the same experiments as above showed that, in addition to amplitude modulation, the 'spread' parameter creates pitch modulation for a range of chorus-style (and more extreme) effects.

A more conventional LFO resides in the Mod section (shown below), and offers rate (zero to 20Hz), delay, global depth and waveform parameters. On their web site, Hartmann claim that these depth and rate parameters are infinitely variable, but they are not; as explained in the manual, the rate is quantised in 0.1Hz steps.

There are 12 LFO waves, including random and the 'positive' sine and triangle waves that are essential for correct imitations of some forms of vibrato. You can route the LFO simultaneously to the volume, pitch and modelling parameters of each of the Resynators, as well as to the Blender and the filter cut-off frequency, with the depth determined individually at each destination.
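To show why a 'positive' wave matters for vibrato, here is a minimal Python sketch comparing a conventional bipolar sine with a unipolar one. I am assuming that the Neuron's 'positive' sine is simply a sine offset into the range 0 to 1; the manual may define it differently, and the function names are mine.

```python
import math


def bipolar_sine(phase: float) -> float:
    """Conventional sine LFO, swinging between -1 and +1."""
    return math.sin(2.0 * math.pi * phase)


def positive_sine(phase: float) -> float:
    """Assumed 'positive' sine: the same shape offset into 0..1."""
    return 0.5 * (1.0 + math.sin(2.0 * math.pi * phase))


def vibrato_hz(base_hz: float, depth_semitones: float, lfo_value: float) -> float:
    """Pitch after modulation; lfo_value scales the depth in semitones."""
    return base_hz * 2.0 ** (depth_semitones * lfo_value / 12.0)


if __name__ == "__main__":
    for step in range(4):
        phase = step / 4.0
        print(
            phase,
            vibrato_hz(440.0, 0.5, bipolar_sine(phase)),
            vibrato_hz(440.0, 0.5, positive_sine(phase)),
        )
```

With the bipolar wave the pitch wobbles either side of the played note; with the positive wave it only ever rises above it, as happens when a guitarist bends a string.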

Unfortunately, neither the Slicer nor Mod currently offers MIDI Sync, which I think is an oversight. Given that so many of the Neuron's characteristic patches are sliced and diced, it seems crazy that you can't sync them to the rest of a track. A fix for this is apparently on the way, but not until that fabled OS v2.0 upgrade.

Shaper 1 & Shaper 2

Like much of the Neuron, the first two velocity-sensitive Shapers offer more than might be indicated by their controls (shown below). Sure, each can act as an ADSR and affect multiple destinations simultaneously, but you can also combine them in a 'free' mode, in which they can act as a single five-stage envelope with the levels and transition times determined by the user. Well, this isn't strictly true. The Sustain time is the Gate time, and the Release level is always zero. This means that there are eight parameters, rather than the 10 you might expect. This explains the name '4L/4T' (four levels, four times) used in the Neuron's manual. The '4L/4T' mode is mutually exclusive of the dual ADSRs (ie. you can't have both simultaneously). There's also a repeat mode that turns the Shapers into three-stage ADR waveform generators that you can use as complex LFOs. This is welcome and useful, recalling the trapezoid envelope generators of the EMS VCS3.

In ADSR mode, the Shapers have numerous destinations within the Resynators, although there's no modulation matrix as such. Shaper 1 is hard-wired to the amplifier controlling Resynator 1's audio level, and is also wired to each of its model parameters. Sure, this means that the same contour is used in all cases, but you can determine the level at which it is applied in each case. Likewise, Shaper 2 controls Resynator 2. There are fewer destinations in free mode.

Silver, Setup, Surround, & Master Effects

Following the Slicer, the audio signal reaches the module that "lets you put a lustrous shine on sounds", as Hartmann put it. Despite its high-falutin' title, Silver is simply a multimode filter plus a brace of insert effect units. The filter types on offer — but only one at a time — are low-pass (6dB, 12dB and 24dB/octave), 6dB/octave high-pass, and a band-pass of unspecified slope. Each is resonant, but none self-oscillate in the true sense, needing an input from elsewhere to produce a sound. You can modulate each of the filters using Shaper 3 (which is similar to Shapers 1 & 2, but, for obvious reasons, lacks the '4L/4T' mode), the LFO, and keyboard velocity.

Alongside the filter lie the effects, split into Frequency-based effects and Time-based effects. The former includes EQ, compression, distortion, ring modulation, decimation, and Sp-warp, which is a form of frequency modulation. The latter includes Stereo Spread (which delays one channel with respect to the other to create out-of-phase effects), delay, a phaser, a flanger, and chorus. Some of these are well specified with, for example, selectable modulating waveforms and acoustic feedback in the ring modulator. Others, such as the EQ, are basic, while yet others — the phaser, flanger and chorus — are as you might expect.

The third of the Neuron's orange joysticks lies in Silver and, as in the Resynators, you can use this to determine the parameters for the Silver module. And, as in the Resynators, you can record and replay the stick's movements to generate one-shot or cyclic modulations within the sound.

The final element within Silver is a button to select Surround mode. Hartmann claim that the Neuron is the first synth designed to work in 5.1, and I must admit that — while I use many keyboards and modules with six or even eight outputs — I can't immediately think of one that treats them as a surround setup allowing panning across the soundfield. Nonetheless, the Neuron doesn't offer complete 5.1 freedom; for the moment, Surround only operates in the Neuron's four-channel multitimbral mode (named 'Setup' by Hartmann). Surround capability in the single-patch Sound mode is yet another upgrade slated for OS revision 2.0.

There are 512 Setups, and each allows you to allocate up to four sounds on up to four MIDI channels, with independent volume, pitch, stereo output assignments and pan for each. You can also define highest and lowest notes for each, and highest and lowest velocities, meaning that this is where you define keyboard and velocity splits. In addition, there are parameters for each Sound that define its mix level, its master delay send, and its master reverb send (I'll come to the Master Effects in just a moment). In Surround mode you can also position each sound in the front right/front left/rear right/rear left field, with additional parameters to boost or cut the level of the sound in the centre and low-frequency effects (LFE, or subwoofer) channels. The simplest way to do this is with the joystick in the Silver module, which is why the surround on/off button is found here, although it is not strictly a 'Silver' function. As always, you can record and replay movements of the joystick, allowing you to create 5.1 pans and sweeps. I was unable to test the Neuron in a true 5.1 context, because my review studio is as yet stereo, but by monitoring each of the channel pairs in turn, it seemed that everything was functioning correctly.

Following the Silver module, there are two master effects: a stereo delay followed by a stereo reverb, the output from which appears only on stereo output 1. Output pairs 2 and 3 are always dry, except in Surround mode, in which case the effected signals are sent to the front/rear pairs, and the centre and LFE channels are dry.

The number of parameters provided for the master effects is not overwhelming. So, although there are independent delay times for the left and right channels, the feedback, damping and mix values are the same for each. Disappointingly, there are no multi-tap or cross delays. Likewise, the reverb offers just five types — small room, medium room, hall 1, hall 2, and plate — with just mix, reverb time, diffusion, and damping parameters. Two extra parameters offer detune amount and time for the reflected signals. These are confusingly named: the effect is actually LFO pitch modulation of the reverb, with depth and rate controls.

In addition to providing the envelope for the filter cutoff frequency, Shaper 3's Attack and Decay controls also double as mix controls for the master Delay and Reverb.

Controllers & MIDI

The last of the control sections contains the performance controls: a joystick, a wheel, and a knob (shown on the next page). These are complemented by the inputs for the pedal controllers and, of course, the keyboard's velocity and channel-pressure sensitivity.

Of these, the one that I like most is the aftertouch, because it allows you to select four destinations, with individual depths for each. This, together with the three physical controllers (each of which can also affect four destinations) and the pedals, forms a true control matrix, allowing you to route each to almost every parameter within the Neuron. There are far too many destinations to list here, or even to present in a table, which gives you an idea how flexible the system can be. Strangely, the factory sounds do not take advantage of the control available, which is a shame.

The Neuron's MIDI system is straightforward, offering SysEx dumping and loading of individual sounds and setups as well as complete dumps/loads of all of each, albeit, of course, at a much slower rate than using the USB/Ethernet/FTP connection.

I found a couple of significant bugs in the MIDI implementation. Firstly, there seems to be a slight latency that becomes particularly noticeable when playing rapid passages using a remote keyboard. Secondly, the Neuron seems neither to send nor receive Program Change messages (I checked using Korg and Roland synths at the far end of the MIDI cables, with the same results in each case). Again, Hartmann are apparently aware of these problems, and they are due for correction in OS revision v1.3. More interesting, and certainly more positive, are more than 100 fixed MIDI CCs that control many aspects of the sounds, including the Scape and Sphere parameters. The opportunities offered by this are obvious, and I can see many users creating hugely complex sounds by drawing CC curves in computer-based sequencers.
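As an illustration of the CC-curve idea, the following Python sketch builds the raw bytes for a ramp of Control Change messages. The message format (status byte 0xB0 plus channel, then controller number and value) is standard MIDI, but the controller number used here is purely hypothetical: I have not reproduced the Neuron's CC map, so check the manual for the real assignments.

```python
def cc_message(channel: int, controller: int, value: int) -> bytes:
    """Build one three-byte MIDI Control Change message."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0..15")
    if not 0 <= controller <= 127 or not 0 <= value <= 127:
        raise ValueError("controller and value must be 0..127")
    return bytes([0xB0 | channel, controller, value])


def cc_ramp(channel: int, controller: int, start: int, end: int, steps: int) -> list[bytes]:
    """Linear sweep from start to end, as one would draw in a sequencer."""
    if steps < 2:
        raise ValueError("a ramp needs at least two steps")
    return [
        cc_message(channel, controller, round(start + (end - start) * i / (steps - 1)))
        for i in range(steps)
    ]


if __name__ == "__main__":
    HYPOTHETICAL_SCAPE_CC = 20  # placeholder, not taken from the Neuron manual
    for message in cc_ramp(0, HYPOTHETICAL_SCAPE_CC, 0, 127, 8):
        print(message.hex(" "))
```

A sequencer does essentially this whenever you draw a controller curve; sending several such ramps to different controllers at once is how the complex, evolving sounds mentioned above would be built.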

In Use

There's been much talk about the Neuron's sounds, so, as soon as I received the review instrument, I was keen to hear what it had to offer. On the basis that most manufacturers place their most impressive sound in the first patch location, I waited for it to boot, saw the name 'Nata' appear, and began to play. My first impressions were good: it's a vaguely oriental sound comprising an ethereal pad and a plucked arpeggio. However, the sound is swamped in effects and reverb, so I switched them off, and was presented with something which, to me, sounded very Wavestation-esque. The next patch was based upon a drum loop and sounds like... a treated drum loop. You may be tempted to think that it's the Neuron itself creating the rhythmic patch, but it's not; it's merely using the rhythmic model of an existing sample.

After a few days of experimentation, I was becoming discouraged. Many of the Sounds were of high quality, but the interest was coming from the effects, not from the unaffected Resynators. I would happily have used some of these sounds, although there was little to tempt me to replace my Korg Trinity or Triton, or indeed to give a Yamaha Motif or Roland V-Synth a run for its money. Where were the towering spires of sound, the ephemeral tinkling, and the celestial beehive that I had been promised? To be fair, the ability to choose inappropriate parameters in Modelmaker later opened the door to a great deal of experimentation, but the results were still not as diverse or radical as I had expected.

Other problems revealed themselves as I delved deeper. Take model 157: 'Tape Choir' as an example. This is clearly intended to be an imitation of the Mellotron eight-voice male choir, even to the extent that the bottom 'C' lasts for just eight seconds or thereabouts. But the duration of the notes becomes shorter and shorter, and the timbre becomes progressively more 'munchkinised' as you play up the keyboard until, at the top, the sound goes 'eep' and expires after less than a second. This, of course, is exactly what you would expect from a single, one-shot sample mapped across a keyboard, and not what you should expect from a physical model. From what I know of physical modelling, it seems to me that a better way to create this type of patch would be to map a continuous version of the sound across the whole keyboard and then specify a fixed duration at any pitch. Unfortunately, the Neuron does not work like this.
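The arithmetic behind this complaint is easy to check. When a one-shot sample is pitched up by replaying it faster, its duration halves with every octave. The short Python sketch below assumes that the eight-second source sits at the bottom C and that nothing else (looping, time-stretching) intervenes; both are my assumptions for illustration.

```python
def one_shot_duration(base_seconds: float, semitones_up: int) -> float:
    """Playing time of a one-shot sample pitched up by faster replay."""
    return base_seconds / (2.0 ** (semitones_up / 12.0))


if __name__ == "__main__":
    # Eight seconds at the bottom C, then one octave at a time.
    for octaves in range(6):
        print(octaves, one_shot_duration(8.0, 12 * octaves))
```

On these assumptions, eight seconds at the bottom C shrinks to one second three octaves higher and to half a second after four, which matches the 'eep' at the top of the keyboard all too well.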

The problems with 'Tape Choir' don't stop there, though, because as you play up and down the keyboard, the patch displays unpleasant side-effects that sound like the results of bad multisampling. There's a particularly ghastly jump between C3 and C#3, worse than anything I've heard coming from a PCM-based synth in many, many years.

I decided to look for a better choral model that was already 'looped'. This was where I ran into yet another problem. Consider model 283: 'Ohhchoir': this displays exactly the sort of 'bump' that you would expect from a badly looped sample. This bump is particularly noticeable on C3 (in fact, on all the Cs) as well as other notes such as F3. Again, you have to ask what's going on. If the bump were the result of bad looping in the source sample, why didn't Hartmann reject it, as any PCM synth manufacturer would? If the bump is a consequence of discontinuities in the model, why didn't Hartmann generate a new model?

I soon discovered that the deficiencies in 'Tape Choir' and 'Ohhchoir' were not aberrations, as I found when I switched off the effects swamping a patch that uses model 156: 'Classchoir'. This suffered from similar problems. What's more, there were even octave discontinuities within this model; A2 is 13 semitones above G#2! And 'Classchoir' wasn't the only model to suffer from this problem, as I discovered when experimenting with some of the brass models.

I suspect that these bumps and discontinuities are in part a consequence of the current limit on Neuron model sizes to just 12MB. Given the storage figures discussed earlier, it would seem likely that it's only possible at the moment to create three or four high-complexity zones across the keyboard, so it's little surprise that problems of this kind arise. Apparently, this limit is due to disappear in OS version 1.3, so the problem may be ameliorated in the future. But until then, it's not nice.

Equally disappointing is the discrete nature of the two sounds generated from the high- and low- velocity samples in Modelmaker. I think that — at the very least — we could expect these to crossfade from one to the other. As it is, they are discrete, resulting in unpleasant transitions across their boundary. Finally, I also noticed some granularity becoming audible in some of the parameters if you switch off the effects and wiggle the Resynator joysticks. To be honest, I could continue describing the models' and Resynators' deficiencies, but I think it's clear that some things are awry, and whether they are problems with the initial software release, or just bad factory programming of the supplied models, Hartmann should fix them.
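To make the velocity complaint concrete, here is a minimal Python sketch contrasting a hard velocity switch with the sort of crossfade I would have expected. The split point, the crossfade window and the equal-power curve are all my choices for illustration; none of them describes how Modelmaker or the Neuron actually works.

```python
import math


def hard_switch(velocity: int, split: int = 64) -> tuple[float, float]:
    """Gains for (low-velocity, high-velocity) sounds with an abrupt switch."""
    return (1.0, 0.0) if velocity < split else (0.0, 1.0)


def equal_power_crossfade(velocity: int, lo: int = 48, hi: int = 80) -> tuple[float, float]:
    """Gains that blend smoothly between the two sounds across lo..hi."""
    if velocity <= lo:
        return (1.0, 0.0)
    if velocity >= hi:
        return (0.0, 1.0)
    x = (velocity - lo) / (hi - lo)  # 0 at the bottom of the window, 1 at the top
    return (math.cos(x * math.pi / 2.0), math.sin(x * math.pi / 2.0))


if __name__ == "__main__":
    for velocity in (40, 60, 63, 64, 68, 90):
        print(velocity, hard_switch(velocity), equal_power_crossfade(velocity))
```

With the hard switch, velocities of 63 and 64 select entirely different sounds; with the crossfade, neighbouring velocities always produce neighbouring blends.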

Putting these worries to one side for the moment, let's assume that the Resynators and models work as they should. How does the rest of the Neuron perform?

Hartmann make huge claims for the user interface, describing it as "something entirely apart from what users have encountered with conventional synthesizers". However, there is nothing on the Neuron's control panel that has not been seen before, whether it is opto-encoders (the knobs), continuous wheels, X-Y joysticks, or LCD menus. What is true, however, is that they have not been combined in this way before. Sure, the tiny screen makes the parameters rather inaccessible, and the Resynators' parameters offer only the merest hint as to the changes you'll obtain when you alter them, but if you treat the Resynators, Blender and Slicer as largely serendipitous controls, and the Shapers, Silver and Effects as you would on any other synth, it all comes together. I liked the inclusion of the Snapshot function, which records the current parameter values, allowing you to backtrack along a chain of user-defined 'Undos' if you find that you've travelled up a sonic cul-de-sac.

Moving the Resynator joysticks can effect dramatic changes in the character of the selected Neuron sound, depending on the source sound and the model parameters that appear on the four screens surrounding each joystick, and thanks to the Stick Automation feature, these joystick movements are recordable.

Now, what about polyphony? Hartmann claim that the Neuron "has at least eight voices of polyphony and a maximum of 32". In the single-patch Sound mode, you'll be lucky to get eight voices, and 32 is out of the question. Many Sounds started stealing voices on the sixth or seventh note. In the four-part multitimbral Setup mode, even eight is an exaggeration. I managed to program Setups that made the Neuron monophonic, but there's worse; listening to the voice-stealing and glitching that occurs as the Neuron attempts to allocate six voices to a four-part Setup is simply awful.

Finally, there's another quirk; the lack of any conventional transposition capability in Sound mode. The transposition controls in the Resynators are not simple pitch controls; they fundamentally affect the nature of the sound. Only in Setup mode can you transpose a Sound so that it plays in the same way at a different position on the keyboard.

So, what's good about the Neuron? Firstly, there are the joysticks in the Resynators, which offer a form of control not found elsewhere, often over sound-shaping parameters that are not found elsewhere either. This is particularly true when you start using stick animation, making it simple to create sounds that are not obtainable from any other source. To my ears, the Korg Wavestation comes close, but there are places where the Neuron leads and the Wavestation cannot follow. Furthermore, another enhancement promised in v2.0 is 'stick zoom', which I assume means increased resolution for finer adjustments. That would be nice.

Secondly, there's the sound quality of the pads and textures. Despite the limitations of Modelmaker and the problems with the models and Resynators, the Neuron's effects can turn a rather dry sound into something much more interesting, and for this the instrument has a character that you may like. Whether the resulting patches are enough of an improvement over similar sounds available from cheaper alternatives, and whether they justify the Neuron's price tag is, of course, another question (or two).

Thirdly, there's the serendipitous nature of the programming system. Normally, I'm not a fan of the 'infinite number of monkeys' approach to synthesis, but nonetheless, uninformed twiddling with the Resynators can lead to interesting results. But having said that, I'm still convinced that it will take time and understanding to get the best from the Neuron.

Finally, if you have a penchant for sampling and mangling existing sounds, or warping vocals, the Neuron is ideal; you're more likely to create something odd and interesting here than on a conventional sampler.

A Neural What?

Throughout this review, several mentions are made of Neural Networks without explaining what they are. In brief, they are simplified computer models of the parallel processing that takes place in the human brain. Millions of times less complex than a real brain, Neural Nets are hopeless at the conventional computing tasks for which billions of sequential calculations per second are so appropriate, but they have the ability to detect patterns within large amounts of data. This makes them highly suitable for use in areas such as speech recognition and resynthesis (yippee!), but also for recognising your number plate as you pass through an electronic speed trap (boo!).

And the Future...?

Clearly, the Neuron is still a work in progress. Many facilities within the synth itself are unimplemented (the ability to receive external audio being a particularly obvious case) and I hope that Modelmaker is still in its infancy. I have mentioned a number of upgrades and bug fixes planned for release in versions 1.3 and 2.0, and Hartmann Music are also promising RAM upgrades, external drives, CD burners, and USB memory sticks in version 1.3. At some undetermined point in the far future there may even be a 'digital 5.1 edition' with an ADAT interface offering six-channel surround without going via the analogue domain.

These are all useful ideas, but I would like to propose a more fundamental improvement. Given that the Neuron is essentially a PC in synth clothing, why not give it a video monitor output and a full operating system? The Technos Axcel mentioned elsewhere in this article was fully integrated in 1988, and samplers of that era — such as the Roland S770 — offered full, graphical user interfaces, so this should not be beyond the Neuron's capabilities.

The other alternative would be to take this process one step further; the Neuron could be implemented as a software environment combining both the Modelmaker and Neuron components on one computer. This would make it cheaper, more open-ended, and probably more flexible. With a dedicated control surface featuring the joysticks for use alongside a MIDI keyboard, this could provide all the existing facilities, and more. The only disadvantage would be the entirely computer-dependent nature of the resulting system, with all that this entails for portability and stability — but the Neuron is already tied to a computer for all its Modelmaker-related resynthesis functions anyway.

Conclusions

Hartmann Music's literature suggests that a "trailblazing technology" appears every 15 to 20 years, and that the Neuron is one such product, containing "technology that in the near future will reshape the perceptions of the entire computer industry". Wow! I think that that is the boldest claim I have encountered in over 30 years of gear lust and nearly two decades of product reviewing. But quite apart from Hartmann's own contribution to the art of hyperbole, there has also been a fair amount of eulogy heaped upon the Neuron by the press, to the extent that it has been nominated for — and even received — various awards, one of which was presented fully nine months before the first units were shipped from the factory. Given its current condition, I feel this was unwise, and suspect that people have been carried away by the undeniable fact that it is different, without waiting to investigate its weaknesses as well as its promised strengths. When it works as it should (which, in my view, means waiting for OS v2.0 or beyond) it may look rather different, but for now, I can't wholeheartedly praise it; the best I can offer is, "watch this space".

What Is Resynthesis?

The Neuron generates its sounds using a form of resynthesis named 'Multi Component Particle Transform Synthesis' by designer Stephan Sprenger. However, resynthesis has already been with us for a long time.

Many people have suggested that the PPG Realiser (born 1986, died 1987 when the company folded) was the first commercial resynthesizer, but I view it as more of a modelling synth, similar in concept to today's virtual analogue synths. Nonetheless, there was one true resynthesizer announced in the 1980s. It was the Technos Axcel.

The basis of the Axcel was radical at the time, although it is far more widely understood today. In short, the system loaded a sample, analysed how the frequencies that comprised it changed during the course of the sound, and then rebuilt a close approximation to the original using a bank of amplitude-modulated digital oscillators. Of course, you could have done the same thing using an enormous additive synth, but you would have had to define incredibly complex multi-stage envelopes for every frequency contained within the sound. Thankfully, the Axcel did this for you.
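The rebuilding half of that process can be sketched as a simple additive synthesizer in Python. This is a generic illustration, not the Axcel's or the Neuron's method: the analysis stage is omitted entirely, and the partial frequencies and envelope breakpoints below are invented by hand rather than derived from a sample.

```python
import math


def envelope_at(points: list[tuple[float, float]], t: float) -> float:
    """Piecewise-linear envelope through (time, level) breakpoints sorted by time."""
    if t <= points[0][0]:
        return points[0][1]
    for (t0, a0), (t1, a1) in zip(points, points[1:]):
        if t <= t1:
            return a0 + (a1 - a0) * (t - t0) / (t1 - t0)
    return points[-1][1]


def resynthesize(partials, duration_s: float, sample_rate: int = 8000) -> list[float]:
    """Sum a bank of sine oscillators, each shaped by its own amplitude envelope.

    partials is a list of (frequency_hz, breakpoints) pairs.
    """
    out = []
    for i in range(int(duration_s * sample_rate)):
        t = i / sample_rate
        out.append(
            sum(envelope_at(env, t) * math.sin(2.0 * math.pi * f * t) for f, env in partials)
        )
    return out


if __name__ == "__main__":
    # Two invented partials: a slow fundamental and a faster-decaying octave.
    partials = [
        (220.0, [(0.0, 0.0), (0.01, 1.0), (0.5, 0.0)]),
        (440.0, [(0.0, 0.0), (0.01, 0.5), (0.2, 0.0)]),
    ]
    samples = resynthesize(partials, duration_s=0.5)
    print(len(samples), max(abs(s) for s in samples))
```

Two partials are manageable by hand; the point of a resynthesizer is that it derives hundreds of such envelopes from the source sample automatically.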

One advantage of this form of resynthesis is that the model of the sound can be much smaller than the original sample, and it becomes even smaller if you are prepared to compromise the accuracy somewhat. The second is that you can manipulate the parameters of the model to create new sounds based on the original, warping it into completely new timbres, or retaining enough of the original to be recognisable. Depending upon the complexity of the system, you can also perform tricks such as formant detection, which enables you to transpose sounds over a wider range with reduced munchkinisation. Furthermore, whereas short samples turn into brief blips at high pitches, the sound regenerated using a model can be extended in ways that cannot be achieved when replaying the original.

You might expect the Axcel to have been extremely basic compared to today's resynthesis systems, but it was not. It offered 1024 multi-waveform 'harmonic' generators, with 'intelligent' 1024-step pitch envelopes, plus similarly 'intelligent' volume envelopes and amplifiers. After resynthesis, the output from the Axcel's sound generator was passed through a pair of multi-mode filters, and you could affect aspects of the sound using 'intelligent' modulators, all adjustable in real time. If this sounds familiar, I'm not surprised; it is in essence the structure of the Neuron. Indeed, it's uncanny how much of the philosophy behind the Axcel is evident in the Neuron... not just the signal path, but even the real-time modification of the models (performed on the Axcel using a touch-sensitive screen rather than joysticks).

Things have moved forward considerably since 1988. Resynthesis is no longer the mystery it once was, and numerous hard- and software synths offer some form of it. Likewise, the science of resynthesis itself has progressed, and Stephan Sprenger's system goes way beyond building simple FFT models.

There's no reason why resynthesis should be based on sine waves, and any number of alternatives exist, each with individual strengths and weaknesses. This then leads us to the aspect of the Neuron that — if Hartmann Music's claims are to be accepted at face value — makes it different from other resynthesizers. Instead of using a single type of model to analyse and recreate all the sounds presented to it, the Neuron (or, rather, the Modelmaker software that creates the models) uses a form of processing called a Neural Net to create a unique model for each sample, such that it can be recreated and manipulated within the synth.

As discussed in the main part of this review, the natures of these models are not completely free on the Neuron, but are constrained by the 10 parameter sets provided by Modelmaker. Nonetheless, these provide significantly greater freedom than was available using the Axcel's single frequency/amplitude analysis. What's more, rather than create a single model for an evolving sound — which may be appropriate for some moments, but not for others — it is claimed that Modelmaker is capable of generating an evolving set of multiple models that 'morph' smoothly from one to the next. Confused? Then imagine a drum loop that contains kick drums and cymbals playing at different times. Clearly, a single model would be less than ideal for resynthesizing this, so the idea of creating several sub-models and stringing them together is sensible.

Unfortunately, no-one at Hartmann is handing out any information regarding the exact nature of the models generated for the Neuron. This makes it virtually impossible to test the veracity of their claims. Nonetheless, it's not difficult to understand what's going on, at least in part. Take, for example, the sample of my flute that I created when investigating Modelmaker. A suitable model derived from this sample should contain information about the frequencies contained in the note, the relative amplitudes of the tonal and noise components, the positions of the formants, the overall frequency response, and the perceived size of the cavity within which the sound acquires its unique timbre. If these are modelled successfully, you could then attach parameters to them, with names such as Low Turbulence, High Turbulence, Warm, Cold, Large, Small... and so on, each controlling an aspect of the resynthesized sound. And that's what happens when you use Modelmaker and the Neuron.

Some people have questioned whether the Neuron really is a resynthesizer, or whether the internal drive is holding samples that are mangled in some way by the synth engine. To a large extent, this is Hartmann Music's fault. By inventing meaningless terms, they have obscured many aspects of the synth.

The facts are these — after invoking Modelmaker and asking it to process the source samples, a minimum of four files are produced: 'Mname', 'map.script', and as many Scapes and Spheres as are appropriate. 'Mname' is a text file that contains exactly what you would expect: the Model Name. The 'map.script' file is another text file, and contains the information about which source samples were used where, and what user-defined parameters you have applied.

The Scape and Sphere files are much larger... indeed, the Spheres are much larger than the original samples. Whether these contain the original sound or not is open for debate. On Hartmann's web site, it says that "these models contain the actual sound", while elsewhere on... umm... Hartmann's web site, it says that "after analysing the sampled audio data you feed into it, the samples are discarded and only the model information is kept". Who's right? Hartmann or Hartmann? You tell me.

Pros

- For most of us, it's a new method of synthesis.

- New, animated sounds are possible.

- It's clearly suitable for SFX and foley.

- It holds promise for the future.

Cons

- Some factory sounds have discontinuous, glitching models, and it's all too easy to recreate similar sounds yourself with Modelmaker.

- The sounds are flat without the effects.

- Polyphony is limited.

- Modelmaker requires an external computer, and its communications system is overly complex.

- The sound engine is not suited to deterministic programming, and users trying to fathom it will be frustrated by the esoteric terminology.

- It's too noisy for a quiet studio.

- At present, it's far from finished.

Summary

At present, the Neuron is something of a mixed bag. The original concept of an 'intelligent' modelling instrument capable of deriving all a sound's parameters and model information from the source samples has been downgraded, and what's more, it currently exhibits deficiencies in its sound generators, very limited polyphony, and poor programming. On the other hand, it shows promise for the future. I suspect that you're either going to view it as an expensive work-in-progress, or love it unreservedly and defend it against all and any criticism.