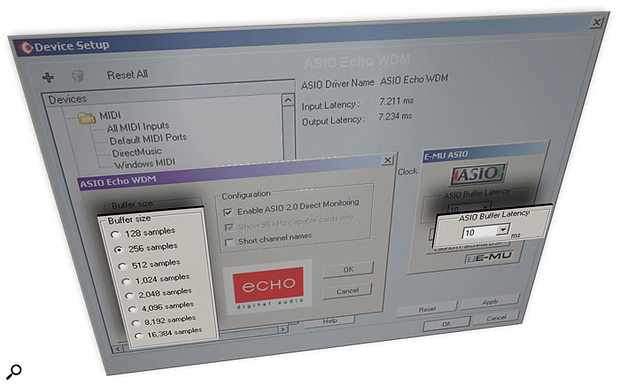

Choosing ASIO drivers, where possible, should help you achieve the lowest latency, using the Control Panel window provided by your particular audio interface. Here you can see the Control Panels for the Echo (left) and Emu (right) ranges, as launched from the Cubase SX Device Setup window.


If you're tempted to go and make a cup of tea in the gap between pressing a note on your keyboard and hearing it play on your soft synth, you need help, and quickly...

The SOS Forums are still awash with queries from new PC musicians asking why they get a delay between pressing a key on their MIDI keyboard and hearing the output of a soft synth on their computer. Sometimes this delay may be as much as a second, making 'real time' performances almost impossible. Newcomers to computer music soon cotton on to the fact that this is because of 'latency' and 'buffer sizes', but are often left wondering just what to adjust and what the 'best' setting is.

Setting the correct buffer size is crucial to achieving optimum performance from your audio interface: if it's too small you'll suffer audio clicks and pops, while if it's too large you'll encounter audible delays when performing in real time. The ideal setting can depend on quite a few different factors, including your particular PC and how you work with audio, while the parameters you're able to change, and how best to do it, can also vary considerably depending on which MIDI + Audio application you use.

Buffers: The Basics

Let's start by briefly recapping why software buffers are needed. Playing back digitised audio requires a continuous stream of data to be fed from your hard drive or RAM to the soundcard's D-A (digital to analogue) converter before you can listen to it on speakers or headphones. Recording an audio performance also requires a continuous stream of data, this time converted by the soundcard's A-D (analogue to digital) converter from an analogue waveform into digital data, and then stored either in RAM or on your hard drive.

No computer operating system can do everything at once, so a multitasking operating system such as Windows or Mac OS works by running lots of separate programs or tasks in turns, each one consuming a share of the available CPU (processor) and I/O (Input/Output) cycles. To maintain a continuous audio stream, small amounts of system RAM (buffers) are used to temporarily store a chunk of audio at a time.

For playback, the soundcard continues accessing the data within these buffers while Windows goes off to perform its other tasks, and hopefully Windows will get back soon enough to drop the next chunk of audio data into the buffers before the existing data has been used up. Similarly, during audio recording the incoming data slowly fills up a second set of buffers, and Windows comes back every so often to grab a chunk of this and save it to your hard drive.

If the buffers are too small, and Windows can't get back in time to top them up (playback) or empty them (recording), you'll get a gap in the audio stream that sounds like a click or pop and is often referred to as a 'glitch'. If the buffers are far too small, these glitches occur more often: first as occasional crackles, and eventually as almost continuous interruptions that sound like distortion as the audio starts to break up regularly.
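To make this trade-off concrete, here's a toy Python model. It's an illustrative simplification, not how any real driver schedules its buffers: it assumes one buffer per direction and a list of made-up gaps (in milliseconds) between Windows' visits, and counts a glitch whenever a gap outlasts the audio held in the buffer.

```python
def buffer_duration_ms(buffer_samples: int, sample_rate: int) -> float:
    """How long one buffer's worth of audio lasts, in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate


def count_glitches(service_gaps_ms: list[float], buffer_ms: float) -> int:
    """Count the times the OS stayed away longer than the buffered audio lasted.

    Toy model: each gap is the time between successive visits by the OS to
    top up (playback) or empty (recording) the buffer. If a gap is longer than
    the buffer's duration, the stream runs dry or overflows, and we hear a glitch.
    """
    return sum(1 for gap in service_gaps_ms if gap > buffer_ms)


# Hypothetical gaps (ms) between the OS servicing the audio buffers
gaps = [2.0, 5.0, 12.0, 3.0, 8.0]

for size in (256, 512, 1024):
    ms = buffer_duration_ms(size, 44100)
    print(f"{size} samples = {ms:.1f}ms -> {count_glitches(gaps, ms)} glitch(es)")
```

With these invented gaps, the 256-sample buffer glitches twice, 512 samples once and 1024 samples not at all, which mirrors the way bigger buffers cure clicks at the expense of latency.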

Making the buffers a lot bigger immediately solves the vast majority of problems with clicks and pops, but has an unfortunate side effect: any change that you make to the audio from your audio software doesn't take effect until the next buffer is accessed. This is latency, and is most obvious in two situations: when playing a soft synth or soft sampler in 'real time', or when recording a performance. In the first case you may be pressing notes on your MIDI keyboard in real time, but the generated waveforms won't be heard until the next buffer is passed to the soundcard. You may not even be aware of a slight time lag at all (see 'Acceptable Latency Values' box), but as it gets longer it will eventually become noticeable, then annoying, and finally unmanageable.

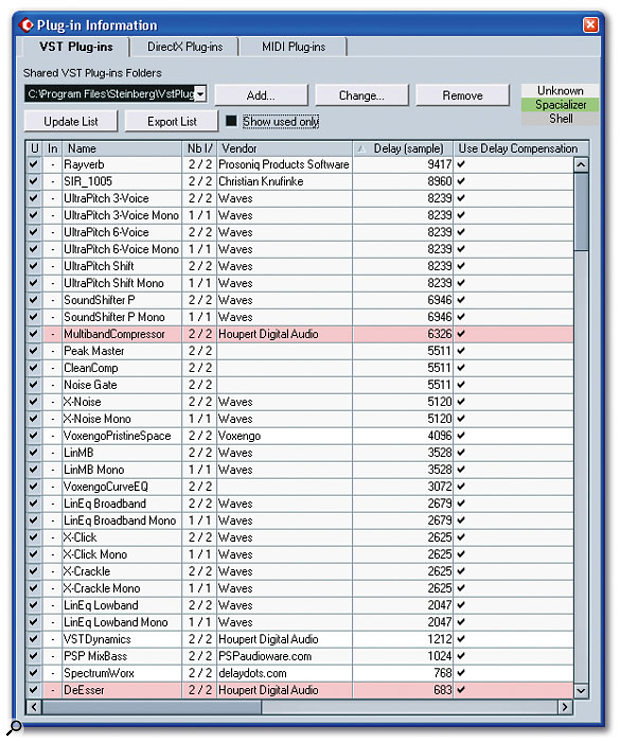

During recording, the situation is even worse, since you normally won't be able to hear the incoming signal until it has passed through the input buffers and reached the software application, then passed through a second set of output buffers on its way to the soundcard's D-A converter, and then to your speakers or headphones. 'Monitoring' latency is therefore double that of playback latency.

Some plug-ins add latency to the audio path, as revealed by this Plug-In Information window in Cubase SX. The window shows which plug-ins exhibit additional latency when used, and whether or not to automatically compensate for it.

Plug-in effects can also add their own processing latency, particularly compressors, limiters and de-essers that look ahead in the waveform to spot peaks, plus pitch-shifters and convolution-based reverbs that employ extensive computation. Any tracks in your song using these effects would be delayed compared with the rest, so to bring them all back into perfect sync most MIDI + Audio applications now offer automatic 'delay compensation' throughout the entire audio path. Unfortunately, this can also add to the record-monitoring latency, although some applications (including Cubase SX) now provide a 'Constrain Delay Compensation' function. This tries to minimise the effects of delay compensation during the recording process by temporarily disabling those plug-ins whose inherent latency is above a certain threshold (you set this in the Preferences dialogue). Activating this function during an audio recording or soft-synth performance may reduce the 'real-time' latency, and you can then deactivate it afterwards to hear every plug-in again.

Zero-latency Monitoring

As you can see, latency can be a complex and potentially confusing issue. Fortunately, many audio interfaces provide so-called 'zero-latency monitoring' for recording purposes. This bypasses the software buffers altogether, routing the interface input directly to one of its outputs. However, as I explained in my article 'The Truth About Latency' (see 'Further Reading' box), the total latency value isn't just that of the buffers. The audio signals also have to pass through the A-D and D-A converters on their way into and out of the computer, which adds a small further delay.

Converters usually add a delay of about 1ms each, but there may be other hidden delays as the signals pass through the soundcard, caused by the addition of features such as sample-rate conversion for rates of 32kHz or lower, which are sometimes not directly supported by modern converters. So 'zero'-latency monitoring actually means about 2ms latency, which is still almost undetectable in most situations. It's incredibly useful during recording, because the performers can listen to their live performance without any audible delay, which makes it far easier to monitor via headphones, for instance. However, it does have one unfortunate side-effect: you can't hear the input signal with plug-in effects, which prevents you from giving vocalists some reverb 'in the cans' to help with pitching, or guitarists some distortion, for example. One way around this is to buy an interface with on-board DSP effects and use them instead. Another is to use an external hardware unit to add effects. In both cases it's generally possible to connect them up such that you can hear the performance with effects, but still record it 'dry', for maximum flexibility during later mixdowns.
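The difference between the two monitoring routes can be sketched in a few lines of Python. This assumes exactly 1ms per converter (the rough figure above) and one input plus one output buffer of the same size, and it ignores the hidden extras such as sample-rate conversion or plug-in delays.

```python
CONVERTER_MS = 1.0  # assumption: the rough ~1ms per converter quoted above


def buffer_ms(buffer_samples: int, sample_rate: int) -> float:
    """Duration of one buffer in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate


def software_monitoring_ms(buffer_samples: int, sample_rate: int) -> float:
    """A-D converter -> input buffer -> application -> output buffer -> D-A converter."""
    one_buffer = buffer_ms(buffer_samples, sample_rate)
    return CONVERTER_MS + 2 * one_buffer + CONVERTER_MS


def direct_monitoring_ms() -> float:
    """'Zero-latency' route: input sent straight to an output, skipping the buffers."""
    return 2 * CONVERTER_MS


print(f"Software monitoring, 256 samples at 44.1kHz: {software_monitoring_ms(256, 44100):.1f}ms")
print(f"'Zero-latency' monitoring: {direct_monitoring_ms():.1f}ms")
```

At 256 samples and 44.1kHz this gives roughly 13.6ms through the software path, against about 2ms for the direct route.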

Unfortunately, you can't use zero-latency monitoring with soft synths or soft samplers, whose waveforms must always pass through a set of software buffers before being heard. So we need to keep the buffer size as small as possible, to minimise the time between hitting a note and hearing the result.

Value Judgements

Some audio-interface manufacturers make life easy for you by directly providing the playback latency value in milliseconds at the current sample rate in their Control Panel utilities, although I've come across a few that provide incorrect values! Many applications also provide a readout of this latency time. If your audio application or soundcard provides a readout of buffer size in samples instead, it's easy to convert this to a time delay by dividing the figure provided by the sample rate (the number of samples per second). For instance, in the case of a typical ASIO buffer size of 256 samples in a 44.1kHz project, latency is 256/44100, or 5.8ms, normally rounded to 6ms. Similarly, a 256-sample buffer in a 96kHz project would provide 256/96000, or 2.7ms latency.
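If you'd rather not reach for a calculator, the same sum is a one-line Python function. This is just the divide-by-sample-rate rule above; the list of buffer sizes is an assumption, since interfaces offer different choices.

```python
def buffer_to_latency_ms(buffer_samples: int, sample_rate: int) -> float:
    """Latency of one buffer: its size in samples divided by samples per second."""
    return 1000.0 * buffer_samples / sample_rate


# The two worked examples from the text
for rate in (44100, 96000):
    print(f"256 samples at {rate}Hz = {buffer_to_latency_ms(256, rate):.1f}ms")

# Typical power-of-two ASIO sizes at 44.1kHz (your interface may offer others)
for size in (64, 128, 256, 512, 1024):
    print(f"{size:4d} samples = {buffer_to_latency_ms(size, 44100):.1f}ms")
```

This prints 5.8ms and 2.7ms for the two examples, and shows that 512 and 1024 samples at 44.1kHz work out at roughly 12ms and 23ms respectively.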

Your particular MIDI + Audio application (this screenshot shows Cubase SX 3) may support various audio-interface driver formats. The preferred options, if you have a choice, are ASIO, WDM, DirectX and MME, in that order.


Most of you will have spotted that, for a given buffer size, running songs at a higher sample rate means lower latency, and some musicians assume that this is a major reason to make the switch. Unfortunately, doubling the sample rate also doubles CPU overheads, since twice as much data has to be processed by plug-ins and soft synths in the same time period. You'll thus only be able to run about half as many effects and notes as before, so do take this into account.

I've also mentioned in the past (notably in SOS June 2003) that the setting for audio interface buffers doesn't only affect latency, but also CPU overhead. However, many musicians forget this as they struggle to achieve the lowest possible latency for their system. The problem is that every time the buffers are filled and emptied, the audio interface drivers take a small CPU overhead of their own, to get started beforehand and to terminate afterwards. This overhead is negligible on its own, but because it's incurred once per buffer, it becomes increasingly significant at lower latency values. If, for example, your buffers are running at a sample rate of 44.1kHz with a 12ms latency, they only need to be filled and emptied about 86 times per second. But if you attempt to reduce buffer size to 64 samples at 44.1kHz, to achieve a latency of 1.5ms, you have to fill these buffers 689 times a second, and each time you do, the drivers consume their little extra overhead.
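Here's a rough Python sketch of why that fixed cost matters. The 20-microsecond per-buffer driver overhead is purely a hypothetical figure chosen for illustration (real drivers vary, as the next paragraph explains), but the buffers-per-second sums match the ones above.

```python
PER_BUFFER_OVERHEAD_US = 20.0  # hypothetical fixed driver cost per buffer, in microseconds


def buffers_per_second(buffer_samples: int, sample_rate: int) -> float:
    """How many times per second the buffers must be filled and emptied."""
    return sample_rate / buffer_samples


def fixed_overhead_percent(buffer_samples: int, sample_rate: int,
                           overhead_us: float = PER_BUFFER_OVERHEAD_US) -> float:
    """Share of each second of CPU time spent on per-buffer driver housekeeping."""
    busy_us = buffers_per_second(buffer_samples, sample_rate) * overhead_us
    return 100.0 * busy_us / 1_000_000


for size in (512, 64):
    print(f"{size:3d} samples: {buffers_per_second(size, 44100):6.1f} buffers/s, "
          f"~{fixed_overhead_percent(size, 44100):.2f}% CPU on fixed overhead")
```

Whatever value you assume for the per-buffer cost, dropping from 512 to 64 samples multiplies it by eight, because the buffers are serviced eight times as often.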

However efficient the driver programming, this will produce a noticeable hike in your CPU load, although some interfaces have more efficient drivers than others. Over the years I've noticed that nearly all interfaces give very similar values for CPU overhead, as measured inside applications such as Sonar and Cubase, when their latency is 12ms or higher. They can, however, vary considerably below this value, and USB and FireWire interfaces can sometimes impose a significantly higher overhead at low latencies than do PCI or PCMCIA interfaces.

Acceptable Latency Values

Here are some thoughts on acceptable values for different recording purposes:

- Vocals: This is the most difficult example, because anyone listening to their vocals in 'real time' will have headphones on, and therefore have the sounds 'inside their head'. A latency of even 3ms can be disconcerting in these conditions.

- Drums & Percussion: I suspect most drummers will prefer to work with latencies of 6ms or under, which should provide an 'immediate' response.

- Guitars: Electric guitarists generally play a few feet from their stacks, and since the speed of sound in air is roughly a thousand feet per second, each millisecond of delay is equivalent to listening to the sound from a point one foot further away. So if you can play an electric guitar 12 feet from your amp, you can easily cope with a 12ms latency.

- Keyboards: Even on acoustic pianos there's a delay between your hitting a key and the corresponding hammer hitting the string, so a smallish latency of 6ms ought to be perfectly acceptable to even the fussiest pianists. Famously, Donald Fagen and Walter Becker of Steely Dan claimed to be able to spot 5ms discrepancies in their performances, but the vast majority of musicians are unlikely to worry about 10ms, and many should find a latency of 23ms or more perfectly acceptable with most sounds, especially pads with longer attacks.
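The guitarist's rule of thumb above is easy to check in Python. The sketch uses the box's round figure of a thousand feet per second by default. The speed of sound at room temperature is actually closer to 1,125 feet per second, so the second call shows that the rule slightly understates the distance.

```python
SPEED_OF_SOUND_FT_PER_S = 1000.0  # the round figure used above; ~1,125 ft/s is closer at room temperature


def equivalent_distance_ft(latency_ms: float,
                           speed_ft_per_s: float = SPEED_OF_SOUND_FT_PER_S) -> float:
    """How far from a speaker you'd stand to hear the same delay acoustically."""
    return speed_ft_per_s * latency_ms / 1000.0


print(equivalent_distance_ft(12.0))          # rule of thumb: 12ms is like standing 12 feet away
print(equivalent_distance_ft(12.0, 1125.0))  # with a more precise speed of sound
```

This prints 12.0 and 13.5, so a 12ms latency corresponds to standing somewhere between 12 and 13.5 feet from your amp.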

Driver Options

Your application may support more than one driver format, in which case you've first got to decide on the best one. You'll have the easiest time if you can choose ASIO drivers. These generally offer the lowest latencies and CPU overheads, and are also supported by a wide range of host applications, including Steinberg's Cubasis, Cubase, Nuendo and Wavelab, Cakewalk's Project 5 and Sonar (from version 2.2 onwards), and Emagic's Logic Audio.

Sonar's WDM driver with Kernel Streaming support is capable of really low latencies once you've tweaked its Buffer Size slider.


The host application generally provides a button labelled 'Control Panel' that launches a simple utility unique to your audio interface, with a small selection of preset ASIO buffer sizes from which to choose. Playback generally stops as soon as the Panel appears, so that you can change the size, although some interfaces won't adopt your new value until you close and then re-launch the host app. However, there is sometimes a way around this. Cubase, for example, has a tick box labelled 'Release ASIO Driver in Background' that will give the Control Panel full control of the interface while its window is open, and then return control to Cubase as soon as you close it, with the new buffer size in force.

Anyone running Tascam's Gigastudio will require GSIF drivers, which mostly provide a fixed but low latency of between 6ms and 9ms and thus will work very well from day one without any tweaking. However, some modern interfaces provide a range of GSIF buffer sizes under Windows XP, generally chosen using a similar utility to the ASIO Control Panel.

WDM drivers are also capable of excellent performance with some applications (particularly Sonar), but generally take more setting up to achieve the lowest latency values, since you can often choose both the number of buffers and their sizes. Where and how to adjust these depends on the individual application (see next section). If your audio interface only has WDM drivers that provide mediocre performance but your application is ASIO-compatible, try downloading Michael Tippach's freeware ASIO4ALL driver (http://michael.tippach.bei.t-online.de/asio4all). This is a universal ASIO overlay that sits on top of any device's existing WDM driver to provide it with full ASIO support, and often far lower latencies.

DirectSound drivers can often provide good playback performance but rarely offer equivalent recording performance, so in the absence of ASIO or WDM drivers this often makes them a good choice for playing soft synths. Finally, some applications, such as Sound Forge, only support MME drivers. Even with Sound Forge's Buffer size (found in the Wave page of Options/Preferences) set to its minimum 96kb size you'll still hear a noticeable delay in some circumstances.

Latency Hints & Tips

- If you're streaming samples from a hard drive for an application such as Gigastudio, HALion or Kontakt, using a drive with a low access time will help you achieve the minimum latency.

- During playback and mixdown, latency largely determines the time it takes after you press the play button to actually begin hearing your song. Few people will notice a gap even as large as 100ms in this situation.

- If you're running a pre-mastering application such as Wavelab or Sound Forge, you don't often need to work with a low latency. Few people notice the slight time lag between altering a plug-in parameter and hearing the audio result when mastering, even when this lag is 100ms or more. The only time I find a high mastering latency annoying is when I'm bypassing a plug-in (A/B switching) to hear its effect on the sound. You ideally need the change-over to happen as soon as you click the Bypass button, but most people still won't notice a latency of 23ms in this situation.

- When using a hardware MIDI controller for automation you may not need low latency, but it's generally preferable when inputting 'real time' synth changes such as fast filter sweeps, to ensure the most responsive interface between real and virtual knobs.

- During monitoring of vocals you may be able to use 'zero-latency' monitoring for the main signal, but still add plug-in reverb using a latency of 23ms or more without causing any delay problems, as long as you use the effect totally 'wet'.

Some Application Examples

Each audio application tucks away driver and buffer-size choices in a slightly different place, although menus labelled Audio, Preferences or Setup are a good place to start looking.

- Sonar: Sonar runs a 'Wave Profiler (WDM Kernel Streaming)' utility when you first start the application, to determine the optimum size for its buffers at all available sample rates, but you can run this again at any time by clicking the Wave Profiler button on the General page of Audio Options. This sets up the MME and WDM drivers for a safe but conservative latency (typically 99ms), whose current value can be seen below the Buffer Size slider in the Mixing Latency section on this same page (see screen above). You can nearly always move this slider further to the left to reduce latency, as well as lowering the setting for 'Buffers in Playback Queue' if it's not already set to its lowest value of two. Move the slider to a new value, close the Audio Options window, then restart playback of the current song and listen for clicks and pops, as well as checking the new CPU-meter reading. In my experience, this meter reading can double between latencies of about 12ms and 3ms.

If you prefer to use Sonar with ASIO drivers, you can change the Driver Mode in the Playback and Recording section of the Advanced Audio Options page from WDM/KS to ASIO. Then exit Sonar and restart it for the change to take effect. The Mixing Latency section of Audio Options will now be greyed out, and you can click on the new ASIO Panel button to launch the familiar control panel for your particular audio interface. When you close this, the new value takes effect immediately. However, the Effective Latency value shown in Audio Options only gets updated the next time you open this window. According to my tests with Sonar's CPU meter, ASIO drivers generally give slightly lower CPU overhead than their WDM counterparts at latencies lower than about 12ms.

- Cubase SX 3: Click on Device Setup in the Device menu and then choose 'VST Audiobay' (or 'VST Multitrack' in SX 1 and 2) in the left-hand column. The type of ASIO driver can be chosen in the drop-down box at top right. By far the best choice is a true ASIO driver, such as the ASIO Echo WDM or EMU ASIO entries shown in the accompanying screenshot. You can then choose a suitable buffer size by clicking on your new driver's name in the left-hand column, and then on the Control Panel button.

The 'ASIO Multimedia' driver is generally the worst choice, since this will require you to set up the number of buffers and their size by hand, using the Advanced Options launched from the Control Panel button, and will always result in much higher latency. The best I've ever managed with my Echo Mia card is three buffers of 1024 samples, resulting in a playback latency of 93ms. Rather better is 'ASIO DirectX Full Duplex Driver', which my Echo Mia card can run with a single 768-sample buffer, resulting in a reasonable latency of 17ms.

- Wavelab: Click on Preferences in the Options menu, and then on the Audio Card tab. Here you can choose different drivers for Playback and Recording, which can be useful if you have several interfaces installed in your PC (this only applies to the MME-WDM options — choosing any ASIO driver for playback greys out the recording selections). Unusually, choosing an ASIO option still allows you to select the number of buffers, so it's important to give this the lowest setting (three) if you want really low latency.

The MME-WDM options default to six buffers with a size of 16384 bytes each, giving a huge latency of 557ms at 44.1kHz, but you can nearly always reduce this to four buffers of 16384 bytes (371ms) with no problems. If you go lower, you may find that playback is glitch-free but that juddering occurs when you display the drop-down lists of plug-ins in the Master Section. My Echo Mia card can manage five 4096-byte buffers producing 116ms of latency, which is quite low enough for most mastering purposes. However, choosing ASIO drivers is generally a much better option if they are available.

- Native Instruments soft synths: Most of these can run either as VST or DX Instruments inside a compatible host application, or as stand-alone synths using ASIO, DirectSound or MME drivers. If you choose either of the latter two options, there's a single Play Ahead slider to tweak for the lowest latency. Many interfaces manage the lowest MME setting of 10ms under Windows 98SE, but under Windows XP 40ms is more typical. DirectSound drivers generally manage 25ms or less under all versions of Windows, which can be perfectly acceptable.
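Wavelab's MME-WDM buffers are specified in bytes rather than samples, so converting them to a latency takes one extra step. The figures quoted for Wavelab above (557ms, 371ms and 116ms) are consistent with 16-bit stereo audio, or four bytes per sample frame, and that is the assumption this Python sketch makes by default.

```python
def byte_buffers_to_latency_ms(num_buffers: int, buffer_bytes: int, sample_rate: int,
                               channels: int = 2, bytes_per_sample: int = 2) -> float:
    """Total latency of a chain of byte-sized buffers (defaults assume 16-bit stereo)."""
    bytes_per_frame = channels * bytes_per_sample  # 4 bytes per 16-bit stereo frame
    frames = num_buffers * buffer_bytes / bytes_per_frame
    return 1000.0 * frames / sample_rate


# The three Wavelab MME-WDM settings mentioned above, at 44.1kHz
for n, size in ((6, 16384), (4, 16384), (5, 4096)):
    print(f"{n} x {size} bytes = {byte_buffers_to_latency_ms(n, size, 44100):.1f}ms")
```

This prints 557.3ms, 371.5ms and 116.1ms. If you work at 24-bit, set `bytes_per_sample=3` and the same byte settings give proportionally shorter latencies.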

Further Reading

- Choosing A PC Audio Interface: SOS November 2004

www.soundonsound.com/sos/nov04/articles/pcmusician.htm

- DSP-assisted Audio Effects & Latency: SOS April 2004

www.soundonsound.com/sos/apr04/articles/pcmusician.htm

- Using Hardware Effects With Your PC Software Studio: SOS March 2004

www.soundonsound.com/sos/mar04/articles/pcmusician.htm

- PC Musician Jargon Buster: SOS February 2004

www.soundonsound.com/sos/feb04/articles/pcmusician.htm

- The Truth About Latency: SOS September 2002

www.soundonsound.com/sos/Sep02/articles/pcmusician0902.asp

- The Truth About Latency Part 2: SOS October 2002

www.soundonsound.com/sos/Oct02/articles/pcmusician1002.asp

- Hear No Evil: SOS August 1999

www.soundonsound.com/sos/aug99/articles/zerolatency.htm

- Mind The Gap: SOS April 1999

The Best Latency

So what's the best buffer size for your system? This isn't straightforward to answer. If you mainly play soft synths and soft samplers, or you're recording electric guitar, a 6ms ASIO or WDM/KS latency (256 samples at 44.1kHz) is probably low enough to be unnoticeable, and won't increase your CPU overhead too much. However, if you're one of the many musicians who don't notice a 12ms latency, adopting a 512-sample buffer size at 44.1kHz will probably allow you more simultaneous notes and plug-ins.

The best way to find out is to set a latency value of about 23ms (a buffer size of 1024 samples at 44.1kHz), and then choose a soft-synth sound with as fast an attack as possible (slow-attack pads can easily be played with latencies of over a second). See if you can detect any hesitation before each note starts while you're playing in real time from a MIDI keyboard, and don't be embarrassed if you can't! If you can detect a hesitation and find this latency noticeable or even annoying, reduce the buffer to the next size down and try again, until you settle on a latency that works for you. This way you won't be wasting your processor's time by making it constantly fill unnecessarily tiny buffers.
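The listening test boils down to a simple search, sketched here in Python. The `hesitation_noticed` function is a hypothetical stand-in for your own ears, and the list of buffer sizes is an assumption, since your interface may offer different steps.

```python
from typing import Callable

SIZES_TO_TRY = (1024, 512, 256, 128, 64)  # hypothetical sizes, largest (~23ms) first


def latency_ms(buffer_samples: int, sample_rate: int = 44100) -> float:
    """Duration of one buffer in milliseconds."""
    return 1000.0 * buffer_samples / sample_rate


def find_comfortable_buffer(hesitation_noticed: Callable[[float], bool],
                            sizes: tuple[int, ...] = SIZES_TO_TRY) -> int:
    """Start large and step down only while you can still hear a hesitation."""
    for size in sizes:
        if not hesitation_noticed(latency_ms(size)):
            return size  # first size that feels immediate: stop here
    return sizes[-1]     # even the smallest size felt late, so settle on it


# Simulated player who notices anything above 10ms
print(find_comfortable_buffer(lambda ms: ms > 10.0))
```

For this simulated player, 1024 samples (23.2ms) and 512 samples (11.6ms) both feel late, so the search stops at 256 samples. Someone who never notices the delay would stay at 1024 and keep the CPU savings.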

Whatever the latency value you choose, you may have to adopt a lower one when monitoring vocals during recording, if you want to add plug-in effects 'live'. Set the buffers inside your particular MIDI + Audio application to a high latency of about 23ms and then start playback of an existing song. Watch the application's CPU meter and note its approximate reading. Now choose the next buffer size down and try again. If playback is still glitch-free, keep going, a setting at a time, until tell-tale clicks and pops start to appear. If you're lucky this won't happen until a 3ms or even lower latency, and may not even occur at the lowest available setting provided by your audio interface. But as soon as you hear even a single click, move back to the next buffer size up and try again, until you're sure that the current buffer setting is the lowest that is totally reliable. At the same time, note any increases in the CPU meter reading compared with its 23ms setting — if it's risen considerably, you'll probably find it preferable to only use this low latency during recording when you really need it for monitoring purposes, returning to your previously chosen playback setting at all other times.
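The step-down-then-back-up routine for finding the lowest reliable setting can be sketched the same way. Here `plays_glitch_free` is a hypothetical stand-in for playing back an existing song at a given buffer size and listening carefully (perhaps several times). The sketch assumes the starting setting of about 23ms is already glitch-free.

```python
from typing import Callable

SIZES = (1024, 512, 256, 128, 64, 32)  # hypothetical sizes offered by an interface


def find_lowest_reliable_buffer(plays_glitch_free: Callable[[int], bool],
                                sizes: tuple[int, ...] = SIZES) -> int:
    """Step down a size at a time; at the first click, settle on the size above it."""
    last_good = sizes[0]  # assumption: the ~23ms starting point plays cleanly
    for size in sizes[1:]:
        if not plays_glitch_free(size):
            return last_good  # first glitch: move back up one step
        last_good = size
    return last_good          # never glitched: the smallest setting is usable


# Simulated system that starts clicking below 128 samples
print(find_lowest_reliable_buffer(lambda size: size >= 128))
```

On this simulated system the search settles on 128 samples (about 2.9ms at 44.1kHz). Comparing the CPU meter at that setting with the 23ms reading then tells you whether to keep it only for recording.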

It's rare to run into any problems other than glitching when you're trying low ASIO or WDM buffer values. However, with MME and DirectSound drivers you may experience intense waveform breakup that can sometimes even crash your PC, so be very cautious when setting these driver buffer sizes below about 40ms. Change the value in small steps, and stop as soon as you start to experience glitching.

Many musicians adopt a two-stage approach — a low latency value during the audio-recording phase and a more modest one during soft-synth recording, playback and mixdown, when they can add more plug-ins. And while these procedures may sound complex, they should only take you an hour, at most, to complete, and you only need to perform them once with a particular combination of audio interface, driver version and PC. Once you've found the most suitable latency value for playback and the lowest possible latency supported by your particular PC, you'll know you're making the best use of your processor in all situations.