I've heard that with Windows XP, old versions of Norton Speed Disk do not work and that the latest version of Speed Disk included in Norton Utilities has a number of features removed and is no better, if not worse, than the defrag utility that Windows XP comes with. I'm about to move up to Windows XP to take advantage of Cubase SL, and have used Speed Disk in the past in favour of the defragger that came with Windows 98 because of the huge speed difference.

Ross Copping

SOS Contributor Martin Walker replies: It's not just a case of older versions not working. When moving to another operating system it always pays to be careful with utilities such as Speed Disk that work at a low level, since they have the potential to do some serious damage in what is, after all, unknown territory for them. If you're intending to update an existing Windows installation to XP, you should first investigate whether XP-compatible updates or upgrades have been released for all such utilities. If they have, you should install these before XP; otherwise you should uninstall the utilities altogether.

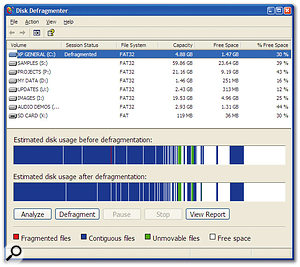

The Disk Defragmenter utility bundled with Windows XP.

As for the speed difference, there are two issues: the time it takes to defragment a drive, and how much faster it operates afterwards. I discussed the various approaches to defragmentation most recently in SOS April 2003, and although using the Disk Defragmenter bundled with Windows 98 was like watching paint dry, personally I've found the bundled Win XP defragmenter to be very effective. It has a handy Analyse function that lets you view how fragmented the drive contents are before committing yourself, and once you do, it's far quicker to use.

It can also optimise disk layout for both boot and application launches, laying out data in contiguous chunks to eliminate excessive head seeks. If you have Task Scheduler enabled it will start optimising after the first boot, so your second boot should be faster. However, while Windows XP is constantly fine-tuning file positions on each boot, Microsoft estimate that 90 percent of the optimisation is done in the first couple of boots. Even with Task Scheduler disabled you can run the new Defragmenter by hand to achieve the same improvements.
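For anyone who prefers to script the "analyse first, then defragment" routine, Windows XP also includes a command-line version of the tool, defrag.exe, which accepts a drive letter plus the -a (analyse only) and -v (verbose report) switches. What follows is only a minimal sketch of how that might be wrapped in Python. It assumes Python is installed on the PC, and the helper names build_defrag_command and run_defrag are hypothetical, invented here purely for illustration.

```python
import subprocess


def build_defrag_command(volume, analyse_only=True, verbose=False):
    """Build the argument list for XP's command-line defrag.exe.

    Hypothetical helper: only the 'defrag' program name and the
    -a / -v switches come from Windows XP itself.
    """
    if len(volume) != 2 or not volume[0].isalpha() or volume[1] != ":":
        raise ValueError("expected a drive letter such as 'C:'")
    command = ["defrag", volume.upper()]
    if analyse_only:
        command.append("-a")  # analyse only: report fragmentation, move nothing
    if verbose:
        command.append("-v")  # ask for the more detailed report
    return command


def run_defrag(volume, analyse_only=True, verbose=False):
    """Run defrag.exe and return its text report.

    Assumes a Windows machine and an account with administrator rights.
    """
    command = build_defrag_command(volume, analyse_only, verbose)
    return subprocess.check_output(command, universal_newlines=True)
```

Calling run_defrag("C:") would request an analysis report without touching any files, while run_defrag("C:", analyse_only=False) would start a real defragmentation pass, so it makes sense to read the analysis first.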

The only other advantage of Speed Disk in the past was its ability to place the swap file (or page file) outermost on a partition to provide the fastest performance, but with many musicians now routinely using 512MB or more of RAM, this becomes less important, especially as some people are routinely disabling the swap file altogether.

Although I was an enthusiastic user of Norton Utilities 2000 running on Windows 98SE, I've not personally upgraded to the latest 2002 version compatible with XP, largely because XP's own utilities all seem so much more effective than in previous versions of Windows, and because XP itself seems to need far less tweaking for audio purposes than its predecessors. Why not install Windows XP and see how you get on before deciding whether or not to invest in a suite of utilities?