Dynamics control without compression, tuning for dummies, and vocoding for fun and profit... There's all this and more in part one of our complete vocal production masterclass.

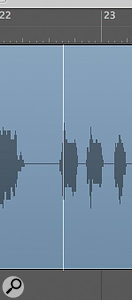

Four nodes have been created, two at each region boundary, by holding down the Control and Option keys while clicking on the region title bar with the automation select tool.

Involving vocals in your project brings with it a unique set of challenges. Lyrics must be intelligible, but the 'hard edges' of the sounds, the bits that make them clear, must not be too intrusive. Pitches must be in tune, in relation to the rest of the project, and yet retain those characteristics that distinguish humans from synthesizers, part of which is an indefinable inaccuracy of frequency. The performance should be dynamic, to convey expression, but in order to be balanced effectively with the other tracks in the project, these dynamics need to be controlled, the 'louds' not exceeding a set maximum and the 'quiets' still audible. Vocalists, with the producer's encouragement, will record as many takes of their performance as necessary to capture every phrase as perfectly as possible, but the magic that happened in take one of verse one might not happen again until take five of verse two.

With all the above in mind, a wide range of tools and skills has developed for recording and processing vocals, wider than for any other instrument, and in this article we're going to look at the use of some of them in Logic 8: dynamics control (without the use of dynamics processors), adjusting timing of phrases and words, and tuning.

The Marquee Tool: Your New Best Friend

Vocalists usually include dynamics in their performance. That is, the variations in volume that make a performance human, expressive, emotional — and difficult to mix. Human vocalists (and most of them are) also tend to move around a little when performing, introducing yet more volume variation into the recording. Using a compressor when recording, and again when mixing, is one way of controlling these 'unfortunate' characteristics, but it can alter the tonal character of the voice and accentuate unwanted parts of the performance, such as breathing. This is not appropriate for many styles of music, so a different approach to dynamics control is required, for which track automation is perfectly suited. The aim is to create a separate volume 'handle' for each word or phrase.

Typically, a vocal recording consists of multiple passes that are 'comped' into the perfect take. Quickswipe comping using Take folders makes this process straightforward and was covered in some detail in October 2008's Logic workshop (/sos/oct08/articles/logicworkshop_1008.htm). If you follow those instructions, you will probably choose to end up with a flattened Take folder exported to a new track. You may therefore already have separate regions representing phrases, but may wish to break these down further into individual words. Alternatively, if you're lucky enough to be working with a singer who can perform more than two words without having to re-record, you may not have created regions via the comping process, but will still need individual regions for each word or phrase. In both cases you could use the marquee tool to create the required regions:

1. Click-drag with the marquee tool to make a marquee selection.

2. Audition the selection by pressing the space bar to play.

3. Adjust the selection by holding down the Shift key while clicking with the marquee tool. Remember to click outside the existing selection, or the selection will disappear.

4. Turn the selection into a region by clicking it with the pointer tool.

As an alternative to the marquee tool, you can use the Strip Silence function (in the local Audio menu of the Arrange area) to automatically create regions from events. This is covered in more detail in the Logic articles of SOS May 2004 (/sos/may04/articles/logicnotes.htm) and March 2008 (/sos/mar08/articles/logictech_0308.htm).

During the processes outlined above, it should go without saying that obvious errors can be removed, either by trimming the regions you create or by simply selecting and deleting.

Automatic For The People

Shows four automation nodes, two at each edge of the marquee selection. The volume has been raised for this selection by dragging with either the automation select or pointer tools.

Now that you have created individual regions for each event (by which I mean each word or phrase) turn on Automation view (default keyboard shortcut 'A') and create volume 'handles' for each region, by choosing the Automation Select tool from the toolbox (looks like the pointer tool, but with a wiggly tail!), holding down the Control and Option modifier keys, and clicking on a region. Four nodes will be created, two on each region boundary, enabling you to alter the volume for that event (by dragging with either the automation select or pointer tools) without affecting the volume of other regions (see screen shot below).

Using the marquee tool, it is possible to achieve the same effect without creating lots of separate regions, if that suits your musical material. Choose the marquee tool as your 'Command (Apple)-click tool' (see 'Selecting Tools' box), hold down the Command key and make a marquee selection. Remember that marquee selection is governed by the rules of 'snap', as determined by the snap setting in the drop-down menu at the top of the Arrange area. So it's worth noting that holding down the Control key while selecting with the marquee tool suspends the current snap setting, allowing division-based selection. Adding the Shift key to this key combination allows tick-based snap, the resolution of which is governed by the horizontal zoom setting. Release the Command key to return to the pointer tool and, holding down the Control and Option keys, click on the marquee selection. This time an automation handle is created for the selection without creating a new region.
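If it helps to picture why a volume handle needs four nodes, here is a minimal Python sketch of the idea. It is only a model for illustration, not a description of how Logic stores automation internally; the names `make_volume_handle` and `level_at`, and the choice of seconds and decibels as units, are my own assumptions.

```python
from typing import List, Tuple

Node = Tuple[float, float]  # (time in seconds, volume in dB): illustrative units only


def make_volume_handle(start: float, end: float,
                       base_db: float, handle_db: float) -> List[Node]:
    """Return four nodes, two at each boundary of the word or phrase.

    The outer node of each pair pins the surrounding level (base_db);
    the inner node sets the level for the event itself (handle_db).
    """
    if end <= start:
        raise ValueError("end must be later than start")
    return [
        (start, base_db),    # holds the level before the event
        (start, handle_db),  # steps to the event's own level
        (end, handle_db),    # keeps that level to the end of the event
        (end, base_db),      # steps back so later material is untouched
    ]


def level_at(handle: List[Node], t: float) -> float:
    """Volume at time t when this handle is the only automation present."""
    (start, base_db), (_, handle_db), (end, _), _ = handle
    return handle_db if start <= t < end else base_db


# Hypothetical example: raise one phrase (2.0 s to 3.5 s) by 3 dB.
handle = make_volume_handle(start=2.0, end=3.5, base_db=0.0, handle_db=3.0)
```

With only two nodes (one at each boundary), changing the level would create ramps into the neighbouring words; the paired nodes are what keep the change confined to the event.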

Dragging is one way to change the timing of words or phrases, and is simple if the event in question is in its own region. But you are most likely to want to adjust timing while listening to the word or phrase in context with the rest of the track, while Logic is playing. To achieve this, you can use the nudge facility. Having checked the key command settings (see 'Key Commands: Nudging' box), use the appropriate key command to set the nudge value, then nudge the region backward or forward in time. Note that you can't nudge a marquee selection until it has been turned into a region.

As automation has been created (whether it's visible or not) you may, while nudging, get a prompt from Logic to move the automation. You do want to do this, but the prompt popping up all the time while you're nudging becomes annoying, so just un-tick 'Show this message again'.

Vocal Tuning For The Untrained

The debate surrounding vocal tuning has been raging ever since the producers of Cher's 'Believe' claimed they achieved that famous effect with a Digitech Talker vocoder pedal (see 'Recording Cher's 'Believe'' in Sound On Sound February 1999, /sos/feb99/articles/tracks661.htm). Nice try, guys! So just to add fuel to the fire, here's a way to tune a vocal even if you are not sure what notes the vocalist was supposed to be singing in the first place, or what key the song is supposed to be in. It does depend on you having a reasonably musical ear, but you don't need to be formally trained. You don't even have to have an intimate knowledge of the melody and harmony of the song, which will often be the case if you didn't write it — and sometimes even if you did!

First of all, create an instrument track with a timbrally 'neutral' sound (such as a piano). By neutral, I mean a sound that's not interesting enough to interfere with your concentration. Pianos are wonderful, of course, but we've all heard them before and we're able to forget about the timbre of the sound and concentrate on the pitch. Note that you'll have to decide whether to mute the vocal track during this process. A very wayward vocal performance may disturb your concentration, whereas a more controlled recording might inform your decisions. So try muting and un-muting as you proceed.

Listening to the relevant section of the song, repeat the note C3 along with it until you can answer the following question: 'Does this note fit, or not?' The answer should be fairly clear — if it does not fit, you'll immediately notice a clash of harmony. Sometimes the answer is immediately 'yes', as you can hear the note in the backing or the melody. Sometimes you may not be sure — it doesn't clash badly, but neither can you hear its exact match in the backing. Move up a semitone to C#3 and repeat. Keep a written note of the result for each semitone until you've tested all 12 and got back to C (C4, that is). You might even want to start on C4 and go to C5, depending on the pitch area of the music you're listening to.

Shows five notes still selected (C, D#, F, G and A#), and a response time of zero milliseconds. The result will be a machine‑perfect vocal performance with only five notes allowed!

You should now have a list of 'Yes', 'No' and 'Maybe' responses for all 12 semitones of the octave, so you're ready to tune the vocal. Insert Logic's Pitch Correction plug-in on the vocal track. In the plug-in interface, de-activate the notes that you answered 'No' to and leave the 'Maybes' on for now. (See top screen, opposite.)

Experiment with response time. A lower response time will tune the notes faster, so at a certain point the vocal will lose its natural swoops and glides and start to take on the robotic sound so popular with fans of Victoria Beckham. With a fast response time, and deselecting each of the 'maybes' in turn, you can quickly hear which ones are 'in' and which are 'out'. Having decided on notes, readjust the response time to taste. The default setting of 122ms is not a bad compromise for 'average' vocal material – it's almost like people who know what they're doing wrote this software! With reasonably accurate vocalists who just need a little 'cheering up', you can leave all 12 semitones selected, but many recordings benefit from more selective tuning, as outlined here. (Note that to return any parameter, either in a plug‑in or on the mixer, to its default setting or value, you can Option-click it.)
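To make the idea of 'allowed' notes concrete, here is a rough Python sketch of what snapping a sung pitch to the nearest permitted semitone involves. It is not the Pitch Correction plug-in's actual algorithm, which isn't documented here; it assumes equal temperament with A = 440Hz, it corrects instantly (the equivalent of a zero response time), and the function names are invented for the example. The note set is the five-note selection from the screenshot.

```python
import math
from typing import Set, Tuple

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def hz_to_midi(freq_hz: float) -> float:
    """Fractional MIDI note number, assuming A = 440 Hz is note 69."""
    return 69.0 + 12.0 * math.log2(freq_hz / 440.0)


def midi_to_hz(note: float) -> float:
    """Inverse of hz_to_midi."""
    return 440.0 * 2.0 ** ((note - 69.0) / 12.0)


def snap_to_allowed(freq_hz: float, allowed: Set[str]) -> Tuple[str, float]:
    """Return (note name, frequency) of the allowed semitone nearest freq_hz."""
    if not allowed:
        raise ValueError("at least one note must be allowed")
    pitch = hz_to_midi(freq_hz)
    centre = round(pitch)
    # Search one octave either side, keeping only the notes left switched on.
    candidates = [n for n in range(centre - 12, centre + 13)
                  if NOTE_NAMES[n % 12] in allowed]
    nearest = min(candidates, key=lambda n: abs(n - pitch))
    return NOTE_NAMES[nearest % 12], midi_to_hz(nearest)


# The five notes left selected in the screenshot.
allowed_notes = {"C", "D#", "F", "G", "A#"}
name, corrected_hz = snap_to_allowed(440.0, allowed_notes)
```

A sung A is not in the allowed set, so in this sketch it is pulled to the nearest permitted neighbour. That is why switching off a 'maybe' can audibly change the melody, and why it is worth auditioning each one in turn.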

One further tuning trick I have seen used with vocals is, having tuned them, deliberately detuning the result — usually slightly sharp. The theory here is that if everything is perfectly in tune, some of the nuances of frequency that give a part 'identity' in a mix are removed, and the part gets 'lost' in the mix. Secondly, most vocalists will naturally glide up to notes, not down, so even if they end up perfectly in tune on each note's final resting pitch, the 'average' pitch perceived by the listener is flat (too low). Sharpening the whole performance can make a 'correct' performance sound brighter and more confident for this reason. Moving the detune slider 10-15 cents sharp can help to reveal a part that is getting swamped in this way, without turning up the level.
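For a sense of scale: a cent is one hundredth of an equal-tempered semitone, so there are 1200 to the octave. The short Python sketch below converts a detune amount in cents into a frequency; the function name `detune` is my own, and the 440Hz starting point is just an example.

```python
def detune(freq_hz: float, cents: float) -> float:
    """Shift a frequency by a number of cents (1200 cents = one octave)."""
    return freq_hz * 2.0 ** (cents / 1200.0)


# How far a 440 Hz note moves at the two ends of the suggested range.
for cents in (10, 15):
    shifted = detune(440.0, cents)
    print(f"+{cents} cents: {shifted:.2f} Hz ({shifted - 440.0:+.2f} Hz)")
```

The shift is only a few Hertz at this pitch, which is why it tends to register as 'brighter' rather than as obviously out of tune.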

All the above skills rely on an intuitive sense of what is right for the project, and you can't learn that by reading a technique column. Mixing is a musical process and will therefore require practice, just like playing a musical instrument. Sometimes, leaving the original performance well alone, warts and all, is the right course. Soft Cell's 'Tainted Love' is a case in point: if the vocal had been tuned or fixed in any way at all, that song would have none of the 'identity' that helped it to sell millions.

From The Sublime To The Daft (Punk): Vocoding

If you're not content with simply tuning vocal tracks and it suits the style of music, you might want to go the way of Daft Punk or Kraftwerk and add some vocoder action — always a good idea if your vocalist is so bad you don't want anyone to recognise them!

1. First, use the Pitch Correction plug-in with a very fast response, to achieve a robotic sound.

2. Bounce this track and re-import to a new audio track.

3. Double-click the new region to open the Sample editor and choose Audio to Score from the Factory menu.

4. Make sure you have the playhead lined up with the beginning of the audio region on the Arrange area, and a new instrument track selected. This will be the destination of the MIDI region you are about to create.

5. In the Audio to Score dialogue, choose an appropriate preset. You will need to experiment a bit, as they each give slightly different results, and as this is a 'creative' process there is no definitively correct choice.

6. Click the process button to analyse the audio region and create MIDI events that Logic thinks are the correct rhythm and pitch. This is not always as successful as you might wish, and may require editing in the Piano Roll to remove unwanted glitches or to adjust incorrect pitches (although sometimes it is just these 'mistakes' that give the part its character!).

It might be interesting at this point to consider how a vocoder works. In essence, vocoding is a technique whereby an incoming audio signal (sometimes called the 'modulator') is 'analysed', and this analysis is applied to a synthesized sound (the 'carrier') created by the instrument. This is usually achieved by passing both the analysis and synthesized signal through corresponding banks of band-pass filters, resulting in the unique sound typified by Laurie Anderson's 'O Superman', and nearly everything Kraftwerk have ever released. Many vocoder artists work in real time, playing the synthesizer at the same time as singing or speaking the modulator signal into a microphone attached to the instrument, but the method described here allows you to recreate this effect 'after the event', on previously recorded material. To achieve this in Logic, then, continue with the following steps...

7. Call up a Vocoder instrument as the input to the Instrument track, and set its side-chain input to the audio track on which sits the bounced audio region (the modulator). Logic Pro's EVOC20 Polysynth contains several presets (in the Vintage Vocoder folder in the Library) that recreate the classic vocoder sounds of the '70s and '80s, and are, of course, infinitely tweakable.

8. Choose a suitable sound, and the vocoder will play the pitches in the MIDI region, modulated by events in the audio region. Mute the audio track to hear just the vocoder output, if you wish.

The Audio to Score dialogue. Experiment with the presets and other choices here to achieve a suitable conversion of your audio recording into MIDI events

It's a long way from the high-fidelity audio you lovingly recorded in that wonderful acoustic space, but a lot of fun while the fashion lasts (actually, it's probably already over by the time you read this)!
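For the curious, the filter-bank idea described earlier can be sketched in a few lines of Python. This is the general textbook 'channel vocoder' under simple assumptions, not the EVOC20's own algorithm: each band uses a basic second-order band-pass filter, the 'analysis' is a rectify-and-smooth envelope follower, and the band centres, Q value and test signals are all made up for the example.

```python
import math
from typing import List, Sequence


def bandpass(signal: Sequence[float], centre_hz: float, fs: float,
             q: float = 5.0) -> List[float]:
    """Second-order band-pass filter (standard biquad, unity gain at centre)."""
    w0 = 2.0 * math.pi * centre_hz / fs
    alpha = math.sin(w0) / (2.0 * q)
    a0 = 1.0 + alpha
    b0, b2 = alpha / a0, -alpha / a0  # the b1 coefficient is zero for a band-pass
    a1, a2 = -2.0 * math.cos(w0) / a0, (1.0 - alpha) / a0
    out: List[float] = []
    x1 = x2 = y1 = y2 = 0.0
    for x in signal:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        out.append(y)
        x2, x1 = x1, x
        y2, y1 = y1, y
    return out


def envelope(signal: Sequence[float], fs: float,
             smoothing_s: float = 0.01) -> List[float]:
    """Rectify, then smooth with a one-pole low-pass: the 'analysis' stage."""
    k = 1.0 - math.exp(-1.0 / (smoothing_s * fs))
    env, out = 0.0, []
    for x in signal:
        env += k * (abs(x) - env)
        out.append(env)
    return out


def vocode(modulator: Sequence[float], carrier: Sequence[float], fs: float,
           centres_hz: Sequence[float], q: float = 5.0) -> List[float]:
    """Impose the modulator's band-by-band level on the carrier."""
    n = min(len(modulator), len(carrier))
    out = [0.0] * n
    for centre in centres_hz:
        level = envelope(bandpass(modulator[:n], centre, fs, q), fs)
        band = bandpass(carrier[:n], centre, fs, q)
        for i in range(n):
            out[i] += band[i] * level[i]
    return out


# Made-up test signals: a sawtooth 'synth' carrier and a sine standing in for the voice.
FS = 8000.0
N = 2000
BANDS = [250.0, 500.0, 1000.0, 2000.0]
carrier = [2.0 * ((110.0 * i / FS) % 1.0) - 1.0 for i in range(N)]
modulator = [math.sin(2.0 * math.pi * 500.0 * i / FS) for i in range(N)]
robot = vocode(modulator, carrier, FS, BANDS)
```

Note that the carrier only sounds while the modulator has energy in the same band, which is why muting or silencing the modulator track silences the vocoder. A real vocoder uses many more bands than the four here, which is largely what makes the words intelligible.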

'Tab To Transient' In Logic

Since Logic's inception, users have bemoaned the lack of a 'Tab to Transient' feature, as found in Pro Tools, for making selections or simply navigating via the transients in audio regions. With the advent of Logic 8's marquee tool, this functionality did become available – but, judging by the number of questions on various forums, its arrival has mostly gone unnoticed. Here's how it works.

Make a marquee insertion (to use Pro Tools language) by clicking once on a region with the marquee tool (see accompanying screen). The right arrow moves the right edge of the selection to the right, while the left arrow moves the right edge of the selection to the left. Shift‑Right arrow moves the left edge of the selection to the right, and Shift‑Left arrow, unsurprisingly, moves the left edge of the selection to the left. While the marquee selection remains a single insertion point (represented by a thin white line) it can be moved to the left with the left arrow and to the right with Shift right-arrow.

An 'insertion point' created by single‑clicking on an audio region with the marquee tool. Use of the arrow keys gives 'Tab to Transient' selection.

This will take a little practice to get used to, but has one significant advantage over Pro Tools: you can move the right‑hand edge of a selection to the left. How many times in Pro Tools have I overstepped the mark and extended the selection one or two transients too far, simply wanting to retrace my steps a couple of backward tabs to correct the selection? Pro Tools boffins (you know who you are!) will maintain (correctly) that transient‑based selection is only accurate when moving from left to right (especially when, in both programs, you can't control the sensitivity of transient detection), and for that reason Pro Tools users are obliged to nudge back to before the transient at the start of the selection and try again. However, for making selections in less 'transient' material such as vocals, this facility gives Logic the edge, for now…

Key Commands: Nudging

Nudging is an example of a Logic function that is only available as a key command (as opposed to a menu option or a tool). The Key Commands window is available from the Logic Pro menu.

The Key Commands window, showing default key commands for nudging event position, and custom key commands for setting nudge values.

Type 'nudge' into the search field to show all nudge options. The default key commands for nudging are Option‑right‑arrow and Option‑left‑arrow. If this isn't what you're seeing, you can restore defaults by choosing 'Initialise all key commands' from the Options menu (in the Key Commands window). To set your own key commands, select the command, click the 'Learn by key label' button, then press the key (or key combination) you wish to assign to the command; finally, unclick the 'Learn by key label' button. In the screen shot, I have set key commands for changing nudge values, based on the default key commands for nudging.

Selecting Tools

Many of the actions described in this article rely on juggling two or three tools, combined with a variety of modifier keys. And while we're on the subject of modifier keys, I have used the Control, Option and Command terminology. If you currently call these Control, Alt and Apple, my choice may be irritating for you, but you'll be grateful if you ever enroll on an Apple Logic Pro course, as that's how they'll be referred to!

Assigning tools is an essential skill that will greatly speed up your use of the toolbox. To assign a standard (or left mouse-click) tool, left mouse-click on the appropriate tool from the left-hand drop-down tool menu at the top right of the Arrange area. Alternatively, press Escape and choose from the list, or press Escape and type the keyboard shortcut indicated by the tool of your choice. The Command-click tool is chosen in the same way as the alternative method just given, but holding down the Command modifier. You can also choose a Command‑click tool from the right-hand drop-down tool menu at the top right of the Arrange area.

It's also possible to assign a tool to the right button of a two-button mouse, by going to Logic Pro / Preferences / Global and choosing the Editing tab. Choosing 'Is assignable to tool' from the Right Mouse Button pop-up menu will cause a third tool menu (the Right-click tool menu) to appear to the right of the two existing menus.

Automated Response

Don't forget that the Pitch Correction plug-in's Response and Detune parameters can be automated. It may be that at one point in the song you need to tighten the pitch right up, in which case the response time needs to be fast, but at another the natural tuning of the performer is preferable, so the response time can be increased. You could adjust these parameters manually, using the techniques described in this article, of course, but you could, alternatively, use a slider or rotary control on your keyboard to adjust the chosen parameter 'on the fly', by assigning the parameter to the control slider or knob using Automation Quick Access, as follows:

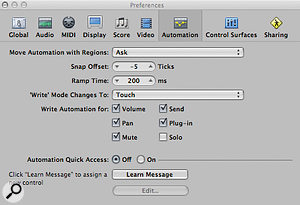

1. Choose Automation Quick Access from the Options / Track Automation menu.

2. Click 'Learn Message' in the dialogue that appears (see the accompanying screen, right).

3. Move the slider or knob that you want to assign.

4. Click 'Done'.

5. Create a lane of automation for Response time (or Detune).

6. Set the automation mode to 'Latch' or 'Touch', depending on the requirements of the situation.

7. Press the space bar to play. You can now write the automation as the song plays, using the assigned slider or knob.

The Automation Preferences dialogue that appears when you choose Options / Track Automation / Automation Quick Access.