Universal's 2001 recreation of the classic 1176 compressor.

Since Bill Putnam's sons founded UA anew, the company have become well known for software emulations of old gear, as well as for their hardware recreations of vintage classics. We find out how they go about it...

It's no secret that Universal Audio, as we know them today, were founded by Jim and Bill Putnam Jr, sons of the highly respected engineer Bill Putnam Sr, inventor of the 1176 FET compressor, and studio engineer to such greats as Frank Sinatra, Nat King Cole, Ray Charles, Duke Ellington and Ella Fitzgerald (for more on Bill's life and work, see the article on studio pioneers in SOS October 2004, or surf to: www.soundonsound.com/sos/oct04/articles/rocketscience.htm). A large part of the modern Universal Audio's business is in building authentic reproductions of vintage analogue studio processors.

When Bill Sr passed away in 1989, the brothers were clearing the family home and came across their father's design notebooks, which documented every technical problem he'd ever solved. That was the trigger for the brothers to set up Universal Audio and to recreate these designs using the original components and circuits. The company name came about because one of Bill Putnam Sr's companies back in the '50s was also called Universal Audio. Today they have an impressive complex in Santa Cruz, California.

At the heart of the Universal Audio range are the LA2A and 1176 compressor/limiters. The LA2A 'leveling amplifier', as it was originally called, uses valve circuitry and hand-wired components, and has just three controls on the front panel. The design dates back to the 1960s and uses a photocell as the gain-reduction element, illuminated by an electro-luminescent panel. The design was originally patented by Jim Lawrence, and the unit was manufactured by Teletronix in Pasadena, California, but the rights to the design passed to Bill when he bought the company that owned them in the mid-'60s. Three different versions of the LA2A were produced before it was discontinued around 1969.

The 1176 'limiting amplifier' was designed by Bill Putnam himself, and he experimented with Field Effect Transistors (FETs) as gain-control elements. In effect, they can be used as voltage-controlled resistors. After a great deal of research, Bill Putnam found a simple solution using the FET as the bottom leg in a voltage divider prior to a preamp stage. The output transformer used in this design is also critical, as it uses additional windings to provide feedback, making it an integral part of the output amplifier. This had to be accurately recreated for the modern version of the 1176. Brad Plunkett subsequently revisited the circuit in the early 1970s with a view to making it less noisy, which is how the 1176LN came about. Apparently further design improvements followed, resulting in at least 13 revisions of the 1176, though aficionados still claim that the D and E 'black-face' versions sound the most authentic.
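
As a rough illustration of the voltage-divider idea described above, the sketch below treats the FET as a variable resistor in the bottom leg of a two-resistor divider and prints the resulting attenuation. The function name and all component values are assumptions chosen for illustration; they are not taken from the 1176 schematic.

```python
import math


def divider_gain_db(r_series_ohms: float, r_fet_ohms: float) -> float:
    """Gain (in dB) of a divider whose bottom (shunt) leg is the FET resistance."""
    ratio = r_fet_ohms / (r_series_ohms + r_fet_ohms)
    return 20.0 * math.log10(ratio)


# Hypothetical values: a fixed 10k series resistor and a FET whose
# channel resistance falls as the control voltage turns it on harder.
R_SERIES = 10_000.0
for r_fet in (100_000.0, 10_000.0, 1_000.0):
    print(f"FET = {r_fet:>9.0f} ohms -> {divider_gain_db(R_SERIES, r_fet):6.2f} dB")
```

The lower the FET's resistance, the more signal is shunted to ground, so driving the FET from the signal level gives gain reduction with very few parts.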

Joe Bryan in Universal Audio's travelling studio/demonstration bus.

Mighty Joe Bryan

After putting the old analogue designs back into production, the brothers Putnam turned their attention to creating equally accurate-sounding digital replicas of the vintage gear in the form of plug-ins that run on their UAD1 DSP card. I was interested to know how a company with such a solid background in analogue, high-end signal processing got into the DSP card, software plug-in market, and the man to provide those answers was Joe Bryan. Prior to joining Universal Audio, Joe worked with Ensoniq, and then as part of the Korg R&D group working on the Wavestation and OASYS projects. Joe claims that he was electrocuted under his mum's piano at the age of four and from then on, he knew he had to work with electronics!

According to Joe, one of the challenges of the Korg OASYS project was to get sufficient DSP power to make it respond and play like a musical instrument, and not like a computer. He clearly has a lot of respect for what was achieved back then, even though the only part of OASYS that came to market was a DSP card.

"For particular reasons, the OASYS keyboard was never realised — it never shipped, so I left and went to join a startup company in Silicon Valley that was doing very high-performance DSP targeted at desktop computers. I reasoned that if this worked, then there would be high-performance DSP available on everybody's desktop, but the company went out of business and the project was abandoned. So I picked up the technology and brought along some of the engineers I'd been working with back in 1998, and approached several different companies — and got some interesting responses! I can't mention names, but some formerly high-profile plug-in companies thought that what we were proposing was impossible.

"We had been working with Jonathan Able and Bill Putnam Jr for years, so we approached them at Universal Audio and they recognised immediately the potential of teaming up high-performance DSP with quality algorithms of the type that the Kind Of Loud guys were doing at the time. That was the start of the UAD1. The concept was to use as much DSP as we could possibly get, then focus on the quality of the algorithms."

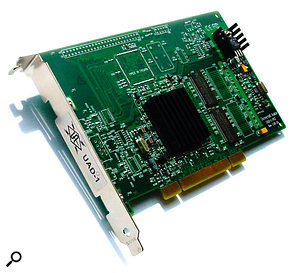

Of course, native platforms are getting more powerful every few months. What can the UAD1 offer that isn't already available in the native plug-in domain?

The UAD1 DSP card.

"One difference is that the performance available from our DSP is typically an order of magnitude higher than for the DSPs commonly used in other products. It is a proprietary DSP that was designed for a totally different purpose — graphics — and the company spent around $60,000,000 developing it. That level of investment is not something that can be supported by the audio industry — technology is rarely a limiting factor, just the economics of the application of the technology. It's very different from the Motorola 56K-series DSPs, because ours is floating-point and much faster. We wanted to achieve the fastest performance for the minimum cost, which we did, and then we used that as a basis for developing the highest-quality plug-ins without worrying about running out of power."

I imagine the greatest challenge was to measure the characteristics of those classic pieces of hardware and then to create algorithms to reproduce their sound with sufficient accuracy. Also, there must be some artifacts that are crucial to the sound, while others may be difficult to model and have no significant effect on the sound. How do you know which parameters are important?

"It was a very big deal, though removing the DSP performance constraints helped us considerably. As you start to approach the ideal scenario, the performance requirements go shooting up. Fortunately we have Dr Dave Berners and Dr Jonathan Able, who are world-class algorithm designers. Focusing on the parameters that had to be modelled was a big challenge, as there's always a corner of the performance space that can't be reached by the model. For example, if you're going to run a +32dB level into an 1176 and turn everything up, the plug-in isn't going to replicate that! That would saturate all the amps and transformers and be almost impossible to model — but then nobody would use an 1176 like that in practice. Also, do you model line noise and hiss? Is that desirable? We decided no, because there's no advantage of having that."

I guess that you do have to look at dynamic distortion mechanisms and also the precise attack and release curves, as well as the quirky way ratio changes with level.

"Those are very important attributes, and are modelled as closely as we can possibly get them. Basically it is within the tolerance of unit-to-unit variation. The 1176 is a very difficult process to model because of its feedback structure, and the attack time is actually less than one sample. In the mathematical realm where everything is quantised to single samples, you have to play very complicated mathematical games to replicate that. It doesn't actually require up-sampling because of the shape of the knee, and the DSP guys are very good with these things — they have all these tricks they can play using a predictive approach that basically calculates all the possible paths and then selects one as the starting point for the next sample. The LA2A even has a photon value in there that goes between the L panel and the cadmium cell."

The LA2A plug-in for the UAD1 card.

That sounds almost like quantum computing!

"The multi-stage release is actually a quantum process, and that's what they model. Different products have different modelling requirements — the Pultec EQ is a very clean passive EQ with make-up gain and plenty of headroom, so it is a fairly linear process. What is challenging is that because it is a passive EQ, in order to match the amplitude and phase responses, you have to up-sample. There's a few other plug-ins that up-sample too, so we look at each case individually to see what that algorithm needs. By contrast, some of the algorithms, such as the Fairchild, turned out to be relatively easy to do after the experience we'd gained doing the LA2A and the 1176."

Audio Unit Compatibility

The introduction of Apple's Logic v7 with its Audio Units checking utility tripped up a lot of plug-in designers, but the UA plug-ins now seem fully compatible. I wondered if this caused Joe and the UA team any problems.

"We have been working with Apple very closely and they've made changes to their AU engine based on feedback we've given them, though we still had to modify our existing code to work with Logic 7. Initially they were somewhat hands-off with us, because they thought native plug-ins were the right way to go, but once they heard what we were doing with our algorithms, they realised that they would benefit from the added quality we could bring. However there are some remaining issues related to the way audio data is buffered in Logic. That causes problems if you run a UAD1 plug-in in a live track at the same time as having UAD1 plug-ins in playback tracks. You should really only use one or the other — not both together. In fact, I had a conversation about this with the former Emagic guys yesterday — they've worked with us in the past, and I'm hopeful that this will be resolved in the future."

Of Plates & Latency

You've just announced a vintage plate reverb plug-in, Plate 140, which relies on modelling, despite the success of convolution-based reverbs. What are the advantages of doing it your way?

"There are two or three benefits. We did sample the reverbs and measure the impulses as a base-line reference, then after we had come up with our modelling algorithms, we took them back into the studio and made changes to get the sound as close as possible to the original plates. A plate is essentially linear, though there are some non-linear aspects to it, but I think the advantage of modelling is that the processing load on the DSP is a fraction of what it is with a convolution reverb. Also, you can adjust the parameters of a model, whereas an impulse response is essentially fixed. You can't adjust the damping on an impulse reverb; you have to switch to a different impulse. Also the group delay of an impulse reverb is huge compared to our modelling algorithm, so there's a big benefit to the user in doing it our way."

The new Plate 140 plate reverb emulation for the UAD1.

A plate reverb has a very fast build-up of complexity, whereas a typical digital reverb algorithm starts with an FIR — in effect, a multi-tap delay line — to create the early reflections, then recirculating comb and all-pass filters are used to build the late decay. How do you achieve an adequately fast build-up of density with modelling?

"That was probably one of the biggest challenges — getting the basic tonality of the plate was more straightforward. Getting the late field smoothness was also relatively straightforward, but achieving that initial build-up, as you say, was the biggest challenge, and we had to play around with several different reverb architectures to realise that. The filtering also has a tremendous impact on the sound, and we did a combination of listening and analytical optimisation to get the most out of the DSP we had available. With an impulse-convolution approach, you have to process every sample, but you don't actually need every sample, just the samples that matter. So we used an analytical approach to optimise our algorithms to reduce the number of taps and feedback networks that were required. That meant we could allocate the remaining DSP to the more difficult parts of the task. For example, within a plate, high frequencies travel much faster than low frequencies, so we could do the early build-up just on the higher frequencies using an analytical approach. Jonathan Able is just brilliant on reverbs and also, Will Shanks spent days listening to all the different plates so we could get the right voicings for various applications. As plates are pretty linear within a broad range, any transducer-based non-linearities, and other non-linearities, can be emulated using some of the standard processes that we've added on top of the reverb."
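
For reference, the conventional digital reverb structure mentioned in the question (a multi-tap FIR for early reflections, followed by recirculating comb filters and all-pass filters for the late decay) can be sketched in a few lines. This is a generic textbook-style arrangement, not UA's Plate 140 algorithm; every delay length and gain below is an illustrative assumption.

```python
def multitap_fir(x, taps):
    """Early reflections: a sum of delayed, scaled copies of the input."""
    y = [0.0] * len(x)
    for delay, gain in taps:
        for n in range(delay, len(x)):
            y[n] += gain * x[n - delay]
    return y


def feedback_comb(x, delay, feedback):
    """Recirculating comb: y[n] = x[n] + feedback * y[n - delay]."""
    y = [0.0] * len(x)
    for n in range(len(x)):
        y[n] = x[n] + (feedback * y[n - delay] if n >= delay else 0.0)
    return y


def allpass(x, delay, gain):
    """All-pass section: y[n] = -g*x[n] + x[n - D] + g*y[n - D]."""
    y = [0.0] * len(x)
    for n in range(len(x)):
        x_delayed = x[n - delay] if n >= delay else 0.0
        y_delayed = y[n - delay] if n >= delay else 0.0
        y[n] = -gain * x[n] + x_delayed + gain * y_delayed
    return y


def simple_reverb(x):
    """FIR early reflections -> parallel combs -> series all-passes."""
    early = multitap_fir(x, [(0, 1.0), (113, 0.6), (229, 0.4), (347, 0.3)])
    combs = [feedback_comb(early, d, 0.8) for d in (1116, 1188, 1277, 1356)]
    late = [sum(values) / len(combs) for values in zip(*combs)]
    for d in (225, 556):
        late = allpass(late, d, 0.5)
    return late


# Feed in a single impulse and count non-zero output samples in each half
# of the response as a crude measure of how echo density builds over time.
impulse = [1.0] + [0.0] * 3999
ir = simple_reverb(impulse)
early_count = sum(1 for v in ir[:2000] if v != 0.0)
late_count = sum(1 for v in ir[2000:] if v != 0.0)
print(early_count, late_count)
```

In a structure like this the echoes start out sparse and only thicken as they recirculate, which is the gradual build-up the question contrasts with the near-instant density of a real plate.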

DSP-powered plug-ins generally have latency issues which double whatever latency you would normally have with the application running native plug-ins. Is this also true of the UAD1?

"Yes, that's the way it works. As we look at it, there are three primary applications for DSP within the digital audio environment — tracking, where you're monitoring in real time, mixing and mastering. We have focused initially on mixing, and we're looking more closely at mastering. Frankly, tracking is something that personally I don't see being low enough latency if you're really serious about it, even with native plug-ins. If you want to process as you track, use an analogue processor. Even if you just need to hear some vocal EQ, compression and reverb while tracking, those can be supplied using outboard hardware relatively easily. I like using our Nigel guitar processor, but for me, nothing really beats an amp."

Interested Third Parties

Unlike the competing TC Powercore system, UA have not opened up the UAD1 platform to third-party plug-in developers. I asked Joe if that was a deliberate strategy, and if so, why.

"Now it is, but initially we were planning to announce some third-party relationships. It's not something we're ruling out entirely, but at the moment we don't see the need to open the platform up, as it seems to serve our customers best to focus on giving them everything we can and not diverting our development resources towards third-party support. Also, from a piracy point of view, if there's a native plug-in available now, it makes no sense to port it to our platform, as there's a high likelihood it's already been cracked. That means the only plug-ins that would be worth considering are ones that aren't out yet or ones that are only available on other DSP platforms that have robust protection. It would also mean converting the code for our floating-point processor, though it's easier to go from fixed-point to floating-point than the other way around."

Against Piracy

I suppose one of the attractions of the DSP platform is that you can protect your software from piracy more effectively.

"When I first designed the card, we hadn't figured out how we were going to do the copy protection, but we knew that we would be doing it, so we added the hardware that gave us the capabilities. Then, when we released the Cambridge EQ plug-in, which was the first plug-in we sold separately, we turned on the protection. Software piracy is a function of how easy it is to steal versus how easy it is to buy. We make it as easy to buy and as hard to steal as possible. Some of the copy-protection schemes I've had to deal with are so unwieldy that it's easier to use the crack! Our authorisation has nothing to do with the computer — it's keyed to the UAD1 card, so if you want to load the software onto multiple machines and then move the hardware around, you can do that. In fact, I do that myself — I have a Magma chassis that I move between my desktop machines and my laptop.

"It also makes things a lot easier when you come to change computers. It's a challenge system where you have to register only once, then pay using a credit card or whatever. The other important thing to bear in mind is that if a software company goes out of business and you're using a system where you have to reauthorise every time you change computers or swap out a hard drive, you're stuck with a plug-in that no longer works. Our authorisation is a simple text file that you can copy from computer to computer, or you could even write it down! We don't want copy protection to penalise the existing customer."

Talking of portability, have you considered putting the UAD1 in an external box connected by Firewire, for use with laptops?

"Laptops and compact desktop computers are the fastest-growing parts of the marketplace, so this is important for us. We are a small company, and so far we've been focusing on producing the plug-ins, but we've also been looking at Firewire for a long time. I'm on the AES Firewire committee and have been watching all the protocols, and frankly, it's not really 'soup' yet! All the Firewire solutions have problems, whether it is stability, latency or capacity — which is a limiting factor, particularly at high sample rates. It's just getting to the point where it's do-able. The whole Firewire thing is, shall we say, under active consideration."

New Vintages

Your main focus so far has been on recreating vintage equipment, and the new plate plug-in follows in that direction, but what other vintage areas are left for you to conquer? My own view is that nobody has got tape echoes and tape-flanging emulations quite right yet, but does this interest you?

"Absolutely. The focus we've had up to now has been on more linear processes such as EQ. Compressors are non-linear, but still lend themselves to being physically modelled quite easily. Things like saturation distortion are also fairly straightforward, but taking it to the next level, where you have distortion that is time-dependent, such as in transformers, it gets more complicated.

Testing a dual-channel 2-1176 compressor/limiter at Universal Audio's California factory.

"Going to tape modelling is also a big step, because nobody really figured out how tape worked. The best models that were available were two dimensional, but it's actually a three-dimensional process. To do that correctly requires a tremendous amount of effort, and it is something we're working on, but we don't want to do a compromise solution."

One thing that nobody seems to have modelled is the way the tape recording process changes phase — and few put the record equalisation before the saturation process. If you put a square wave into a tape machine, you don't get anything like a square wave out of it, so perhaps that needs to be modelled first before the time processes, wow and flutter and feedback filtering are applied? What's more, the saturation elements need to be in the feedback loop so the sound deteriorates properly as it is recirculated.

"And many people do take the simple approach of just recirculating via a filter, perhaps adding some pitch modulation, but I think it's getting there now, and more accurate modelling will eventually happen. The other thing that's appealing to us is going into non-linear equalisers that colour the sound. This coloration comes from several different effects. Obviously you have amplitude and phase characteristics, but adding the right type of saturation is the next dimension to get into. One of the things we're trying to do first is cover all the bases so that the card we're offering gives the user a broad palette of processing they can use immediately, and then provide extra options within those areas. Frankly, a lot of the things we build is stuff that we want to have for ourselves."
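
The difference between the two approaches discussed here, recirculating through a filter alone versus also placing a saturation stage inside the feedback loop, can be shown with a minimal feedback-delay sketch. The `tanh` soft clipper and the one-pole low-pass are stand-ins chosen for illustration and are assumptions, not a model of any real tape machine.

```python
import math


def echo(x, delay, feedback, saturate_in_loop, lp_coeff=0.5):
    """Feedback delay line. On every trip round the loop the recirculated
    signal passes through a one-pole low-pass and, optionally, a tanh
    soft clipper, so each repeat is altered again."""
    buf = [0.0] * delay          # circular delay buffer
    lp_state = 0.0
    out = []
    for n, sample in enumerate(x):
        delayed = buf[n % delay]                     # written `delay` samples ago
        lp_state += lp_coeff * (delayed - lp_state)  # one-pole low-pass
        fb = math.tanh(lp_state) if saturate_in_loop else lp_state
        buf[n % delay] = sample + feedback * fb      # recirculate
        out.append(sample + delayed)                 # dry + delayed
    return out


# A single loud click, repeated by a 100-sample delay with 0.9 feedback.
# lp_coeff=1.0 disables the filter so only the saturation differs.
click = [3.0] + [0.0] * 999
linear = echo(click, 100, 0.9, saturate_in_loop=False, lp_coeff=1.0)
saturated = echo(click, 100, 0.9, saturate_in_loop=True, lp_coeff=1.0)
for n in (100, 200, 300):
    print(n, round(linear[n], 4), round(saturated[n], 4))
```

With the clipper in the loop, every repeat is compressed and distorted a little more than the last, rather than simply getting quieter and duller.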

So, is there any area that you have a strong personal interest in?

"Yeah, my background is in synthesis, and I still think the next generation of synthesizer that performs like a real instrument has yet to be built. I've built acoustic instruments, I play acoustic instruments; I know what they sound like. Synthesizers are convenient and they can make some interesting sounds, but even modelling a subtractive synth properly is a challenge. Going to the next step, where physical modelling is happening effectively, still hasn't been done properly. The last synthesis project I was involved in was the Korg OASYS, which was a great-sounding product, and I think that was as close as anybody has come to it yet."

From what I can see, part of the problem is that the market tends to be customer driven, yet customers don't often know what is possible, and most don't know that they want something until they hear it.

"It's a big problem, and companies have to have a lot of balls and put a lot of money into what they believe in. Mr Kakehashi, the head of Roland, is one of my heroes, and one of the things he's done — and I'd like to get to the level where we can emulate that — is that he has products that make a lot of money in the mass market which he uses to fund research, and he knows they may never make a profit. They're fun to do, they push the envelope, and the benefits find their way into other products later. The guitar-synth stuff he's done took a lot of investment, and probably only benefits a handful of people, but it would be wonderful to be able to do something like that. We're a growing company, so hopefully we'll get to the point where we can have a fun projects group."

Analogue Parts In The Digital Age

Though Joe lives and breathes DSP circuitry and code, he is also very passionate about great analogue equipment. Universal Audio are still best known for building faithful replicas of the LA2A and 1176 compressors, and I wanted to know how they manage to source the original components, especially things like the photocells and electro-luminescent light sources used in the LA2A. Obviously, this isn't something they have to worry about when designing algorithms, as digital bits are not known for becoming obsolete!

"It's a big challenge, because the cadmium photo-resistors and the electro-luminescent panel are both absolutely critical to the design. We went to the manufacturers after measuring the exact performance and response time of the originals, and they asked us how many we wanted. When we told them how few it was, they almost fell about laughing — it just wouldn't have been cost-effective to make them to that spec in that quantity! However, after we did the algorithms for the LA2A, we learned for the first time how they actually worked and what gave them their characteristic sound, and once we'd done the physical modelling, we were able to go back to the manufacturer and tell them exactly what we needed. This was a more realistic spec that they could meet in our quantities, and the consistency was far better too. Now it's not a big voodoo thing, where nobody dare change any part of the process for fear of ruining the batch.

"The electro-luminescent panels are also problematic, and we've just gone through the process of qualifying a new vendor to meet the demand that we have. Again, being able to draw on the DSP technology to find out what parameters really matter has helped enormously."

To The Future

Where else do you go with the hardware now that you've replicated the vintage analogue products that you wanted to do?

"Mic preamps are like shoes — you can never have enough of them. Selling mic preamps is like selling shoes to people with foot fetishes! The same is true of microphones, and there's no mic or preamp that is perfect for all applications. You have two things that interact with each other, so our new mic preamps were designed to complement the existing product line. You can either have an extremely clean and transparent preamp, or you can have one that interacts with the mic to provide more of a coloration to the sound. There are so many dimensions in the physical space of the signal itself — it's not just phase response and frequency response. One number that I hate is the specification that quotes total harmonic distortion plus noise. Frankly it's the level of distortion at each harmonic that matters, not the overall figure. In fact, some of the old tube gear was specified with a distortion of one percent, and it sounded great. It's the way the harmonics come up with a tube — it's the second, then the third, and there's pretty much nothing else. With a modern op amp, the level of the harmonics may be much less, but those harmonics go all the way up to the Nyquist frequency and beyond."
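
Joe's point that a single distortion figure hides the harmonic distribution can be illustrated numerically. The sketch below builds two synthetic test signals that share the same total harmonic distortion of one percent but spread it very differently across harmonics. The harmonic levels are invented for illustration and are not measurements of any real tube or op-amp circuit; noise is left out, so this shows THD rather than THD+N.

```python
import math


def make_signal(harmonic_levels, n=1024, cycles=8):
    """Sum of sine harmonics; harmonic_levels[0] is the fundamental."""
    return [sum(level * math.sin(2 * math.pi * (h + 1) * cycles * i / n)
                for h, level in enumerate(harmonic_levels))
            for i in range(n)]


def harmonic_amplitudes(signal, cycles, max_harmonic):
    """Amplitude of each harmonic, found with a single-bin DFT per harmonic.
    Assumes the signal holds exactly `cycles` periods of the fundamental."""
    n = len(signal)
    amps = []
    for h in range(1, max_harmonic + 1):
        k = h * cycles
        re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(signal))
        im = sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(signal))
        amps.append(2.0 * math.hypot(re, im) / n)
    return amps


def thd(amps):
    """Root-sum-square of harmonics 2 and up, relative to the fundamental."""
    return math.sqrt(sum(a * a for a in amps[1:])) / amps[0]


# Hypothetical spectra: all distortion in the 2nd harmonic, versus the same
# total spread evenly over harmonics 2 to 10.
low_order = [1.0, 0.01]
spread_out = [1.0] + [0.01 / 3.0] * 9

for name, levels in (("low-order", low_order), ("spread-out", spread_out)):
    amps = harmonic_amplitudes(make_signal(levels), cycles=8, max_harmonic=10)
    print(name, f"THD = {100 * thd(amps):.3f}%",
          [round(100 * a, 3) for a in amps[1:]])
```

Both signals produce the same headline figure, yet only the per-harmonic list reveals that one contains nothing above the second harmonic while the other extends to the tenth.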

An 1176 (below) and a 6176 channel strip (above) undergoing an extended 'soak-test' at the UA factory. Several LA2As and a 2-1176 can also be seen in the background.

And the more feedback you use in an op-amp circuit to keep the steady-state distortion low, the more dynamic distortion you get under real-world signal conditions. The performance might look great on a test sine wave, but still sound bad with a real signal.

"That has a huge, huge impact and in hi-fi circles, the trend is towards minimum feedback. Feedback has benefits in lowering the output impedance and lowering the distortion, but an amplifier does not have infinite bandwidth, and so any corrective signal is necessarily going to be wrong to some extent. The circuit may measure absolutely perfectly with a sine wave, but a sine wave is the laziest signal an amplifier can handle. You put a complex signal in there and everything goes to hell, because of the temporal relationship of the harmonics within the signal, the crest factor and the phase response — all these things add up to affect the sound, and there's a lot of subjective and anecdotal evidence to point at what matters, but we don't really know exactly what it is for sure.

"There are two approaches, the first being the analytical approach where you say that if the numbers are right, then it must sound good. Then there's the subjective approach, where you start from a basic functioning circuit and then modify it until it sounds good. It's like tuning an instrument. We tune all our stuff in our studio, but then there's a difference between listening to things in isolation and listening to them in a mix. If it's a microphone preamp, we try to select the applications that we are going to be using it for, pick the mics, run them into the unit and then see how they sound. We try to get as broad a palette of applications as we can where it sounds good in the mix. Rich Williams, who designed the 4110 and 8110 units, had the designs for the microphone preamps in the studio for months, and he had trim pots on his prototypes that he'd tune as he mixed a session. There's no other way to do it. We do all the analytical stuff as well, but we have to get the sound right. Ultimately, the sound is all that matters."