Pitch correction of vocal parts is now commonplace in all but a few genres. Find out how to craft a professional-sounding result.

Over the last decade or so, vocal tuning has become increasingly accepted as part of the music post-production process. In a similar way to other performance 'fixing' processes, such as drum quantisation, it's now used so much, on almost all commercial releases, that engineers and the general public alike have become accustomed to hearing it. I'll leave the rights and wrongs of pitch-processing to others to debate, but I would say that we engineers and producers should remember that tuning plug-ins are intended to help us achieve the best sound we possibly can — and unless we're trying to create a deliberate effect (a Chris Brown-style robo-voice, say, or a Cher-like warble), we really don't want the tuning process itself to be noticeable in the final mix.

Celemony's Melodyne pitch-processing software is one of that select bunch of tools that we can genuinely call revolutionary. When it first burst on to the scene, it seemed a miracle that you could treat recorded audio in much the same way as you could MIDI notes. Initially a stand-alone application, it soon became available as a plug-in. Later still (2009) Melodyne Editor wowed people with the ability to manipulate the pitch of individual notes within a single polyphonic audio file.

Having initially chosen Melodyne simply because it was cheaper than Antares Auto-Tune, I soon noticed that there were very few audible artifacts in the tuned audio. I found it intuitive to use, but while instant results were possible, it took time and experimentation to get the best results. In this tutorial, I'll share some tips and techniques that should lead you rather more quickly to pitch-perfect productions.

Melodyne remains the only game in town if you want to work with polyphonic audio, but for vocal tuning that's usually unnecessary. The two other leading pitch processors are Auto-Tune and Cubase/Nuendo's built-in VariAudio, and although the tools are slightly different, much of my more general advice applies equally to users of those tools.

Pitch Preparation

Let's face it: tuning vocals is not the most fun you can have in music production, but good preparation makes the tuning process much simpler and more speedy. Sometimes I tune vocals on my own projects, but I also do this work for other engineers and producers. In both scenarios, I tend to work in the same way, starting with the creation of a dedicated tuning project, in my DAW, that's separate from the main mixing project. This helps me to keep all the vocal-tuning work neatly together, while still allowing it to be recalled quickly if further edits are required. Another good reason for working in this way is that it avoids having half a dozen instances of the Melodyne plug-in hogging computing resources while mixing. While that might not be the limiting factor it once was, tuning needn't be a real-time process, and I'd rather leave resources available for other tasks.

You can use a stand-alone version of Melodyne, but I prefer to use the plug-in in a DAW session, so I create a new empty project (I use Logic Pro, but the principles apply to any DAW) and import the vocal tracks. I also import a rough instrumental mix of the song, which is important, as it provides the context you need to make the pitch and time adjustments to your vocals. It's quite common to be working with many layers of lead and backing vocals, and to use different tracks for different song sections, so rather than having dozens of instances of Melodyne running, I'll start with an instance on one track, perform my Melodyne processes, and print the result to a new track before moving on.

Partly this is, again, a way of managing computer resources, but it's also an insurance policy: in my early vocal-tuning career I had too many experiences of part of a vocal erratically shifting out of sync during playback, or even cutting out completely. In other words, while Melodyne's a great tool, no software is infallible, so it's good to know that the vocal line you've just laboured over is saved as audio in your project.

How to print the results will vary from DAW to DAW, but in Logic, I usually send an output from the un-tuned vocal track to a bus and create a new mono track, with the input set to the same bus. I then record the result on the new track as it's playing through. It's possible to do a bounce offline, of course, but you really want to listen through and check the result, so this real-time approach works well.

That's a very basic overview. I'll get into more detail later, but first let's consider how best to set up Melodyne so that using it is intuitive...

User Preferences

When you first open an instance of the Melodyne plug-in, it's a good idea to make the plug-in window as large as possible; just click and drag out its bottom right corner. Having a dual-screen setup is extremely useful, but it's not essential. Indeed, I can work reasonably quickly and efficiently even on a 17-inch laptop screen.

Before you dive in and start tuning, spend some time familiarising yourself with the user-preference options. Everyone will have their own idea on how best to set things up (that's why they're called user preferences) but I'll explain how I like to tweak things and why.

Clicking on the top-left corner of the plug-in window reveals the preference settings for the Time and Pitch Grid, as well as general View options. A new Melodyne instance always defaults to 'Semitone Snap' in the Pitch Grid preferences, and I prefer to set this to 'No Snap'. Why? Because I want to be able to move the notes freely on the grid. Vocal tuning is not an exact science, but something that's best finessed while using your ears; sometimes moving a note ±15 cents sharp or flat of the 'perfect' pitch can make all the difference in the world.
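To put a figure such as 15 cents into perspective, it helps to remember that a cent is one hundredth of an equal-tempered semitone, so 1200 cents make an octave. The short Python sketch below converts between cents and frequency; the 440Hz reference and the function names are assumptions made purely for illustration, and none of this reflects how Melodyne itself is implemented.

```python
import math

A4_HZ = 440.0  # assumed reference pitch, for illustration only


def cents_between(freq_hz: float, target_hz: float) -> float:
    """Return how far freq_hz sits from target_hz in cents (positive = sharp)."""
    return 1200.0 * math.log2(freq_hz / target_hz)


def shift_by_cents(freq_hz: float, cents: float) -> float:
    """Return the frequency reached by moving freq_hz by the given number of cents."""
    return freq_hz * 2.0 ** (cents / 1200.0)


sharp_a4 = shift_by_cents(A4_HZ, 15.0)
print(f"A4 pushed 15 cents sharp: {sharp_a4:.2f} Hz")
print(f"Offset recovered: {cents_between(sharp_a4, A4_HZ):.1f} cents")
```

At this reference, a 15-cent nudge moves the note by less than 4Hz, which is why such adjustments are best judged by ear rather than by eye.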

Similarly, the Time Grid preferences allow you to determine how the notes will snap to the grid. This is something I often have enabled, but with the 'Dynamic' option ticked, so that the snap resolution is finer the more I zoom in. This is very helpful when working on material that's been recorded to a click or programmed on a grid, with a fixed tempo, but should you need to break free from the constraints of the grid, holding down the Alt key while adjusting the timing of a note bypasses the snap function, and allows you to make finer adjustments.

The 'View' menu presents lots of options for what information is to be displayed with the analysed audio. The only settings I generally have ticked are 'Show Pitch Curves' and 'Show Note Separations'. Pitch Curves are the thin lines that join the notes to show the variation in pitch, and Note Separations are the vertical lines between each note, which make it easy to see how and where Melodyne has decided to separate the notes in a performance. This is particularly useful when splitting a single note into smaller pieces, as we'll discover a little later.

Try This At Home!

As far as I'm concerned, that's it for the preparation stage, so we're ready to look at a practical example. You can download and use audio example 1 if you want to work through the process with me, and my instructions will assume that you are doing this.

First, loop a region, then click Melodyne's 'Transfer' button. If you've done it right, this button will turn orange. Now play the looped section. Once Melodyne has analysed the audio, it will appear as orange Blobs (Melodyne's terminology, not mine!). Now, when you press play on your DAW, you're hearing the audio that's captured inside Melodyne, rather than the audio whose waveform is displayed on the DAW track on which the plug-in is inserted — so any edits you now make on that DAW track will not affect what's played back (unless you bypass Melodyne).

Now magnify the captured area using the horizontal and vertical sliders, so that the Blobs are as large as possible, while still seeing the loop you've set. The slider in the bottom-right corner of the plug-in controls how large the Blobs will appear on the grid.

There are a number of different sliders for zooming in on the desired section of audio in Melodyne. The bottom right one adjusts the size of the Blobs themselves.

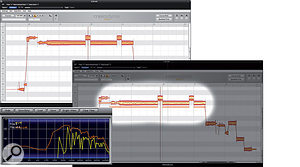

Before you do anything else, listen back to the passage and look at how Melodyne has analysed the vocal. In audio example 1, the vocalist sings, "Comfort, it comes to me...”. Mostly, he's singing legato and staccato notes, which Melodyne has tracked accurately, but notice that the singer bends the note up at the start of the words "Comfort” and "to”. Melodyne has interpreted these as single notes, so we'll need to separate them before further processing, to prevent these nuances of performance from being lost or minimised. Use the Note Separation tool to split the note where the vocalist hits the B note in "Comfort” and the C# on "to”.

Melodyne is generally very good at tracking pitch, but some nuances are usually missed. In these two screens, you can see where two notes were read as one, and how the author has used the Note Separation tool to split them at the appropriate point.

If the vocals have been well sung and require very little tuning, as in this example, Melodyne's Correct Pitch function provides a really quick way to get everything sitting in the right place. Highlighting the captured vocal and clicking the Correct Pitch button opens a window with Correct Pitch Centre and Correct Pitch Drift slider controls. As long as any note splits such as those we've just done have been performed, pushing both sliders up to 100 percent usually makes for a decent starting point. From there, only a few minor tweaks will usually be needed to get the vocal sounding really sweet.

As long as you've done all the necessary note splits, it can be good to set the pitch-centre and pitch-drift correction settings to 100 percent. For one thing, this will show you clearly if Melodyne is trying to force a Blob to the wrong note!

I found that the Correct Pitch function in my example shifted the word "to” down to C natural, when it should have been a C#. Correcting this by clicking and dragging makes the line sound much better, and A/B comparison with the original untuned vocal should reveal a subtle improvement, as demonstrated in Audio Examples 2 and 3. However, to achieve the best result, a little further attention is still required.
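The wrong-note behaviour described above is easy to picture with a toy model of a pitch-centre slider. The Python sketch below is only an assumption about the general idea (moving a note's centre some percentage of the way towards the nearest semitone); the function name and the use of fractional MIDI note numbers are hypothetical, and this is not Celemony's actual algorithm.

```python
import math


def correct_pitch_centre(centre: float, amount_pct: float) -> float:
    """Move a note's centre towards the nearest semitone.

    centre is a fractional MIDI note number (60.0 = middle C, 61.0 = C#).
    amount_pct is the slider position, from 0 to 100.
    """
    nearest_semitone = math.floor(centre + 0.5)
    return centre + (amount_pct / 100.0) * (nearest_semitone - centre)


# A C# sung 20 cents flat is pulled up to C#, as intended.
print(correct_pitch_centre(60.80, 100.0))
# A C# sung 55 cents flat is closer to C natural, so it is pulled down instead.
print(correct_pitch_centre(60.45, 100.0))
# At 50 percent, the first note moves only half of the way to C#.
print(correct_pitch_centre(60.80, 50.0))
```

In this simplified picture, any note sung more than half a semitone away from its intended pitch will be drawn to the neighbouring note, which is one reason to check every Blob after an automatic correction.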

Attending To Details

Listening more forensically to the last example, you should be able to hear that the vocalist takes a tiny amount of time to settle into the notes at the start and end of the word "Comfort”, as well as during the legato transition between "to” and "me”. If you want Melodyne to give natural-sounding results with such fine details, you need to split the notes and work on the resulting parts separately — so let me break this down and take you through how I assessed and processed each note.

• "Comfort”: In the first syllable of the word, there were two small wavers in pitch at the beginning of the note, and then a slight variation about a third of the way through. First, I split the note into four sections at the appropriate points. This made it possible to use the manual Pitch Tools to bring all four parts of the note into tune. I find the Pitch Drift tool the most useful for this job, because it irons out those little deviations, but the Pitch Transition tool (which appears when you hover the mouse over the start of any Pitch Transition line, if you have the main Pitch tool selected) is also invaluable, as it smooths out any joins created whilst splitting and moving the audio. In this instance, it was also useful for slightly accentuating the bend into the note. The final process was to slightly raise the pitch of the tail end of the syllable, so that it dropped sweetly from the B to the G#.

It's not uncommon to find considerable variation within a note. In these cases the best results are usually obtained from splitting the note into even smaller segments, and using the manual pitch-correction tools.

Now for the second syllable, "fort”. The only part of this that I felt needed addressing was the very tail, where the note drops off slightly. Using exactly the same Splitting, Drift and Transition tools, I was able to bring this nicely into line.

• "Comes”: This is delivered with a nice vibrato, but it also drops in pitch a little at the end. A touch of the Transition tool smoothed the edit, but I found that I also wanted to use the Modulation tool, just to calm the vibrato effect a tiny bit on the tail of the note. This is a powerful tool and over-use may inadvertently introduce the robo-voice effect, so proceed with caution!

• "To”: I simply nudged the initial note down to B and added a whisker of Drift with the Drift tool. I liked the way the part rose to C#, but could hear the tail of the note go slightly sharp. Using the Drift tool on the whole note would make it dip in pitch in the middle (not pleasant!), so to straighten it out unobtrusively, the note needed to be split.

• "Me”: This final word sounded fine, and just a touch of the Drift tool straightened the note out perfectly. The building vibrato at the end rounded off the whole line neatly.

With all your tweaks done, it's important to listen back to the part, comparing the tuned with the untuned original. You're checking that you've got the pitching right in the context of the track, and listening out for any artifacts that may have crept in during processing. If you've just followed this example and the tweaks are subtle, you shouldn't detect any such artifacts, but you do want to be sure! In audio examples 1 (original) and 5 (processed), you can hear that the notes have become more definite and strong, but, importantly, the character that defines the vocal part remains intact. The difference between the two examples is subtle, but it's noticeable, and such changes add up, particularly when there are a number of different parts.

Tempo Changes & Timing

Everything we've covered so far has been about finessing a part in a track with a fixed tempo and time signature, but not every track is so regimented! Trying to use Melodyne without telling it about a tempo or time-signature change will result in the playback from the plug-in slipping gradually out of sync with the rest of the track. In a track with a single, fixed tempo, the Chain button next to the tempo display will remain grey (unlit), but if Melodyne detects a tempo change, the button will slowly flash orange. Note that Melodyne hasn't acted on the tempo change: it's simply telling you that the plug-in is no longer in sync with the DAW.

Although it's easiest to use Melodyne on material that's played at a fixed tempo, it can also handle tempo changes — and will even alert you if it has lost sync with the DAW and present you with options to remedy the situation.


The next example (audio examples 6 and 7) changes at bar 69, from 150bpm in 3/4 time to 160bpm in 4/4. To get Melodyne to 'learn' the tempo change, you need to set up a loop around the bar where the tempo changes. Clicking on the Chain button opens a sub-window where you can edit the tempo preferences. Click on 'Tempo Variation', and then, leaving the sub-window open, play the looped section through. Melodyne should pick up the tempo change automatically. In the example, it displays 150-160bpm. We can now capture this vocal, safe in the knowledge that Melodyne and the DAW will remain in sync.
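A little arithmetic shows why an unrecognised tempo change matters so much. The Python sketch below works out where bars fall on the timeline for the example above and how quickly playback would drift if the new tempo were ignored. It assumes bars are numbered from 1, that the beat is a crotchet in both time signatures and that the change is instantaneous at bar 69; the function names are hypothetical.

```python
CHANGE_BAR = 69
OLD_BPM, OLD_BEATS_PER_BAR = 150.0, 3   # 3/4 at 150bpm
NEW_BPM, NEW_BEATS_PER_BAR = 160.0, 4   # 4/4 at 160bpm


def bar_start_seconds(bar: int) -> float:
    """Return the time at which a 1-based bar number starts."""
    bars_before = min(bar, CHANGE_BAR) - 1
    bars_after = max(bar - CHANGE_BAR, 0)
    return (bars_before * OLD_BEATS_PER_BAR * 60.0 / OLD_BPM
            + bars_after * NEW_BEATS_PER_BAR * 60.0 / NEW_BPM)


def drift_seconds(beats_after_change: int) -> float:
    """Return the timing error built up if the old tempo is kept after the change."""
    return beats_after_change * (60.0 / OLD_BPM - 60.0 / NEW_BPM)


print(f"Bar 69 starts at {bar_start_seconds(69):.1f} s")
print(f"Drift after 8 bars of 4/4: {drift_seconds(32) * 1000:.0f} ms")
```

Under these assumptions each beat is 25ms shorter after the change, so the error grows with every beat and is clearly audible within a bar or two.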

Now let's look at this vocal part in more detail. The line is "The voice in your ear is the voice in my head”. Listen to the untuned and tuned parts (audio examples 6 and 7). You should be able to hear that I've done quite a bit of work. I had to split the words "In your”, "ear” and "head” before I applied any processing. I accentuated the bend into the word "ear”, but then decided to straighten the word "head”, so that it stayed on the B note. Why? It just seemed to have more impact. You always need to be thinking in this way, applying your musical judgment throughout.

The next thing that I noticed was that the words "in your” and "is the voice in my” sounded a little rushed. Such aspects of a performance can be easily tweaked by using the Timing tool to slightly shift the notes. I find the Activate Grid setting a little extreme for this job; you usually lose the feeling from the vocal line if all the words start exactly on the beat. It's much more effective to use your ears to find the natural rhythm and perform manual edits. Listen again to audio examples 6 and 7. The last line, in particular, sounds better when the phrases are more evenly spaced.

The Amplitude Tool

The final thing to note about this line is the slight variation in level between some of the words. Most notably, the first word ("the”) is rather quiet, and the second time it occurs, the word "voice” is slightly over-accentuated. These level issues could have been gradually smoothed out with some suitable limiting and compression, but using the Amplitude tool I was able to raise or lower the amplitude of those words by a few decibels, and that made all the difference. Listen again to the final tuned and edited vocal (audio example 7). It already sits in the mix better than it previously did, and to me it makes sense to perform such adjustments while tuning, as you're already listening critically to the vocal part.

Although best known for its pitch processing, Melodyne includes a number of other useful vocal editing tools.
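For anyone unsure what "a few decibels" means in practice, the standard relationship between a level change in decibels and the multiplier applied to the waveform's amplitude can be sketched in Python as follows. The function names are hypothetical and the values in the loop are arbitrary examples rather than the settings used on this vocal.

```python
import math


def db_to_gain(change_db: float) -> float:
    """Convert a level change in decibels to a linear amplitude multiplier."""
    return 10.0 ** (change_db / 20.0)


def gain_to_db(gain: float) -> float:
    """Convert a linear amplitude multiplier back to decibels."""
    return 20.0 * math.log10(gain)


for change_db in (-3.0, 2.0, 6.0):
    print(f"{change_db:+.1f} dB -> amplitude x {db_to_gain(change_db):.3f}")
```

A rise of about 6dB roughly doubles the amplitude, so changes of two or three decibels on individual words are gentle corrections rather than drastic ones.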

Formant Tweaks

The Formant tool is another useful feature. In very simple terms, formants are the bands of frequencies that the vocal tract naturally emphasises, largely regardless of the pitch being sung. They help to define the timbre of a voice and play a very important part in how we identify the sound of words as they're spoken or sung. Changing the formants can enable you to alter the perception of how a vocal has been performed. Let me explain this with another example...

The vocal line in audio example 8 is "I'd love it if you loved to watch”, and it has already been tuned using the processes explained earlier in this article. It's now ready to be printed back into my project. However, if you listen to the long, drawn-out word "loved”, you can hear a lot of mid-range and high frequencies around the 700Hz-8kHz region. In fact, using a frequency analyser on this note reveals that the fundamental, at around 350Hz, is quite quiet, but that there's a lot of upper mid-range energy. It's not hard to guess that this will need to be controlled in the mix in some way, to prevent the vocal from sounding harsh.

Traditional mixing tools such as EQ and compression will help, of course, but the Formant tool can also take us in the right direction. When the tool is selected, Melodyne displays formants as thick lines. If I select the long, held note, I can drag all the formant bars down, lowering the harmonic frequencies a touch. In performance terms, this gives the impression that the note is being sung more from the diaphragm than the throat, as you can hear in audio example 9.

As you can see from the spectrum analyser screen (bottom left), the fundamental of the unprocessed vocal part is quite weak relative to the mid-range harmonics. The Formant tool (see the 'Tools Of The Trade' box, elsewhere in this article) can be used to drag down its formants (bottom right), creating an impression that the part was sung more from the diaphragm than from the throat.

Final Thoughts

As I wrote this article, I realised just how difficult it is to articulate the judgements I make and the processes I apply when tuning a vocal track. But while it may take me a few thousand words to explain the processing of only a few vocal lines, the job itself becomes quick and intuitive once you know what to look out for. The key thing that you need to keep in mind is that your aim is always to enhance the vocal take where needed, while striving to preserve the expressiveness of the vocalist's performance. It's a real balancing job, and neglecting either side of the scales will leave you with a less than perfect result. Get both right, though, and you'll be surprised at the difference subtle tuning makes to even the best vocal take. Do remember, however, that vocal tuning is a tiny part of the overall production process, and that it's only going to make a significant improvement if the vocal performance and recording are good to start with.

Finally, note that humility and diplomacy are required skills: this is absolutely not about crushing egos! Tuning is usually an operation best performed when the band aren't around. I'd much prefer a vocalist to come into the studio during mixing and think that their vocal sounds amazing, without knowing that it has been tuned!

Audio Examples

The examples I've used in this workshop are taken from the tracks 'Hold' and 'Manson', which I recently mixed for a band called Blisseyes (www.facebook.com/blisseyesband). The vocals were tracked using a Peluso P12 valve microphone, a Focusrite ISA828 preamp, Lynx Aurora converters and Pro Tools 10. Many thanks to the vocalist, Ryan, and the band for allowing me to use the tracks. You can find all the examples on the SOS web site at the address below.

Layered Vocals & Backing Vocals

When you have more than one vocal line, and particularly when you're tuning backing or other layered vocal parts, there are a few things that are worth particular consideration.

First, although it's outside the remit of this article to explore the subject in depth, remember to pay attention to the timing of your layered parts, including note and syllable onsets and lengths. Getting that right (which isn't necessarily the same thing as getting it theoretically perfect!) is as important to the feel of the track as is the tuning itself. It's possible to perform timing edits using Melodyne, of course, but I find that it's often more practical to edit multiple tracks on the DAW arrange page, as the waveforms of the different tracks provide useful visual clues.

When it comes to pitch-processing, you'll usually find that it's possible to tune backing vocals more aggressively than lead vocals, because the parts are less prominent in the mix and any artifacts will therefore be less noticeable. But there's no reason to tune the backing vocals harder than the lead part unless absolutely necessary. In other words, I find that the best way to deal with layered vocals is to treat each part individually as if it were another lead vocal: try to use the same subtlety of approach, and to retain as much of the character of the natural vocal as possible.

Sometimes, you'll find that when the individual parts are all added back together in the track, they begin to create unnatural 'phasing' sounds, particularly with longer, held notes. The vocals may also seem to move around the stereo field. The reason for this is simply that they've been tuned too harshly; it's really just a different symptom of the user error described in the preceding paragraph. The more aggressively vocals are tuned, the more they take on the characteristics of pure tones (sine waves). The natural timbre, harmonics and tiny pitch variations that give each part its individual character are diminished to the point that there's a phased, beating effect when the parts are mixed.

The Pitch Modulation sub-tool is the biggest culprit for creating these unnatural-sounding effects, so it's best to use it as sparingly as possible. If you find you still have problems, try swapping out the offending vocal for another take. With caution and subtlety, you'll find that even a double-tracked lead vocal can be perfectly tuned and layered up against the lead without creating any unnatural-sounding effects.
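The 'phased, beating' sound described above follows from simple acoustics: two steady tones at nearly the same frequency swell and fade at a rate equal to the difference between their frequencies. The Python sketch below estimates that rate for two parts held a few cents apart. It treats the voices as pure tones, which real vocals are not, and the 350Hz note and the function name are hypothetical choices for illustration.

```python
def beat_rate_hz(freq_hz: float, detune_cents: float) -> float:
    """Return the beat rate between a tone and a copy detuned by some cents."""
    detuned_hz = freq_hz * 2.0 ** (detune_cents / 1200.0)
    return abs(detuned_hz - freq_hz)


held_note_hz = 350.0  # hypothetical sustained note
for cents in (1.0, 3.0, 10.0):
    rate = beat_rate_hz(held_note_hz, cents)
    print(f"{cents:4.1f} cents apart -> {rate:.2f} beats per second")
```

When the natural pitch variation in each part has been flattened out, nothing disguises this slow, regular pulsing, which is why heavily straightened layers can sound as though they are phasing against one another.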

Global Tuning

Sometimes you may need to tune a vocal to fit a track that has not been recorded at a standard pitch. For example, the whole song may have been played slightly sharp or flat. Melodyne's Global Tuning feature can compensate for this quickly and efficiently. In fact, since the release of Version 2, Melodyne has also had the ability to continuously alter the Global Tuning, should you have a reason to do so. Selecting 'Scale Editor', then 'Selection and Master Tuning', from the top-left menu displays a Reference Pitch ruler. You can then click and drag any note on the ruler up or down to alter the global pitch. The grid changes in real time, so it's very easy to see what you're doing and to get the notes properly aligned and centred again. Once a note is clicked, a frequency ruler is also displayed, to aid fine adjustment.
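To see what a shifted reference pitch does to the whole grid, the following Python sketch computes equal-tempered note frequencies for a chosen A4 reference. The 446Hz figure is an invented example of a track played slightly sharp, and the function name and the use of MIDI note numbers (69 = A4) are assumptions for illustration only.

```python
def note_frequency(midi_note: int, reference_a4_hz: float = 440.0) -> float:
    """Return the equal-tempered frequency of a MIDI note for a given A4 reference."""
    return reference_a4_hz * 2.0 ** ((midi_note - 69) / 12.0)


sharp_reference_hz = 446.0  # hypothetical track recorded slightly sharp
for name, midi_note in (("B3", 59), ("C#4", 61), ("A4", 69)):
    standard = note_frequency(midi_note)
    shifted = note_frequency(midi_note, sharp_reference_hz)
    print(f"{name}: {standard:.2f} Hz at A=440, {shifted:.2f} Hz at A=446")
```

Every note moves by the same ratio, so a vocal tuned against the standard grid would sit consistently flat of such a track unless the reference were adjusted first.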

Workflow

Looking at a whole track of lead, double-track and backing vocals that need tuning can seem daunting. The job is best managed by splitting the song into bite-sized chunks, tuning a whole verse or chorus at a time, and working on all the parts in each section before moving on to the next. If the track doesn't have many words, perhaps I'll tackle a verse and chorus at the same time, but otherwise it's best to concentrate on small sections and get them right, rather than rushing through the process. I try to tune with the same degree of accuracy in every project, but each track and each voice is different, so my only hard and fast rule is that if I notice the tuning when listening back to the track, I've gone too far.

Tools Of The Trade

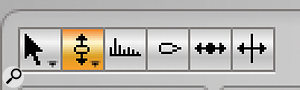

One of the first things any would-be Melodyne expert needs to do is learn the names and functionality of the various different tools that can be found in the toolbar. Here's a quick summary of their functions, running from left to right:

1. Main tool: This is a context-sensitive tool which allows you to perform a number of functions, including moving the start/end of a note, altering the note pitch and separating or stretching notes. The Alt key is used as a modifier to enable finer adjustments.

2. Pitch tool: Used at the start or middle of a note, this allows you to manipulate a note's pitch. Double-clicking will quantise the note to the nearest semitone, and moving the tool to the end of the note gives you control over the pitch transition between the selected note and the next one. Note-modulation Sub-tools are available from a drop-down list.

3. Formant tool: See the main article for a more detailed discussion of formant processing, but this tool essentially gives you control over the vocal timbre. Again, moving the cursor to the end of a note makes the tool control the transition between the formant of the selected note and that of the next one.

4. Amplitude tool: This can be used to raise or lower the level of individual Blobs: although you could alternatively use DAW automation, it can make sense to use this tool to take care of problematic level variations in the vocal recording at the same time as you're scrutinising and tweaking pitch.

5. Time tool: Used at the beginning or middle of a Blob, this will enable you to move the Blob forward or backward on the timeline, and at the end of the note it performs a time-stretch. Quantise and non-snap options are also available via double-click and the Alt key respectively.

6. Note separation tool: Clicking above or below a Blob separates a note into two, whereas clicking on the note allows you to move or delete separations.