Our ears are the most important tool we have, but they’re surprisingly unreliable. We explain how to interpret what they’re telling you.

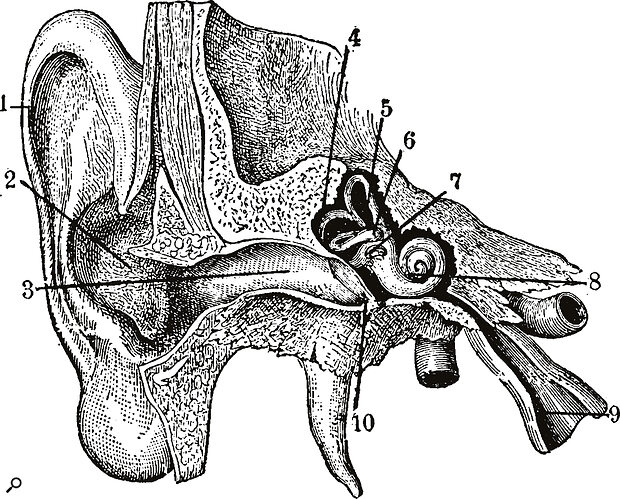

Use your ears. That’s the advice drummed into us since day one of our musical lives. But what if your ears aren’t always to be trusted? The human hearing system is the most powerful machine in each and every one of our studios. It’s a microphone with the ability to selectively choose what it listens to, with in‑built compressors and dynamic EQs to protect it from damaging noise, and a system of analogue‑to‑digital conversion that is capable of translating the air pressure from a farting speaker into Bach or Beethoven.

Every sound you hear is delivered by a miracle of nature, but like any piece of technology, the ear has its limitations. Room acoustics, tiredness, headphone EQ and stress are some of the factors conspiring against our hearing. When the brain is not able to understand the signals it is receiving, or is distracted by other sensory information, it can fill in the gaps with auditory hallucinations. For a classic example, just recall the last time you patted yourself on the back for your clever use of a compressor before realising the bypass button was still on.

If you’ve ever eagerly opened a laptop to listen to the fruits of last night’s recording work only to be greeted with a sonic mess, you’ve experienced our hearing’s tendency to fall off the wagon when tired. The wearier our ears become, the more likely we are to allow a mix to mutate out of shape. Rides or hi‑hats creep up in volume way past where they should sit; vocals that seemed appropriately loud turn out, in the cold light of day, to be loitering 5dB below where they need to be; effects chains containing a traffic jam of plug‑ins turn an initially perfectly good sound into a fuzzy mess. While the advice of just using your ears has its merits, the reality is we need to be mindful of our auditory system’s strengths and weaknesses and know when to use the right tools to support it.

Where Our Hearing Fails

From birth we develop a belief that our experience of the world reflects exactly what is happening. We later learn our experience is by no means the full picture but instead the interpretation that our sensory systems create for us. These interpretations are not absolute and are tailored to fit the parameters of what evolution has decided we need to be told. For example, the importance of the human voice has had a profound effect on how our hearing has developed. Our ears prioritise the frequencies where the human voice resides.

To gain a better understanding, I spoke with Grammy Award‑winning mix engineer and producer Susan Rogers, who first rose to prominence as an engineer for Prince in the 1980s and now works as a Professor of Music Production and Engineering at Berklee. “Evolution made sure that we paid extra close attention to sounds that have survival connotations, so the human hearing mechanism evolved to be most sensitive in that region between 1 and 5 kHz, and in particular speech consonants,” she explains. “The difference between ‘bat’, ‘cat’, ‘sat’, ‘hat’ and ‘rat’ can have serious implications out there in the world. If you say to someone ‘There are hats in that cave,’ it means something different to ‘There are rats in that cave.’”

While nature decided to focus our hearing on human voices, the sub frequencies of a kick drum were clearly not on its priority list. For proof, just look at the spectral analysis of any song with a strong kick drum, where the amplitude curve bumps highest around the 60Hz region and descends in volume as it approaches the midrange. In dance music, especially, in order to feel the bass of a kick drum (and not just hear its high‑end snap), we have to turn it up much louder than everything else. Even then, we layer it with clicks and high‑frequency material to make it even more audible. We simply can’t hear low end as accurately as we can sounds that occur in the midrange. Instead, we rely on our sense of touch to feel the pressure of the bass and complement our hearing, but there are also times when using VU meters or spectral analysers can help us make accurate judgements about low end.

The human voice, on the other hand, has its own problems. A classic rookie mixing mistake in dance music is assuming the level of a vocal is appropriately loud, only to play the track in a club and discover to your horror it’s not loud enough to be properly heard. We tend not to notice vocal volume being lower than it should be because our ears are better at picking out vocals, particularly during lengthy studio sessions, when our ability to accurately hear other frequencies and differentiate volume changes begins to wane. A quick solution to this is to play the track at a very quiet level and raise the level of the vocal until it can be clearly heard.

Tiredness poses one of the greatest threats to our ability to hear accurately. As our energy wanes our brains pull back on the amount of resources devoted to listening. “It’s just like when starting out on a run and the body and the muscles don’t know what you’re doing,” says Susan. “As far as they know, you might be just running to the end of the block but after you run a little bit, the body figures out you’re not going to be able to keep this up unless it keeps some energy in reserve. So at first you expend a lot of energy but right away your body is smart enough to pull back so that you can sustain that activity. That’s the definition of adaptation. It’s the metabolism pacing itself in order to maintain whatever it is you’re doing.”

This is why it’s important to do your most critical listening when you first begin work. It’s also why frequent breaks or even sleeping on a mix or arrangement are so important to retaining clarity.

“Auditory adaptation is something we’re all very familiar with,” Susan continues. “The first bite of something is delicious, by the time you get to the end of the plate you’re inert to it. Auditory adaptation happens in the first couple of minutes of listening. If you really need to hone the latter half of the song with your highest acuity, you might want to park that playhead a couple of minutes in and take a break. When you hit play, listen from the middle of the song to the end. If you play the entire song from the beginning, adaptation will kick in and that’s going to reduce the sensitivity of the hearing mechanism.”

Just like we bump a social media post to the top of a thread to retain the attention of a group, taking a break and recommencing work allows you to bump your auditory system to the top of your body’s priority list. Varying tasks has a similar effect. Work on your bass elements for a while, then move onto your drums or vocals and keep switching up your tasks so that what you’re hearing remains high on your energy system’s priority list.

Are You Experienced?

Many of these problems are more often experienced by novice or even intermediate mix engineers and producers. Those longer in the tooth tend to have a much better sense of where things should sit, gained through years of trial and error and exercising their hearing mechanism. “Folks who listen for a living actually have more acute hearing than folks who don’t necessarily need their hearing on the job,” says Rogers. “The brain is going to devote resources to the auditory system if you need it. It will grow dendritic spines, extra branches on the auditory nerve bundle. The processing nuclei just get fatter and thicker and the brain says, ‘Well I guess you’re really into this sort of thing,’ and so it takes its internal resources and beefs it up.”

Wez Clarke has worked with such high‑profile artists as Beyoncé, Ed Sheeran, Sam Smith and many more.

But all the experience in the world won’t help you if you’re listening in a new environment with poor acoustics. Build‑ups of reflections in certain areas of your room can create artificial boosts or cuts at certain frequencies depending on where you are in the room. Your bass might disappear in the listening position yet appear substantially louder than it actually is when you dip your head in the corner. Grammy Award‑winning mix engineer Wez Clarke says: “Learning your room is the most important thing for making sure your mix translates everywhere. Even in a fairly terrible room your brain will eventually learn to ignore the dips and peaks in the frequencies and filter things out as you get to know the room over time. Like, if it’s a bit live, your brain will filter out the reverb time in the room, which is quite amazing.”

It’s not as crazy an idea as it sounds. Echo suppression is a trick the hearing system uses to suppress echoes so we can pay closer attention to a direct signal. “If you’re talking with a friend in a store where the walls aren’t too reverberant, and then you walk into a big cavernous parking garage, there will be more echoes, and for the first few seconds, those echoes are going to make it harder to focus on the direct signal from your friend,” says Susan. “But right away your brain is going to suppress those echoes and clamp them down and go back to listening to your friend.”

The same phenomenon Rogers points out explains why it’s so easy to keep piling delay and echo effects onto our channels. Over time our ears simply hear past the echoes, and it’s important to bear that in mind when the ear is demanding more from the ‘wet’ knob.

Echo suppression also highlights the important role motivation plays in critical listening. If you’re keen to hear a sound, your brain will devote more energy to picking out its frequencies. And if you’re excited by a project, your brain will devote more energy to your listening system than if it were working on something you found boring or unsatisfying.

The auditory nerve bundle is a bidirectional path. When sound excites the cochlea, the signal goes up the chain, but it goes in the opposite direction too. The efferent pathway is the route by which we send commands down from the cortex to the cochlea to the outer hair cells, to tell the cochlea to home in on certain frequency bands, or maybe push the amplitude of some and suppress the amplitude of others. “We’re kind of directing our brain to hear what we want to hear or what we think we’re going to hear,” confirms Susan.

Stress, on the other hand, undermines your ability to listen critically, making it harder to concentrate, perform and stay on track. “If you’re working for a client who is making your life a living hell and you’re stressed on the job every day, get out, it’s just not worth it,” adds Susan. “You’re not going to be able to process what you’re hearing very well because the stress hormones are going to make it very difficult to do your job properly.”

Making Sense Of It All

My own approach to mixing and producing is perhaps a cynical one. No sense is to be implicitly trusted, and I try to constantly challenge my perception of what I’m hearing. To that end, I use metering and spectrum analysers to provide a safety net. These are particularly useful if you’re on stage or in an unfamiliar environment where your hearing is struggling to make quick and accurate judgements.

For mixing, Wez prefers to rely on his ears and references alone. “Putting an analyser across the mix scares me,” he laughs. “I know a few of my friends who rely on them, but I don’t use them. I do use VU meters to make sure I’m not going above zero, though. That said, if I hear a vocal and there’s a harsh frequency, for speed reasons I’ll pull up an analyser to see where it’s poking out rather than hunt around for it on an EQ.”

Meters or analysers need to be used with caution. It’s very easy to slip into a pattern where the eyes guide the ears rather than the other way around. “The human brain has so many more neural resources devoted to what we see, and hearing is so much more crude in comparison,” says Susan. “If you get vision involved, it is going to want to lead the dance.”

That’s especially true of modern workflows centred around DAWs and visual feedback. I find a simple rule to follow is to use your ears first and later check with your eyes. Using a DAW can be very visually distracting and it’s worth looking away from the screen or closing your eyes when you need to make a call on something important. Using a parametric rather than a graphic EQ, for example, is another trick that forces your ears to be the dominant sense. Challenging one sense with the other is especially useful if you’re not mixing in an acoustically treated room or with high‑end monitoring, or haven’t time to acclimatise to the room or speakers.

Reference tracks are also essential to maintaining perspective. “It’s important to compare with other things out there in a similar genre,” says Wez. “If you don’t reference something else, you can get lost in a strange rabbit hole of thinking something sounds great and then you actually reference something that’s out there in the commercial world and realise you’re miles off.”

Loudness

Loudness can affect your perception of sound. Monitoring at too high a level, for example, can skew your perception of a mix, so turn the volume down frequently. You can use an SPL meter to check you’re monitoring around 75dB C‑weighted (an often recommended level for a small room), or, failing that, use your normal speaking voice as a guide to where to set your monitors. It’s necessary to listen loud occasionally, but the rest of the time, monitor at a safe level.

When adding effects and plug‑ins, you should level match the output so that all you’re hearing is the effect, not an increase in volume. If the effect is louder, your brain will interpret it as sounding better.
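As a rough illustration of level matching, the sketch below compares the RMS level of a dry signal with its processed version and works out how much gain brings the processed signal back down to the dry level. It assumes both signals are plain lists of float samples (full scale = 1.0), and it uses RMS as a simple stand‑in for perceived loudness; a loudness meter reading in LUFS would track the ear more closely. The function names are hypothetical, not any plug‑in’s API.

```python
import math

def rms_dbfs(samples):
    """RMS level of a block of float samples (full scale = 1.0), in dBFS.

    Assumes the block is not silent (log of zero is undefined).
    """
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10 * math.log10(mean_square)

def match_gain_db(dry, processed):
    """Gain in dB to apply to the processed signal so its RMS equals the dry signal's."""
    return rms_dbfs(dry) - rms_dbfs(processed)

def apply_gain(samples, gain_db):
    """Scale samples by a gain given in dB."""
    factor = 10 ** (gain_db / 20)
    return [s * factor for s in samples]

# Hypothetical 'effect' that does nothing but double the amplitude (+6.02 dB),
# the kind of change that sounds 'better' purely because it is louder.
dry = [0.5 * math.sin(2 * math.pi * 100 * n / 48000) for n in range(4800)]
processed = [2.0 * s for s in dry]
gain = match_gain_db(dry, processed)
print(round(gain, 2))  # -6.02
matched = apply_gain(processed, gain)
```

Once `matched` and `dry` sit at the same RMS level, any difference you still hear comes from the processing itself rather than from the volume jump.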

Finally, if you’re using a reference track that has already been mastered, measure its integrated loudness (in LUFS) and apply a limiter to your own mix to raise it to a similar level. This allows fairer comparisons, but make sure you’re only limiting your mix and not the reference, and routinely take the limiter off to check how the naked dynamics of your unlimited track sound.

Room For Improvement

Test your space with room measurement software such as Room EQ Wizard (REW) and treat your room’s acoustics where appropriate. Although teaching your ears to listen through the problems is possible, attending to room modes makes life a lot easier. If you’re stuck with poor acoustics, try a room‑correction solution such as Sonarworks. Use different speakers and move around the room every now and again to challenge your brain’s perception. Also listen in a combination of environments to see how the song translates.

The Author’s Advice

Article author Gavin Herlihy in his studio.

My own approach to mixing involves using various tricks to make sure I’m not being deceived by my ears. For example, setting levels using your ears alone is often the most important part of a mix, so this is best done as soon after waking as possible. Your brain is more alert and less prone to anxiety, thanks to the presence of oxytocin, a hormone which is released in the morning. Your sleepy brain state can also be a creative asset — those emails can wait until your ears are tired. Just getting the volumes to sit well with each other can often take a mix within reach of sounding ‘finished’, provided the elements complement each other appropriately, so it’s an incredibly important part of the process requiring your utmost attention.

After some time throwing faders around to find what feels like the natural fitting of the various elements, I’ll cross‑check levels with my analyser to make sure my ears haven’t missed any untoward levels. Analysers such as Voxengo Span or Waves Paz feature graphs showing amplitude against frequency, and I find these especially useful for checking the balance of midrange and high‑frequency elements with each other. This is not absolute, of course, but it helps to set a visual reference for where the line of balance is; after that, it’s up to your artistic judgement as to whether or not the clap should be louder than the hat, say.
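To show what an analyser is actually plotting, here is a minimal sketch that turns a block of samples into amplitude‑against‑frequency pairs using a naive discrete Fourier transform. Real analysers such as Span use fast FFTs with windowing, overlap and averaging; this version deliberately skips all of that, so the test tones are chosen (an assumption for illustration) to land exactly on bin centres and avoid spectral leakage. The function name and test signal are hypothetical.

```python
import cmath
import math

def magnitude_spectrum_db(samples, sample_rate):
    """Naive DFT analyser: (frequency_hz, level_db) pairs from 0 Hz to Nyquist.

    Levels are relative to a full-scale sine, so a sine of amplitude 1.0 reads 0 dB.
    O(N^2) and unwindowed: fine for illustration, far too slow for real-time use.
    """
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):
        # Correlate the signal with a complex sinusoid at bin k's frequency
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        # DC and Nyquist bins are not mirrored, so they take 1/n instead of 2/n
        scale = 1 / n if k == 0 or 2 * k == n else 2 / n
        amplitude = abs(acc) * scale
        level_db = 20 * math.log10(amplitude) if amplitude > 0 else float("-inf")
        spectrum.append((k * sample_rate / n, level_db))
    return spectrum

# Hypothetical test signal: a 60 Hz 'kick fundamental' at half scale plus a quieter 3 kHz 'hat'.
# With 800 samples at 8 kHz, bins are 10 Hz apart, so both tones land exactly on a bin.
sample_rate = 8000
n = 800
signal = [0.5 * math.sin(2 * math.pi * 60 * t / sample_rate)
          + 0.1 * math.sin(2 * math.pi * 3000 * t / sample_rate) for t in range(n)]
spectrum = magnitude_spectrum_db(signal, sample_rate)
peak_freq, peak_db = max(spectrum, key=lambda bin_: bin_[1])
print(peak_freq, round(peak_db, 1))  # 60.0 -6.0
```

The 3 kHz tone shows up about 14dB below the 60 Hz peak, which is the kind of relative balance the author reads off the analyser before deciding, by ear, whether that balance is what the track needs.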

Too rigid an adherence to visually balancing the elements can, of course, lead to a bland mix, and this is one of the greatest dangers of welcoming an analyser into your workflow. The key is often closing your eyes or looking down while listening.

When it comes to EQ, I listen with my ears for unwanted resonant frequencies, but I’ll check the analyser occasionally, too, to ensure I haven’t missed anything. I listen to the low end with my ears to ensure it feels right, but I also keep an eye on the level of the kick or sub bass in my analyser to ensure it is in the range it needs to be. As with setting levels, it can often help to close your eyes or look away from the screen when adjusting EQ.

I mix mostly dance music, where the balance between kick and bass is integral to the success of the track. I often start by balancing the kick and bass with each other using a trick made famous by Nashville mixing legend Jacquire King. This involves using a VU meter to set their levels. Start by raising the kick until the VU meter reaches ‑3VU, which should be around ‑18dBFS on your peak meter (depending on how your VU meter is set up). I then raise the bass until the points at which both the kick and bass sound at the same time push the needle to 0VU. When two sounds clash in the same frequency area, if they are equally balanced in the mix, the overall volume should rise by about 3dB. Very small increments of change can have a huge effect on how far the needle travels, and VU meters lend themselves well to the process. I usually perform this adjustment at the very beginning of the mix, before any compression or EQ is applied to either element. It is not the final level or balance between the kick and bass by any means, but it is useful to know that both are in balance before I begin carving out spaces using EQ or compression. When balanced, grouping both elements together can help when you need to boost the low end of your mix without upsetting the kick/bass relationship.
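The “about 3dB” figure follows from adding the powers of two uncorrelated signals at equal level: 10 × log10(2) ≈ 3.01dB, which is why two sources each reading ‑3VU should combine to roughly 0VU. The sketch below makes that arithmetic explicit. It treats the meter readings as if they were power levels and assumes the kick and bass are uncorrelated; both are simplifications, since a VU meter’s ballistics and the partial overlap of real kick and bass notes mean the needle will only approximate these numbers. The function name is hypothetical.

```python
import math

def sum_levels_db(*levels_db):
    """Combined level of uncorrelated sources, given each source's level in dB (power sum)."""
    return 10 * math.log10(sum(10 ** (level / 10) for level in levels_db))

# Equal kick and bass at -3 each: the combined reading lands at roughly 0
print(round(sum_levels_db(-3.0, -3.0), 2))  # 0.01

# Bass 6dB below the kick: the sum barely moves above the kick alone,
# a sign that the bass is still well under the kick
print(round(sum_levels_db(-3.0, -9.0), 1))  # -2.0
```

For comparison, two identical signals summed in phase add as amplitudes rather than powers and rise by about 6dB (20 × log10(2)), so a reading well above 0VU when kick and bass coincide can also hint that the two are reinforcing each other strongly in the same frequency range.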

It’s equally important to challenge the balance of the two elements by closing your eyes and adjusting the bass level to where it feels most comfortable to your ears. The difference between the ear‑set and VU‑set fader positions can depend on what is happening with your room acoustics. If you’re dealing with a build‑up of bass around the same frequency range in your listening spot, or you’re in a new acoustic space that you haven’t yet become accustomed to, you might find your subjective bass level is a little too cautious and that there is a little more room for raising the bass.

If I feel my attention starting to wither with tiredness, I’ll have a nap. It’s a move I don’t take lightly, as like any other audio professional I’m under pressure to get as much work done as quickly as possible! But sometimes a well‑timed nap can rescue an afternoon: even just 20 minutes’ shut‑eye can save hours of running around in circles. Even better, sleep on it or, if you have the luxury of time, put a song away for a few weeks and forget it altogether. When you do listen again, for a few golden minutes at least, you’ll be able to hear it just like anyone else would. For the same reason, it can help to rotate projects so that you’re not always over‑listening to the same thing.

As much as analysers can help, feeling plays a huge role in the mixing process, and as music is a medium aimed at the ears, not the eyes, it makes sense that the hearing system must be in control of the lion’s share of the decision‑making process.