With a record three Album of the Year nominations at this year’s Grammy Awards, mastering engineer Emily Lazar is at the forefront of her profession.

“I guess you could say I was born to be a mastering engineer,” laughs Emily Lazar.

“I grew up in a very musical house. My mom was a guitar teacher and she taught from home, so I had that great opportunity of sitting in on all of her lessons. And then my dad was very interested in finding cool recordings and having interesting equipment. I have vivid memories of him throwing a pair of amazing headphones on me and saying, ‘Oh, listen to the bass in this recording. This is cool.’ Just even listening to specific instruments, I think, is a way of listening that most people don’t come to until later, when they’re really delving into music and finding themselves.”

Like many people who work in studios, though, Lazar’s first experiences of the music business came as an artist and songwriter. “I was that kind of demanding artist that all of the artists that I work with are. I never was satisfied with recordings of my band, and it never really sounded like what I thought it should sound like in my head. And so I was always looking for this way to decode the things that I was hearing inside my head into the actual finished product. And mastering was the ultimate moment for making that happen.

“I had worked as an engineer doing recording and mixing, and I had done a little bit of mastering on the side by myself. But, at that time, it was very much a mysterious dark art. You felt like you really only got the keys to the castle if you were able to understand what the signal flow was, what they were using, how it happened, how it all went down. So the things that I had done prior to actually being in a proper mastering facility were really me guessing and being creative.

“When I was getting my master’s at NYU, I started working at Sony Classical. And so I had a lot of classical training with the engineers there, which was really great because no artists are pickier sticklers about their tone and their sound and making sure that they’re being true to their music than classical artists. And so that was wonderful but intense training. I worked there when I was the graduate fellow at NYU and that job was my graduate internship. I was lucky to be there under the guidance of David Smith and then after that, I worked at a large NY Mastering house specifically with engineer Greg Calbi. After that, I started my own studio in downtown NY called The Lodge.”

Science & Art

Most aspects of audio engineering have a technical and an artistic dimension. Mastering is sometimes seen as being at the technical end of this spectrum, but Emily Lazar happily uses the word ‘creative’ to describe her work. “If you work with a mastering engineer who’s simply transferring from one medium to another, then that’s what it is. Sometimes I think it requires a bit more to cross the finish line. And I think, as the technology has changed, there’s certainly a lot more opportunity to be helpful when people require it. When something comes in and it’s perfect, it’s my favourite day ever and I am happy to transfer it from one medium to another. But I would say those favourite days are pretty rare.

“Sometimes it goes both ways. For example, if I’m hearing something, I’ll give someone an additional option. I’ll say, ‘Hey, this is your mix with me taking it to the best that I can in keeping it really close to the original, and here’s where I think you could go if we did these few tweaks.’ Sometimes I’ll even make another recommendation like, ‘Hey, I think I could take your mix even further if, maybe, you open up your mix on your end and do X, Y, and Z prior to sending it to me.’ In those instances I’m usually making suggestions about something that someone could do to a very specific area in the mix. These can include balance, overall width, depth and height or timbre of certain instruments or the overall colour of the effects.”

Stem Mastering

In her aim of helping the artist realise their vision, Emily Lazar pioneered ‘stem mastering’, where she works with separate files for the main elements of the mix. The concept caught on immediately with artists, but other mastering engineers weren’t always so sympathetic to the idea. “I remember when I first talked about stem mastering, all the other mastering engineers went against me, and mixers too. ‘She has no business doing this. What is she, crazy? Why would you do that?’ Now, if you go on everybody’s website, they offer stem mastering.

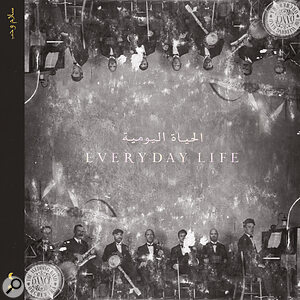

No fewer than three Emily Lazar‑mastered albums were nominated for Album of the Year at the 2021 Grammy Awards.

“The reality is it’s just so much easier to fix the drums if you’re just listening to the drum stem and fixing the drum stem, and not ruining the bass because you’re futzing around with the drums on a stereo track. That’s the truth. And people who didn’t want to do it either didn’t know that the power was there to do it, and weren’t capable, or had no understanding of how all of this really actually works and how it is really much harder to dig in and fix something without affecting other things around it that sit in the same frequency range. I mean, it’s actually impossible.

“As the technology changed, immediately I saw the freedom and the great flexibility that we would all have by using stem mixes to achieve the results that we as mastering engineers, producers and mixers, want to get to that final place. I have no interest in touching somebody’s beautiful, perfect mix. If they’re happy with their mix and they’ve come to me and they say, ‘I love this mix. I just want it to sound like itself but just that much better.’ In those instances I’m thrilled to do everything in my power to keep the integrity of the mix intact. I’m really all about making sure to preserve those aspects and serve the song in the best way possible.

“It’s only in those moments where I can suggest something that I think may serve the song better, or an area where I hear somebody complaining about something. When they’ve asked me, ‘Hey, you know, the background vocals are not coming out enough in this section.’ Or, ‘The saxophone doesn’t sound right.’ Or whatever. Only then I’ll address whatever the problem is and try to help them solve the issue the best way that I can, given the source material that I have. So if I have stems, sometimes it’s a little easier. Sometimes I open up the actual mix itself, because nowadays, with people mixing in the box, you can do that too.

“It’s a better way to achieve these things because, a lot of times, people are mixing a little bit too loud to begin with. So, once it’s hitting stereo, if it’s not right, it can be a mess. And when I say not right, that’s really not about me, that’s about when somebody says to me, ‘Man, I really wanted this thing to hit hard here and have the chorus lift and instead the whole thing sounds like it’s imploding and the chorus feels small.’ So that’s their description of something that’s wrong in their mix. I don’t usually say to people, ‘your mix is wrong.’ But I will say, qualitatively, ‘I think this part could be better. I think we could lift the choruses up here, if you want. Or we could do this in the bridge or bring back some dynamics.’ I often suggest adjusting the EQ on effects, as that’s really hard to address after the fact. For example, if a client wants to have overall air and openness on a mix it’s going to be relatively impossible to accomplish if one element in the mix is already too harsh. This happens a lot with the effects on vocals. When they are too harsh and it’s already committed to a stereo mix and you don’t have access to the stems or the mix itself it’s very difficult, if not impossible, to achieve the goal without adversely affecting other aspects of the mix. Trying to creatively solve these problems is the biggest challenge of mastering. I very much enjoy being able to have the dialogue about the problem areas and overall goals with my clients, whether they be the artists themselves, the producer, the mixer, whoever. That dialogue inspires me to deliver the results they are looking for.”

It Might Get Loud

Stem mastering, then, can be one way of dealing with the perennial problem of mixes that have become ever louder and more compressed. It’s a complex issue and not one that admits of a simple solution. “The loudness wars are the bane of my daily existence. If I could snap my fingers and make them go away, I would snap them so hard my fingers would fall off. I don’t like to have to compete because something’s a 10th of a dB louder than something else. It’s not the point of what I do, it’s not the artistry of what I do, it’s not the creativity of what I do. I think that problem starts way back in the chain beginning with the demos, because when the demos are blasted then the mixer hears the demos that way and is forced to try to ‘beat’ the demos — the poor mastering engineer is left with already blasted mixes that have to become masters that are even louder and bigger. It’s a mess and obviously terrible for the end result’s overall sound quality.

“But I do also understand that there are people — not everybody — but there are people that want things to sound really loud and aggressive. And that’s fine. I can think of a bunch of records with artists where they were really like, ‘I just want this thing to be screaming. I want it to feel aggressive. I want people to feel pain and fatigue.’ And you’re like, ‘OK, that’s an artistic statement too.’ And that’s valid, it’s totally valid.

“And it’s interesting to think about because this aesthetic also has to do with when you grew up, where you grew up, and what you listened to. So if you grew up on horribly distorted MP3s, or CDs, or now streaming Ogg Vorbis versions of things on Spotify or whatever, these are all just different representations and flavours of what the thing actually is. And there have been artists that have been addicted to the sound of MP3s that have said things to me like, ‘You know that shreddy stuff on the top?’ And I’d say, ‘The distortion that’s not supposed to be there? The compression, is that what you’re talking about?’ ‘Yes. I love that. Makes me excited.’ I’m like, ‘Really? Interesting…’

“And clearly, it’s really about the person turning the knobs: how they heard things, how they want to hear things, what they’re trying to accomplish, what that aural picture is that they’re trying to paint. And that’s the difference between somebody who knows what they’re doing and somebody who maybe gets lucky once, but most of the time makes a big, soupy mess and doesn’t really know what they’re doing. There’s nothing wrong with experimenting and turning and twisting until you like how it sounds. I have no problem with any of this. But I do definitely see the possible pitfalls of people having very powerful tools and not really understanding how to use them. Knowing how to achieve the right overall target levels — decibels versus LUFS. Knowing where you should be regarding loudness and understanding how you should distribute your music, both which formats and at what levels and what stage of the game it should be in those formats and at those levels. It’s a lot and it changes quickly. So, yeah… the loudness wars.”

Going With The Flow

The loudness wars are a relatively new headache compared with perhaps the biggest challenge for any mastering engineer: making a disparate collection of tracks work together as a coherent whole. “The creative part is creating a piece of work that communicates something that makes the hair on people’s arms stand up or makes them cry or makes them jump up and down. That’s what music does, and there are ways to help amplify that through the storytelling. I think when you’re listening to an album, a great sequence is very important for it as a piece of art. That part of the groundwork for an album, creating a space where everything makes sense and fits together, no matter when and where it was recorded or by whom, that’s very important to effectively telling the story.

The Lodge features a huge range of monitoring systems, from large ATC monitors down to multiple AirPods!

“For people getting into the mastering game right now, instead of just being lost in space and having a collection of songs sitting in front of you and not knowing where to start, I think talking to the people that you’re working with is really helpful. Getting to know them as people can really inform the overall artistic direction, so asking seemingly obvious questions like ‘What were you trying to do here? What happened to you this past year? What is this thing about? Who is your intended audience?’ can make it all come into focus a little bit better and you can then start to decipher how to translate that into sound and into colours of sound. For me, it’s a very colourful experience, working with music, making an album and working with artists. So I usually have conversations that are less about frequency and technical process and more about vibe, colour, flavour, tastes, locations, stories and feelings. That’s what keeps me really interested as a listener. I think it is that perspective that makes my clients gravitate toward me. We have conversations that are more about songs and stories translating more than talking about frequencies.

“When we do have to talk about frequencies, we do. When I hear something and someone sends me a mix to listen to and I think that there’s a buildup of 350Hz, I’m the first person to say, ‘Hey, I got some resonance here that I think is hurting your end result. Go check it out, cut it out, try to do this.’ And I’ll tell them flat out in the most technical terms possible. But that’s really only to help serve whatever is not being communicated through the song, right? Maybe it’s dragging down the vocal, maybe it’s making the drums less powerful, maybe it’s not letting the guitars sing.

“I use this analogy a lot, but I really am like a midwife trying to facilitate the happy, healthy birth for this beautiful baby. And I do believe that artists feel that kind of same feeling that parents do when they’re having their children. It’s obviously more intense when you’re having an actual child, but putting a song out into the world is a very vulnerable moment. Letting it be judged and appreciated can be very nerve‑wracking for a creator. For everybody who’s involved (artist, engineer, producer and mastering engineer alike), it’s their work on the line, and I very much like to help in any way that I can to lay those fears to rest and create the best possible outcome.”

On Streaming

Today, music is consumed mainly through online streaming platforms such as Spotify, Tidal, YouTube and Apple Music, all of which implement loudness normalisation. Some see this as bringing an end to the loudness wars: if all music is normalised to a fixed LUFS value on playback, there’s no advantage in reducing its dynamic range at the mix or master. In practice, though, the situation is more complex than that. As has already been mentioned, competitive loudness is as much an issue during the production process as it is in delivery. And the proliferation of different streaming services, each with its own codec and opaque standards, has proved problematic for conscientious mastering engineers like Emily Lazar.
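As a rough illustration of the normalisation argument above, the sketch below computes the playback gain a service might apply to bring two masters to a common loudness. It is a minimal sketch, not any platform's actual behaviour: the function names are hypothetical, the -14 LUFS target is an assumed example value, and real services differ in their targets, in whether they ever turn quiet tracks up, and in how they treat peaks.

```python
def normalisation_gain_db(measured_lufs: float, target_lufs: float = -14.0) -> float:
    """Gain in dB needed to move a track from its measured loudness to the target.

    target_lufs is an assumed example value, not a figure taken from any service.
    """
    return target_lufs - measured_lufs


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude multiplier."""
    return 10 ** (gain_db / 20)


# Hypothetical integrated loudness readings for two masters of the same song.
masters = {"dynamic master": -16.0, "heavily limited master": -8.0}

for name, lufs in masters.items():
    gain = normalisation_gain_db(lufs)
    print(f"{name}: {gain:+.1f} dB (x{db_to_linear(gain):.2f} amplitude)")
```

Under these assumptions the heavily limited master is simply turned down, so the level it gained through limiting is removed on playback while the loss of dynamics stays.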

“Now that so much listening is done on streaming with these weird, distilled streaming versions of their music, and not enough people are actually hearing the WAVs or heaven forbid the high‑res WAVs, I think it’s actually super confusing for people to hear what things sound like against other things. So I think this idea of, ‘It doesn’t have to be as loud because there’s quote‑unquote normalisation’ — which I’m not a huge fan of, because each DSP has their own way of handling the files — I think it’s created quite a lot of confusion. Because, as a listener, you don’t know what’s going on. Every time you update your software, or your phone, you never know what’s going to change. As an artist or creator it’s even more confusing.

“Every streaming service sounds different to me. And every different service sounds different to me over time, as operating systems change. Who knows? It’s proprietary information for them, how they are compressing and ingesting the files and what they’re actually doing. Nobody really knows. Nobody exactly knows what they’re making. So even just this idea of trying to audition things in an impossible scenario is really, I think, one of the biggest problems for mastering engineers to contend with. That’s the other bane of my existence. The loudness war can be one, and then trying to deal with the never‑ending, always‑changing final resting place for these files. I want to make the master that everyone’s listening to when my name is on it. I don’t want some other thing in between that is changing it yet again.”

Some mastering engineers’ response to this impossible situation is just to create a single master that, they think, represents the best possible version of the track. Not so Emily Lazar, who meticulously researches what will sound best on different platforms and will supply separate versions optimised for each. For similar reasons, she doesn’t only listen on the large ATC, Genelec, ProAc and Duntech systems at The Lodge. “I listen on really large speakers, and really small speakers. So many, so many different things — you would be like, ‘Wow, this is kind of psychotic’ — even down to earbuds, AirPods, different versions of AirPods, other brands of wireless in‑ear headphones. When we’re doing things in Atmos we, of course, listen on every other Atmos playback that we can get our hands on. In‑house we have consumer playback scenarios like the Echo Studio and the Sonos Arc as well as our properly tuned Atmos mastering rooms. And for surround it’s the same thing. So yeah, it’s kind of exhausting. But that’s what it is, right? If I were going to the greatest heart surgeon in the world, I would want to think that he was exhausting all the possibilities to get me the best possible result. And so that’s how I view us as trying to exhaust the possibilities to get people the best possible result.”