SiSoftware's Sandra provides a huge range of modules for investigating and testing various aspects of a PC's performance.

PC paper specifications can vary so much that it's hard to know how one PC compares to another in terms of its performance with real-world audio apps. Hence the need for tests designed just for music PCs...

Six months ago, in SOS June 2003, I explained how to specify a new PC to suit your needs, taking into account how many audio tracks, plug-ins, software synths and software samplers you intended to run, and just what PC resources each facility consumed. However, there's currently a huge amount of interest in the other side of the coin — just how many of each of these things an existing PC (or Mac) can run, as a measure of its performance. You've only got to look at the adverts for the new G5 Macs to see what a sweat everyone's in about which computer format will 'play more plug-ins'.

Traditional benchmark tests can shed a lot of light on how a particular computer performs general office duties (a particular set of tasks can be timed) and runs games (the frame rate — number of refresh cycles per second, and therefore 'smoothness' and responsiveness — can be directly measured). Even multimedia applications such as MP3 encoding or video transformations can be timed — but performance with music applications can't be so easily quantified.

This is largely because there's no such thing as a standard way of using a music application. Each musician will approach each song in a unique way. While some will (for instance) exclusively record audio tracks, others will mostly rely on triggering external MIDI synths, while others solely use soft synths to create their compositions.

So while the ability to run 1600 bands of EQ plug-ins using only 25 percent of a Mac G5's resources may sound impressive, the truth of the matter is that bundled plug-in EQs tend to be optimised for low CPU overhead rather than ultimate audio performance, and no-one in their right mind is likely to need more than a few dozen at a time in the real world anyway. Personally, I'm not interested in platform wars — I started getting requests from clients about 12 years ago to support the PC, so that's what I bought and learned — but the bigger question still remains: how best can you test the performance of a PC with music applications?

Measuring Hard Drive Music Performance

The number of simultaneous audio tracks you'll manage with a particular drive is primarily determined by its rotational speed, and therefore its sustained transfer rate. However, while this figure is useful as a measure of overall performance, music applications play back audio tracks by reading a chunk of each in 'round robin' fashion, so there's a lot of jumping about by the read/write heads as they access each chunk in turn. The size of each chunk determines how often the heads have to jump to the next location, and this is determined by the 'block buffer size' in your audio application.

So when you specify how many simultaneous audio tracks a particular partition, drive or PC system as a whole can manage, you have to state the format of the audio (16-bit, 24-bit, 44.1kHz, 96kHz, and so on), which determines the amount of data used by each track, and the block buffer size. DskBench (www.sesa.es) does exactly this for the PC, which is why I always report its findings when testing PC systems.
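The arithmetic that links audio format to track count can be sketched in a few lines of Python. This is only a rough illustration and not DskBench's actual method: the function names and the 40MB/second sustained transfer rate are assumptions chosen for the example, and the sketch ignores head seeks and block buffer size entirely, so it gives an upper bound rather than a prediction.

```python
def bytes_per_second(bit_depth, sample_rate_hz, channels=1):
    """Data rate of one uncompressed audio track."""
    return bit_depth // 8 * sample_rate_hz * channels


def max_tracks(sustained_rate_mb_s, bit_depth, sample_rate_hz):
    """Upper bound on simultaneous mono tracks, ignoring seek time."""
    rate_bytes = sustained_rate_mb_s * 1_000_000  # assumes 1MB = 1,000,000 bytes
    return int(rate_bytes // bytes_per_second(bit_depth, sample_rate_hz))


if __name__ == "__main__":
    # 40MB/second is a hypothetical drive figure, not a measured one.
    for depth, rate in [(16, 44_100), (24, 44_100), (24, 96_000)]:
        print(f"{depth}-bit/{rate}Hz: up to {max_tracks(40, depth, rate)} tracks")
```

Moving from 16-bit/44.1kHz to 24-bit/96kHz more than triples the data per track, which is why a track count quoted without its format means very little.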

It's important to make sure that the drive has been defragmented before you start, since you don't want additional read/write head activity to compromise the results, and the easiest way to do this is to test an empty drive or partition. Since sustained transfer rate also varies from the outside to the inside of the drive, I also measure the difference in performance for the system partition (nearly always the outermost, and therefore fastest partition), and the separate audio partition or drive if one is available.

Unfortunately, DskBench only provides a theoretical maximum number of tracks, based on the drive's sustained transfer rate when running eight tracks with differing buffer sizes, and when running a real audio application under Windows other factors come into play. These include the 133MB/second PCI bandwidth, which may limit the final track number in some cases, depending on how much bandwidth is already being consumed by other PCI devices, such as graphics cards, and more particularly by the current crop of DSP-assisted audio accelerator cards such as TC Works' PowerCore and Universal Audio's UAD1. Moreover, some motherboards may themselves limit the maximum PCI bandwidth, although you're unlikely to find mention of this in their specification. All this will disappear once we're using the forthcoming PCI Express technology, which supports a massive 8Gb/second!

Another factor is the way the audio tracks are constructed. Long and continuous multitrack takes will exercise the hard drive in a rather different way from songs created from lots of short parts scattered over the hard drive.

You also have to ensure that unique data is being read for each track, so that the drive is getting a realistic workout (playing back the same data on multiple tracks is likely to compromise the results, due to buffering), but sadly this means that a huge amount of data is involved. Anyone formulating such a test will probably find that the sheer size of it prevents making it downloadable to other musicians. So ultimately I still stick with DskBench, as it provides an extremely useful ball-park figure for hard drive performance.

Practical Uses Of Test Software

Before we get into discussing music tests, let's pause for a moment to consider what other benefits there are in testing our own PCs — barring the simple one-upmanship often found between Intel and AMD processor users!

One of the most obvious benefits comes with using DskBench (see 'Measuring Hard Drive Music Performance' box, above). Whatever Windows tells you, it can be extremely reassuring to see not only how fast your hard drive is performing, but also that Bus Master DMA has been correctly enabled. Without it, your CPU overhead may be 20 percent or more simply when playing back audio tracks, while with it enabled this figure will typically drop to less than one percent, and you'll be able to run a lot more plug-ins and software synths. Other useful data is available via other test software. The Drives Information feature of SiSoftware's Sandra (www.sisoftware.net) or Powerquest's Partition Magic (www.powerquest.com) will tell you how your hard drives have been formatted: system drives should ideally have clusters of 4KB or smaller to avoid wasting space when large numbers of small files are likely.
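To see why cluster size matters on a drive full of small files, here is a rough sketch of the wasted ('slack') space calculation. The function name and the example file sizes are my own illustrative assumptions, not figures reported by Sandra or Partition Magic.

```python
def wasted_bytes(file_size, cluster_size):
    """Slack space left in the last cluster allocated to one file."""
    clusters = -(-file_size // cluster_size)  # integer ceiling division
    return clusters * cluster_size - file_size


if __name__ == "__main__":
    # Hypothetical case: 10,000 small files of 1,500 bytes each.
    for cluster in (4_096, 32_768):
        total = 10_000 * wasted_bytes(1_500, cluster)
        print(f"{cluster}-byte clusters waste {total / 1_000_000:.1f}MB")
```

Every file occupies a whole number of clusters, so the smaller the files, the more a large cluster size costs you.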

Running a test such as Nero's CD Speed will check that your CD-R/W drive is using DMA. Without it, the top speed of a modern fast CD-R/W drive may be halved and CPU usage at faster speeds may be several times higher. I've also measured drives whose Burst rate has more than doubled once DMA has been enabled. It may even help you decide on the best choice of E-IDE connection to achieve the most reliable burns or fastest transfer rates.

When it comes to RAM, running a test such as Sandra's Mainboard test or using CPU-Z (www.cpuid.com) will show you whether your new RAM has been correctly recognised for CAS Latency by its SPD (Serial Presence Detect) function, for maximum performance, while if you're tweaking its speed by hand, running Sandra's Memory Bandwidth test will show how much (if any) improvement you've achieved.

For most musicians, it's the processor that becomes the limiting factor with audio software, and running benchmark tests for CPU performance will tell you how your particular PC measures up to others. This can help you decide whether or not you need to upgrade and, if you choose to do so, how much improvement you're likely to get with a particular CPU speed and type. Running benchmark tests before and after a Windows or BIOS tweak can also help you see whether or not it has made any measurable difference to performance, particularly when it comes to overclocking the CPU.

Multiple Plug-in Tests

However useful benchmark tests are, they still don't provide a way to quantify music software performance with a particular PC. The only real way to do this is to run music software — and there are two main approaches we can take.

Many plug-ins now consume tiny amounts of CPU overhead, so running multiple instances of them in an application such as Wavelab 4 can make measurements more accurate. Here, eight instances of Waves' REQ6 take 13.2 percent, so each one takes 1.65 percent of my Pentium III 1GHz CPU.

The first involves setting up one task, such as running a particular plug-in or softsynth, or an audio track, and either reporting the resources each instance takes, or ramping up identical tasks until the PC falls over, and reporting the maximum number of instances. The second approach involves the creation of a notional 'real world' song incorporating a selection of simultaneous audio tracks, plug-ins, and soft synths, and reporting on the CPU and Disk meter readings given by the particular application.
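The per-instance arithmetic behind the first approach is simple enough to sketch. The function names are hypothetical, and the extrapolation to a maximum instance count assumes the load scales linearly with the number of instances, which real hosts only approximate.

```python
def per_instance_percent(total_percent, instances):
    """Average CPU overhead of one instance, from a multi-instance reading."""
    return total_percent / instances


def estimated_max_instances(total_percent, instances, ceiling_percent=100.0):
    """Linear extrapolation of how many instances fit under the ceiling."""
    each = per_instance_percent(total_percent, instances)
    return int(ceiling_percent // each)


if __name__ == "__main__":
    # Eight instances reading 13.2 percent, as in the Wavelab example.
    print(per_instance_percent(13.2, 8))
    print(estimated_max_instances(13.2, 8))
```

In practice the PC will usually glitch some way short of the extrapolated figure, so ramping up until it falls over remains the more trustworthy measurement.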

Running Logic Audio Platinum reverb plug-ins, for example, certainly provides a good measure of performance, and because this particular plug-in is widely used by Logic owners, it's an ideal way to measure the relative performance of various computers. Since Logic Audio is still a cross-platform application (despite the politics), Mac/PC comparisons can also be made. And because reverbs need rather more RAM than most other plug-ins to calculate the lengthy tails, as well as more intensive CPU calculations than most other types of plug-in, it's also easier to get meaningful results without having to run dozens of simultaneous instances.

DNS Studios have a set of four Logic songs available for download, the first of which solely tests Platinum reverbs, and the second the more CPU-intensive Spectral Gate. The third and fourth add 32 audio tracks to check mixing and DSP performance, but since the same sample is used for each track, hard disk activity is minimal. In its favour, this approach largely removes the variables of hard drive and IDE controller performance, fragmentation, format type, and other connected devices on the PCI bus, which makes it easier to directly compare the CPU performance of different computers when running Logic Audio.

However, on the down side, it can scarcely be described as a real-world test, since it's rare to require more than two or three reverbs in a song. Also, while Cubase users could perform a similar test with its bundled Reverb A and B plug-ins, these results perhaps wouldn't have as much practical meaning, since so few musicians seem to use these particular plug-ins. Similarly, Sonar 2 users might not find the Cakewalk Reverb and FXReverb plug-ins too useful for test purposes. The new Sonar 3 includes a brand new Lexicon Pantheon reverb plug-in, which is no doubt destined to be popular, and will perhaps make a more suitable basis for testing.

The huge advantage of using bundled plug-ins for testing computer audio performance is that every user can duplicate your tests, but of course the test results are only useful to users of that particular MIDI + Audio sequencer, and even (possibly) users of the same version as the one tested, as different optimisations may be included in successive versions. One way to avoid such limitations is to take as your test example a plug-in with a variety of applications that is widely used by musicians. This is why over the years I've used Waves Rverb and C4 as test plug-ins.

General-Purpose PC Benchmark Tests

There are already various software test suites available to test certain aspects of PC audio performance, such as RightMark's Audio Analyser (http://audio.rightmark.org, and my current favourite for testing PC soundcard audio quality), PC Magazine's Audio WinBench (www.etestinglabs.com/benchmark) and RightMark's new 3DSound for measuring various aspects of DirectSound and DirectSound3D streaming performance with games, although the latter two are largely irrelevant to musicians. There's also a multitude of simpler utilities aimed at confirming that your soundcard, speakers, and microphone are correctly connected and can play back and record with no problems, such as Passmark's SoundCheck (www.passmark.com).

Most PC magazines also use a range of different tests to measure different aspects of PC performance, but although very few of them bear any direct relation to music making, some can still be useful. Apart from its extensive selection of Information modules about various aspects of a PC's subsystems, SiSoftware's Sandra utility does provide a useful set of CPU Performance, Multimedia Performance, and Memory Performance benchmarking modules that can make it far easier for you to compare your PC's performance with others in PC magazine and web site reviews.

Mad Onion's PCMark2002 (www.madonion.com) measures 'generic PC performance in home and office use', but the free version is a comparatively small 8.5MB download, and provides useful measurements of CPU, memory, and hard drive performance with typical tasks. The Pro version adds, among other options, a Battery test for the life of laptops away from the mains, plus video and DVD performance tests.

Other benchmark suites, like Ziff Davis' Business Winstone, concentrate on specific applications such as Microsoft's Access, Excel, Frontpage, Powerpoint and Word, which isn't a lot of use to musicians, but their Content Creation Winstone applications include Photoshop, Windows Media Encoder, and Sonic Foundry's Sound Forge 5.0c, which are slightly more relevant to our requirements.

Soft Synth Tests

For those who use them in their songs, running soft synths is perhaps a better way to test PC music performance, since it's comparatively easy to create a test song that plays a huge number of simultaneous notes and thus quickly exhausts the CPU power of the PC under test. This provides a more real-world result, as many musicians find that they run out of steam with soft synths playing large numbers of notes long before they exhaust their PC running plug-ins. This is because while inserting an extra plug-in imposes a small but fixed CPU overhead, launching a soft synth and playing it can result in a widely varying and often taxing CPU load, unless you're careful about restricting maximum polyphony.

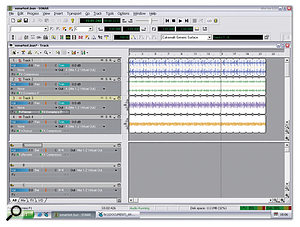

Here's a practical test for the majority of soft synths: a song that starts with four very long MIDI notes, and then introduces four more at a time at the beginning of each new bar. When your PC glitches or 'falls over' you can read off the maximum polyphony you achieved. My PIII 1GHz PC manages 88 notes when running NI's Pro-53.

Soft synths use two types of engine: dynamic and fixed. The first (and most popular) type imposes a tiny basic overhead when launched, and then each additional note played consumes a little more CPU. The quickest and easiest way to measure performance with this type of engine is to stagger the start times of long MIDI notes, so that you see the CPU meter slowly rise as it proceeds through the song. Then at the point when the test computer falls over you should know how many notes were playing. I draw in clutches of four new notes at the start of each bar, so by the beginning of bar four there are 16 notes playing, by bar eight there are 32, and so on (see screenshot). I've used this test in the past to compare review PCs using Cubase and Pro-53.
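The bookkeeping for this staggered-note test can be sketched as follows. The function names are hypothetical, and the code only counts notes; it doesn't generate any MIDI data.

```python
def notes_playing(bar, notes_per_bar=4):
    """Notes sounding once the new notes at the start of `bar` (1-based) are added."""
    if bar < 1:
        raise ValueError("bars are counted from 1")
    return bar * notes_per_bar


def bar_for_polyphony(polyphony, notes_per_bar=4):
    """First bar at which at least `polyphony` notes are sounding."""
    return -(-polyphony // notes_per_bar)  # integer ceiling division


if __name__ == "__main__":
    for bar in (4, 8, 22):
        print(f"bar {bar}: {notes_playing(bar)} notes")
```

So if the PC falls over during a given bar, multiplying the bar number by four gives the polyphony it was attempting at the time.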

The second type of soft-synth engine has a fixed CPU overhead depending on the maximum number of notes you require, and is used by more complex soft synths. The two main examples of this type that I know of are Reaktor and Tassman. Although this fixed overhead can be frustrating when writing songs, it does make it easier to use them for test purposes: you just dial in increasing numbers for the maximum number of notes, and stop just before the CPU runs out. Generally you don't even have to play a song, since the overhead is constantly indicated for the current maximum selected polyphony.

There are still things to watch out for with soft-synth tests. If different functions, such as multiple oscillators, filters and LFOs, can be selectively disabled to reduce CPU overhead, you must fix on which patch you're running, to allow repeatable results. Many soft synths also cap maximum polyphony (the Cubase bundled JX16 at 16 notes and NI's Pro-53 at 32 voices, for instance), so if you want to test with more voices than this, you need multiple tracks, each adding more voices to the overall total.
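Working out how many tracks a capped synth needs for a given voice total is a simple division, sketched below. The function name is hypothetical, and the sketch assumes one synth instance per track, each filled to its cap before the next is started.

```python
def split_voices(total_voices, cap_per_instance):
    """Spread `total_voices` over as few capped synth instances as possible."""
    if cap_per_instance < 1:
        raise ValueError("cap must be at least one voice")
    full, remainder = divmod(total_voices, cap_per_instance)
    return [cap_per_instance] * full + ([remainder] if remainder else [])


if __name__ == "__main__":
    # Caps quoted above: 32 voices for Pro-53, 16 notes for JX16.
    print(split_voices(88, 32))
    print(len(split_voices(88, 16)))
```

Bear in mind that each extra instance adds its own small launch overhead, so results from multi-instance tests aren't strictly comparable with single-instance ones.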

The Real World Tests

When it comes to creating an all-round test that runs a selection of audio tracks, plug-ins and soft synths, you have some very tricky decisions to make. Unlike the vast majority of mainstream benchmark tests, which are timed from start to finish and therefore provide an accurate and fairly repeatable result, music applications are run in real time, and consume a proportion of the PC's resources. A very slow and an extremely fast PC would both provide useful general-purpose benchmark figures for comparison, but for the same two PCs to complete a real-time music benchmark the test must be carefully structured. It must not exceed 100 percent CPU overhead with the likely slowest PC to be tested, but must still provide figures that exceed 20 percent or so with the fastest PC available, since below this figure it's often difficult to measure some CPU displays accurately, particularly with the bargraph approach of Cubase.
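The constraint described above can be put into numbers. In this sketch the function names are hypothetical and the load is assumed to fall in direct proportion to how much faster the second PC is, which is a simplification; the 20 and 100 percent limits are the ones given in the text.

```python
def load_on_faster_pc(load_on_slow_percent, speed_ratio):
    """Estimated meter reading on a PC `speed_ratio` times faster."""
    return load_on_slow_percent / speed_ratio


def fits_window(load_on_slow_percent, speed_ratio, floor=20.0, ceiling=100.0):
    """True if both PCs give a reading inside the usable meter range."""
    fast = load_on_faster_pc(load_on_slow_percent, speed_ratio)
    return load_on_slow_percent <= ceiling and fast >= floor


if __name__ == "__main__":
    for ratio in (2, 3, 5, 6):
        print(f"fastest PC {ratio}x quicker: usable = {fits_window(99, ratio)}")
```

On these assumptions, a single test song can only span PCs whose performance differs by a factor of about five before one end of the range becomes unreadable.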

The 'FiveTowers' test for Cubase SX is a useful measure of CPU performance, although reporting an accurate CPU meter reading can be difficult.

For the last year or so, Cubase SX users have had the downloadable 'FiveTowers' song to provide some useful comparative test results. Although it does replay a few audio tracks, these are primarily employed to run a selection of the bundled VST plug-ins, along with a further selection of VST Instruments. One huge advantage of any song-based approach is that it ought to work on any platform supported by the host application, but some Mac users have reported very poor results with the 'FiveTowers' test, suggesting that Steinberg haven't optimised their code for the Mac platform.

Quite a few users posted their results for the first public release (1.0) of the tests, and you can still find this information on Timo's Cubase SX web site (http://cubase.freezope.org/perftest/intro). However, version 2.0 of the test includes "a more realistic balance of VST Instruments and plug-ins, based on user feedback", and is more suitable for current high-end systems. In fact, there are so many plug-ins running that my Pentium III 1GHz machine just hits the Cubase CPU meter end-stops at 99 percent.

Unfortunately, unlike a test that provides a numeric result, the 'FiveTowers' test has to rely on each user reading the Cubase CPU meter accurately, and it isn't that easy to read a bargraph display with ambiguous markings. If you want to perform the test yourself, try temporarily dropping your screen resolution down as far as it can go (640 x 480 pixels for instance). This shouldn't make any difference to the test result, but it will make the meter a lot larger on screen, so you can read its value more easily.

Also, once you lower your latency below about 12ms, CPU overheads start to rise, because of the frequency of interrupts. For this reason you should temporarily increase your latency to 50ms or more, to ensure that the CPU meter doesn't register any extra load due to the soundcard buffer size.
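The link between latency, buffer size and how often the soundcard demands attention can be sketched like this. The function names are hypothetical, and the sketch assumes that the quoted latency corresponds to a single buffer at a 44.1kHz sample rate; many drivers use two or more buffers, so treat the figures as indicative only.

```python
def buffer_samples(latency_ms, sample_rate_hz=44_100):
    """Buffer length in samples for a given single-buffer latency."""
    return round(latency_ms * sample_rate_hz / 1000)


def buffers_per_second(latency_ms):
    """How many times per second the buffer must be refilled."""
    return 1000 / latency_ms


if __name__ == "__main__":
    for ms in (3, 12, 50):
        print(f"{ms}ms: {buffer_samples(ms)} samples, "
              f"{buffers_per_second(ms):.1f} refills per second")
```

At 50ms the buffer is refilled only 20 times a second, so the fixed cost of servicing each refill becomes a negligible part of the CPU meter reading.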

Like most of the previously mentioned music tests, the 'FiveTowers' song primarily tests CPU performance, but not the effects of your RAM or hard drives. Also, since few, if any, of the bundled plug-ins and instruments used are likely to be optimised for Pentium 4 processors, P4s will be at a disadvantage.

I've run this test on several recent review PCs and achieved repeatable results that make sense. With care, you can also extract some useful information from the nearly 200 user results posted on the 'FiveTowers' web site, but I'm suspicious of many of them. Sometimes two very similarly-specified systems achieve very different scores, while one user claims to be running an Athlon XP 1700+ whose performance equals that of three other machines running Athlon XP 2700+ processors. Many other Athlon owners with overclocked machines seem to be unsure which column to use for the rated processor speed and which for the overclocked speed. Despite these anomalies, the 'FiveTowers' test is potentially a useful one.

Sonartest

The downloadable 'Sonartest' is a handy way to measure your PC's processor performance with the bundled Sonar plug-ins, and its numeric CPU readout is far easier to read than the Cubase bargraph display.

Sonar users looking for a way to test their PCs can download the Sonartest.bun file from the Cakewalknet web site. It uses a mix of Sonar's bundled plug-ins, in both insert and aux send configurations. As in the case of the 'FiveTowers' test, it's important with the 'Sonartest' file to run your soundcard with a high latency of 50ms or more, to minimise the effects of buffer overheads.

Like Wavelab, Sonar provides a numeric readout of its CPU overhead, which makes for fewer mistakes and more accurate results. But just to prove how difficult it is to provide really hard-and-fast answers when it comes to PC performance, on the same web site you can find graphs of the comparative performances of an Intel 2.8GHz P4 and AthlonXP 2800+ running a range of plug-ins. While many of the results put the Athlon at an advantage, others favour the P4, and of course updated versions of the test plug-ins could shift the balance yet again.

Final Thoughts

All tests mentioned here are useful in comparing your PC's performance against other PCs, and some of them are also handy if you want to weed out potential problems with your machine. After all, if your results are very different from someone else's with a similar spec, you may be able to track down an incorrect BIOS or Windows setting that explains the discrepancy. I've spotted quite a few musicians posting on the SOS Forums over the years who were managing comparatively few tracks of audio, but didn't realise that there was anything wrong until talking to others. Often, the solution is only a few clicks away.

The closest to 'real world' tests are those I've referred to that run on your chosen MIDI + Audio sequencer. Ultimately, however, they don't provide irrefutable answers to the question of what is the 'best' machine, since so many factors have to be taken into account — and, of course, there is no definitive way to use music software anyway. Nevertheless, such tests can give you a ball-park feel for how your machine compares with others.

As always, the easiest way to find out whether or not your PC is up to the job you're asking it to do is to try it out for yourself. There are plenty of things you can do to squeeze a little more out of a PC, such as restricting softsynth polyphony, or 'printing' audio tracks with plug-ins to release some CPU back into the pool, but sometimes a look at what other people have achieved with their own PCs can make you realise that it really is time to upgrade.