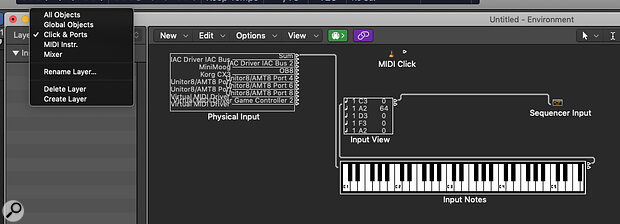

Screen 1: The MIDI input/output window in the Click & Ports Layer.

We demonstrate how flexible Logic Pro’s MIDI processing tools can be.

In 1983, arguably the second most successful computer networking protocol was born. Unlike the protocols that underpin the Internet, which grew out of academic research funded by the US military, the Musical Instrument Digital Interface (MIDI) was created by a consortium of synthesizer manufacturers led by Dave Smith of Sequential Circuits and Ikutaro Kakehashi of Roland. At first, this new protocol was mostly used to connect synthesizers and drum machines together, but the advent of powerful and affordable computers such as the Atari ST led to the development of the grandparents of the Digital Audio Workstations we use today.

MIDI 1.0 uses 7‑bit data bytes, so it offers 128 discrete note numbers (0‑127), 128 velocity values and 128 Continuous Controller (CC) numbers, each of which can send 128 values. Some controller numbers are reserved for common purposes, such as modulation (CC 1) or sustain (CC 64), while others are left undefined for the user or manufacturer to implement as they wish; pitch bend, by contrast, has its own message type. There’s also System Exclusive (SysEx), which allows manufacturers to create control data specific to their products and which is used in such things as MIDI editors and patch librarians. The MIDI 2.0 protocol, introduced in 2020, is backwards compatible with version 1.0 and adds new features such as higher‑resolution velocity and controller data and per‑note controllers, but the length of time between versions 1 and 2 demonstrates how much they got right first time.
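To make the 7‑bit structure concrete, here is a minimal Python sketch (not Logic code; the function names are hypothetical) of how a MIDI 1.0 channel message is laid out: a status byte whose top four bits give the message type and bottom four bits the channel, followed by two 7‑bit data bytes.

```python
# Minimal MIDI 1.0 channel-message helpers (illustrative sketch only).
# Status byte: high nibble = message type, low nibble = channel (0-15 on the wire).
# Data bytes: 7 bits each, so every value runs 0-127.
# For Note On, data1 = note number and data2 = velocity;
# for Control Change, data1 = controller number and data2 = value.

NOTE_ON = 0x90
CONTROL_CHANGE = 0xB0


def channel_message(kind: int, channel: int, data1: int, data2: int) -> bytes:
    """Build a three-byte channel message; channel is 1-16, as shown in Logic."""
    if not 1 <= channel <= 16:
        raise ValueError("MIDI channel must be 1-16")
    for value in (data1, data2):
        if not 0 <= value <= 127:
            raise ValueError("data bytes are 7-bit (0-127)")
    return bytes([kind | (channel - 1), data1, data2])


def describe(message: bytes) -> tuple[str, int, int, int]:
    """Decode a three-byte message into (type, channel 1-16, data1, data2)."""
    status, data1, data2 = message
    names = {NOTE_ON: "note on", CONTROL_CHANGE: "control change"}
    kind = names.get(status & 0xF0, "other")
    channel = (status & 0x0F) + 1
    return kind, channel, data1, data2


# Example: CC 20 at value 7 on channel 1
message = channel_message(CONTROL_CHANGE, 1, 20, 7)
print(message.hex(), describe(message))
```

Keeping the channel as 1‑16 at the interface, and subtracting one only when packing the status byte, mirrors the way Logic and most hardware present MIDI channels to the user.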

Emagic’s Logic (as was) was an early entrant into the marketplace and was notable for its powerful, object‑orientated MIDI data‑manipulation tools. Although few of us use a MIDI interface to drive banks of synthesizer and effects modules these days, the protocol still underpins almost every hardware controller, albeit more often via a USB cable than the old 5‑pin DIN. When Apple took over the development of Logic, they attempted to simplify some of its complex MIDI tools under easier‑to‑use graphical interfaces, but the power remains, and the introduction of the MIDI Scripting tool has actually expanded the software’s data processing capabilities. Before we delve into that, though, it’s worth spending some time exploring the MIDI processing power that underpins Logic Pro. The Environment still lurks in the Window menu under the guise of the MIDI Environment, and although many users never venture there, it can still handle complex processing tasks.

To demonstrate this, here’s how I used it to solve a problem I came across when trying to express my inner Keith Emerson.

Transformers

My first love was the Hammond organ, and to play one properly you really need real‑time control of the instrument’s drawbars. I find that a virtual organ through a Leslie speaker actually sounds rather lovely, so I’d decided to buy a digital organ to use purely as a drawbar controller. Foolishly, I didn’t read the organ’s MIDI implementation before I bought the instrument. I’d assumed that each of its drawbars would be allocated a separate MIDI controller number and that moving a drawbar would output values across the 0‑127 data range, as most other controllers do. Had that been the case, it would have been easy to assign them to my software organ’s virtual drawbars. However, this organ uses the same Continuous Controller number for all 16 of its drawbars and gives each one a block of eight values: drawbar 1 outputs values 0‑7, drawbar 2 outputs 8‑15, and so on, so that 16 drawbars × 8 positions fill exactly the 128 values a single controller can carry. Although Logic Pro has some MIDI data transform functions in the Piano Roll and various filters in the MIDI settings, what is really needed here is a way to transform incoming MIDI data in real time. This is where the MIDI Environment comes in.
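The arithmetic behind this shared‑controller scheme is simple enough to sketch. The Python below is a hypothetical decoder (not part of Logic), assuming that drawbar 1 sends 0‑7, drawbar 2 sends 8‑15 and so on: integer division by 8 identifies the drawbar, and the remainder gives its position.

```python
# Hypothetical decoder for a shared-controller drawbar scheme:
# 16 drawbars x 8 positions = 128 values, all carried on one CC number.

POSITIONS_PER_DRAWBAR = 8


def decode_drawbar(value: int) -> tuple[int, int]:
    """Return (drawbar number 1-16, position 0-7) for a CC value 0-127."""
    if not 0 <= value <= 127:
        raise ValueError("CC values are 7-bit (0-127)")
    drawbar = value // POSITIONS_PER_DRAWBAR + 1  # 0-7 -> 1, 8-15 -> 2, ...
    position = value % POSITIONS_PER_DRAWBAR      # offset within that block
    return drawbar, position


for value in (0, 7, 8, 127):
    print(value, decode_drawbar(value))
```

This is exactly the information a software organ cannot recover on its own, which is why each block of eight values has to be split out onto its own controller before it reaches the virtual drawbars.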

Open the Environment and make sure you’re on the Click & Ports Layer, as in Screen 1 (shown above). You’ll see that a Physical Input object is cabled to the Sequencer Input object via a Monitor object named Input View; the latter really helps you see what’s going on when working with MIDI data. If you click on the Input Notes keyboard object (or use any MIDI controller), you’ll see the Input View display the MIDI channel, note, velocity and any incoming controller data derived from judicious knob twiddling. To change incoming data, a Transformer object needs to be placed between the Physical Input and the Sequencer Input.

Robots In Disguise

To do this, first create a Transformer object from the New menu. Click on its name and drag it somewhere useful. You can rename it from the Inspector; in my case, I’ve called it Drawbar 16 as that’ll be the first drawbar I want to address. Now create a couple of Monitors from the same menu, call them Unprocessed and Processed, and move them to the left and right of the Transformer respectively. You can resize these objects by dragging their bottom right corner. If you grab the little arrow at the top of an object, you’ll see it change to a representation of a cable, so drag a cable from the Unprocessed Monitor to the Transformer, and in the same way drag a cable from the Transformer to the Processed Monitor. Next, take the cable that currently runs from the Input View Monitor to the Sequencer Input and drag it to the Unprocessed Monitor instead, then cable the Processed Monitor to the Sequencer Input. MIDI data should now pass through the Transformer and be displayed identically in all Monitors (see Screen 2).

Screen 2: Routing incoming MIDI data through the Transformer object.

I usually like to simulate external MIDI inputs when I’m doing this sort of thing, so I create a Fader object and give it the same settings as my hardware. In this case, Drawbar 16 on the hardware was set to output CC 20 (an ‘unassigned’ number) with a data range of 0‑7 on MIDI Channel 1, so I reproduced this in the Fader’s Inspector (Screen 3). If this Fader is then cabled to the Unprocessed Monitor, moving the slider will display its output data in both Monitors. Now we need to transform this limited incoming range to create the full throw required by the virtual organ’s drawbars.

Screen 3: Setting the virtual fader’s data range.

Screen 4: The Mapped MIDI data.

Double‑click on the Transformer to open the object’s parameters window. What we want to do here is take the CC 20 data coming in on MIDI Channel 1, with its data range of 0‑7, and transform it to CC 21. The MIDI channel will remain unchanged (remember, each drawbar will need its own controller number for the virtual organ), but the data range needs to be expanded to cover 0‑127. The Mode we’ll need is ‘Apply operations and filter non‑matching events’, so that the transform applies only to our specified range and CC number, and any events that don’t match are discarded.

Screen 4 shows the Conditions you’ll need to set, and the Operations section (Screen 5) actually performs the modification via the Mapping feature. This is selected from the Operation pull‑down menu under Data Byte 2. The number at the bottom left of the window is the incoming data value, and the one to its right is the value we want to change it to. As the virtual organ’s drawbars expect a data range of 0‑127 but our hardware only outputs eight data points (0‑7), we’ll have to spread those eight values 18 apart (127 ÷ 7 ≈ 18), so that 0, 1, 2 and so on up to 7 become 0, 18, 36 and so on up to 126.

You’ll see that 0 is mapped to 0 by default. Leave this one alone. Now set the left box to 1 and change the ‘mapped to’ box to 18; then set the left box to 2 and the right box to 36; and so on. You’ll see blue lines displaying the mapping appear on the graph. We’ll also need to change the CC number by setting the Add value in the Data Byte 1 Operations box to 1. If you now move the fader, the Processed Monitor will display values spread across the full range, albeit in steps of 18, and on CC 21.
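For readers who find logic easier to follow than screenshots, the Python sketch below models what this first Transformer does. It is an illustration, not Logic’s implementation: the function name is hypothetical, and it assumes the settings described above (CC 20 on MIDI Channel 1, values 0‑7, filter mode, a Data Byte 1 Add of 1, and a Data Byte 2 map that starts out as identity).

```python
# Sketch of the "Drawbar 16" Transformer: check conditions, then apply operations.
# Assumed settings: filter non-matching events, CC 20 on channel 1, values 0-7.

from typing import Optional

STEP = 18  # 127 / 7 is about 18.1, so the eight positions land 18 apart

# Data Byte 2 map: identity by default, like the untouched Mapping graph
value_map = list(range(128))
for position in range(8):
    value_map[position] = position * STEP  # 0->0, 1->18, ... 7->126


def drawbar16(channel: int, cc: int, value: int) -> Optional[tuple[int, int, int]]:
    """Return the transformed (channel, cc, value), or None if filtered out."""
    # Conditions: all must match, otherwise the event is discarded
    if channel != 1 or cc != 20 or not 0 <= value <= 7:
        return None
    # Operations: channel thru, Data Byte 1 Add 1, Data Byte 2 via the map
    return channel, cc + 1, value_map[value]


for value in range(9):
    print(value, drawbar16(1, 20, value))
```

Note that the top position lands on 126 rather than 127; if you want the last step of the throw, entering 127 by hand as the mapped value for 7 would close that gap.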

Screen 5: MIDI mapping data for the first two drawbars.

The next drawbar also outputs on the same MIDI Channel and CC number, but its data range is 8 to 15. Option‑click on the Drawbar 16 Transformer object and drag it to make a copy. Rename it and change its data range in the Inspector and Transformer window to 8‑15. Next, change the Data Byte 1 Add value to 2 and repeat the mapping procedure, this time mapping 8 to 0, 9 to 18 and so on up to 15 to 126. In this fashion, you can quickly map all 16 drawbars to their own controller numbers (CC 21 to CC 36) and get each to output modified data in real time. You can then save your modifications as a template.
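Extending the same idea to all 16 copies, the sketch below builds a hypothetical bank of Transformers in Python and sends each incoming event to every one of them. It assumes the copies are cabled in parallel from the same source; because each one filters out events that don’t match its conditions, chaining them in series would, in this model, discard everything the first Transformer rejects.

```python
# Sketch of the full bank: 16 Transformers fed in parallel from one input.
# Hypothetical model: Transformer n (1-16) accepts CC 20 values
# 8(n-1) to 8(n-1)+7 on channel 1, adds n to the CC number,
# and spreads its eight positions 18 apart.

from typing import Callable, Optional

STEP = 18
IN_CC = 20
Event = tuple[int, int, int]  # (channel, cc, value)


def make_transformer(n: int) -> Callable[[int, int, int], Optional[Event]]:
    """Build the nth drawbar Transformer as a closure over its own settings."""
    low = (n - 1) * 8
    high = low + 7
    value_map = list(range(128))         # identity by default
    for v in range(low, high + 1):
        value_map[v] = (v - low) * STEP  # low -> 0, ..., high -> 126

    def transform(channel: int, cc: int, value: int) -> Optional[Event]:
        if channel != 1 or cc != IN_CC or not low <= value <= high:
            return None                  # filter non-matching events
        return channel, cc + n, value_map[value]

    return transform


bank = [make_transformer(n) for n in range(1, 17)]


def route(channel: int, cc: int, value: int) -> list[Event]:
    """Send one event to every Transformer and collect whatever comes out."""
    outputs = (transform(channel, cc, value) for transform in bank)
    return [out for out in outputs if out is not None]


print(route(1, 20, 9))
```

In this model each incoming value matches exactly one Transformer, so `route()` returns a single event on the corresponding controller: value 9 (drawbar 2, position 1), for instance, comes out as 18 on CC 22.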

More Than Meets The Eye

I hope this case study has demonstrated how flexible Logic Pro’s MIDI processing tools can be. You’ll have seen that other objects are available in the MIDI Environment’s New menu, including an Arpeggiator, Chord Memorizer and MIDI Delay. Setting these up is almost as complex as using the Transformer, so the welcome introduction of specific MIDI effects plug‑ins in the Mixer was long overdue. These were covered back in the November 2013 issue and are, effectively, simplified ‘front ends’ for Logic Pro’s MIDI tools. However, one of these effects, Scripter, allows the user to create bespoke MIDI processing tools. Part 2 of this workshop will look at how to use it — and how the MIDI Environment will help you debug your creations.