The simple audio‑for‑picture setup of SOS reader Martin Bushell, as featured in Readerzone December 1999. For many applications, Martin's approach of simply playing the video and sequencer manually is perfectly adequate, and there is no need to sync one to the other.

With the growth of digital television and affordable solutions for recording audio to picture, many musicians are keen to get into writing and recording for visual media. In this introduction to the series, Hugh Robjohns investigates basic working methods and suggests some system requirements. This is the first article in a three‑part series.

If you write, record or mix music, it's quite likely that at some point you will want to do it in conjunction with video, computer animation or other moving pictures — and this means addressing the thorny topics of synchronisation and timecode. This article is intended to provide an overview of some of the requirements and practicalities involved in working to pictures, but over the coming months we will also examine some case studies with typical working examples of some of these approaches to see just what is possible, what equipment is required, and how it can be set up to provide the best results.

The Basics

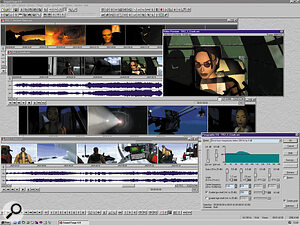

As this example from Nathan McCree and Matt Kemp's soundtrack for Tomb Raider III shows, synchronising audio clips to specific timecode hits in the picture is made easier with software like Sonic Foundry's Sound Forge PC audio editor.

There are many ways of applying sound to pictures (I will concentrate on music here, rather than consider the complexities of dialogue and effects), and the best approach depends on what you want to achieve and how your music will be used. It is perfectly possible to get excellent results with some extremely simple setups. The most sophisticated sound‑for‑picture installations, which are required for frame‑accurate timing, are necessarily complex, but now cost a fraction of what they did a decade ago. There is also a range of intermediate stages between these two extremes, with numerous equipment options and plenty of upgrade paths to follow as your requirements and abilities develop.

The first thing to consider is just how the music is required to relate to the pictures: how tight and accurate does the synchronisation have to be? In many applications, particularly for background music, the requirement is only that the piece should run to an approximate duration — say 29 seconds. There are no specific 'sync points' to hit with a musical effect other than the start and end.

Of course, it helps enormously if you can see the pictures for which your music is intended — partly to get the right feel and mood as you are writing the piece, but also to check the suitability and accuracy of the completed work. This is not essential, and many writers prefer to write 'blind', but it is not usually very difficult to arrange to have a video player and TV in a corner of the studio to view alongside your music system. The fact that the music does not have a precise sync point with the pictures means that hitting the play button on your recorder or sequencer when you see the appropriate picture frame is often good enough to check the results.

Another scenario where this kind of arrangement is perfectly adequate is, strangely, where the requirement is for extremely accurate synchronisation between pictures and sound. Where a strongly rhythmic musical piece is used it is usually necessary to have the picture cuts timed precisely to the beat. Trying to write music to fit ready‑edited pictures would be a complete nightmare, so in this application, as before, it is only necessary to write music to a specified length and tempo. The picture editor will then cut the pictures to fit the music as necessary after the music has been copied into the video editing system.

In both of these scenarios, all that is needed in addition to your existing audio setup is a suitable video player (a domestic VHS machine will suffice quite adequately) and a television. It would be wise to be careful where you put the TV, as the magnetic field from the screen can cause interference on electric guitars and some microphones, and the acoustic noise from the line scanning at 15.625kHz is often picked up by any live mics in the room. It is not uncommon for other electrical interference from the TV to find its way into mixers, amplifiers and signal processors too, so listen carefully when you switch it all on. Try powering the TV and video player from different mains sockets to the audio equipment, and position them as far away from it as possible.

An alternative solution, which is becoming a lot more practical now that hard drives are so large and relatively cheap, is to copy the original video into a computer using a video grabber card and something like the QuickTime system. The picture quality may be relatively poor, but it has the advantage of instant cueing, no need to clutter up the studio with extra equipment and the ability to control the video and audio from the same computer screen — often with reasonably accurate synchronisation too.

Ideally, the original video tape of the pictures you are working with should be supplied with the appropriate timecode visible as a time display on the screen (usually referred to as burnt‑in timecode, or timecode‑in‑vision). This will help in the accurate identification of scenes and for repeatable cueing of the tape. One of the audio tracks of the video will probably have the timecode as an audio signal and the other a rough (mono) mix of whatever live‑action sound may be associated with the pictures, perhaps consisting of dialogue and some effects. It could be useful to arrange for the audio premix track to be fed through your studio monitoring, but beware of getting any crosstalk from this into your music recording! Also watch out for timecode breaking through — the powerful square‑wave signals that make up timecode are pitched in the ear's most sensitive region and have a habit of getting everywhere!

With this kind of equipment setup, all you have to do to check the accuracy and suitability of your music is simply to start it replaying when you see the appropriate timecode number on the screen. The steadily incrementing frame counts make it easy to anticipate the precise start point, and with a little practice you should find you can fire off the audio on the exact second. When you are completely happy with your composition and mix, all you have to do is copy it to a suitable delivery medium (DAT, CD‑R, ADAT, DTRS, or whatever; see the 'Formatted For Dispatch' box) and write a cue sheet stating where you think it should start and how long it runs. The editor or dubbing mixer will subsequently lay it against the pictures and you'll see your name in the credits, hopefully!
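The cue‑sheet arithmetic is simple at 25fps, because every second holds exactly 25 frames. The Python sketch below is a minimal illustration, assuming non‑drop EBU timecode written in the HH:MM:SS.FF style used later in this article; the function names and the example cue points are hypothetical, not taken from any product.

```python
# Minimal sketch: cue-sheet arithmetic for non-drop timecode at 25fps.
# Assumes the 'HH:MM:SS.FF' notation; function names are illustrative only.

FPS = 25  # assumed PAL/EBU frame rate

def tc_to_frames(tc: str, fps: int = FPS) -> int:
    """Convert 'HH:MM:SS.FF' into a total frame count."""
    hms, ff = tc.split(".")
    hh, mm, ss = (int(part) for part in hms.split(":"))
    frames = int(ff)
    if not (0 <= mm < 60 and 0 <= ss < 60 and 0 <= frames < fps):
        raise ValueError(f"invalid timecode: {tc}")
    return ((hh * 60 + mm) * 60 + ss) * fps + frames

def frames_to_tc(total: int, fps: int = FPS) -> str:
    """Convert a total frame count back into 'HH:MM:SS.FF'."""
    ss, ff = divmod(total, fps)
    mm, ss = divmod(ss, 60)
    hh, mm = divmod(mm, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}.{ff:02d}"

def cue_entry(start: str, end: str, fps: int = FPS) -> str:
    """Format one cue-sheet line: start point and running time."""
    duration = tc_to_frames(end, fps) - tc_to_frames(start, fps)
    if duration < 0:
        raise ValueError("cue ends before it starts")
    return f"start {start}  duration {frames_to_tc(duration, fps)}"

print(cue_entry("10:00:05.00", "10:00:34.00"))
# prints: start 10:00:05.00  duration 00:00:29.00
```

Working in whole frames, rather than seconds, keeps every calculation exact; the conversion back to HH:MM:SS.FF only happens when the figure is written on the sheet.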

Making The Sync

MOTU's Digital Timepiece is a sophisticated synchroniser, capable not only of converting video timecode into MIDI timecode for sync'ing a sequencer, but also of generating word clock for synchronising digital audio recorders.

The next stage up is where the sound and pictures have to remain precisely locked in synchronism because there are specific cue points which the music has to hit, and this is where things start to get a little more complex. The normal way to work is to make the video machine the 'master' and the audio equipment the 'slave'. This is principally because timecode — the synchronisation medium — counts video frames and so has to come from the video machine. However, this is not the only way to work, and there are professional video machines which can be slaved to timecode sourced from the sequencer or master recorder, which is often a neater and more flexible way of working. Equally, loading pictures into the computer and controlling them from the sequencer is another good solution — more on that later.

Back to the basic approach first, though. If you have a VHS tape with timecode recorded on one of its audio tracks, this could be used as the timecode master source, with your MIDI sequencing package configured to chase‑lock to the timecode: in other words, to locate to the required timecode point and then run in synchronism with it. Alternatively, a timecode‑equipped audio recorder could chase the video timecode in the same way.

In days of old, separate timecode synchronisers were required to control audio recorders via their remote control ports, but these days manufacturers tend to build optimised chase synchronisers into their flagship machines, which makes life a great deal easier. Timecode DAT machines, DTRS recorders, MO recorders and MIDI synchronisers are all available with the ability to chase timecode directly.

However, the main drawback with this simple chase‑to‑video approach is that any wow and flutter from the video machine (and VHS recorders generally do not have the most stable transports in the universe) will result in speed and timing variations in the chase‑synchronised equipment locked to the timecode it is putting out. And that could mean an unstable and unsatisfactory recording!

Re‑chasing

There are several ways around this problem, depending on the flexibility of the equipment used. One way would be to take the timecode from the video and re‑clock it using a stable timecode generator. However, these are expensive boxes to have to add, and require a stable video reference feed too, so it would probably be more cost‑effective to purchase a second‑hand professional video player (like a discarded Betacam machine). A lot of timecode‑equipped audio machines are provided with 're‑chase' options where the timecode is used as a position guide rather than as a speed controller. This means that any wow and flutter in the timecode from the video player will not affect the speed of the audio, but that overall synchronisation will be maintained, though potentially with less accuracy. Typical re‑chase programs allow timecode errors of up to a second before they do something about it! In practice, this kind of setup works very well.
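To make the difference between chasing and re‑chasing concrete, here is a minimal Python sketch. It assumes, for simplicity, one timecode reading per frame period of the audio machine's own stable clock, and borrows the one‑second tolerance quoted above; the function name, the tolerance and the test data are illustrative assumptions, and real machines will each do this their own way.

```python
# Minimal sketch of 're-chase': incoming timecode is a position guide only.
# The audio advances at its own stable rate, one frame per tick, and is
# relocated only when it drifts too far from the incoming code.

FPS = 25
TOLERANCE_FRAMES = 1 * FPS  # assumed: up to one second of error is tolerated

def rechase(incoming, tolerance=TOLERANCE_FRAMES):
    """Return (audio positions, relocation count) for a list of readings.

    `incoming` holds one timecode reading, as a frame count, per tick of
    the audio machine's own stable clock.
    """
    audio_pos = None
    relocations = 0
    positions = []
    for reading in incoming:
        if audio_pos is None:
            audio_pos = reading  # initial lock-up: locate to the incoming code
        elif abs(reading - audio_pos) > tolerance:
            audio_pos = reading  # error too large: jump to the new position
            relocations += 1
        positions.append(audio_pos)
        audio_pos += 1  # stable internal speed: wow and flutter are ignored
    return positions, relocations

# Wobbly code from a VHS machine (errors of a couple of frames), followed by
# a spool to a new scene.
wobbly = [100 + i + (2 if i % 3 == 0 else -1) for i in range(10)]
positions, jumps = rechase(wobbly + [500, 501, 502])
print(positions)  # [102, 103, 104, 105, 106, 107, 108, 109, 110, 111, 500, 501, 502]
print(jumps)      # 1
```

The small wobbles in the incoming code are simply ignored, while the large jump produces a single relocation. A straight chase‑lock, by contrast, would follow every wobble with a corresponding speed change.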

A typical arrangement might be to feed timecode from a video machine to a recorder such as the Tascam DA78 or Yamaha D24, set to re‑chase. These machines would then provide either re‑clocked timecode or an MTC signal to synchronise a MIDI sequencer. When working without pictures, the sequencer could be used to control the recorder transport via MIDI Machine Control, but when synchronised to the pictures it will be necessary to 'drive' the system from the video machine. This is often rather cumbersome and slow, but is normally effective and reliable.

As already mentioned, an alternative approach might be to transfer the source video and timecode into a video file on the computer, where it may be controlled from the sequencer, with the advantages of instant access to any scene and often much faster sync lockup times. The downside is reduced picture quality (making it hard to read timecode numbers or to see specific action cues) and more clutter on the computer screen!

In next month's article we will take a closer look at the practicalities of setting up a basic audio‑for‑picture system and see what kind of results can be achieved.

Formatted For Dispatch

Once all the issues of synchronisation have been addressed and you are ready to record your music to match the pictures, you have to decide what format to record on to. This is something which should be agreed with the producers of the video, well in advance, and may require discussions with the picture editor and/or dubbing mixer.

Simple non‑sync work, such as background music, or strongly rhythmic music which will be laid before the pictures are cut to it, may be supplied as a completed stereo mix on a non‑timecode medium such as CD‑R, DAT, or even a MiniDisc. Supplying your greatest masterpiece on a reused cassette may not be the best way to enhance your credibility! Personally, I would avoid data‑reduced formats such as MiniDisc if possible, simply because of the unpredictable interaction with subsequent signal processing.

However, for more complex work, it is far better to supply your material on an eight‑track format such as ADAT or, better still, DTRS (the Tascam DA88 format). Any decent dubbing house and post‑production facility will be able to work with DTRS tapes, though there are numerous other formats which may be suitable too, such as MO and hard‑disk formats from Akai, Digidesign, SADiE, Yamaha, Genex and others. One advantage of supplying your work on multitrack is that you can provide a discrete surround sound mix across six channels, along with a straight stereo mix on the last two. Alternatively, you could provide submixes of the various sections of your music (percussion, strings, lead or whatever) so that the final music balance can be performed during the dubbing process, allowing the music to be blended with the live‑action dialogue and sound effects — dubbing mixers often appreciate the flexibility of this approach.

The DTRS format has become something of a de facto standard in the video post‑production world and would be my preferred mastering format for this kind of work, especially now that 24‑bit resolution is available. Of course, timecode is an inherent part of the DTRS format and with the latest generation of machine (the DA78, shown above; see review on page 122), comprehensive timecode and MIDI synchronisation facilities are built‑in, allowing easy interfacing with both a video source and the MIDI sequencing system without breaking the bank.

Video Formats: What You Need To Know

There are two basic video formats to consider: NTSC and PAL. NTSC is the system used in America, among other places, and has 525 picture lines, whereas the 625‑line PAL system is used in the UK as well as in much of Europe. Needless to say, these two systems are totally incompatible with each other! The other major picture carrier to be aware of is good old film, of course — 16mm, super‑16, 35mm and even 70mm formats are still in regular use around the world. But you don't have to rush out and buy a projector: these days it is normal for film to be transferred to a video format for post‑production.

The problem here is the introduction of a host of extra complexities as to the applicable frame rate and correct running speed. This is a vast can of worms which should not be opened by the unwary — particularly if you are working with a digital audio format as well! Timecode, frame rates and sampling rates are all closely interlinked, and getting any element wrong will result in a complete mess. This is an area of the audio map which should be labelled 'Here Be Dragons'!

For the purposes of working with and synchronising audio to pictures, the main issue to resolve is the frame rate — how many picture frames are replayed per second. Synchronisation requires the audio medium and the picture medium to move at the same speed from the same location — something normally achieved with timecode in one form or another.

Longitudinal timecode (the audio variety) comes in three flavours: SMPTE, EBU and the loosely related MIDI (MTC). They all do the same job, albeit in different ways, and have the dual role of identifying each individual picture frame and counting their passage over time. For most practical purposes SMPTE and EBU timecode are one and the same thing: a simple binary code carried as an audio signal composed of alternating square waves at fundamental frequencies of around 1 and 2kHz (exactly 1 and 2kHz at 25fps). MTC carries timing data within the MIDI format and there are plenty of devices available to convert between SMPTE/EBU code and MTC.
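Where do the 1 and 2kHz figures come from? Assuming the standard 80‑bit LTC frame and biphase‑mark coding (the signal changes polarity at every bit boundary, and once more in the middle of a '1' bit), the two fundamentals follow directly from the frame rate, as this short Python sketch shows. The function name is illustrative.

```python
# Rough sketch: fundamental frequencies of longitudinal timecode.
# Assumes the standard 80-bit LTC frame and biphase-mark coding.

BITS_PER_FRAME = 80

def ltc_fundamentals(fps: float) -> tuple[float, float]:
    """Return (frequency for runs of 0s, frequency for runs of 1s) in Hz."""
    bit_rate = BITS_PER_FRAME * fps
    # A run of 0s: one transition per bit, so one full cycle every two bits.
    zeros_hz = bit_rate / 2
    # A run of 1s: two transitions per bit, so one full cycle per bit.
    ones_hz = bit_rate
    return zeros_hz, ones_hz

for rate in (24, 25, 30):
    lo, hi = ltc_fundamentals(rate)
    print(f"{rate}fps: {lo:.0f}Hz and {hi:.0f}Hz")
# prints:
# 24fps: 960Hz and 1920Hz
# 25fps: 1000Hz and 2000Hz
# 30fps: 1200Hz and 2400Hz
```

At 25fps the figures are exactly 1 and 2kHz; at 30fps they rise to 1.2 and 2.4kHz, still squarely in the region the ear is most sensitive to.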

For the sake of completeness, there is another kind of timecode which applies to video machines called VITC — vertical interval timecode. You may have seen it occasionally on television or videos as a row of constantly changing dots and bars along the top edge of the picture screen. The advantage of VITC is that it can be read when the video transport is moving slowly or even when stationary, whereas LTC (longitudinal code) cannot.

The number of picture frames counted per second is critical in achieving synchronisation between different systems. Traditionally, film has a frame rate of 24fps (24 frames per second). However, it is not unusual for films made specifically for television to be shot at a higher frame rate (25 or 29.97fps) to simplify transfer to video, audio post‑production and transmission. The PAL television format replays 25 frames per second, which is around four percent faster than the standard film rate. In America, the original NTSC format was devised, back in the days of black and white television, to run at 30 frames per second. Of course, it is no coincidence that the PAL and NTSC frame rates are each half the frequency of the local mains electricity supply (50 and 60Hz respectively). However, for a series of complicated technical reasons that are not particularly relevant to this article, the Americans had to 'bodge' the frame rate of their NTSC system when they introduced colour, the net result being a reduced average frame rate of 29.97 frames per second!
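These frame‑rate relationships are easy to check with a few lines of Python. This sketch uses exact fractions; 30000/1001 is the exact NTSC colour rate that is usually rounded to 29.97, and the 90‑minute film is simply an illustrative example, not a figure from the article.

```python
from fractions import Fraction

# Minimal sketch: checking the frame-rate relationships with exact fractions.
FILM = Fraction(24)
PAL = Fraction(25)
NTSC_BW = Fraction(30)               # original black-and-white NTSC rate
NTSC_COLOUR = Fraction(30000, 1001)  # exact colour rate, usually quoted as 29.97

MAINS_HZ = {"PAL": 50, "NTSC": 60}
FRAME_RATE = {"PAL": PAL, "NTSC": NTSC_BW}

# PAL runs 25/24 times as fast as film.
speedup = PAL / FILM
print(f"PAL is {float(speedup - 1) * 100:.2f}% faster than film")  # ...4.17%...

# Illustrative example: a 90-minute film replayed at 25fps.
print(f"90 film minutes last {float(90 * FILM / PAL):.1f} minutes at 25fps")  # ...86.4...

# Each system's original frame rate is half its local mains frequency.
for system, rate in FRAME_RATE.items():
    print(f"{system}: {rate}fps = {MAINS_HZ[system]}Hz / 2")

print(f"NTSC colour: {float(NTSC_COLOUR):.5f}fps")  # ...29.97003fps
```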

This is where the confusing 'drop‑frame' term comes from. If you examine the timecode options on a piece of equipment you will typically find 24, 25, 30 and 30DF (or 29.97 or some such variation). The latter is a solution designed to compensate for the timing difference between the 30 and 29.97 frame rates. In general, working within the UK you are rarely likely to need anything other than the 25fps setting, but for the technocrats who really want to know, in drop‑frame codes the seconds still count 30 frames (0 to 29), but the first two frame numbers (00 and 01) are skipped at the start of each minute, except for every tenth minute (minutes 00, 10, 20 and so on). No pictures are actually discarded; only the numbering changes. For nine out of every 10 minutes, the minute turnovers look like this (the transition from 1 hour and eight minutes to 1 hour and nine minutes):

01:08:59.28 (Hours:Minutes:Seconds.Frames)

01:08:59.29

01:09:00.02

01:09:00.03

Notice that the first two frame numbers 01:09:00.00 and 01:09:00.01 are missing. However, on the tenth minute the count runs normally like this:

01:09:59.28

01:09:59.29

01:10:00.00

01:10:00.01

This horribly complicated system ensures that the timecode displayed on the replay machine and the clock on the studio wall maintain synchronism with each other (without the drop frame the timecode would appear to lose 3.6 seconds per hour). Fortunately, timecode‑equipped machines can all handle drop‑frame code without the user having to do anything more complex than select the appropriate mode... which is just as well as this kind of thing can cause a severe loss of hair! As I said before, the good news is that if you are working solely with PAL pictures running at the eminently sensible 25fps frame rate, life is very straightforward and synchronisation is almost trivial given suitable equipment.
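For anyone who wants to see the drop‑frame rule in action, here is a minimal Python sketch that labels a running frame count with 30fps drop‑frame timecode, using this article's HH:MM:SS.FF notation. The function name is hypothetical, and the frame numbers in the usage example were chosen to reproduce the first of the two minute turnovers shown above.

```python
# Minimal sketch: label a running frame count with 30fps drop-frame timecode.
# Frame numbers 00 and 01 are skipped at the start of every minute except
# minutes divisible by ten. No pictures are discarded, only labels.

FRAMES_PER_MIN = 30 * 60 - 2          # 1798 labels in a "dropped" minute
FRAMES_PER_10_MIN = 30 * 600 - 9 * 2  # 17982 labels in each ten-minute block

def frames_to_df(frame_number: int) -> str:
    """Return the drop-frame label 'HH:MM:SS.FF' for a zero-based frame count."""
    tens, rem = divmod(frame_number, FRAMES_PER_10_MIN)
    skipped = 18 * tens  # nine dropped minutes in each completed block
    if rem >= 2:
        # The first minute of a block keeps its 00 and 01 labels; each later
        # minute in the block skips two.
        skipped += 2 * ((rem - 2) // FRAMES_PER_MIN)
    nominal = frame_number + skipped  # position on a plain 30fps count
    ff = nominal % 30
    ss = (nominal // 30) % 60
    mm = (nominal // 1800) % 60
    hh = nominal // 108000
    return f"{hh:02d}:{mm:02d}:{ss:02d}.{ff:02d}"

for n in range(124074, 124078):
    print(frames_to_df(n))
# prints 01:08:59.28, 01:08:59.29, 01:09:00.02, 01:09:00.03 (one per line)

# 108 labels are skipped per hour (two in each of 54 minutes), which at
# 30 labels per second is the 3.6 seconds per hour quoted above.
print(2 * 54 / 30)  # 3.6
```

Running the same function over frames 125872 to 125875 gives the tenth‑minute turnover, 01:09:59.28 to 01:10:00.01, with no labels skipped.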