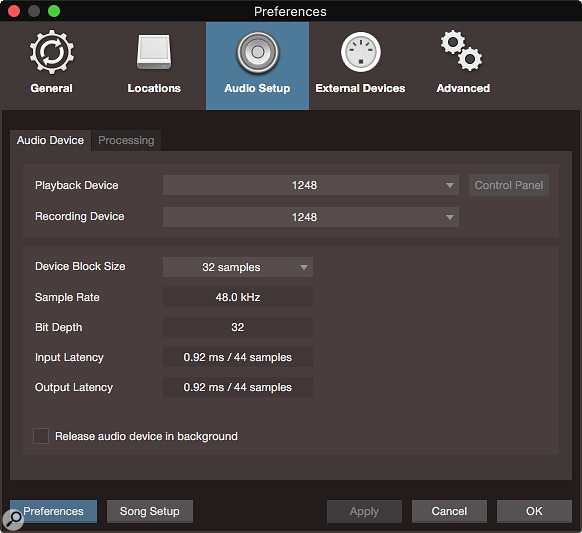

Screen 1: The Audio Device preferences pane. A nice, short, 32-sample buffer is in use here, yielding perfectly workable latencies of less than a millisecond.


With Studio One’s advanced features, latency need not be a problem.

When audio data is moved around, it absolutely must be received and played on time, or bad things happen. In DAWs, this stability is ensured by the use of a buffer, a short-term ‘staging area’ your processor can use like counter space in a kitchen to make processes more efficient. But this buffer turns out to sit at the centre of competing priorities, a fact that has engendered no small amount of confusion around buffer size settings.

The Fundamental Problem

Recording is impacted by buffer size because buffering necessarily introduces latency (delay) in monitoring the source, and monitoring delay is difficult to stomach when recording a performance. It also complicates the use of virtual instruments, which get delayed as well. Small buffer sizes create less delay, so recording is best done using the smallest workable buffer size, but smaller buffer sizes also push your processor harder, and this proves to be the limiting factor in how small a buffer you can use.

In Studio One, the Audio Setup / Audio Device / Device Block Size setting in the Preferences dialogue sets the basic buffer size. For the lowest monitoring latency, set it as small as you can get it without incurring dropouts, glitches or clicks. I usually use 32 samples, or sometimes 64 samples (for high-res, high-track-count situations) when recording. The input and output latencies in milliseconds produced by the current setting are displayed at the bottom of the dialogue. Round-trip delay time is the sum of the input and output latencies.
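
The arithmetic behind those figures is simple: one buffer lasts its size in samples divided by the sample rate. The sketch below assumes that input and output each contribute exactly one buffer. Real drivers typically add converter and safety-buffer delay on top, so the figures Studio One displays will usually be somewhat higher. At 48kHz, a 32-sample buffer works out to roughly 0.67ms each way, which agrees with the sub-millisecond latencies in Screen 1.

```python
def buffer_latency_ms(block_size: int, sample_rate: int) -> float:
    """Time one buffer of `block_size` samples takes to fill or play, in ms."""
    return block_size / sample_rate * 1000.0


def round_trip_ms(input_ms: float, output_ms: float) -> float:
    """Round-trip delay is the sum of the input and output latencies."""
    return input_ms + output_ms


if __name__ == "__main__":
    for size in (32, 64, 512, 1024):
        # Assumption: input and output each add exactly one buffer, no driver extras.
        one_way = buffer_latency_ms(size, 48_000)
        print(f"{size:5d} samples: {one_way:6.2f} ms one way, "
              f"{round_trip_ms(one_way, one_way):6.2f} ms round trip")
```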

This is all lovely, but the Device Block Size buffer is also used for playback of existing tracks and plug-in processing, and, in that context, larger buffers are better because they ease the processor’s workload. My typical buffer size for mixing would be 512 or 1024 samples. Plainly, buffer needs for monitoring and track playback are in fundamental conflict with each other.
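
Why small buffers work the processor harder can be shown with a rough model. Every buffer is a deadline that must be met before the previous one runs out, and each one also carries some fixed cost for servicing it. The 0.1ms per-buffer overhead below is a purely hypothetical figure chosen for illustration; the real cost depends on the driver, operating system and processor. As the buffer shrinks, there are more deadlines per second and each is shorter, so that fixed cost eats a larger share of the available time.

```python
SAMPLE_RATE = 48_000  # assumed session sample rate

# Hypothetical fixed cost (ms) of servicing each buffer, regardless of its size.
# Illustrative only; real values vary with driver, OS and CPU.
PER_CALLBACK_OVERHEAD_MS = 0.1


def callbacks_per_second(block_size: int, sample_rate: int = SAMPLE_RATE) -> float:
    """How many buffers must be processed every second."""
    return sample_rate / block_size


def deadline_ms(block_size: int, sample_rate: int = SAMPLE_RATE) -> float:
    """Time available to process one buffer before it is needed."""
    return block_size / sample_rate * 1000.0


def overhead_share(block_size: int, sample_rate: int = SAMPLE_RATE) -> float:
    """Fraction of each deadline consumed by the fixed per-buffer overhead."""
    return PER_CALLBACK_OVERHEAD_MS / deadline_ms(block_size, sample_rate)


if __name__ == "__main__":
    for size in (32, 64, 512, 1024):
        print(f"{size:5d} samples: {callbacks_per_second(size):7.1f} buffers/s, "
              f"{deadline_ms(size):6.2f} ms deadline, "
              f"{overhead_share(size):6.1%} lost to overhead")
```

Under these assumptions a 32-sample buffer leaves about 15 percent of every deadline to overhead, against well under one percent at 1024 samples.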

Two Solutions

The first approach devised to resolve this conundrum was simply to reset the buffer size smaller when recording and larger when mixing. If you are using a modest or older interface that offers little in the way of bells and whistles or speed, this is the method you will continue to use. It’s a little clunky, but effective enough to have worked successfully for years.

As DSP chips became cheaper, a second solution to the buffer-size paradox emerged: dedicated DSP for monitor mixing, built into the interface itself. Real-time, hardware-based mixing has near-zero latency, so inputs are heard without delay and Device Block Size can be optimised for track playback.

PreSonus’s Studio 192 interface offers onboard DSP and also integrates directly with Studio One, a significant feature for recording workflow. Studio One recognises PreSonus interfaces with onboard DSP or, under Windows, any interface conforming to the ASIO 2.0 DM spec, and makes low-latency hardware-based monitoring available with them. It indicates this in two ways on the Audio Setup / Processing pane of the Preferences dialogue. First, the ‘Z’ to the right of the Audio Roundtrip monitoring latency is coloured blue if hardware monitoring is recognised. Second, the “Use native low-latency monitoring instead of hardware monitoring” option is greyed out unless suitable interface DSP is detected.

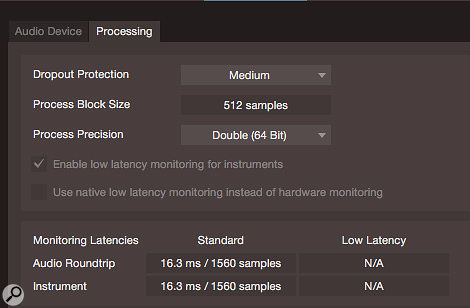

Screen 2: With a 512-sample buffer set for mixing, latency is too high to monitor while recording without either hardware monitoring or native low-latency monitoring. If you needed to add an overdub while mixing a session with lots of tracks and plug-ins, making the buffer smaller could result in glitching.


This last bit can seem confusing at first blush. What’s going on is that if no interface DSP is found, there can be no option to switch between hardware and software monitoring, so that option is greyed out. An interface with DSP opens the possibility of using either kind of monitoring, or even switching between them.

To use hardware monitoring, untick the “Use native low-latency monitoring instead of hardware monitoring” box. You can freely switch back and forth between hardware monitoring and software monitoring, as circumstances may suggest, just by clicking on the ‘Z’ in the Processing pane. Hardware monitoring is available only on outputs designated as cue mixes in the Audio I/O Setup pane, where it appears as a ‘Z’ at the bottom of the channel strip in the mixer. When this ‘Z’ is hollow, low-latency monitoring is not activated and latency is determined solely by Device Block Size. With interface DSP, clicking on the ‘Z’ at the bottom of an output channel strip turns it blue, enabling hardware-based monitoring.
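
The rules for the hardware path can be summarised as a small decision function. This is a simplified model of the behaviour described here, not Studio One’s code, and it deliberately covers only hardware monitoring with the native-monitoring box unticked. The names `ZState`, `cue_mix_z_state` and `native_option_greyed_out` are hypothetical.

```python
from enum import Enum
from typing import Optional


class ZState(Enum):
    HOLLOW = "hollow"  # low-latency monitoring off; latency set by Device Block Size
    BLUE = "blue"      # hardware (interface DSP) monitoring engaged


def native_option_greyed_out(interface_dsp_recognised: bool) -> bool:
    """With no interface DSP there is nothing to switch to, so the option is greyed out."""
    return not interface_dsp_recognised


def cue_mix_z_state(is_cue_mix: bool,
                    interface_dsp_recognised: bool,
                    z_clicked: bool) -> Optional[ZState]:
    """Hypothetical model of the output-channel 'Z' on the hardware path only
    (assumes the native low-latency monitoring box is unticked)."""
    if not is_cue_mix:
        return None  # the 'Z' is offered only on outputs designated as cue mixes
    if interface_dsp_recognised and z_clicked:
        return ZState.BLUE
    return ZState.HOLLOW


if __name__ == "__main__":
    print(cue_mix_z_state(is_cue_mix=True, interface_dsp_recognised=True, z_clicked=True))
```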

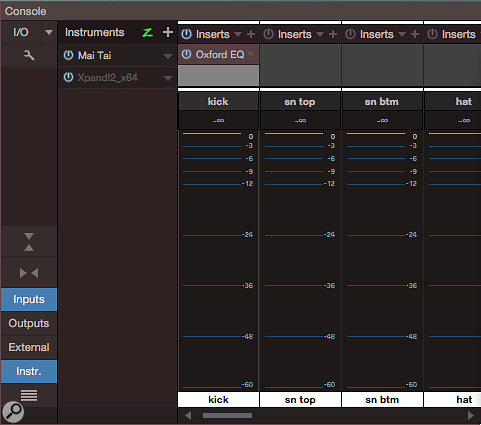

This gives us pretty-darn-close-to-zero latency, plus whatever EQ, dynamics and other processing the interface provides from its DSP. It also means that input signals are no longer run through Studio One on their way to monitoring, so you can’t monitor through plug-ins on the record channel in Studio One’s mixer. You can insert plug-ins on the input channel itself in Studio One, but the signal will be recorded with these effects ‘baked in’, even though you will not hear them while recording, since you are monitoring through the hardware, which precedes the processing.

Screen 3: When using hardware monitoring, latency on the input is of no concern. Here, an EQ is inserted on the input channel, committing that EQ to the recording. When the mixer is in the integrated window, double-clicking the meter on the input channel makes inserts visible.


One interesting technique to try if you have a PreSonus device with onboard DSP is linked hardware/software Fat Channels. Implement a Fat Channel on an interface mixer channel, implement a Fat Channel on the corresponding Studio One mixer channel, and then click the Link button. Now, the Studio One Fat Channel follows changes to controls on the interface Fat Channel. You get Fat Channel processing on your monitors while recording, and then those settings get transferred to the Fat Channel in Studio One so you can work with them after you finish recording.

I have MOTU AVB interfaces with powerful onboard mixing and processing, but they don’t communicate directly with Studio One, so I will never see a blue ‘Z’ for my interfaces. I still use the hardware-based mixing, but I have to operate it from the interface’s control software. Building a cue mix (as opposed to monitoring the main mix) in the interface control software is not much more work than doing it in Studio One. But monitoring while overdubbing is tricky on my system, and would be far more elegant with integrated control of hardware monitoring.

Even without monitoring through effects on the record channel, you can still route a send from the record channel to an effect, generally a reverb or delay, instantiated on a bus or FX channel. This effect return can then be routed to one or more headphone mixes: it will be subject to latency created by the Process Block Size, but for reverbs, that often isn’t a problem.

The Latest & Greatest

Finally, we come to Studio One’s native low-latency monitoring, which arrived in version 3.5. Studio One now implements a dual-buffer system, controlled by the new Dropout Protection parameter. The Dropout Protection setting determines the Process Block Size, the buffer dedicated to track playback and plug-in processing.

With two separate buffers to meet two conflicting needs, Device Block Size can be kept low for minimal monitoring latency, while Process Block Size can be set larger to accommodate playing back lots of tracks without dropouts or glitches. As with Device Block Size, estimated latency times for the Process Block Size buffer are shown in the Monitoring Latencies section at the bottom of the pane.

Screen 4: The Processing preferences pane is where Dropout Protection is configured. Note the green ‘Z’s in the Monitoring Latencies area, showing that the MOTU interfaces are fast enough to provide native low-latency monitoring.


Native low-latency monitoring requires data to be moved quickly between Studio One and the interface, so this mode only becomes available if Studio One determines that your interface is fast enough. With a qualified interface and Process Block Size set larger than Device Block Size, native low-latency monitoring is available, as indicated by the green ‘Z’ to the right of the monitoring latencies. Depending mostly on your processor, very low latencies can be achieved with native low-latency monitoring. As before, ‘Z’ monitoring is available only on cue mixes, and the ‘Z’ on the mixer channel strip should turn green when you click it.
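
The availability rule and the payoff of the dual-buffer scheme can be sketched together. In the sketch below, the `interface_fast_enough` flag stands in for Studio One’s own check of the interface, which is not public, and the dataclass and function names are hypothetical. No particular mapping from Dropout Protection settings to block sizes is assumed; plug in whatever Process Block Size your setting produces.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class ProcessingPrefs:
    sample_rate: int
    device_block_size: int       # small buffer used for monitoring
    process_block_size: int      # larger buffer for playback and plug-ins (via Dropout Protection)
    interface_fast_enough: bool  # hypothetical stand-in for Studio One's interface check


def native_low_latency_available(p: ProcessingPrefs) -> bool:
    """Needs a qualified interface and Process Block Size larger than Device Block Size."""
    return p.interface_fast_enough and p.process_block_size > p.device_block_size


def latencies_ms(p: ProcessingPrefs) -> Tuple[float, float]:
    """(monitoring buffer latency, playback buffer latency) in ms, buffer maths only."""
    def to_ms(samples: int) -> float:
        return samples / p.sample_rate * 1000.0
    return to_ms(p.device_block_size), to_ms(p.process_block_size)


if __name__ == "__main__":
    prefs = ProcessingPrefs(48_000, 32, 512, interface_fast_enough=True)
    monitor, playback = latencies_ms(prefs)
    print(native_low_latency_available(prefs), f"{monitor:.2f} ms", f"{playback:.2f} ms")
```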

One compromise in this mode is that although plug-ins can be used on the record channel, they must not introduce more than 3ms of latency: if a plug-in is safe to use in this role, its ‘power’ button will turn green. Plug-ins that introduce more latency than this are disabled. As before, you can still send from the record channel to an effect on a bus channel, as long as you can bear the latency.
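
Plug-ins usually report their delay in samples, so whether one passes the 3ms test depends on the sample rate. The sketch below applies that limit; the plug-in names and sample counts in the usage block are hypothetical examples, not measurements of real products. A `True` result corresponds to the green power button described above.

```python
MAX_RECORD_CHANNEL_LATENCY_MS = 3.0  # limit for record-channel plug-ins in native mode


def plugin_latency_ms(latency_samples: int, sample_rate: int) -> float:
    """Convert a plug-in's reported delay from samples to milliseconds."""
    return latency_samples / sample_rate * 1000.0


def usable_on_record_channel(latency_samples: int, sample_rate: int) -> bool:
    """True if the plug-in adds no more than 3ms, i.e. it stays enabled."""
    return plugin_latency_ms(latency_samples, sample_rate) <= MAX_RECORD_CHANNEL_LATENCY_MS


if __name__ == "__main__":
    # Hypothetical plug-ins and reported latencies, for illustration only.
    chain = {"Basic EQ": 0, "Look-ahead compressor": 64, "Linear-phase EQ": 2048}
    for name, samples in chain.items():
        state = "enabled" if usable_on_record_channel(samples, 48_000) else "disabled"
        print(f"{name}: {plugin_latency_ms(samples, 48_000):.2f} ms -> {state}")
```

Note that the same 200-sample plug-in would pass at 96kHz (about 2.1ms) but fail at 48kHz (about 4.2ms).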

Latency can also be a downright debilitating problem when playing virtual instruments. The “Enable low-latency monitoring for instruments” tickbox puts that right, but it requires low-latency software monitoring, so ticking the box forces Studio One to use software monitoring.

If all this seems like a lot to absorb, well, it is. It’s all very logical, but it can take a bit of thinking to suss out the best scheme for monitoring while recording and overdubbing, especially given that overdubs can easily happen when one is far down the road mixing. A thought experiment in which you walk through the session and figure out what monitoring is needed at each step can be effective (make notes!), or you can try it in a session (preferably not on a paying client’s time!) and figure it out in action. One thing is clear: low-latency monitoring is the preferable option whenever it is available, whether in software or hardware, and Studio One offers several ways to achieve it. Happy monitoring, everybody!