Key Milestones In Loudspeaker Design

Having mentioned Rice and Kellogg's moving-coil driver patent of 1925 as I started to write the first half of this article, it occurred to me that it would be interesting to conclude this exploration of studio monitor-speaker evolution by attempting to piece together a timeline of this and other key speaker-technology developments. Doing so turned out to be a much trickier and much bigger task than at first it seemed, because one thing I quickly realised on canvassing the opinions of a few speaker engineers and experts was that everyone's perception of what were the 'key' developments, and when exactly they happened, is different! What follows, therefore, is a decidedly personal list, but if anybody wants to chew this over in the SOS Forums I'll be very happy to join in. Meanwhile, without further ado, I give you...

1925: Chester Rice & Edward Kellogg

Chester Rice and Edward Kellogg with the first moving-coil speaker.

While it seems to be generally accepted that Rice and Kellogg first developed and patented the arrangement of bits and pieces that we'd recognise today as a moving-coil driver, there were really numerous other engineers on both sides of the Atlantic working on the idea (Ernst Siemens actually patented a moving-coil transducer in 1874). The first commercial product that employed Rice and Kellogg's invention (branded Radiola) also incorporated an internal valve amplifier. So it could be argued that the first 'modern' speaker was also the first modern active speaker.

1927: The First 'Talkie'

The success of 'talkies' created a demand for better-sounding speakers.

In 1927 the first 'talkie' film, The Jazz Singer, astounded movie-goers. So speakers went from tentative first steps to filling movie theatres with sound in just a few years. The movie business was a significant factor in the early and rapid development of moving-coil speakers.

1930: The Alnico Magnet

Rice and Kellogg's moving-coil driver employed a cumbersome electromagnet (basically a big coil of wire) to energise its voice coil. It worked but it was expensive and impractical. Alnico (an aluminium, nickel, cobalt alloy) permanent magnets arrived in 1930 and almost immediately made the electromagnet redundant in speakers.

1945: End Of The Second World War

World War II fast-tracked many engineering careers, some of which later bore important fruit in terms of loudspeaker technology.

The Second World War is not exactly a technological development, but it was significant nonetheless. The extraordinary rate of radio and radar development during the War meant that when the conflict was over, a large number of electronic engineers, whose training, expertise and careers had been fast-tracked by necessity, were looking for things to do with their skills. This was especially so in the UK and is perhaps a significant reason why this small island later became disproportionately influential in audio engineering. The combination of available engineering talent, post-war optimism, an explosion of entertainment broadcasting, and subsequently LP music sales, meant speaker design as a discipline came of age.

1954: Acoustic Suspension

The Acoustic Research AR-1. Photo: Pat Guiney

For the first few decades of speaker design, drivers would either simply be mounted on flat, open baffles or in enclosures whose size was determined more by gut feel and the demands of a 'nice piece of furniture' than by much in the way of serious electro-acoustic analysis. This was especially true of horn-loaded speakers where, understandably, rather more emphasis was placed on the horn in front of the driver than the enclosure behind it. This all changed with Edgar Villchur's insight that if a driver's mechanical stiffness was low enough, the compliance of the air in a sealed enclosure would become a dominant factor in the driver/box resonant system. This meant that the system's low-frequency cutoff, efficiency, maximum level and power handling would all be defined by the internal volume of the box as well as the driver characteristics. It also meant that low-frequency distortion would not be dominated by drive-unit suspension non-linearity. Edgar Villchur's Acoustic Research AR‑1 was the first such 'acoustic suspension' speaker. Acoustic Research went on to dominate the US hi‑fi sector right up to the late 1960s and Villchur went on to revolutionise the design of hearing aids. He died, aged 94, in October 2011.

1955: Ferrite Magnets

While Rice and Kellogg's drive unit employed an electromagnet to energise its voice coil, and later drive units typically used more practical Alnico permanent magnets, the development of sintered ferrite magnets provided speaker designers with a less expensive and more powerful permanent magnet. Significantly cheaper drive units with higher sensitivity ensued.

1960s: The Transistor Amplifier

The replacement of valves and output transformers with amplifiers based on transistors gifted speaker designers with an order‑of‑magnitude increase in typical amplifier output power. Easy access to more amplifier power meant that the horn loading needed to provide adequate speaker efficiency became unnecessary. So horn‑loaded speakers, certainly in the domestic audio sector, began to disappear, and smaller 'direct‑radiating' speakers, of the kind we know and love, came to the fore.

1961: Loudspeakers In Vented Boxes, by AN Thiele

AN Thiele's paper, Loudspeakers In Vented Boxes.

This publication earns its place in the list at this point because it was first published in 1961, but it received much wider attention when re-published by the Audio Engineering Society a decade later.

1972: Closed-Box Loudspeaker Systems & Vented-Box Loudspeaker Systems, by Richard Small

Closed-Box Loudspeaker Systems & Vented Box Loudspeaker Systems, by Richard Small.

I've lumped these seminal technical papers together, not only because they are connected by the fact that both Neville Thiele and Richard Small were working in Australia when the papers were written, but because together they defined a mathematical basis, derived from classical electrical filter theory (and earlier related work by JF Novak), for the analysis of drivers and enclosures at low frequencies. Before Thiele and Small, the understanding of how the electro-mechanical parameters of drivers interacted with the volume and tuning frequency of an enclosure was approximate at best. System design relied on a good deal of 'suck it and see'. After Thiele and Small, speaker designers could use a set of measured driver parameters (known ever since as the Thiele/Small parameters) to design systems on paper and predict with high accuracy how they would perform in the real world.
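To give a flavour of what 'designing on paper' means, here is a minimal sketch of the widely quoted closed-box relationships that follow from this body of work: the box stiffens the driver's suspension by the ratio of the driver's equivalent compliance volume (Vas) to the box volume, raising both resonance and total Q by the same factor, after which the system behaves as a second-order high-pass filter. The driver figures below are hypothetical, chosen only to give round numbers, and the sketch ignores box losses and any stuffing.

```python
import math


def closed_box_alignment(fs_hz, qts, vas_litres, box_litres):
    """Return (fc, Qtc): system resonance and total Q of a driver in a sealed box."""
    alpha = vas_litres / box_litres      # compliance ratio, driver versus box air
    factor = math.sqrt(1.0 + alpha)      # resonance and Q both rise by this factor
    return fs_hz * factor, qts * factor


def closed_box_response_db(freq_hz, fc_hz, qtc):
    """Magnitude in dB of the second-order high-pass response at freq_hz."""
    x = freq_hz / fc_hz
    magnitude = x ** 2 / math.sqrt((1.0 - x ** 2) ** 2 + (x / qtc) ** 2)
    return 20.0 * math.log10(magnitude)


if __name__ == "__main__":
    # Hypothetical driver: fs = 30 Hz, Qts = 0.35, Vas = 60 litres, in a 20-litre box.
    fc, qtc = closed_box_alignment(30.0, 0.35, 60.0, 20.0)
    print(f"fc = {fc:.1f} Hz, Qtc = {qtc:.2f}")
    for f in (30.0, 60.0, 120.0, 240.0):
        print(f"{f:6.1f} Hz: {closed_box_response_db(f, fc, qtc):6.2f} dB")
```

Halving the box volume in this example raises both the cutoff and the Q, which is exactly the kind of trade-off that previously had to be discovered by building the box and listening.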

1967: The Soft-dome Tweeter

An early soft-dome tweeter.

French OEM drive‑unit manufacturers Audax will forever be identified with the fabric-dome tweeter because it was one of their products, the HD100D25, that became almost obligatory in speaker designs during the late 1970s and '80s. Audax didn't manufacture the first soft-dome tweeter, however. That honour (probably) falls to American Bill Hecht, founder of US speaker company Phase Technology.

1968: The Goodmans Maxim

The Goodmans Maxim.

We all take high-quality small speakers for granted now, but right up until the late 1960s the only speakers capable of anything that we would today consider a reasonable level of performance were on the whole pretty big. That all changed with the first 'mini-monitor', the Goodmans Maxim, designed by Laurie Fincham not long before he left Goodmans to join Celestion.

1969: Thermoplastic Cones

A key feature of the BBC-specified LS3/5a speaker (manufactured by several companies; the one shown was made by KEF) was the rigid but lightweight Bextrene thermoplastic cone.

Apart from a few examples of metal cones, or composites such as Don Barlow's expanded polystyrene/aluminium-foil cone of 1961, bass/mid-range speaker diaphragms were, until the late 1960s, most often based on various types of pressed wood pulp (paper, in other words). That all changed thanks to research into vacuum-formed thermoplastic cones undertaken by DEL Shorter, Dudley Harwood (who went on to found Harbeth) and Spencer Hughes (who went on to found Spendor) at the BBC Kingswood Warren engineering research department. Along with providing significantly different mass, rigidity and self-damping properties compared with paper, the simplicity of the vacuum-forming process meant that experimenting with different cone profiles became rather easier. After appraising numerous different plastics, the BBC engineers plumped for a sheet polystyrene material called Bextrene, and soon began building drivers and systems around it. Commercial speakers with Bextrene‑cone bass/mid drivers soon followed, first from KEF and Rogers, both companies with close commercial links to the BBC, and then subsequently from a number of OEM driver manufacturers. Although Bextrene constituted an advance in speaker engineering, the material was far from perfect. It was relatively heavy and needed a hand-applied coat of PVA glue on its surface to suppress a particularly audible resonance (a characteristic that became known as the 'Bextrene quack'). Bextrene ruled for between five and 10 years before it began to be replaced by polypropylene (once the significant difficulty of reliably adhering polypropylene to the voice coil and outer surround was overcome). Polypropylene, often reinforced with a variety of fillers, is still today probably the most commonly used thermoplastic cone material.

1971: Laser Interferometry

Celestion's paper on laser interferometry.

In 1982 Celestion launched an innovative hi‑fi speaker called the SL6. It deployed one of the first 'modern' metal-dome tweeters, one that employed copper pressed (literally) into service as both diaphragm and voice-coil former, and a thermoplastic-coned bass/mid driver with an unusual dust cap and surround design. Both drivers had been developed using laser interferometry. Laser interferometry scans a laser across a moving surface and analyses the Doppler shift in the frequency of the reflected light to derive the detail of the movement. Using laser interferometry, Celestion could create slow-motion 3D animations of the movement of driver diaphragms and so optimise their mechanical design. It was a brilliant application of technology and Celestion's marketing department understandably made a big fuss about it. However, it wasn't entirely new: Peter Fryer at Wharfedale in 1971 had used the same technique to help inform the design of a new thermoplastic‑coned mid-range driver.
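For a sense of the numbers involved, the sketch below estimates the Doppler shift of laser light reflected from a vibrating diaphragm. It uses the standard low-velocity relationship for light reflected straight back from a moving surface (shift = 2 × velocity / wavelength). The helium-neon wavelength and the excursion figures are assumptions for illustration only, not details of the Celestion or Wharfedale equipment.

```python
import math

# Assumed light source: a helium-neon laser (632.8 nm). Illustrative only.
HENE_WAVELENGTH_M = 632.8e-9


def peak_velocity(freq_hz, peak_displacement_m):
    """Peak velocity of a surface moving sinusoidally at freq_hz."""
    return 2.0 * math.pi * freq_hz * peak_displacement_m


def doppler_shift_hz(velocity_m_s, wavelength_m=HENE_WAVELENGTH_M):
    """Frequency shift of light reflected back along the beam from a moving surface."""
    return 2.0 * velocity_m_s / wavelength_m


if __name__ == "__main__":
    # Hypothetical case: a dome moving 10 micrometres peak at 1 kHz.
    v = peak_velocity(1000.0, 10e-6)
    print(f"peak velocity: {v * 1000:.1f} mm/s")
    print(f"peak Doppler shift: {doppler_shift_hz(v) / 1000:.0f} kHz")
```

Even a few micrometres of movement produces a shift in the hundreds of kilohertz, which is comfortably measurable and explains why the technique can resolve such small diaphragm motion.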

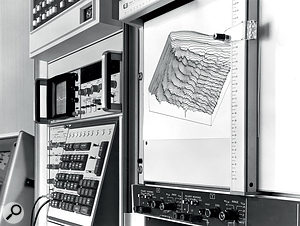

1972: Time-domain Measurement

Mainframe computers in the early 1970s made possible accurate measurement of the time-domain characteristics of a speaker using impulse responses and Fourier transforms.

Until the 1970s, speaker acoustic testing was invariably done by feeding the speaker a sine-wave, swept slowly from low to high frequencies, and using a calibrated microphone to 'measure' the output. The output of the microphone would be fed via a preamp to a chart recorder and the speed of the paper through the chart recorder would be sync'ed to the speed of the sine-wave sweep. Using chart paper pre-printed with frequency and level calibrations, a nice neat frequency response curve would be spat out of the recorder. A swept filter in the preamp made measuring harmonic distortion perfectly feasible too. Compared with these enlightened times, it was all a bit Heath Robinson, but it worked beautifully. Swept sine-wave measurement has two big problems, however. First, it requires an anechoic chamber; a very large one too, for any degree of accuracy at low frequencies. And second, swept sine-wave measurement can tell you very little about a speaker's time-domain performance.

The arrival of cost-effective mainframe‑based computational power in the late 1960s didn't go unnoticed by loudspeaker engineers. One in particular, Laurie Fincham, now at KEF, realised in the early 1970s that computational power made possible recording a speaker's response to a very short impulse, and then applying a Fourier transform. A Fourier transform is a computationally intensive mathematical technique that effectively translates between time and frequency domains. Its application to a speaker impulse response could generate not only the speaker's steady-state frequency response, but also its frequency response at any moment in time after the impulse was over. Furthermore, because the discrete acoustic reflections from wall and ceiling could be 'deleted' from the impulse response, an anechoic chamber was no longer necessary. The familiar 'waterfall' plot, that illustrates how speakers, far from being obedient slaves of power amplifiers, go on playing after the music has stopped, was a revelation. It was as if somebody had photographed the dark side of the Moon.
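The idea of 'deleting' reflections can be sketched in a few lines. The example below is a toy illustration, not a reconstruction of the KEF system: it builds a hypothetical impulse response consisting of a perfect direct impulse plus a single half-amplitude reflection, then compares the frequency response computed from the whole record with the response computed after zeroing everything from just before the reflection onward. A plain discrete Fourier transform is used for clarity; the sample counts and reflection level are arbitrary assumptions.

```python
import cmath
import math


def magnitude_db(samples, n_bins):
    """Return the magnitude in dB of the first n_bins DFT bins of a real sequence."""
    n = len(samples)
    result = []
    for k in range(n_bins):
        total = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(samples))
        result.append(20.0 * math.log10(max(abs(total), 1e-12)))
    return result


def gate(samples, gate_end):
    """Zero every sample from index gate_end onward (a rectangular time window)."""
    return [x if i < gate_end else 0.0 for i, x in enumerate(samples)]


# Hypothetical measurement: an ideal direct impulse plus one wall reflection.
N_SAMPLES = 256
REFLECTION_INDEX = 40
impulse_response = [0.0] * N_SAMPLES
impulse_response[0] = 1.0                 # direct sound from the speaker
impulse_response[REFLECTION_INDEX] = 0.5  # later arrival at half amplitude

raw_db = magnitude_db(impulse_response, N_SAMPLES // 2)
gated_db = magnitude_db(gate(impulse_response, 30), N_SAMPLES // 2)

print(f"ripple with reflection:   {max(raw_db) - min(raw_db):.1f} dB")
print(f"ripple after time gating: {max(gated_db) - min(gated_db):.1f} dB")
```

With the reflection left in, the response shows comb-filter ripple of roughly 9.5dB; with it gated out, the toy speaker's response is flat. The price of gating is that a shorter time window limits how low in frequency the result can be trusted, which is why a reasonably large room still helps.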

1975: The Yamaha NS1000

The Yamaha NS1000 is a prime example of the importance of materials science developments to improvements in speaker design.

Maybe it's just an expression of my unhealthy interest in speakers, but it seems to me there's an irony in the comparison of the Yamaha NS1000 and its near contemporary the NS10. The NS10 was just about as ordinary a speaker as it was possible to design, yet it went on to have influence far beyond its technological worth. The NS1000, on the other hand, was two decades ahead of the curve in terms of technology, yet it left no more than a ripple in audio history. The designers of the NS1000 realised, as do most speaker designers, that if you really want to make a significant technological advance you need to find a new diaphragm material. So they trawled through the light and rigid end of the periodic table and settled on beryllium, choosing to ignore its potential toxicity and the fact that the vapour deposition techniques required to manufacture viable diaphragms from it were truly the stuff of rocket science. They ploughed on regardless, and created not only a speaker that to these ears is still exceptionally good, but one that, long after it had faded from view, helped inspire the engineers at, among others, Focal, Bowers & Wilkins and Accuton, when they once again looked for new diaphragm materials down at the light and rigid end of the periodic table.

1976: The First Active Speaker

The Meyer Sound HD‑1: not the first active speaker, but probably the first to significantly influence the world of studio monitoring.

Rice and Kellogg's Radiola wasn't really the first active speaker, or at least not in the sense that a modern active speaker employs multiple power amplifiers and crossover filters operating at line signal level. It is, however, not easy to pin down the identity of the first active speaker of the style we see so often today. The BBC used monitors with power amps built into the enclosure as early as the 1960s. One, for example, the LS3/7, integrated a Quad 303 stereo power amplifier, with one channel driving the bass unit and one driving the tweeter. The LS3/7 wasn't a commercial product, however, and can't really be said to have had much influence.

So the speakers I'll nominate as the first 'modern' active speakers are ones I've already mentioned: the Philips RH range of 'motional feedback' speakers of 1976. Having taken the decision to put some complex feedback and correction electronics in the cabinets, Philips really had no choice but to put the power amps in there too — active speakers as we know them were born. Yet, while the RH544 was reportedly used to mix a few high-profile records (not least Pink Floyd's The Wall), the RH series didn't really have a lasting influence in the pro-audio sector. The active speaker that takes that honour is probably the Meyer Sound HD‑1 (1990).

1978: The Yamaha NS10

I'm breaking a promise here by writing about the NS10 — again — but in the same way that the iconic white-coned speaker is still almost impossible to avoid in recording studios, it's also impossible to overlook in a list of key speaker developments. Having said that, it'd be hard to argue that there were any 'developments' inherent in the NS10 itself. It was, and is, technically unremarkable — only its curled and joined bass/mid driver cone was unusual (its lack of a reflex port is unusual now, but it wasn't at the time). Definitely more by accident and circumstance than by design, however, the NS10 arguably gave birth to an entire class of product: the compact nearfield monitor. The NS10 to some extent also influenced working practices in music recording studios. If you want to know the full story of the NS10 accident and circumstance, this previous SOS article of mine is worth exploring: www.soundonsound.com/reviews/yamaha-ns10-story.

1982: Neodymium-iron-boron Magnets

'Rare-earth' magnets (christened 'rare-earth' not because the elements from which they are made are particularly rare, but because deposits in the Earth's crust are geologically difficult to exploit) were developed in the 1970s, with samarium-cobalt the first to make an impact on manufacturing industry (primarily I believe in the compact but powerful electric motors that gave cars electric windows). Neodymium-iron-boron (NdFeB) came a little later but was almost immediately embraced by speaker designers. Though significantly more expensive than ferrite magnets, NdFeB magnets are inherently far more powerful, so the size and weight of magnet necessary to energise a voice coil is very much reduced. To the benefit of everything from enclosure panel resonance to shipping weight, speakers with NdFeB magnets immediately lost the heavy lump of magnet hanging off the back of their chassis. NdFeB magnets also made possible the design of very small high-frequency drivers which, in turn, made possible KEF's development in the late 1980s of a new type of dual-concentric driver (a dual-coincident), in which a very small tweeter was located on the end of the bass/mid driver pole piece (where the dust cap would normally be found). In an alternate universe, where KEF's patent application for their design wasn't granted, the dual-coincident driver topology would quite possibly be in my list of key developments. In this universe however, where the patent was granted, further development of the idea outside KEF was stifled for a couple of decades. As witnessed by the recently introduced Pioneer RM series of active monitors, dual-coincident drivers are beginning to appear, but thanks to later KEF developments and patents the technological territory is still somewhat restricted.

Now that I've raised the subject of dual-concentric drivers, honourable mention must go to the Tannoy dual-concentric driver design (by Ronald Rackham) of 1948 that, from the 1960s to the '80s, was found almost as often in studios as the NS10 is now. The Tannoy dual-concentric driver is very different from the KEF dual-coincident concept. The small size of an NdFeB magnet enables the high-frequency element in a KEF driver to be positioned right at the neck of the bass/mid diaphragm in front of the bass/mid driver magnet. In the Tannoy dual-concentric design, however, the high-frequency driver is located on the back of the bass/mid driver magnet and 'fires' through a hole in the magnet pole piece. The profile of the hole in the pole piece, which blends seamlessly with the profile of the bass/mid diaphragm, forms an effective horn for the high-frequency driver that increases its radiation efficiency and controls its dispersion.

1985: Medium Density Fibreboard (MDF)

MDF: not something to set pulses racing, but its qualities nonetheless had a positive impact on affordable speaker cabinet construction.

I can't be certain of this, but I'd place a bet that Rice and Kellogg's Radiola speaker cabinet was made of plywood. Plywood was the material of choice for at least the first half of the 20th Century for the manufacture of pretty much anything that needed easily machined and joined sheet material, from wardrobes to aircraft. And then, literally cheap as chips, chipboard came along and furniture became disposable (if it didn't fall apart first!). High-quality plywood (birch plywood in particular) is actually a fabulous material from which to make a speaker cabinet. It is relatively light, very rigid and its laminate nature provides an element of self-damping. But high-quality plywood is expensive, and that's where MDF came in. MDF is not only more rigid and more homogeneous than chipboard, it can also be machined far more accurately and finished to a much higher quality. MDF can even be curved (by machining and post-filling parallel slots in the inside surface) or sanded to a profile. Of course, speaker enclosures are still, on the whole, rectilinear, but many of the curves we do get to see these days were made possible by MDF.

1988: The MLSSA Acoustic Measurement System

MLSSA made measuring a speaker's time-domain response possible with pretty much any PC.

The development of computer-based time-domain analysis techniques at KEF in the 1970s was the beginning of the end for traditional swept sine-wave speaker measurement, and it wasn't long before others got in on the act. The Time Delay Spectrometry technique devised by Richard Heyser in the USA was, for example, soon packaged up into the relatively affordable stand-alone TEF acoustic measurement system. The real sea-change occurred, though, when Douglas Rife took Stanley Lipshitz and John Vanderkooy's Maximum Length Sequence technique and created a comprehensive DOS-based acoustic analysis package, christened MLSSA (pronounced Melissa), that could run on almost any PC. Suddenly, immensely complex time‑domain-based acoustic measurement was available to anybody with a PC and a couple of thousand dollars. My own career in speaker design incorporated a break from 1987 to 1990. In 1987 the industry was still predominantly one of swept sine waves. In 1990, the swept sine wave was history and MLSSA was king (or should that be queen?).

The MLSSA story is actually not entirely positive, though. With analogue swept-sine measurement systems it was a piece of cake to quickly measure harmonic and intermodulation distortion. With MLSSA, distortion measurement is rather more difficult and the results it provides offer less immediate insight. MLSSA can easily measure distortion at spot frequencies, but the very nature of distortion mechanisms means that distortion products can vary significantly with frequency. So spot frequency measurement is no good because the risk that you will miss something significant is high. The result of this missing weapon in the MLSSA armoury is that speaker distortion measurement began to be given much less significance than it once was. And call me a gibbering old fool if you will, but I think speaker distortion characteristics are a significant performance indicator!
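For anybody curious about what a maximum-length sequence (MLS) measurement involves, here is a minimal Python sketch of the general idea. It is emphatically not MLSSA's actual implementation: the 7-bit shift register, the feedback taps, the helper names and the four-sample 'pretend speaker' are all my own illustrative assumptions. The trick it shows is that an MLS has an almost perfectly impulsive circular autocorrelation, so cross-correlating what comes back from the system with the sequence that went in yields the system's impulse response.

```python
def mls(nbits=7, taps=(7, 6)):
    """Return one period (2**nbits - 1 samples) of an MLS as +1/-1 values.

    The taps must correspond to a primitive polynomial; (7, 6) is a
    commonly tabulated choice for a 7-bit register (an assumption here).
    """
    state = [1] * nbits                      # any non-zero start state will do
    seq = []
    for _ in range(2 ** nbits - 1):
        seq.append(1 if state[-1] else -1)   # output the oldest bit
        feedback = 0
        for t in taps:
            feedback ^= state[t - 1]         # XOR the tapped bits
        state = [feedback] + state[:-1]      # shift the register along
    return seq


def circular_convolve(signal, impulse_response):
    """Steady-state output of a linear system fed a repeating signal."""
    n = len(signal)
    return [
        sum(h * signal[(i - m) % n] for m, h in enumerate(impulse_response))
        for i in range(n)
    ]


def circular_cross_correlate(output, stimulus):
    """Correlate the recorded output with the stimulus at every lag."""
    n = len(stimulus)
    return [
        sum(output[(k + lag) % n] * stimulus[k] for k in range(n))
        for lag in range(n)
    ]


def measure_impulse_response(true_response, nbits=7, taps=(7, 6)):
    """Estimate an impulse response (shorter than one MLS period)."""
    stimulus = mls(nbits, taps)
    n = len(stimulus)
    recorded = circular_convolve(stimulus, true_response)
    corr = circular_cross_correlate(recorded, stimulus)
    # The MLS autocorrelation is n at zero lag and -1 at every other lag,
    # which leaves a small constant offset; adding sum(corr) removes it.
    offset = sum(corr)
    return [(c + offset) / (n + 1) for c in corr]


if __name__ == "__main__":
    pretend_speaker = [0.0, 1.0, 0.5, -0.25]   # hypothetical impulse response
    estimate = measure_impulse_response(pretend_speaker)
    print([round(v, 6) for v in estimate[:6]])
```

A real measurement would, of course, use a far longer sequence, a fast transform rather than brute-force correlation, and a real microphone signal complete with noise and non-linearity. It is that non-linearity, as discussed above, that an MLS handles rather less gracefully than a swept sine.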

1995: Wolfgang Klippel

Wolfgang Klippel put detailed distortion measurement into every speaker designer's toolkit.

The distortion measurement hole in the MLSSA armoury (see above) was filled by a German electro-acoustics engineer and academic, Wolfgang Klippel. From the mid-1990s onward, first as an academic exercise and then as a commercial suite of products, Klippel gave speaker engineers a set of hardware and software tools that enable extraordinarily detailed driver distortion measurement and analysis. The driver design optimisation that 'Klippel analysis' enables can have a significant bearing on performance, and Klippel products are now used extensively in speaker R&D the world over.

Late 1990s to 2019: Digital Signal Processing (DSP)

It's not easy to put a date on the first example of digital signal processing (DSP) having a direct influence on speaker technology, or even to say which manufacturer or manufacturers should be credited with being first. My best guess, however, would be the late 1990s, with Genelec in the pro sector and Meridian in the hi‑fi sector being among the first. To begin with, DSP in speakers comprised little more than the addition of an S/PDIF or AES digital input and, perhaps, active filtering and EQ being handled in the digital rather than the analogue domain. Next up was DSP-based room optimisation, such as Genelec's SAM/GLM system and more recently the Sonarworks app. But I suspect that the most significant applications of DSP in speaker engineering have really only just come about: the Kii Three monitor, Trinnov's stand‑alone optimisation systems, the Bang & Olufsen Beolab 90 and 50 home speakers, and the 'smart speakers' from Amazon, Apple and Google.

Many thanks to Chris Binns, Laurie Fincham (THX), Mike Gough, Phil Knight, Philip Newell, Pete Thomas (PMC) and Billy Woodman (ATC) for their help, thoughts and contributions to this piece. Of course, as I suggested at the outset, none of them will necessarily agree with or endorse everything I've written... but hey, that's half the fun!

Notes

1. Arthur C Clarke once wrote that "any sufficiently advanced technology is indistinguishable from magic."

2. What if there's no microphone, you might reasonably ask? Well in this case, and perhaps delving into philosophy, the beginning of the workflow must be the point at which the creative impulse is externalised.

3. Imagine if we lived underwater. Speaker design, at least in diaphragm radiation terms, would be very much easier because the density of the radiation medium would be far closer to the density of the radiating diaphragm. Whales and dolphins can 'throw' their voices vast distances for this very reason.

4. The sE/Munro Egg was by no means the first speaker to go down the egg-shaped route. For example, who remembers the Blue Room Minipod, reviewed a very long time ago in this very publication (they're actually still around: www.podspeakers.com)? Or the Fujitsu Eclipse, heavily endorsed by Brian Eno, among others. French hi‑fi company Cabasse have the extraordinarily complex and all-but-spherical, four-way, concentric-driver, high-end hi‑fi speaker, La Sphère. And for many years Genelec have knocked the edges off many of their monitor enclosures.

5. One way of visualising a reflex port is to see it as a device that, over a narrow bandwidth (defined by the tuning frequency of the port), reverses the phase of the rear radiation of the driver. Reversed rear radiation reinforces the front radiation from the driver and effectively increases its efficiency.

6. If you see a reflex-loaded speaker without generous flaring at the exit of the port, your best quizzical look is appropriate.

7. I've written 'measurement' in inverted commas because the term implies that some parameter is accurately quantified; something that measuring a monitor's performance in a room can never achieve. If, for example, you use a single microphone position, you'll have an accurate measurement of the monitor's performance at that location — but nowhere else. So your subsequent DSP correction will be viable at that location, but potentially nonsense a short distance away — where it will quite possibly make things sound worse. Alternatively, you can measure the monitor's performance in the room at multiple positions and base your correction on an average. But of course the more measurement positions used, the more the average will flatten and the correction 'curve' will be unable to fix anything but the most gross errors — for which acoustic treatment in the room, or the Kii approach of making a monitor that 'sees' less of the room, is perhaps a more sensible option.

8. I have two theories as to why studio-monitor manufacturers are conservative with driver technology. One is that the whole pro-audio sector seems instinctively careful when it comes to the bits of hardware that are fundamental to making a living. It's an instinct that provides a lifeline for the NS10 and perhaps is the same instinct that leads guitarists and bass players still to rely so much on instruments designed by Leo Fender in the 1950s. My second theory is rather more prosaic: money. Speaker drivers of conventional design have become pretty much commodity items, where price is driven to a great extent by supply chain and manufacturing factors. Add to this the hugely price-competitive nearfield monitor market and you have a recipe for the use of conventional drivers every time.