For most of us, compression is a necessity when capturing and editing footage, but the range of 'codecs' can be bewildering. Find out what you need to know with our in‑depth guide.

Our two-part series, 'Making Movies', which appeared in the May and June 2010 issues of Sound On Sound, outlined the basic technology and technique behind making videos, and covered some of the issues around cameras, compression and codecs. This month, we'll get down to some of the real nitty-gritty of video production: what technology and type of compression should you use at each stage of the job?

There's a fundamental difference between video production and audio production, in that even quite modest audio kit can work with full‑quality, uncompressed audio files. Given due respect, excellent results can always be achieved. Video is different. Compression of the video signal is an unavoidable fact for all but the highest‑budget feature film work (particularly for HD video, whose uncompressed data rate is around 2.05Gbps), and it will have an impact on the final picture quality. Choosing the right kit and workflow pays dividends.

There are two phases of video production that we need to consider: how the material is shot or acquired, and how it's edited and post‑produced. I'll look at each separately.

GOPs, Macroblocks & Wavelets

Before explaining the individual codecs, it's probably best to reiterate exactly how different types of compression work. Video compression comes in two basic flavours, intraframe and interframe, names so similar they are often confused with each other.

Intraframe (also known as I-frame) compression is compression calculated on an individual frame of video. This happens in the frame‑based editing codecs used by Avid (DNxHD) and Apple Final Cut Pro (ProRes 422) and also on specific frames, called I-frames, of the MPEG‑based interframe compressions used by many cameras. Each frame is effectively considered as a still image, and is JPEG compressed.

This process involves dividing the whole frame into blocks of pixels, called 'macroblocks'. The size of a macroblock depends on the type of compression, but is normally 8x8 pixels. The data of the pixels in the macroblocks is then compressed using a mathematical process called the Discrete Cosine Transform, or DCT. Don't worry, I'm going to spare you the details of this, but the important point is that it's the precision of the DCT that determines the amount of compression, and therefore the quality of the result.
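For readers who do want a glimpse of the details, here is a minimal Python sketch of the idea, not the code of any real codec. It transforms one 8x8 block with a textbook DCT and then rounds the coefficients to a chosen step size. Treating 'precision' as a rounding step is an assumption made for illustration, and the function names (`dct_2d`, `quantise`, `nonzero_count`) are hypothetical.

```python
import math

N = 8  # block size, as described in the text


def dct_2d(block):
    """Forward 2D DCT-II of an N x N block, with orthonormal scaling."""
    def scale(k):
        return math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)

    out = [[0.0] * N for _ in range(N)]
    for u in range(N):
        for v in range(N):
            total = 0.0
            for x in range(N):
                for y in range(N):
                    total += (block[x][y]
                              * math.cos((2 * x + 1) * u * math.pi / (2 * N))
                              * math.cos((2 * y + 1) * v * math.pi / (2 * N)))
            out[u][v] = scale(u) * scale(v) * total
    return out


def quantise(coeffs, step):
    """Round each coefficient to a whole number of steps.

    A larger step means lower precision: more coefficients collapse
    to zero, so there is less data to store but more detail is lost.
    """
    return [[round(value / step) for value in row] for row in coeffs]


def nonzero_count(quantised):
    """Number of coefficients that still carry information."""
    return sum(1 for row in quantised for value in row if value != 0)


# A smooth left-to-right gradient, typical of sky or a plain wall.
block = [[16 * y for y in range(N)] for _ in range(N)]
coeffs = dct_2d(block)
print("fine precision keeps", nonzero_count(quantise(coeffs, 1)), "coefficients")
print("coarse precision keeps", nonzero_count(quantise(coeffs, 50)), "coefficients")
```

The coarse setting always keeps the same number of coefficients or fewer, which is where the saving in data comes from.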

Another method of compression that doesn't involve dividing the image into macroblocks is wavelet compression. Wavelet compression can be thought of as a 'circular' compression, using a convolution transform. Unlike MPEG or JPEG compressions, wavelet compression compresses the image using overlapping circular 'pools'. The result is a more subtle degradation of the image than with macroblock compression. As computers have increased in power, wavelet compression has become more viable, and examples of it include Red's Redcode, Cineform's NeoScene and JPEG 2000.

Interframe compression is compression that compares frames of video with each other. In many scenes there is relatively little change in content from one frame to the next, making great efficiency of compression possible by only storing the frame‑to‑frame differences, rather than the frames themselves. This is the basis of MPEG compressions. The number of frames involved depends on the compression system, but is often around 12 (as in MPEG2 DVD) to 15 (HDV). This collection of frames is known as a GOP (Group Of Pictures), and GOPs of this length are typical of so‑called 'long‑GOP' compressions.
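The principle of storing only frame‑to‑frame differences can be illustrated with a toy Python sketch. It treats a frame as a flat list of pixel values and records just the pixels that changed. Real MPEG encoders use motion‑compensated prediction rather than a simple pixel comparison, so this is only an illustration, and the function names are hypothetical.

```python
def frame_difference(previous, current):
    """Return only the pixels that changed, as {pixel_index: new_value}."""
    return {
        index: new
        for index, (old, new) in enumerate(zip(previous, current))
        if old != new
    }


def apply_difference(previous, difference):
    """Rebuild the current frame from the previous frame plus the stored changes."""
    frame = list(previous)
    for index, value in difference.items():
        frame[index] = value
    return frame


# One row of pixels: a bright object moves one pixel to the right.
frame_a = [10, 10, 10, 200, 200, 10, 10, 10]
frame_b = [10, 10, 10, 10, 200, 200, 10, 10]

difference = frame_difference(frame_a, frame_b)
print(len(difference), "of", len(frame_b), "pixels need storing")
print(apply_difference(frame_a, difference) == frame_b)
```

The less a scene changes between frames, the smaller the stored difference becomes.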

A GOP starts and ends with an I-frame, a conventional JPEG‑compressed frame. These are the 'known quantities', compressed only from the data of that frame. In between lie two different types of predicted frames, the frames that carry only difference data. These are called P-frames and B-frames. P-frames are predicted from the I-frame at the beginning of the GOP, and B-frames are bidirectional frames created by comparing the data from both the start and end I-frames. As an example, the GOP structure of MPEG2 is IBBPBBPBBPBBI.
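The example structure can be generated with a few lines of Python. This is a sketch that assumes the common layout of one I-frame followed by P-frames separated by a fixed number of B-frames; `gop_pattern` is a hypothetical helper, not part of any encoder.

```python
def gop_pattern(length, b_frames):
    """Frame types for one GOP in display order.

    Assumes an I-frame first, then P-frames separated by runs of
    `b_frames` B-frames, as in the example given in the text.
    """
    types = []
    for position in range(length):
        if position == 0:
            types.append("I")
        elif position % (b_frames + 1) == 0:
            types.append("P")
        else:
            types.append("B")
    return "".join(types)


# A 12-frame GOP, followed by the I-frame that starts the next GOP.
print(gop_pattern(12, 2) + "I")
```

Calling `gop_pattern(15, 2)` gives the 15-frame layout mentioned above for HDV.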

Acquisition Formats

It's in the camera itself that the first and most important compression happens. Lose too much quality here and no amount of primping in post-production will put things right. There are more different competing formats than you can shake a stick at, with more arriving all the time. Here's a quick tour of the major contenders:

AVCHD

With AVCHD camcorders starting at around $300, AVCHD could be called the bottom of the HD food chain, and is aimed at the 'prosumer' end of the market. The camcorders commonly store the video they shoot onto SDHC flash memory cards, although some also have on‑board hard disks. AVC itself stands for Advanced Video Coding, and is the core technology behind the HD revolution in consumer video. Got a Blu‑Ray player? The discs have AVC‑compressed video on them. Got Sky HD? It's AVC compression you're watching. But while Blu‑Ray can have a data rate of up to 36Mbps and Sky Sports HD is around 20Mbps, many consumer AVCHD cameras max out at around 17Mbps. AVCHD is fundamentally an MPEG4, long‑GOP compression, so it's very efficient and squeezes very good pictures from these meagre data rates, but even so, such low bit‑rate AVCHD is best left to videoing the family.

AVCCAM & NXCAM

Panasonic's AVCCAM and Sony's NXCAM formats use long‑GOP AVC compression. They can shoot at up to 24Mbps, onto SDHC flash memory cards and, combined with decent lenses and image sensors, give pretty decent pictures. The results aren't broadcast quality, but they're perfectly alright for home videos and low‑budget shoots. These cameras can be thought of as the HD successors to DV cameras such as Sony's PD170 and the Panasonic 100, and cost in the $2000 to $3500 range.

HDV

HDV was the first low‑cost HD camcorder format. It records onto the same tapes as standard-definition DV cameras, getting its HD media to fit by using long‑GOP MPEG2 compression. It comes in two flavours, Sony's 25Mbps 1080i and JVC's 19Mbps 720p. Probably the best‑known HDV camera is Sony's Z1, which looks and feels like a serious piece of gear. Unfortunately, though, HDV isn't quite up to broadcast standards for HD programme making. The Z1, for example, is far more likely to be used to record SD material for broadcast than it is to record HDV material for the same use.

XDCAM HD & EX

These are variants of Sony's professional quality XDCAM formats. XDCAM shoots onto 'Pro‑Discs', basically rugged, rewritable Blu‑Ray discs in plastic cassettes, and XDCAM EX stores its media on SXS flash memory cards. XDCAM uses long‑GOP MPEG2 compression at 25, 35 or 50Mbps data rates. The top 50Mbps rate uses 4:2:2 sub-sampling and is regarded by the BBC as acceptable for use in HD production (they use these cameras for programmes such as The Culture Show). The Sony EX1 XDCAM EX camera, at around $6500, is currently regarded as the baseline for HD production.

The Panasonic AGHPX301 is one of the company's new AVC Intra cameras, using a 100Mbps intraframe compression. Intraframe codecs are ideal for editing work, as each frame is discrete.

DVCProHD & AVC Intra

DVCProHD was Panasonic's first mainstream HD broadcast camera format. Shooting onto P2 Flash memory cards at 100Mbps, DVCProHD cameras are capable of producing fine pictures. However, the DV compression used limits the ultimate performance of the camera, and Panasonic have moved to using a new compression system, AVC Intra.

AVC Intra is a relative of AVCHD, but uses a GOP length of one frame (the 'Intra' part of its name means that there's no interframe compression at all). This, in turn, means that the data rate has to be significantly higher to give good picture quality, hence the 100Mbps used for AVC Intra.

DVCProHD cameras have proved popular in a wide range of HD productions, particularly where the cameras need to be reasonably portable but still capable of excellent picture quality. The BBC's recent Lost Land of the Jaguar natural history series is an example of a production that made fine‑looking programming using this format.

DSLRs

Until recently, the above formats would have been a pretty comprehensive resume of the HD format options available, but this is a medium that's evolving faster than the average flu virus. The new mutant strain is DSLR video, typified by cameras like the Nikon F7 and the Canon 550D. These offer the promise of HD quality video from relatively low‑cost cameras (by comparison with HD camcorders, that is), with the prospect of using high‑quality photographic lenses. Canon's 550D, for example, shoots full 1080p/25 HD video, recording 45Mbps I-frame (intraframe) H264-compressed video onto Flash memory cards.

Inevitably, these cameras are not without compromise, and the jury's still out on their suitability for mainstream video production. Most concerns centre around their handling and monitoring facilities, as well as problems with moire patterns when shooting certain objects. They weren't really designed for use as video cameras, after all, and some of these issues are discussed in the review of the Canon EOS Rebel T2i in the July 2010 issue of Sound On Sound.

Nonetheless, the Canon EOS 5D MkII DSLR has recently been used to shoot an episode of House, as well as a host of independent films, massively strengthening the format as a viable shooting proposition.

Sony HDCAM & HDCAM SR

HDCAM was Sony's first mainstream broadcast HD format. Despite its age (HDCAM camcorders have been available for 13 years now, an eternity for this fast‑moving technology), it's still popular today. HDCAM records onto the same size cassettes as SD DigiBeta cameras, using 144Mbps short‑GOP (around six frame) MPEG2, and its basic quality has stood it in good stead.

HDCAM SR, despite the similar name, is actually an entirely different kettle of fish. This is the big league for most of us, with camcorders recording superlative quality at either 440Mbps sub-sampled video, or even 880Mbps RGB Video. We've reached the pinnacle of HD broadcast — or, alternatively, the foothills of digital cinema — at this level. Top‑end broadcast dramas (for example, the BBC's Silent Witness) are often shot using HDCAM SR at 440Mbps.

Red

Introduced in 2006, the Red One camera has been one of the most innovative and controversial products of the digital TV era. It can shoot video at up to 4k (4096 x 2304) pixel resolution, and uses a 35mm film-frame‑sized image sensor, far larger than the 2/3-inch (17mm) sensors of most broadcast video cameras. This, combined with its use of standard film‑industry PL‑mount lenses, makes the Red camera a popular choice among digital cinematographers, and a number of well‑known features have been shot using Red.

The output from the camera is compressed using Red's own Redcode codec and recorded onto Compact Flash card memory at data rates of 224, 288 or 336Mbps. Redcode uses wavelet compression, an entirely different approach to the MPEG or JPEG compressions used by other manufacturers.

These media files are confusingly sometimes called by their file extension, R3D, or sometimes by their format, Red Raw. As its name suggests, Red Raw is raw media, with no in‑camera processing of gamma or colour balance applied to it. Instead, it is assumed that these processes will be done in software during editing or post‑production.

The post‑production workflow with R3D media is an art in itself, with as many twists and turns as The Forsyte Saga, but for those prepared to see it through, the reward is spectacular quality pictures.

Arri Alexa

Arri cameras have been mainstays of film production for decades. In recent years the company has been steadily making the transition to digital cinema with the D20 and D21 cameras, and now the Alexa.

The Alexa may not have the 4k resolution of the Red camera, but it is capable of shooting at 2880x1620 (also known as 3k), so it's not short of pixels!

There are some parallels with Red. Alexa also uses PL‑mount lenses, and generates raw format media called ArriRaw, which it outputs without compression, to be recorded on a fast disk array. It can also output Final Cut Pro-friendly ProRes422 HQ format at 1080p/25, or ProRes 4444 at 2k resolution, therefore simplifying the post‑production workflow significantly for users who want to work at lower resolutions.

Editing & Post Formats

Once you've shot your video, you then have to navigate the minefields of Editing and Post. Which NLE you're going to use and what format or compression you're going to work with in the NLE are questions you need to consider carefully. Here I'll outline some of the setups you'll encounter in three of the most popular NLEs: Apple Final Cut Pro, Avid Media Composer and Adobe Premiere Pro.

Whatever your setup, it's best to avoid (as far as possible) concatenation errors, one of the scourges of video production. Concatenation errors are produced because every codec leaves its mark on the media in the form of artifacts which the codec designers hope will be either unnoticeable or unobjectionable. If the media is rendered into a different codec, those artifacts will be rendered too, and may become more obvious as a result. Also, the new codec will have added its own characteristic artifacts, which will be quietly waiting to potentially show themselves at the next stage of compression the media goes through, perhaps when a Blu‑Ray disc is made after editing is complete.

Now that I've discussed shooting codecs and their uses, I'll outline how to pick an editing codec in three popular NLEs, and how to make sure it's the right one for the job.

Final Cut Pro

We'll look first at Apple's Final Cut Pro (FCP). On starting a new Project, you can choose its format from the friendly‑sounding 'Easy Setup' menu. I'm presuming that your project is HD, so I've chosen that from the Format menu, and that you've shot at 25fps. But what codec do you want to work with?

Clicking the 'Use' drop-down menu reveals a pretty extensive list of possibilities. The exact contents will depend on whether your system has a hardware capture card or peripheral, or is software‑only. As you can see in the screenshots above, I have a Matrox MXO2, so some of the options shown are specific to that hardware. You'll see a different selection if you have different hardware.

Final Cut Pro's Easy Setup simplifies project setup to three selections.

These are just some of the options you might see in Final Cut Pro's Easy Setup. The Matrox MXO2 options are accelerated by this particular piece of hardware.

The choices you have here boil down to:

1. Hardware‑assisted by the MXO2 or not

2. Using the 'native' codec of the media you've shot (DVCProHD, HDV, XDCAM).

3. Using an Apple ProRes codec.

The first option, using one of the formats supported by the MXO2 hardware, makes a lot of sense. Many hardware capture cards or processors offer acceleration of effects processing and will speed up the edit nicely.

However, we don't all have hardware assistance, so leaving the MXO2 to one side, the second option is to use the native codec of the media you're editing. This is often a good choice, because it allows you to edit without FCP having to recompress the media to another format. This means that the timeline you edit can be of identical quality to the original source footage. As a result, you're much less likely to suffer from concatenation errors.

When you edit in FCP, the timeline has a codec associated with it. Media in that timeline must ultimately be converted to that codec through rendering, although Final Cut Pro is often able to play it in real time during the editing process. When rendering occurs, the conversion from the original compression type to the new inevitably reduces the picture quality, and can sometimes result in unexpected artifacts — called concatenation errors, as mentioned earlier — being produced.

The third option is to use an Apple ProRes codec. ProRes comes bundled as part of the Final Cut Studio package and is Apple's high‑quality post‑production codec, offering a variety of bit‑rates and therefore qualities (see 'Apple ProRes Variations' box).

Proxy

As the table shows, for offline work (see 'Offline, Online & Editing Jargon' box) Proxy is ideal. It looks pretty good, certainly good enough to be able to critically assess focus and picture quality, and at 35Mbps it will use around 16GB of disk space per hour. At this kind of data rate, even if you have gone berserk and shot 100 hours of rushes, a 2TB disk will do. Best of luck with the editing, though!
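The disk‑space figures follow directly from the bit rate. As a rough check, here is a small Python sketch of the arithmetic, assuming decimal units (1GB = 1000MB, 1TB = 1000GB); the function names are made up for this example.

```python
def gb_per_hour(mbps):
    """Decimal gigabytes needed to store one hour of video at `mbps` megabits per second."""
    bytes_per_second = mbps * 1_000_000 / 8
    return bytes_per_second * 3600 / 1_000_000_000


def hours_on_disk(disk_tb, mbps):
    """Hours of footage at `mbps` that fit on a disk of `disk_tb` decimal terabytes."""
    return disk_tb * 1000 / gb_per_hour(mbps)


print(f"Proxy at 35Mbps: {gb_per_hour(35):.2f}GB per hour")
print(f"A 2TB disk holds about {hours_on_disk(2, 35):.0f} hours of Proxy media")
```

The same two functions can be used for any of the other bit rates quoted in this article.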

LT

LT (short for Lite) is probably the lowest bit‑rate you'd want to use for proper online finishing of your project. It's OK for basic editing work, but if you're going to be using significant amounts of effects, and therefore rendering, its low bit‑rate will begin to show.

ProRes 422

ProRes 422 at 120Mbps is high enough in bit‑rate to survive multi‑layer rendering without obvious picture degradation, so it's suitable for more serious on‑line and effects work. Having said that, its bit‑rate is generally considered insufficient for broadcast finishing, so unless disk space is tight, you're better off going the whole hog and using ProRes 422 HQ.

ProRes 422 HQ

The 185Mbps data rate of ProRes 422 HQ means that excellent quality is maintained even in complex, multi‑layer sequences, and it has the broadcasters' seal of approval as a consequence. You'll need plenty of disk space for your media, and for multi‑layer work your disks will have to be capable of playing back multiple streams of media at this bit rate. Both eSATA drives and SCSI arrays are good options, as are RAID systems.

ProRes 4444

ProRes 4444 is another step up in quality. The 4444 tag means that it's designed for compressing full‑bandwidth RGB data from digital cinema cameras such as the Arri Alexa, rather than the 4:2:2 sub-sampled data generated by 'ordinary' HD TV cameras. The fourth '4' in its name also indicates that it's capable of carrying a full‑bandwidth Alpha channel (containing transparency data), useful for post‑production work where keying and matting will be involved. ProRes 4444 also supports frame sizes bigger than 1920x1080, allowing it to work at digital cinema 2k resolution. Data rates are predictably high: 275Mbps at 1080i/50 resolution, rising to 315Mbps at 2k.

Avid Media Composer

Beloved by the Broadcast industry, Media Composer approaches HD editing in a slightly different way to Final Cut Pro. When you start your Project, Media Composer initially only needs to know what format you want in terms of the frame size and frame rate. It then lists the formats that are supported at this frame size.

After picking these options, you select either 'capture' or 'import' media and choose which compression codec you want to use for the media. While this is a bit long‑winded, it does at least walk you through the process step by step.

Avid Media Composer asks that you select the frame size and rate, telling you which formats support them. After this, you can select your editing codec.

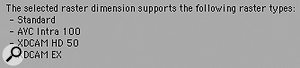

As you can see from the screenshots on the left, as well as working with native AVC‑Intra, DVCPro HD or XDCAM compression, Avid offers its own I-frame HD Codec, DNxHD. DNxHD comes in a variety of bit‑rates, offered according to project frame size, but the ones you'll see in a 1080i/50 project are shown in the box above.

DNxHD 120

DNxHD 120 is broadly comparable to Apple ProRes 422 in picture quality, and, like ProRes 422, is a little low in bit‑rate for demanding online work or for broadcast.

DNxHD 185 and DNxHD 185x

DNxHD 185 comes in two flavours: eight bits per channel or ten bits per channel (the 'X' factor in the name is the clue). Confusingly, both share the same 185Mbps data rate. The logic of this is that 'vanilla' 185 is best for online editing where maximum picture quality is the aim, but 10‑bit 185x is to be preferred if there's significant colour grading work to be done. Colour correction work is best handled at high bit-depths, with DNxHD 185x's 10-bit video allowing finer colour adjustments than 185, outweighing the fact that DNxHD 185x is actually more compressed than its 8-bit brother.

Adobe Premiere Pro

In broadcast circles, Premiere Pro has always been overshadowed by FCP and Media Composer, but it's a very capable system and has the advantage of integrating extremely well with Adobe's other products such as Photoshop and After Effects.

In Premiere Pro CS5, once a Project has been created, the software offers you a choice of formats for the sequence you're going to edit. Premiere Pro supports a large range of native editing codecs including, unlike most NLEs, 4k Red R3D files.

Choosing a Sequence Preset to match the format of the media you want to edit will let the system work with maximum efficiency, since otherwise Premiere Pro will be attempting to convert the clip format to the sequence format on the fly during playback. Premiere flags media that does not match the sequence with a red line in the timecode track (see top screenshot). The red area on the Premiere timeline may require rendering in order to play back smoothly. These clips won't necessarily play back in the sequence without frames being dropped because of the codec mismatch.

Much work has been done to improve native-codec performance in Premiere Pro CS5, particularly for owners of certain Nvidia video cards. In fact, Adobe claim that Premiere Pro CS5 has the most advanced multi‑format playback engine of any NLE available today.

Note that an important difference between Premiere Pro and the other two NLEs is that it doesn't have its own editing codec, which can be a disadvantage – see the next section for the full explanation. One solution to this is the Cineform NeoScene codec, a third‑party codec available from www.cineform.com, which offers Premiere Pro users the same sort of advantages as ProRes and DNxHD offer FCP and Media Composer users.

The Cineform NeoScene codec uses wavelet compression, and is capable of excellent picture quality as a result, although it needs a relatively powerful computer to play back smoothly.

Which Codec Should I Edit With?

The pictures above show zoomed-in crops of an image that has been through 10 HDV renders (top), 10 DNxHD renders (middle), and 10 uncompressed renders (bottom). Look in particular at the edges of the flags: the blue and red edges are soft in the HDV image compared to the other two.

So why use ProRes 422, DNxHD or Cineform rather than a native camcorder codec, or, indeed, why use any compression at all? In most cases, the camcorder codecs are MPEG‑based, long‑GOP compressions. The efficiency of compression this gives them means they can be relatively low in bit‑rate, so they can offer long recording times on the memory cards or tapes they use.

However, long‑GOP MPEG is not easy stuff to edit. Firstly, unless you get lucky and pause the clip on an I‑frame, the system will have to load an entire GOP and decode it before it's able to display the frame of video to you. This takes both time and significant processing power, so jogging through the video and selecting your edit point is not a quick and slick experience.
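To get a feel for the decoding overhead, here is a toy Python model. It assumes, as in standard MPEG‑2, that each P-frame is predicted from the previous I- or P-frame and that each B-frame needs the I- or P-frame on either side of it. The function name and the GOP string are illustrative only.

```python
def frames_to_decode(pattern, position):
    """Frames that must be decoded to display one frame of a GOP.

    `pattern` is the GOP in display order, e.g. "IBBPBBPBBPBB".
    Toy model: a P-frame needs every I/P-frame before it; a B-frame
    needs those plus the next I/P-frame (which may be the I-frame
    that starts the following GOP).
    """
    reference_frames = sum(1 for kind in pattern[:position + 1] if kind in "IP")
    if pattern[position] in "IP":
        return reference_frames
    # B-frame: earlier reference frames + the next reference frame + itself
    return reference_frames + 2


gop = "IBBPBBPBBPBB"
for position in (0, 3, 4, 10):
    print(gop[position], "at position", position, "needs",
          frames_to_decode(gop, position), "frames decoded")
```

Only the I-frame can be shown after decoding a single frame; everything else drags other frames along with it, which is why scrubbing through long‑GOP media feels sluggish.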

Secondly, the edits you make are likely to be on one of the P-frames or B-frames within the GOP, and the result of this is that eventually the whole sequence will have to be decoded and re-encoded again to reconstruct the GOP structure uniformly throughout. This means that a lengthy render will await you at the end of your edit.

Thirdly, long‑GOP MPEG was not designed or intended to be repeatedly decoded and re-encoded, which is precisely what happens as you render effects or colour gradings in your timeline. Repeated rendering will significantly affect the final picture quality.

A RED R3D is actually a folder of files, not just a single video clip. The RSX file includes metadata while the MOV files are low resolution previews.

By comparison, DNxHD and ProRes422 are I-frame compressions. This makes video compressed with these codecs much quicker to decompress for display or for effects processing, making for a more efficient, quicker editing experience. The absence of a GOP structure means that you can edit on any frame of a clip without having to go through a re‑encode process.

The penalty is that these codecs need to have much higher data rates (120‑185 Mbps versus 25‑50 Mbps for the MPEG camcorder codecs) to achieve good picture quality. MPEG4 is often cited as offering a 30 to 50 percent improvement in picture quality over MPEG2 at the same bit rate. Similarly, long‑GOP MPEG compressions are, in general, regarded as giving equivalent picture quality to frame‑based compression of twice the data rate. So Sony XDCAM EX at 50Mbps could be thought of as offering virtually the same picture quality as Apple ProRes422 HQ or Avid DNxHD185.

If your I/O hardware supports it, you may be able to work at 2K resolution with Red R3D media. For example, pictured are the options offered by the Matrox MXO2.

The wavelet compression of Cineform and Redcode represents a third way. If an image becomes too complex for macroblock compression to cope with, we see the result of this data overload as 'blocking' or pixellation of the image, and sometimes as motion artifacts around moving objects (the so‑called 'mosquito noise' you're probably familiar with from watching Freeview TV pictures, for example).

Wavelet compression doesn't produce artifacts like these. Instead, compression overload results in an increase in noise, similar to a film-grain effect, and a reduction in detail, causing slight softening of the picture. Wavelet compression artifacts are generally more natural and less distracting than those thrown up by macroblock compression, and Red, for example, describe Redcode as 'virtually lossless'.

However, the data rates of any of these codecs represent pretty massive amounts of compression by comparison with the data rate of uncompressed HD video — which is an eye‑watering 2.05Gbps at 1080i/50. For the very best‑quality results, it's arguable that we should be avoiding all compression. That way, no matter how much rendering of effects is done, the picture quality is certain to be maintained at the best level possible.

Bear in mind, though, that at 2.05Gbps you'll need around 900GB of disk space per hour of video, and not just any old disks will do. To be able to play your video clips in real time, you'd need disks capable of sustaining read performance of at least this rate, and ideally two or three times this rate, for reliable results. This means using fast RAID arrays of disks, ideally with fibre‑channel connection to the host computer. The computer itself is going to have to be fast, too — an eight‑core Mac Pro or similar — together with a high‑performance graphics card. So, while perfectly possible, the hardware requirements of uncompressed HD working are quite demanding, and beyond the resources of non‑specialist video post‑production facilities.
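The disk performance this implies is easy to work out. The Python sketch below uses the 2.05Gbps figure quoted above and decimal units; the function name and the headroom factor are illustrative assumptions, not measurements of any particular disk system.

```python
def sustained_mb_per_s(gbps, headroom=1.0):
    """Megabytes per second a disk system must sustain for a `gbps` gigabit-per-second stream.

    `headroom` is a safety multiplier: the text suggests two or three
    times the bare stream rate for reliable playback.
    """
    return gbps * 1000 / 8 * headroom


def gb_per_hour(gbps):
    """Decimal gigabytes needed to store one hour at `gbps` gigabits per second."""
    return gbps / 8 * 3600


rate = 2.05  # uncompressed 1080i/50 data rate quoted in the text, in Gbps
print(f"Storage: about {gb_per_hour(rate):.0f}GB per hour")
for factor in (1, 2, 3):
    print(f"{factor}x headroom: about {sustained_mb_per_s(rate, factor):.0f}MB/s sustained")
```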

Conclusion

Without specific hardware, Final Cut Pro will transcode Red footage into Apple ProRes.

It's probably true to say that most people would not be able to tell the difference between video that has been through a carefully considered and controlled compressed workflow and video that has been post‑produced uncompressed, bearing in mind that the final product must be delivered to the end user in compressed form via Blu‑Ray or HD transmission anyway. So for all practical purposes, the decision really boils down to staying in the native codec of the originating camera through the post‑production process, or transcoding into an editing codec.

Avid Media Composer allows you to 'develop' Red footage, applying exposure and colour correction at import.

There's no clear‑cut answer to this, but the accepted logic is that keeping the media in the native codec works well if there's not too much rendering involved. Using an editing system codec (at 185Mbps) will give better results if the sequence is likely to be rendered repeatedly. So documentary‑style programmes might stay native, but pop‑promos might go to an editing codec, for example.

So there we have it. The issues you face in video production are numerous and complex, but you should now be able to make informed choices about your production workflow.

Camera Codec Summary

The table below summarises much of the camera codec information I've discussed in the main body of this article.

Referring to the table, you'll notice 'Colour Sampling', which was touched on in June's 'Making Movies' article. The type of sub‑sampling used by the different camera formats will have a big impact on their suitability for shooting chromakey (or 'green screen') material. The 4:2:0 sampling used by many of the MPEG‑based compressions does not retain as much detail in the colour data as 4:2:2 (or 4:4:4) sampling; 4:2:2 sampling is generally considered sufficient for chromakey work.
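How much colour detail each scheme keeps can be worked out from the J:a:b notation itself. The Python sketch below assumes the usual reading of that notation: in a reference area J pixels wide and two rows high, there are `a` colour samples in the first row and `b` further colour samples in the second. The function names are hypothetical.

```python
from fractions import Fraction


def chroma_fraction(j, a, b):
    """Fraction of colour samples kept, relative to sampling every pixel."""
    return Fraction(a + b, 2 * j)


def relative_data(j, a, b):
    """Total samples (one luma plus two colour channels) relative to 4:4:4."""
    return (1 + 2 * chroma_fraction(j, a, b)) / 3


for name, scheme in (("4:4:4", (4, 4, 4)), ("4:2:2", (4, 2, 2)), ("4:2:0", (4, 2, 0))):
    print(name,
          "keeps", chroma_fraction(*scheme), "of the colour samples,",
          relative_data(*scheme), "of the total data")
```

So 4:2:0 carries half as many colour samples as 4:2:2, which is why clean edges are harder to pull from it when keying.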

I've also included information about the frame size the camera actually records. In some cases, although the image sensor in the camera may have a resolution of 1920x1080 or more, the codec used will down‑sample the data to a lower resolution during compression. On decompression, the codec up‑samples back to full 1920x1080. This inevitably means that resolution is lost, which will affect the camera's suitability for demanding situations.

The final column indicates the status of each format in the eyes of the BBC. They will only accept programmes shot with approved HD formats for HD transmission, although they do allow a 'quota' of non‑HD material, normally 25 percent, to be used in a programme. Most other major broadcasters have similar policies, so if you're aiming for a broadcast market, it's important to check out what's acceptable before you start shooting.

| Format | Frame Size | Colour Sampling | Data Rate (Mbps) | Compression Type | BBC HD? |
|---|---|---|---|---|---|
| AVCHD | 1920x1080 | 4:2:0 | Up to 23 | AVC Long GOP | NO |
| HDV | 1440x1080 | 4:2:0 | 25 | MPEG2 Long GOP | NO |
| AVCCAM/NXCAM | 1920x1080 | 4:2:0 | 23 | AVC Long GOP | NO |
| DVCPROHD | 960x1280 or 1440x1080 | 4:2:2 | 100 | DV | YES |
| DSLR | 1920x1080 | 4:2:0 | 45 | H264 | NO |
| XDCAM HD | 1440x1080 | 4:2:0 (4:2:2 at 50Mbps) | 25, 35 or 50 | MPEG2 Long GOP | YES (50Mbps) |
| XDCAM EX | 1920x1080 | 4:2:0 (4:2:2 at 50Mbps) | 25, 35 or 50 | MPEG4 | YES (50Mbps) |
| AVC‑Intra 100 | 1920x1080 | 4:2:2 | 100 | AVC‑I | YES |
| HDCAM | 1440x1080 | 3:1:1 | 144 | MPEG 2 Intra | YES |
| HDCAM SR | 1920x1080 | 4:2:2 | 440 | MPEG 4 Studio Profile | YES |
| HDCAM SR | 1920x1080 | 4:4:4 RGB | 880 | MPEG 4 Studio Profile | YES |
| Red | 4096x2304 | 4:2:2 | 224, 288 or 336 | Wavelet | YES |
| Arri Alexa | 2880x1620 | 4:4:4 | 185Mbps, 275Mbps or 3GBps | Apple ProRes 422HQ, 4444 or Uncompressed | YES |

Apple ProRes Variations

| Codec name | Bit rate at 1080i/50 | GB per hour | Offline/online editing |
|---|---|---|---|
| ProRes Proxy | ~35Mbps | 16 | HD offline |
| ProRes LT | 100Mbps | 45 | Basic HD online |
| ProRes 422 | 120Mbps | 54 | High-quality HD online |
| ProRes 422 HQ | 185Mbps | 83 | Best-quality HD online |
| ProRes 4444 | 275Mbps | 124 | Digital cinema |

Avid DNxHD Codecs

| Codec Name | Bit Rate at 1080i/50 | GB per hour | Offline/online editing |
|---|---|---|---|
| DNxHD 120 | 120Mbps | 54 | Basic HD online |
| DNxHD 185 | 185Mbps | 83 | High-quality HD online |
| DNxHD 185x | 185Mbps | 83 | Best-quality HD online |