We all want to know what the man on the Clapham Omnibus really thinks of our music. Now we can find out — and have his opinions statistically analysed into the bargain.

The Holy Grail for many a record company A&R department is a foolproof way of knowing whether a track will be a hit in advance of its release. This might seem implausible, but there have been several attempts. Perhaps the best known is Hit Song Science, which was originally launched in 2003, and uses advanced AI techniques to evaluate the hit‑worthiness of tracks. There are rival automated services, too, such as Music Xray and Band Metrics, but what all of these have in common is that their analytical techniques are largely or entirely computer‑based.

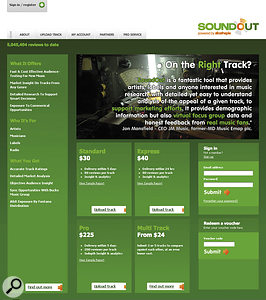

The idea behind SoundOut is to fulfil the same goal, but using human reviewers, harnessed through crowd‑sourcing on the Internet. The reviewers in question belong to the community associated with slicethepie.com — a site which was originally focused on helping artists find alternative ways to fund their releases. The idea is simple: artists or record labels pay a flat, per‑track fee to have their tracks fed into slicethepie's 'scouting' service. Members of the community then audition and review the tracks, and receive micropayments based on the number of tracks they review and their long‑term success as a 'scout'. These reviews are then automatically analysed and compiled into reports, which are returned to the artist or record label to help with their decision‑making. What's more, SoundOut claim to offer high‑rated tracks free exposure to record labels and sync opportunities.

Boarding The Express

Artists and labels can choose from three different report formats. Standard reports are returned within five days, with a minimum of 80 reviews per track, and cost $30 per track. Express reports are the same, but reach your inbox within 24 hours of uploading the tracks, and cost an extra $10 per track. For more in‑depth analytics based on 200 reviews rather than 80, meanwhile, you can opt for the $225 Pro report. There are also Multi Track options which, in effect, offer discounted 'bulk buys' for situations where you might want reports on a number of different tracks.
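One rough way to compare the three formats is to work out what each review costs. The sketch below uses only the prices and review counts quoted above; the `REPORTS` table and the `cost_per_review` helper are illustrative names, not anything SoundOut publish, and it assumes an Express report carries the same 80 reviews as a Standard one.

```python
# Prices (USD) and review counts as quoted in the article.
# The table layout and function name are illustrative assumptions.
REPORTS = {
    "Standard": {"price_usd": 30, "reviews": 80},
    "Express": {"price_usd": 30 + 10, "reviews": 80},  # Standard plus the $10 surcharge
    "Pro": {"price_usd": 225, "reviews": 200},
}


def cost_per_review(price_usd, reviews):
    """Return the price paid per individual review, in dollars."""
    return price_usd / reviews


if __name__ == "__main__":
    for name, report in REPORTS.items():
        print(f"{name}: ${cost_per_review(**report):.3f} per review")
```

Since 80 is a minimum for Standard and Express reports, the real per-review figure for those two could be lower. Multi Track discounts are not modelled, as the article does not give their prices.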

In order to try the system out, SoundOut gave me two vouchers for Express reports, and I also signed up as a Scout (see the 'Be Prepared: Join The Scouts' box for details). The process of signing up and uploading tracks is relatively painless, and not dissimilar to that used by many other sites. Your music has to be a 192kbps MP3 at a constant bit rate, and is auditioned through a live streaming player. SoundOut told me that this applies no further compression, but some of the mixes I heard through it as a Scout sounded distinctly odd, so perhaps those artists had uploaded files in the wrong format.

After that, all you need do is sit back and wait for the reports to arrive. These are lengthy PDF documents that provide a number of analytical tools, of varying helpfulness. Anyone who 'scouts' your track has to rate it out of 10 and provide a short text review. These are all listed at the end of your report, and various statistical techniques are used to pick up trends and themes that emerge. The numerical results are broken down by age and gender, and plotted on a two‑dimensional graph showing overall rating against consensus of opinion. The written reviews are also analysed using a technique called Computational Forensic Linguistics to generate a 'word cloud' and to produce ratings for different elements of the track, such as vocals, guitars, drums, performance, production, arrangement, and so on. The report also lists tracks by other artists that, supposedly, have a similar 'Market Potential'.

Since all tracks are pooled into a single 'scout room' and Scouts are, in effect, rated by their ability to predict each other's opinions, you'd expect it to be the safe, polished, familiar pieces of mainstream music that would achieve highest overall 'market potential' ratings, and so it proves. This is, however, supplemented by an 'in genre classification', which compares your track only against others in the same genre; SoundOut say that a good niche track might poll 'below average' overall, yet 'excellent' within its own genre.

Grow A Thick Skin

Some pages from one of my SoundOut reports showing some of the conclusions generated by its automated analysis. The last six pages, not shown, list all the actual reviews verbatim.

One thing I learned fairly quickly is that if you're planning to use SoundOut to evaluate your own music, you had better not be too sensitive about criticism! What you are getting is a selection of reviews written anonymously by people on the Internet, not a group known for exaggerated politeness, and if they don't like something, they'll say so in no uncertain terms. For test purposes, I'd chosen more or less at random a couple of tracks of my own, for which I had no real commercial ambitions, and it was a bit of a shock to actually set them in front of a disinterested audience.

Superficially, it seemed there was not a huge difference in the overall ratings of the two tracks: the first one came in at 6.4 out of 10, while the second rated 5.3. This might not sound like a big deal, but the SoundOut analysis suggested that this translated to a massive gulf in commercial appeal. The nature of the rating process means that scores tend to cluster in the centre, so a rating of 6.4 is actually well into the top third of all tracks, while a rating of 5.3 lies in the bottom 40 percent. Further, analysis of the variations among reviewers apparently suggested that although not everyone liked the first track, those who did like it, loved it, which is what SoundOut calls a high 'passion rating'. The first track thus reached the dizzy heights of being "a potential album track for this target market", while the other (which I had thought, and still think, a much better song) was simply "below average".

Out & About

Given that I had no particular commercial ambitions for either track, it's hard to know exactly how much weight I would be inclined to place on these results if I was actually contemplating a release. There were elements of both the reviews and the analysis that were puzzling. For instance, a couple of apparently positive reviewers had given ratings of 1/10, and both of my tracks elicited exactly the same list of three Foals album tracks as having similar 'Market Potential' (SoundOut say that they hope to have improved this aspect of the service by the time you read this). And in both cases, features of the track that I was most pleased with ended up getting trashed, while qualities I was nervous about got praised.

If I have a more general reservation about SoundOut, it's that because Scouts are ultimately rated by their success in predicting the herd opinion, it risks encouraging a conservative approach to A&R. I suspect the Alan McGees and Tony Wilsons of this world would scoff at the suggestion that they should trust the statistically weighted opinions of a random bunch of Internet users over their own instincts. It also seems to me highly likely that the auditioning context, where different artists' tracks are shuffled in a random order, rewards simple loudness as well as artistic merit, especially as, unlike on the radio, no further audio compression is applied. (Of course, it may well be that loudness really is a commercial advantage that this system is simply reflecting faithfully!) SoundOut agreed that it would be interesting to do some research to see if there is a correlation between Scout ratings and RMS levels, so watch this space. Finally, the site's user base is currently quite UK‑centric, so its opinions might be less useful to record labels located in other territories.
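For anyone curious what such a loudness check might involve, here is a minimal sketch. It is not SoundOut's method: it assumes you already have each track's samples decoded to floats between -1.0 and 1.0, and the function names `rms_dbfs` and `pearson` are my own. It measures each track's RMS level in dB relative to full scale, then computes an ordinary Pearson correlation between those levels and the tracks' ratings.

```python
import math


def rms_dbfs(samples):
    """RMS level of float samples in [-1.0, 1.0], in dB relative to full scale."""
    mean_square = sum(s * s for s in samples) / len(samples)
    if mean_square == 0:
        return float("-inf")  # digital silence has no finite level
    return 20 * math.log10(math.sqrt(mean_square))


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


if __name__ == "__main__":
    # Sanity check only: a full-scale square wave should sit at 0 dBFS.
    print(rms_dbfs([1.0, -1.0, 1.0, -1.0]))
```

To use it, you would build one list of `rms_dbfs` values and one list of the corresponding overall ratings, then pass both to `pearson`. A result near +1 would suggest that louder tracks score higher, though a correlation on its own would not show that loudness is the cause.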

All these caveats need to be borne in mind, but at the end of the day, SoundOut is an affordable way to get truly disinterested opinions on your music, and get enough of them to provide a basis for useful analysis. As such, I think it is probably unique, and as long as you have the confidence and the time to examine the automated analysis with a pinch of salt, it provides information it would be hard to get in any other way.

Would I pay to use SoundOut? It depends very much on the music. For experimental music that is likely to be greeted with incomprehension by a general audience, I think that SoundOut reports are likely to be of limited value, because a general audience is what you are going to get. But for anyone targeting the mainstream, it strikes me as a very useful tool indeed. Compared with the overall cost of releasing and promoting a CD, the price of a couple of Standard reports is trivial, and I can't think of anything else that offers so much useful information for so little. I'm not surprised to find that several major labels are already using SoundOut — and it might not be long before the rest are asking themselves whether they can afford not to use it.

Be Prepared: Join The Scouts

Joining slicethepie.com is free, and once you've registered, you can get to work 'scouting' tracks. By default, there is only a single 'scout room' to visit, although others are sometimes established for special projects. The scout room presents a simple music player with play and pause buttons, and a volume slider. Press play and you'll hear a song. A large timer counts down the 60 seconds that is the minimum evaluation time. After this, you can drag a slider to rate it out of 10, and enter your text review. You're not allowed to move along to another track until you've submitted something that slicethepie's electronic brain acknowledges as a useful review. I found this irritating — quite often I wrote something that seemed perfectly sensible and informative to me, but was told it couldn't be submitted as it was too short or didn't include enough musical terms. I would also have liked the ability to skip tracks where I felt I couldn't comment meaningfully; because everything is currently lumped together in a single scout room, you can find yourself auditioning anything from folk to R&B to thrash metal to extremely bad hip‑hop, in the space of 10 minutes.

Those quibbles aside, I have to say that Scouting is brilliant fun. The general quality of the music is pretty good — generally better than you'd encounter in a random trawl around MySpace or SoundCloud, or on any 'new bands' site — and there's something empowering about the knowledge that your opinions are helping record companies make their decisions. (Frustratingly, though, almost all tracks remain anonymous even after you've submitted your review.)

There's also the financial side of things. No‑one will become rich as a Scout, but if you achieve the top five‑star rating, you'll receive 20 cents per track reviewed, so it would be fairly easy to get $5 or so for an hour's work. Star ratings can change quite fast, and my standing had leapt to three stars (10 cents per track), back down to one, and up to three again before I'd reached 50 reviews.
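The arithmetic behind that hourly figure can be sketched as follows. The 60-second minimum audition and the per-track rates come from the text above; the time spent writing each review is an assumption you would need to supply yourself, and `hourly_earnings` is an illustrative name.

```python
MIN_LISTEN_SECONDS = 60  # minimum evaluation time quoted in the article

# Only the two rates the article quotes; other star levels are not stated.
PAY_PER_TRACK_USD = {5: 0.20, 3: 0.10}


def hourly_earnings(pay_per_track_usd, writing_seconds):
    """Estimate dollars per hour, given an assumed review-writing time per track."""
    seconds_per_track = MIN_LISTEN_SECONDS + writing_seconds
    tracks_per_hour = 3600 / seconds_per_track  # fractional tracks allowed
    return tracks_per_hour * pay_per_track_usd


if __name__ == "__main__":
    # Assumed 84 seconds of writing per review, i.e. 25 tracks an hour.
    print(round(hourly_earnings(PAY_PER_TRACK_USD[5], 84), 2))
```

On these assumptions, a five-star Scout who spends just under a minute and a half writing each review gets through 25 tracks an hour, which matches the $5 estimate.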

I was curious to know how star ratings are awarded and, in particular, whether they are measured against the subsequent commercial performance of a track, or simply against the overall views of the slicethepie 'herd'. It turns out that for individual reviewers it's the latter that is important: in other words, what gets you a high rating is the ability to predict what the wider slicethepie community will think, rather than predict the hits directly. In turn, however, SoundOut do take steps to make sure that the overall SoundOut ratings produced by this community are consistent with actual market performance.

Asked to explain how ratings are generated, they said: "Each of the individual reviewers are personally star rated on their ability to predict the broader market view of a track. The better they are at this, the more stars they get given, the more weight their opinion carries and the more they get paid per review. Their 'accuracy' is measured consistently on a 30-review rolling basis, so their star rating can go down as well as up. We also make the star ratings public, and this encourages diligence among the reviewers.

"Where external factors come into play is in the report analysis. Since August 2009, we have put every new release by the major labels through SoundOut before they have been released (on radio or commercially) or immediately on release. Earlier this year we tracked the chart and sales performance of 125 of those singles (released from August 2009 to April 2010) and mapped their SoundOut ratings against their post‑release sales performance. This testing showed a strong correlation between the two and is what the SoundOut benchmarks are based around, and why we're confident that SoundOut really is a good indicator of a track's sales or 'hit' potential."
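SoundOut did not spell out the formulas involved, so the following is only a sketch of how a scheme like the one described could work. The 30-review rolling window comes from the quote above; everything else is an assumption made for illustration, including measuring 'accuracy' as the average gap between a Scout's rating and the consensus, and weighting each opinion in direct proportion to the Scout's star count.

```python
from collections import deque

WINDOW = 30  # rolling window size, as stated in SoundOut's explanation


class ScoutRecord:
    """Tracks one Scout's recent accuracy (a hypothetical model)."""

    def __init__(self):
        # A deque with maxlen silently discards the oldest entry when full.
        self.errors = deque(maxlen=WINDOW)

    def add_review(self, own_rating, consensus_rating):
        """Record how far this Scout's rating was from the consensus."""
        self.errors.append(abs(own_rating - consensus_rating))

    def mean_error(self):
        """Average gap over the last WINDOW reviews; lower is more accurate."""
        if not self.errors:
            raise ValueError("no reviews recorded yet")
        return sum(self.errors) / len(self.errors)


def weighted_rating(ratings_and_stars):
    """Star-weighted average of (rating, stars) pairs (assumed weighting)."""
    total_weight = sum(stars for _, stars in ratings_and_stars)
    return sum(rating * stars for rating, stars in ratings_and_stars) / total_weight


if __name__ == "__main__":
    # A five-star Scout's 8 outweighs a one-star Scout's 2.
    print(weighted_rating([(8, 5), (2, 1)]))
```

Because only the most recent 30 reviews count, a run of poor guesses drops out of the record fairly quickly, which would be consistent with how fast my own star rating moved up and down.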

The Slicethepie Community

I asked SoundOut how they could be confident that their reviewers form a representative cross‑section of the music listening community. They told me that there are over 120,000 people signed up to the site, though it's not clear how many of these are active. Of these, roughly half fall into the under‑25 age bracket, 60 percent are male, and 58 percent are located in the UK, with 24 percent in the US and the rest elsewhere. Just over half are in employment, while 28 percent are students, and in terms of tastes, most declare themselves to be fans of pop, rock and indie.

Special Offer

SoundOut are keen for SOS readers to try the service for themselves, and to that end, are offering all readers $5 off their first SoundOut report. Use the voucher code 'SOUNDONSOUND5' to claim your discount.

Pros

- A unique way of obtaining disinterested opinions from a large number of listeners.

- SoundOut reports include comprehensive statistical analysis of reviews and ratings.

- Affordable pricing puts Standard and Express reports within the reach of most.

- Being a Scout is great fun, and you get paid (a bit).

Cons

- Less useful for music that is a long way from the mainstream.

- Arguably, encourages a conservative approach to A&R, and the pursuit of loudness in mastering.

- Not for the faint‑hearted!

Summary

SoundOut provides a unique resource, and could well become a vital tool in the A&R man's kit.