The introduction of 'inter-app audio' in iOS 7 provides a new level of integration between music apps.

Back in June this year, at the Worldwide Developers Conference in California, Apple first introduced the term 'inter-app audio'. It was lurking, in a smallish typeface, to one side of a back-projected list of new features in iOS 7. But with no explanation, and apparently no public information of any kind, nothing could be made of it.

Now that iOS 7 has had a bit of time to settle, though, many more details about inter-app audio, and the first crop of apps to implement it, have appeared. And let me tell you, for iOS-based musicians it's going to be massive!

Progress Inter-appted

Of course, we've been able to stream audio between apps for nearly a year now, courtesy of Audiobus. A separate app itself, which allows others to be connected in simple input > effect > output configurations, Audiobus has deservedly become a standard in the iOS audio world. To the extent, in fact, that any music app not supporting it really looks like a poor relation. It's easy to use and understand, and it fits right in with the simple, direct iOS ethos (try saying that after a few pints...).

The iOS app Tabletop is already implementing inter-app audio features in nifty ways. Shown here, its Mastermind controller drives Arturia's iMini synth. Chooser pop-up menus list all the compatible apps on your iDevice.

However, inter-app audio, or 'IAA' as it's starting to become known, is poised to be bigger still. Running at a system level, it lets apps pass not only audio from one to another, but MIDI and transport commands too. And believe it or not, it's going to allow you to use effect-type apps (like AmpliTube, for example) as if they were plug-ins, 'inside' another app. No more linear Audiobus-like signal chains; this is "hub-spoke topology", as the developer of the iOS NLog synths recently tweeted.

Another developer, Jonatan Liljedahl of www.kymatica.com (who's behind the excellent AudioShare, Yellofier and, more recently, his own AUFX series), very helpfully gave Apple Notes this lowdown: "There are two kinds of IAA apps: hosts and nodes. A node app can be plugged into a host, very much like audio plug-ins [in OS X], and can be either a generator/instrument, which produces sound, or an effect, that processes sound. When an IAA node is used inside a host, it's the host that feeds the node with audio (if it's an effect), and grabs the resulting signal output. Additionally, a host can send MIDI messages directly to a node, for example to play a synthesizer node app. Also, a node can implement a remote control to manipulate the host transport, with standard stuff like rewind, play, pause, record."
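For the programmers among you, the host/node relationship described above can be sketched as a toy model. This is plain Python, not Apple's API: the `Host` and `Node` classes, their method names and the two toy nodes are all invented for illustration, and real IAA apps talk to each other through the system rather than through anything like this.

```python
from dataclasses import dataclass, field
from typing import Callable, List

Buffer = List[float]  # one block of mono samples


@dataclass
class Node:
    """A hypothetical IAA-style node: a generator, instrument or effect."""
    name: str
    kind: str                           # "generator", "instrument" or "effect"
    render: Callable[[Buffer], Buffer]  # turns an input block into an output block
    notes: List[int] = field(default_factory=list)


@dataclass
class Host:
    """A hypothetical host: the hub that every node plugs into."""
    last_command: str = ""

    def process(self, node: Node, audio_in: Buffer) -> Buffer:
        # The host feeds its audio to effects only; sound sources get silence.
        feed = audio_in if node.kind == "effect" else [0.0] * len(audio_in)
        return node.render(feed)  # the host grabs the node's output

    def send_midi(self, node: Node, note: int) -> None:
        node.notes.append(note)  # e.g. to play a synthesizer node

    def remote(self, command: str) -> None:
        # A node's remote-control panel drives the host transport.
        if command not in ("rewind", "play", "pause", "record"):
            raise ValueError(f"unknown transport command: {command}")
        self.last_command = command


host = Host()
halve = Node("toy effect", "effect", lambda block: [s * 0.5 for s in block])
synth = Node("toy synth", "instrument", lambda block: [0.25 for _ in block])

processed = host.process(halve, [1.0, -1.0])  # the effect sees the host's audio
generated = host.process(synth, [1.0, -1.0])  # the instrument makes its own sound
host.send_midi(synth, 60)                     # host plays the synth
host.remote("record")                         # node starts the host recording
```

Notice that everything passes through the host: audio in, audio out, MIDI and transport. That is the 'hub-spoke' idea in miniature, as opposed to a chain in which each app hands its output to the next.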

In the case of an app like the iOS DAW Auria, which is very definitely a host, inter-app audio will allow you to insert your effect apps directly into its channel strips, all running within its buffer, with no additional latency. It won't force their graphic interfaces to load either, until you need them. This is coming in v1.130.

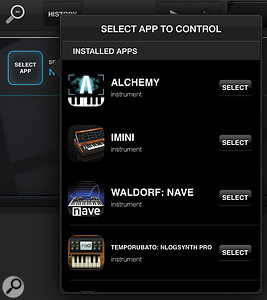

And elsewhere IAA is already fulfilling its promise. Retronyms' Tabletop production app, for example, has two new devices dedicated to inter-app use. Mastermind is a virtual controller that can launch, drive and sequence any IAA-compatible instrument. An in-app purchase, Zignal records audio from IAA apps into the Tabletop environment.

When used with an inter-app audio host, synths add something to their graphic interfaces to allow the host to be remotely controlled. Waldorf's Nave synth, for example, gets a little panel hovering over the keys.

IAA's capabilities even enhance MIDI-oriented apps, apparently. Yamaha's TNRi visual sequencer can now (via its Layer menu) drive either internal sounds or any other IAA synths on your iPad.

Teething Trouble

There are some limitations. There's currently no provision for IAA hosts to manage their nodes' presets, or to automate their parameters. And there are some clumsy corners, like the confusing distinction between 'generator' and 'instrument' versions of synth-type apps, which can appear together when you're trying to choose one in a host app. (Tip: only 'instruments' receive MIDI note input from a host.) But it seems all this and more will be ironed out as the standard, not dissimilar to OS X's Audio Units, develops.
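The generator/instrument tip can be put as a tiny filter. Again this is only a sketch in plain Python: the `Entry` type, the helper functions and the app names are made up, and a real host would get its list of nodes from the system rather than from a hand-written list.

```python
from typing import List, NamedTuple


class Entry(NamedTuple):
    app: str   # hypothetical app name
    kind: str  # "generator", "instrument" or "effect"


def accepts_midi_notes(entry: Entry) -> bool:
    # Per the tip above: only 'instrument' entries receive MIDI notes from a host.
    return entry.kind == "instrument"


def playable(entries: List[Entry]) -> List[Entry]:
    """Entries that a host's sequencer or keyboard could actually play."""
    return [e for e in entries if accepts_midi_notes(e)]


# The same hypothetical synth listed twice, as it might appear in a chooser.
chooser = [
    Entry("ExampleSynth", "generator"),
    Entry("ExampleSynth", "instrument"),
    Entry("ExampleAmp", "effect"),
]
choices = playable(chooser)
```

So if a synth shows up twice in a host's chooser and you want to sequence it, the 'instrument' entry is the one to pick.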

There's still a role for Audiobus, no doubt. But another huge game has just rolled into town.