It’s useful to be able to work on headphones even when mixing immersive audio. Does Genelec’s Aural ID system make this easier?

Headphones remove the environment from the monitoring experience. Binaural encoding can put it back. That, at least, is the theory behind the many ‘virtual control room’ plug‑ins that are intended to create the illusion of being in a world‑class studio and not a bedsit in Dudley. It’s a nice idea, but in practice, you don’t usually have to open your eyes to realise that you’re not actually sat at the console in Abbey Road.

Binaural encoding works by applying a set of filters called a head‑related transfer function (HRTF). These recreate the complex effects imposed by the outer ear, head and shoulders on sound arriving at the ear from a distance, which are otherwise missing in headphone listening. One reason why binaural encoding doesn’t always convince is that no two ears, heads or shoulders are quite the same shape. Binaural encoders typically use a generic HRTF that represents the ‘average person’, but some of us are more average than others! The ideal solution is to measure the characteristics of the individual listener and calculate a personalised HRTF. Historically, though, this hasn’t been practical, because the measurement process required the use of an anechoic chamber.
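In signal‑processing terms, applying an HRTF for one source direction means filtering the signal separately for each ear. The minimal Python sketch below does this in the time domain, by convolving a mono signal with a left‑ear and a right‑ear head‑related impulse response (HRIR, the time‑domain form of the HRTF). The three‑tap responses are invented toy values chosen only to show an interaural time and level difference; real HRIRs run to hundreds of taps and differ for every direction.

```python
from typing import List, Tuple

def convolve(signal: List[float], ir: List[float]) -> List[float]:
    """Direct-form linear convolution; output length is len(signal) + len(ir) - 1."""
    out = [0.0] * (len(signal) + len(ir) - 1)
    for n, x in enumerate(signal):
        for k, h in enumerate(ir):
            out[n + k] += x * h
    return out

def binaural_encode(mono: List[float],
                    hrir_left: List[float],
                    hrir_right: List[float]) -> Tuple[List[float], List[float]]:
    """Place a mono source at one direction by filtering it with that
    direction's left-ear and right-ear impulse responses."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)

# Hypothetical toy HRIR pair for a source off to the listener's left:
# the left ear hears it earlier and louder, the right ear later and quieter.
HRIR_LEFT = [1.0, 0.3, 0.0]
HRIR_RIGHT = [0.0, 0.5, 0.2]

if __name__ == "__main__":
    click = [1.0, 0.0, 0.0, 0.0]
    left, right = binaural_encode(click, HRIR_LEFT, HRIR_RIGHT)
    print(left)   # [1.0, 0.3, 0.0, 0.0, 0.0, 0.0]
    print(right)  # [0.0, 0.5, 0.2, 0.0, 0.0, 0.0]
```

Swapping a generic HRIR pair for a personalised one changes only the filter coefficients; the processing itself stays the same.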

Genelec changed this three or four years ago by using advanced machine‑learning techniques to derive accurate head‑related transfer functions from images: specifically, from a video of the relevant anatomical details. Users could upload a suitable movie from their smartphones, have it analysed, and download a file encapsulating their individual head‑related characteristics. When it was first introduced, however, Genelec’s Aural ID seemed a bit like a technology in search of an application. It generated files in the SOFA format, which is intended as an open standard for head‑related transfer functions, but Genelec themselves didn’t offer any software that could put these files to good use, and nor, on investigation, did many other developers.

Since that launch, two things have changed. The explosive growth of immersive audio has created new use cases for binaural headphone monitoring, and Genelec have introduced an Aural ID plug‑in.

Ears On Film

The system that Genelec have come up with for generating a personalised HRTF is not difficult to navigate, but it is quite exacting. You’ll need to recruit a willing helper to shoot the video, which has to be shot in a suitably bare space with decent lighting; there are even recommendations as to what sort of clothes the subject should be wearing. There needs to be enough space for the person filming to walk right round his or her victim at a distance of a metre or two, which rules out quite a few domestic rooms.

Recording, upload and payment are handled through the free Aural ID Creator app, which is available for iOS and Android. Once the video is accepted, Genelec’s servers can take up to two days to crunch the data, whereupon you can log into your Genelec Community account from a desktop computer, install the plug‑in and download your personal Aural ID profile. Multiple profiles can be stored locally, for situations where more than one user needs access to Aural ID, and you also get a generic profile derived from a KEMAR HATS (head and torso simulator).

On a superficial level, the Aural ID plug‑in appears similar in concept to Embody’s Immerse Virtual Studio Alan Meyerson Signature Edition, reviewed in SOS June 2022 and undisputed holder of the award for longest plug‑in name ever. However, appearances are deceptive in this case. Both use custom HRTFs to place the listener within a virtual surround array, and both incorporate equalisation designed to ‘tune’ the effect for specific headphone models; but beyond that, the two companies’ approaches are actually quite different.

Embody’s plug‑in is designed to recreate the experience of sitting in a specific real‑world monitoring environment, namely Alan Meyerson’s mix suite at Remote Control Studios in Santa Monica. This environment is recreated using impulse responses recorded at the listening position, which have the imprint of the room acoustics and speaker characteristics baked into them. By contrast, Aural ID synthesizes rather than samples its virtual monitoring environment. Although this is set up by positioning virtual loudspeakers within a 360‑degree sphere, there’s no attempt to model the actual characteristics of real‑world speakers, nor the acoustics of a control room. What you’re listening to, in effect, is a number of ideal point sources placed within an ideal non‑reflective space.
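Structurally, a virtual array of this kind can be sketched as a sum: each speaker feed is filtered by the HRIR pair for that speaker's direction, and the results are added per ear. The Python sketch below assumes anechoic HRIRs, matching the idea of ideal point sources in a non‑reflective space; a sampled approach would use the same summation, but with impulse responses that also carry the room and the speakers. The channel names and impulse responses are hypothetical placeholders, and this is not a description of either product's actual code.

```python
from typing import Dict, List, Tuple

HrirPair = Tuple[List[float], List[float]]  # (left-ear IR, right-ear IR)

def convolve(signal: List[float], ir: List[float]) -> List[float]:
    """Direct-form linear convolution."""
    out = [0.0] * (len(signal) + len(ir) - 1)
    for n, x in enumerate(signal):
        for k, h in enumerate(ir):
            out[n + k] += x * h
    return out

def accumulate(dest: List[float], src: List[float]) -> None:
    """Add src into dest sample by sample (dest must be at least as long)."""
    for i, v in enumerate(src):
        dest[i] += v

def render_virtual_array(channels: Dict[str, List[float]],
                         hrirs: Dict[str, HrirPair]) -> Tuple[List[float], List[float]]:
    """Binaural mix of a speaker array: each feed is filtered by the HRIR pair
    for its speaker's direction, then summed per ear. With anechoic HRIRs there
    are no room reflections, only direct sound from ideal point sources."""
    length = max(len(sig) + max(len(hrirs[name][0]), len(hrirs[name][1])) - 1
                 for name, sig in channels.items())
    left = [0.0] * length
    right = [0.0] * length
    for name, sig in channels.items():
        hrir_left, hrir_right = hrirs[name]
        accumulate(left, convolve(sig, hrir_left))
        accumulate(right, convolve(sig, hrir_right))
    return left, right

# Hypothetical two-speaker example with mirrored toy HRIRs.
HRIRS: Dict[str, HrirPair] = {
    "L": ([1.0, 0.0], [0.0, 0.5]),
    "R": ([0.0, 0.5], [1.0, 0.0]),
}
```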

Another interesting difference is that Embody generate a personalised HRTF from a single photo of one ear, whereas Aural ID requires a detailed video lasting several minutes, which presumably involves processing a lot more information. A direct comparison would be fascinating — but unfortunately, both plug‑ins now store this data in proprietary formats, so Embody’s HRTFs cannot be loaded into Aural ID, nor Genelec’s into Immerse VR. (Buying a yearly subscription to Aural ID still gives you the option to download the data in SOFA format, though, so if you can find a suitable host plug‑in, you could in theory continue to use the Genelec personalised HRTF after your Aural ID subscription has lapsed.)

In The Cells

The Aural ID plug‑in interface will feel very familiar to anyone who’s used Genelec’s GLM speaker management system. The speaker layout is displayed on a web of hexagonal cells, with height speakers distinguished by a slightly different colouring. No fewer than 18 different layouts are supported at present, from mono up to two different 7.1.4 arrangements. As with Immerse VR, the second of these is necessitated by Apple’s use of a non‑standard channel mapping in the Atmos implementation within Logic; at the time of writing, Aural ID hadn’t caught up with the new and equally non‑standard mapping in Logic 10.7.3, but it’s possible to reconfigure this easily enough.

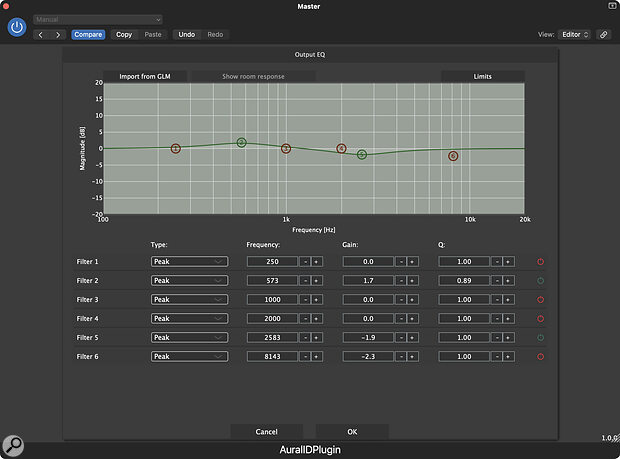

Embody make a selling point out of the fact that their headphone ‘tuning’ is done by ear rather than through measurement. By contrast, I presume Aural ID’s frequency correction is measurement‑based. At present, though, it supports only eight headphone models, plus a generic curve labelled ‘Other’. I don’t own any of the models on the list, but I do have several pairs that I consider pretty neutral and not really in need of correction, so it’s frustrating that this section of the plug‑in can’t simply be switched off. If you do feel the need to adjust the frequency response manually, there’s also a comprehensive EQ section; interestingly, this can even import the ‘after’ curve from GLM room correction, for situations where you want Aural ID to match the sound of your space.

A comprehensive equaliser lets you massage the overall tonality of the results.

Individual speakers within the web can be muted by Shift‑clicking or soloed by Ctrl‑clicking. This is invaluable during the setup process, and if I have a single feature request for a future version, it would be the option to have these actions automatically mirrored so that, for example, soloing the Lrs speaker would also solo the Rrs speaker. Each speaker, apart from the subwoofer, also has editable Azimuth and Elevation parameters, which allow you to determine exactly where in the 360‑degree soundfield it should appear. These are important for several reasons. Different conventions for surround monitoring specify different speaker angles, and you might also want to match a real‑world speaker setup that doesn’t quite accord with any convention. You may well also find that a particular angle in the real world is subjectively better matched by a slightly different angle in Aural ID.
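The Azimuth and Elevation parameters describe a direction on a sphere around the listener. One simple way a renderer might turn such a setting into filters is to convert it to a unit vector and pick the nearest direction in a grid of measured HRTFs, as in the Python sketch below. The coordinate convention (azimuth measured anticlockwise from straight ahead, elevation upwards, which is the convention SOFA files use for spherical coordinates) and the measurement grid are assumptions for illustration; how Aural ID actually selects or interpolates its filters is not stated here.

```python
import math
from typing import List, Tuple

Direction = Tuple[float, float]  # (azimuth_deg, elevation_deg)

def to_unit_vector(azimuth_deg: float, elevation_deg: float) -> Tuple[float, float, float]:
    """Assumed convention: x points ahead, y to the left, z up; azimuth is
    measured anticlockwise from straight ahead, elevation upwards."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(el) * math.cos(az), math.cos(el) * math.sin(az), math.sin(el))

def angle_between(a: Direction, b: Direction) -> float:
    """Great-circle angle in degrees between two directions."""
    va, vb = to_unit_vector(*a), to_unit_vector(*b)
    dot = sum(p * q for p, q in zip(va, vb))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))  # clamp rounding error

def nearest_measured(target: Direction, grid: List[Direction]) -> Direction:
    """Pick the measured HRTF direction closest to the requested speaker position."""
    return min(grid, key=lambda d: angle_between(target, d))

# Hypothetical, deliberately sparse measurement grid (azimuth, elevation) in degrees.
GRID: List[Direction] = [(0.0, 0.0), (30.0, 0.0), (-30.0, 0.0), (110.0, 0.0),
                         (-110.0, 0.0), (45.0, 45.0), (-45.0, 45.0)]

if __name__ == "__main__":
    print(nearest_measured((135.0, 0.0), GRID))  # (110.0, 0.0)
```

Practical renderers usually interpolate between neighbouring measurements rather than snapping to the nearest one, so that every change to an Azimuth or Elevation setting moves the virtual speaker smoothly.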

Clear Identity

Underlying the different approaches taken by Embody and Genelec are contrasting ideas about what these plug‑ins are for. With typical Californian optimism, Embody suggest that Immerse VR and a decent pair of headphones are all you need to mix immersive audio successfully: that a virtual control room is, if not as good as the real thing, at least good enough. Genelec’s claims are much more cautious. They see Aural ID primarily as a tool that working professionals can use to augment a real Atmos mixing environment, by allowing them to continue to work on projects away from the studio. I am pretty sure Genelec would never recommend submitting an Atmos mix for release that was done only using Aural ID, without at least checking it on a physical surround monitoring array.

If you want to dive really deep, the Controls view presents every single adjustable parameter within the Aural ID plug‑in, totalling many hundreds.

Ideally, then, you’d set up Aural ID for your own personal use by A/B’ing with your own speaker‑based rig, using the Azimuth and Elevation controls to match the subjective position of each speaker. This is best done using a source such as spoken word, and having briefly tried it in a Genelec‑approved Atmos space, I can confirm that it makes a big difference to the results. Not having an Atmos monitoring setup of my own, though, what I can’t say for sure is exactly how good real‑world mix translation between Aural ID and such a space can be.

Using Aural ID on its own certainly suggests that it will prove a useful resource. It produces a powerful sense of spatialisation, and does a surprisingly convincing job of placing sources behind or above the listener. Compared with Immerse VR, this positioning seems less dependent on motion and context: you can use static pan settings to push something overhead or behind you, and the effect is somewhat retained even in solo. Again, optimising the Azimuth and Elevation settings makes a real difference here. When I switched back to the binaural encoding built into the Dolby Atmos Renderer, I found that things sounded rather flat, as though the sound was being projected on a wall in front of me rather than enveloping my head — though it’s hard to perform a fair A/B comparison, partly because the switch can’t be made instantly and partly because other variables such as the undefeatable headphone correction in Aural ID tend to introduce small level and tone differences.
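Level differences of this kind are at least easy to take out of an A/B comparison. A minimal Python sketch, assuming you have captured the same passage through both monitoring chains as lists of samples: measure the RMS level of each and apply the difference as a gain. Plain RMS is only a rough proxy for loudness (a weighted measure such as ITU‑R BS.1770 would be better), and this does nothing about tonal differences.

```python
import math
from typing import List

def rms_db(samples: List[float]) -> float:
    """RMS level in dB relative to full scale (1.0)."""
    if not samples:
        raise ValueError("need at least one sample")
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square) if mean_square > 0 else float("-inf")

def matching_gain_db(reference: List[float], candidate: List[float]) -> float:
    """Gain in dB that brings the candidate's RMS level to the reference's."""
    candidate_db = rms_db(candidate)
    if math.isinf(candidate_db):
        raise ValueError("candidate is silent")
    return rms_db(reference) - candidate_db

def apply_gain(samples: List[float], gain_db: float) -> List[float]:
    """Scale every sample by a gain given in dB."""
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in samples]
```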

Aural ID is not ‘plug and play’, and getting the best from it requires a significant investment in terms of setup time. It also requires a significant investment in financial terms: there’s no getting away from the fact that this is a costly tool. Anyone who is merely curious about immersive audio will probably baulk at a subscription price that values a single plug‑in on the same level as, say, Pro Tools Studio. But I do think it’s fair to suggest that Genelec’s Aural ID represents the state of the art when it comes to immersive monitoring on headphones. And if you’re doing paid work at the level where you can justify spending tens of thousands on a 7.1.4 Atmos system, the additional expense could well be worthwhile, especially if you don’t have 24/7 access to that system.

Pros

- Probably the most convincing binaural spatialisation tool yet.

- Can generate a custom HRTF from video footage.

- Virtual loudspeaker array is highly configurable.

Cons

- Expensive.

- Headphone correction only supports eight models, and can’t be defeated.

Summary

Though it’s currently priced beyond the reach of all but serious professionals, Genelec’s Aural ID technology is an impressive and potentially very useful adjunct to a speaker‑based immersive monitoring system.

Information

€49 per month or €490 for the first year, €249 for the second and €149 thereafter. Prices include VAT.

Source Distribution +44 (0)208 962 5080
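The yearly pricing is easier to compare with the monthly option when totalled over several years. The Python sketch below assumes unbroken renewal at the listed prices, which may of course change.

```python
MONTHLY_EUR = 49
YEARLY_EUR = [490, 249]  # listed prices for the first and second years
LATER_YEARS_EUR = 149     # listed price for every year after the second

def yearly_plan_total(years: int) -> int:
    """Cumulative cost of the yearly plan, assuming unbroken renewal at listed prices."""
    return sum(YEARLY_EUR[y] if y < len(YEARLY_EUR) else LATER_YEARS_EUR
               for y in range(years))

def monthly_plan_total(months: int) -> int:
    """Cumulative cost of the monthly plan at the listed rate."""
    return MONTHLY_EUR * months

if __name__ == "__main__":
    for years in (1, 2, 3, 5):
        print(years, yearly_plan_total(years), monthly_plan_total(12 * years))
```

On these assumptions, three years on the yearly plan comes to €888, compared with €1,764 for 36 months at the monthly rate.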
