Extracting a groove from a loop in Studio One is easy. As you can see, the vertical lines within the box at top centre are no longer regular, but correspond to the strong beats within my loop.

We explore real-world uses for Studio One's tempo-tweaking tools.

There was a time when the basic elements of a song were things you could build upon, but which would have to remain pretty much as they were once established: a beat, a vocal recording, a chord structure, and so on. But what's been interesting me recently is the ability we now have to fundamentally change those raw materials. I'd like to say that this is in the endeavour of creative music production, but more often than not, it's in the process of trying to rescue something. In this month's poke at Studio One I'm going to use my own gripping story of disaster and rescue to highlight the fabulous tools it has for finding beats, extracting grooves and regrooving the fundamentals of your track to find a path out of production dead ends.

My story begins with the desire to produce a definitive version of a song used in live performance. I'd put together a skeleton arrangement and found a super drum loop to build the track around. I then grabbed the opportunity to work with a passing vocalist, and was able to record some pretty great vocals alongside the groove. Then the work began.

Extracting A Groove

The drum loop had a powerful lilt and vibe to it, and I had great difficulty fitting other virtual instrument parts into that groove. My playing was not really up to the task, and quantising seemed to take things far away from what I was trying to achieve. This is where the wonderful world of groove extraction comes into play.

Hit the 'Q' in the Studio One toolbar to open the Quantize panel. In conventional quantising, you deal with the Grid view, where settings for note lengths, resolution, swing and so on keep everything on the same grid. Click on Groove, however, and you're given a bunch of evenly spaced lines in a long rectangular box. This is where the magic happens. Let's say you have a loop on the timeline that you are trying to get everything else to sync up to. Simply drag and drop this loop into the rectangular box, and Studio One will perform beat and transient detection to pull the groove from the audio into the quantising engine — you'll see that the lines in the rectangular box are no longer evenly spaced. You can now apply this groove to all the other MIDI and audio tracks in your project.
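For readers who like to see the idea spelled out, here is a minimal Python sketch of what quantising to an extracted groove amounts to: each note snaps to the nearest of a set of unevenly spaced lines rather than to a regular grid. This is an illustration only, not Studio One's actual algorithm, and the groove positions, the one-bar pattern length and the function name are all made up for the example.

```python
def quantise_to_groove(onsets, groove, bar_length=4.0):
    """Snap each onset (in beats) to the nearest groove line.

    `groove` lists line positions within one bar, in beats, and is
    assumed to repeat every `bar_length` beats (an assumption made
    for this sketch).
    """
    if not groove:
        raise ValueError("groove needs at least one line")
    lines = sorted(groove)
    # Include the first line of the following bar, so that a late note
    # near the end of a bar can snap forwards across the bar line.
    candidates = lines + [lines[0] + bar_length]
    snapped = []
    for t in onsets:
        bar, pos = divmod(t, bar_length)
        nearest = min(candidates, key=lambda g: abs(g - pos))
        snapped.append(bar * bar_length + nearest)
    return snapped


# Hypothetical groove: off-beats pushed late, as in a lilting loop.
groove = [0.0, 0.58, 1.0, 1.58, 2.0, 2.58, 3.0, 3.58]
played = [0.05, 0.5, 1.1, 3.9, 4.45]
print(quantise_to_groove(played, groove))
```

The note played squarely on the off-beat at 0.5 ends up on the late groove line at 0.58, which is exactly the kind of shift a regular grid with swing struggles to reproduce.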

There are two ways the groove can be applied to your other tracks. One is to apply it to notes or audio within an event, which is probably how you'd expect it to be done. The other way is to apply it to the starting point of individual events on the track. So, for example, you may have dragged in a single kick‑drum sample that you want to duplicate and place at the same points as the kick in the original loop. Applying the quantise to the track would shift all the placed kick sample events onto the groove. The button to switch to track mode can be found on the far right of the Quantize panel.

With my groove extracted, I could now start applying it to my virtual instrument parts, but then I hit another problem. Arpeggiation and sequence modulation within virtual instruments weren't party to my groove quantisation template, and although I could add swing, this wouldn't approximate the groove in my loop. The workaround was to record the output of the NoteFX Arpeggiator into the track and then quantise it. This is easily done once you've recorded the chords you are using to trigger the arpeggiator. Right-click the event, select Instrument Parts and then Render Instrument Tracks. The term 'render' tends to make you assume that you're about to render the event out to audio, but in this case, it pulls in the note events from the NoteFX plug-in and commits them to the event as MIDI notes.

The track in my story was taking shape, but felt like it was being pushed around by this drum loop. The synth bass part that was following the groove was sounding a bit weird, and so I decided to borrow a bass player to put some humanity into the bottom end. The part worked well with the vocals and drum loop, and I thought I had cracked it, but the way the groove was dominating and pushing the other instruments into weird places still bothered me.

I persisted with the mix and started to work on the drum loop to flesh it out and create some variations, and that was when things started falling apart. The loop had a glitch which, initially, I'd seen as being part of its character, but the more I worked with it, the more annoying it became. The groove itself seemed to be impossible to replicate, slice or re-order in any way that worked musically. The realisation slowly dawned that this loop was becoming a dead horse and it was time to stop flogging it. I had some great vocals and a decent bass performance, but they were wired to a groove that I had started to loathe. In days gone by you would write the track off and start again, but armed with Studio One's transient detection and Melodyne integration, I could manipulate the audio into a whole other groove.

Grabbing a section, I muted the offending drum loop and the other audio tracks, generated a quick Impact XT pattern in the step sequencer and, with a little bit of swing on the quantise, all the other instrument tracks pulled themselves together nicely. Fabulous! Right, now to pull in the bass and vocal tracks... which both sounded terrible. The void between the original groove and my new straightforward beat was vast and seemingly insurmountable. But I was not about to let it drop...

Transient Detection

Having run Detect Transients on my bass part, I now have Bend Markers that can be quantised or moved manually.

Studio One is very good at detecting transients, the short-duration peaks that represent the non-harmonic attack phase of a musical sound or spoken word (or, for my purposes here, the beginning of each recorded bass note). To detect the transients in the bass track, you have to get Studio One to analyse the contents. There are a few ways you can do this, but I'm a fan of using right-click whenever possible, so that I'm completely sure which event I'm asking it to do things on. From the right-click menu, select Audio, and then under Audio Bend, select Detect Transients. Blue lines then appear in the audio clip showing (hopefully) the start of each note. These are called Bend Markers and we use them to quantise the start of the notes by stretching and compressing the length of the audio.
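If you're curious what transient detection involves under the bonnet, the sketch below shows one very simple approach in Python: chop the audio into short frames and flag any frame whose energy jumps sharply compared with the one before. I don't know how Studio One actually does it, and it is certainly more sophisticated than this; the function name, frame size and thresholds are assumptions chosen for the example.

```python
import math


def detect_transients(samples, sample_rate, frame=256, rise=4.0,
                      floor=1e-4, min_gap=0.05):
    """Return onset times in seconds where frame energy jumps sharply.

    All thresholds are illustrative guesses, not values from any DAW.
    """
    # Mean energy of each non-overlapping frame.
    energies = [
        sum(x * x for x in samples[s:s + frame]) / frame
        for s in range(0, len(samples) - frame + 1, frame)
    ]
    onsets = []
    # The first frame has nothing to compare against, so start at 1.
    for i in range(1, len(energies)):
        jumped = energies[i] > rise * max(energies[i - 1], floor)
        t = i * frame / sample_rate
        # min_gap stops one attack being reported on two adjacent frames.
        if jumped and (not onsets or t - onsets[-1] >= min_gap):
            onsets.append(t)
    return onsets


# Synthetic test signal: one second of silence with two decaying
# 220 Hz "notes" starting at 0.25 s and 0.75 s.
sr = 8000
signal = [0.0] * sr
for start in (0.25, 0.75):
    first = int(start * sr)
    for n in range(int(0.3 * sr)):
        if first + n < len(signal):
            signal[first + n] += (math.sin(2 * math.pi * 220 * n / sr)
                                  * math.exp(-20 * n / sr))
print(detect_transients(signal, sr))
```

The reported times are only accurate to the nearest frame (32ms here), which is one reason a real detector, and a human with the Bend Tool, will often place markers more precisely.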

Once these are detected, we can now apply our quantise grid and Studio One will shift the Bend Markers around to fit with the timing. You can skip the transient detecting part, because applying quantisation to an audio event will trigger the transient detection automatically, but I prefer to do this as a separate stage so that I can undo and come back to a point where I can edit the Bend Markers directly if the quantising doesn't quite go according to plan.

The bass track in my story was a case in point: it had no intention of following any kind of plan, and whatever strength of quantisation I applied, notes moved either not at all or entirely in the wrong direction. To begin re‑grooving the bass line manually you have to open the editor, select the Bend Tool and start snapping the notes to your new grid with the mouse. Tackling a few bars at a time with the new drum pattern playing along, I could very quickly bring the bass into line.
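A rough Python sketch helps show why automatic quantising can go wrong here. It assumes, and this is my assumption rather than anything documented, that each Bend Marker is moved some fraction (the strength) of the way towards its nearest grid line, and that the audio between neighbouring markers is then stretched or squeezed to fit. The function names and the example positions are hypothetical.

```python
def quantise_markers(markers, grid, strength=1.0):
    """Move each marker a fraction `strength` towards its nearest grid line.

    Positions are in beats; strength runs from 0.0 (no change) to 1.0
    (hard quantise).
    """
    moved = []
    for m in markers:
        target = min(grid, key=lambda g: abs(g - m))
        moved.append(m + strength * (target - m))
    return moved


def stretch_ratios(original, moved):
    """New length divided by old length for each segment between markers.

    Above 1.0 the audio is stretched, below 1.0 it is compressed.
    """
    return [
        (moved[i + 1] - moved[i]) / (original[i + 1] - original[i])
        for i in range(len(original) - 1)
    ]


grid = [0.0, 1.0, 2.0, 3.0, 4.0]      # straight quarter notes
bass = [0.0, 0.9, 2.2, 3.0]           # hypothetical detected note starts
snapped = quantise_markers(bass, grid, strength=1.0)
print(snapped)
print(stretch_ratios(bass, snapped))

# A note played very late against beat 1 sits closer to beat 2, so
# lowering the strength only moves it less far in the wrong direction.
print(quantise_markers([1.6], grid, strength=0.5))
```

Under those assumptions, changing the strength never changes which grid line a note is pulled towards, which would explain why a part recorded against a very different groove needs its markers dragged into place by hand.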

Melodyne

Tackling the vocals was a different matter. Studio One uses the zplane Elastique Pro time-stretching engine to stretch the audio between Bend Markers, and it's excellent in most cases. With vocals, however, there can be different things going on like vibrato, pitch slides and performance elements that need a bit more consideration. For this the ARA-integrated Melodyne is the perfect solution. Right-click the audio event, choose Audio and then Edit with Melodyne under Audio Processing.

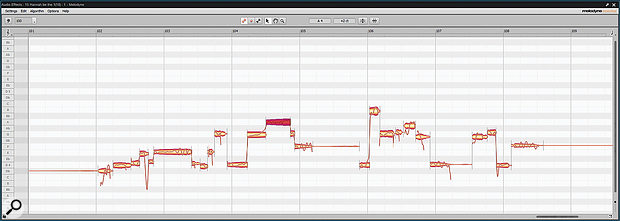

Melodyne isn't only useful for correcting pitch: it is also a powerful tool for modifying timing.

Melodyne is still a wonder to behold as it pulls out the notes and displays those orange-shaded blobs of data. As you can see in the screenshot, none of them were on the grid; I didn't necessarily want everything hard quantised, but the unprocessed vocals sounded like they were from a completely different song, which I guess they were. Manually editing in Melodyne gives you the opportunity to adjust and tailor the performance to something that better fits your project. I tackled a handful of bars running with my guiding drum pattern and newly bent bass line to ensure it was hitting the beat in all the right places. After a little bit of tweaking I brought back in the rest of the arrangement and it sounded phenomenal. I felt physically uplifted by the way it all hung together. Weird as it may sound, this track only really found its soul when it was released from the loop on which the whole thing was based.

I got stuck into the rest of the bass track and the vocal track and the four harmony tracks. Then other options opened up like arpeggiation, sequenced modulation and tempo‑based delay effects. It took a few hours of editing, but when I came back to it the next day it felt so fresh and exciting that it was definitely worth the effort. This ability to quantise audio, to bend audio and stretch the timing of vocals is amazing. It rescued a track that had become unworkable and found an entirely new groove.