Korg's Z1, like all modelling synths, requires masses of DSP horsepower.

Physical Modelling and Virtual Synthesis have been buzzwords for several years now, especially when it comes to imitating analogue synthesis. But what are their advantages and disadvantages, and how do they work? Paul Wiffen explains. This is the ninth article in a 12‑part series.

About 12 years ago, I was taken by a guy, with whom I was working on an Atari sampler, to the engineering lab where he moonlighted as a Cambridge research doctor in DSP for audio. There, from a computer which filled half a decent‑sized room, I was played a series of brass and woodwind sounds which I assumed to be samples. They certainly had an authenticity I had previously only heard from sampling. But the more I listened, the more admiration I had for the guy who had made the multisamples. I couldn't hear the loops, nor the points up and down the range where one sample stopped and the next one started. I knew he couldn't have used positional crossfading, because that always gives a 'doubled', chorus‑like effect. What's more, sometimes the effect of velocity (from the MIDI master keyboard being used to trigger the sounds) changed the sound subtly, in a way that velocity crossfades could not. I was flummoxed. "How's it being done?" I asked. "Physical modelling," came the reply. "One day all synthesis will be done like this!"

Two years later, at a US NAMM music fair, I was helping out on the stand of my room‑mate in California. My main contribution had been to use a Roland MC500 to sequence the backing for his demonstrator, ex‑Berlin guitarist Dave Diamond, and sync it to the PPG HDR, the world's first stand‑alone hard disk recorder, so that Dave had something to record his guitar and vocals alongside. I had assumed that the HDR ($17,000 with an 85MB hard drive) was the most advanced piece of technology I was going to see during that show, but when the German designer, Wolfgang Palm, emerged from the internal booth, saying "Andy, I think I have it working again," we all huddled inside to hear an even bigger and more expensive box do a passable imitation of a Minimoog. Then he flipped a switch and it produced the kind of non‑electric piano which only FM can be responsible for. The box was called the Realizer and when I asked how it was being done, the dour German replied "Virtual Synthesis." It took me years to equate this with what I'd heard two years before in Cambridge.

So before we go any further, let's just make this clear: Virtual Synthesis is another name for Physical Modelling. One term describes where it is done, the other how, but the procedure is the same. So don't let any boffins or, worse still, marketing men, hoodwink you — they are two terms for one technology.

But what is the technology, exactly? Again, you may well receive several different answers depending on who you ask. Here are just a few of them: "masses and masses of DSP horsepower"; "software models of the way real instruments produce their sound"; "built‑in DSP FX taken to its logical conclusion"; and "the sonic equivalent of virtual reality". The trouble is that there is an element of truth in all of these. It does take a huge amount of digital signal processing to undertake realistic physical modelling. The software involved does attempt to recreate the way sounds are made in the real world. Instead of just changing the basic sounds through effects processing, the sound is created from scratch by the same sort of chips which have been producing the effects in synthesizers for years. And the level of realism involved these days beats anything I have seen on a virtual reality system into a cocked hat.

She's A Model...

Let's return to first principles and the word 'modelling', because this is the key to the technology. All the other methods of synthesis we have looked at over the last year have one thing in common: the parameters involved with each type of synthesis don't change depending on the type of sound you're trying to get. There's a filter attack parameter on an S&S (Sample & Synthesis) synth whether you're trying to produce a piano, strings, or a synth bass. There are harmonic levels on an additive synth whether you're making a brass sound or a harpsichord. The wave sequencing parameters on a Wavestation are always there, whether you use them or not!

The same is not true of a current multi‑model synthesizer such as the Korg Prophecy/Z1 or a Yamaha VL‑series synth. Look for the same parameters you used to make a flute sound when using the Bowed String model and you'll be out of luck: the parameters change depending on the model you have selected. This is why the time it takes to change patches on a modelling synth is often perceptible: so many different parameters need to be broken down and re‑configured. Often, when you change models, you are quite literally changing synths. This can make physical modelling as a method of synthesis challenging to define, which is why the DSP effects analogy is useful. We expect the parameters to change when we switch a multi‑effects unit from reverb to flanging or distortion; the multi‑modelling synth is the same, only more so. Think of changing from a tenor sax to a soprano as akin to changing from a hall reverb to a room; changing to a violin is like selecting a phaser effect instead. The only real difference is one of scale: the amount of DSP power in a modelling synth is greater by at least one or two orders of magnitude.
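
To make the point about changing parameter sets concrete, here is a minimal Python sketch. The model names and parameter names are invented purely for illustration and are not taken from any Korg or Yamaha documentation; the only idea being shown is that selecting a different model replaces the whole parameter list.

```python
from dataclasses import dataclass, field

# Hypothetical parameter sets: these names are illustrative only.
MODEL_PARAMETERS = {
    "flute": ["breath_pressure", "embouchure", "bore_length"],
    "bowed_string": ["bow_pressure", "bow_speed", "bow_position"],
}


@dataclass
class Patch:
    model: str
    values: dict = field(default_factory=dict)

    def __post_init__(self):
        # Each model brings its own parameter list, all starting at 0.0.
        self.values = {name: 0.0 for name in MODEL_PARAMETERS[self.model]}

    def set_parameter(self, name, value):
        # A parameter only exists if the current model defines it.
        if name not in self.values:
            raise KeyError(f"{self.model!r} model has no parameter {name!r}")
        self.values[name] = value

    def change_model(self, model):
        # Switching model throws away the old parameter set and builds a new one.
        self.model = model
        self.values = {name: 0.0 for name in MODEL_PARAMETERS[model]}
```

In this sketch, a patch that starts life as a flute loses its breath parameters the moment it becomes a bowed string, which is roughly the reconfiguration a multi‑model synth has to perform on every model change.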

However, this really doesn't help you understand how physical modelling does what it does, in the same way that most people don't have any idea how DSP is used to create effects. In fact, the principle is the same as with digital reverb. The designer attempts to work out what happens in the real world, and then uses mathematical calculations to attempt to recreate this in software. The degree of realism achieved depends on two things: how accurate his analysis or 'model' of what happens in the real world is, and how closely the DSP algorithms he then writes reproduce this analysis. If the designer has misunderstood how the sound is produced in the real world, then, however good his DSP code is, it's unlikely that he'll make a very realistic‑sounding reverb or plucked string instrument (although he may create some great new effect or sound which can't be produced in the real world). On the other hand, however great his understanding of the processes involved, if he doesn't have the necessary DSP horsepower to hand he may get into the right ballpark, but he isn't going to fool anyone that this is a real hall or a real guitar. For this reason, I still haven't heard a halfway decent model of a grand piano, because it's still prohibitively expensive to provide the amount of DSP power needed to recreate what's going on inside a 9ft Bösendorfer (even after you've spent a lifetime analysing exactly what that is). I would hesitate to say that it will never happen, but I think we're probably still a few years away from a great physical model of an acoustic piano. (However, the rate of acceleration of technology we're currently experiencing, coupled with the falling price and increasing power of DSP chips, might make it sooner than I think!)

Analysing Analogue

Roland's DSP‑based JP8000 modelling synth (top) offers much of the look and feel of the analogue Jupiter 8 (above).

The easiest instrument for the programmer to figure out, with a view to recreating what's going on inside it, is the analogue synthesizer. The reason for this is that these instruments were designed by people who knew the physics of what they were creating (unlike Stradivarius, who refined his craft empirically — by observation and experimentation). It's thus easy to break the analogue synthesis system down into components for recreation in software, and cheaper to provide the necessary DSP power to do this. So even if the modelling synth programmer doesn't know how an analogue filter works, he can get a book on filter design and read up on it. He can then recreate this with a fairly limited amount of DSP. This explains why we currently have more affordable modelling analogue synths on the market, but also why their polyphony is fairly restricted (at least in comparison to today's PCM‑based synths, if not to the original analogue machines on which they are 'modelled'!). Once you move into the recreation of acoustic instruments such as brass and strings, however, as with Korg's MOSS system (Prophecy/Z1) and Yamaha's VL multi‑modelling synths, things get a lot more complicated and therefore a lot more expensive (or a lot less polyphonic, to save money).

I'm going to leave the modelling of acoustic instruments until next time, and concentrate this month on the advantages that the modelling of analogue synthesis gives. First, I'll make sure that everyone has understood exactly how the model works. The designer starts by analysing how the analogue synth breaks down into its component sections — oscillators, filters, envelopes, and so on. If you don't know this stuff by now, you've clearly not been paying attention. But worry not — you can re‑enrol in Synth School simply by contacting our back issues department and re‑ordering the June and August 1997 issues of SOS, which dealt with the components of an analogue synth.

Once the designer has a block diagram of an analogue synth in his head, he simply goes about replacing each component section with a software engine to accomplish the same task. In fact, this has been happening to analogue‑style synthesizers over the last 20 years anyway. First, synths like the EDP Wasp, the OSC OSCar, the Elka Synthex and the Korg Poly 61 replaced analogue oscillators with digital ones (with greater or lesser success). Instead of the waveform being produced by analogue components, it was read out through a D‑A (Digital‑to‑Analogue) converter as a string of numbers which acted in roughly the same way (the degree of roughness tended to determine how good the machine sounded), before being fed through an analogue filter. Many envelopes were generated digitally from right back in the early 1980s, but it took until the end of the '80s before analogue filters were replaced with digital ones, Roland and Emu being amongst the first to achieve respectable sounding digital versions of this central component of analogue synthesis.
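
For readers who like to see the idea of a waveform as 'a string of numbers' spelled out, the following Python sketch shows a very basic table‑reading oscillator. It is a generic illustration, not the design of any of the synths named above: the table size, the function names and the lack of interpolation are all assumptions made to keep the example short.

```python
def make_sawtooth_table(size=256):
    """One cycle of a rising sawtooth stored as a list of numbers in [-1, 1)."""
    return [2.0 * i / size - 1.0 for i in range(size)]


def read_oscillator(table, frequency, sample_rate, num_samples):
    """Step through the table at a rate set by the pitch, one value per sample."""
    phase = 0.0
    step = frequency * len(table) / sample_rate  # table positions per output sample
    out = []
    for _ in range(num_samples):
        out.append(table[int(phase)])            # nearest-lower entry, no interpolation
        phase = (phase + step) % len(table)      # wrap round at the end of the cycle
    return out
```

In a real instrument each of these numbers would be handed to the D‑A converter in turn. A short table read without interpolation, as here, is one plausible source of the 'roughness' mentioned above.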

However, these digital replacements still tended to be implemented in 'discrete' circuitry. You could still point to the bit of the circuit board where the filtering was done or the waveforms generated (if you were a suitably‑trained engineer with a circuit diagram, of course). Often the signals were passed around the board in the analogue domain between these sections (less and less often as time went by, of course) and from them to the increasingly present DSP effects chips.

From Real To Virtual

Even though by the time we reach the modern S&S synth almost everything inside is done digitally, it is not a virtual synth, as parts of the process still take place in the physical world. The transition to virtual synthesis takes place when all these elements are replaced in code which runs on general‑purpose DSP hardware chips, and when it is no longer possible to say just where the filtering or enveloping takes place (except in a particular line or lines of code). The true virtual synth may look like its analogue or digital equivalent when represented as a block diagram, but look inside the case and all you will see is a bunch of (usually identical) DSP chips, with a very simple circuit board layout that allows them all to be harnessed together as one mass of DSP horsepower.
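
As a rough picture of what 'replaced in code' means, here is a minimal Python sketch in which the oscillator, filter and envelope of a block diagram are simply three functions chained together. The one‑pole low‑pass filter and the linear decay are deliberately crude stand‑ins chosen for brevity; the function names and default values are assumptions, and real modelling code is far more elaborate.

```python
def sawtooth(frequency, sample_rate, n):
    """Oscillator block: a naive rising sawtooth in [-1, 1)."""
    phase, out = 0.0, []
    for _ in range(n):
        out.append(2.0 * phase - 1.0)
        phase = (phase + frequency / sample_rate) % 1.0
    return out


def one_pole_lowpass(signal, coefficient):
    """Filter block: each output moves part of the way towards the input."""
    y, out = 0.0, []
    for x in signal:
        y += coefficient * (x - y)
        out.append(y)
    return out


def linear_decay(signal):
    """Envelope block: fade linearly from full level towards silence."""
    n = len(signal)
    return [x * (1.0 - i / n) for i, x in enumerate(signal)]


def voice(frequency, sample_rate, n, coefficient=0.1):
    """The whole 'synth': oscillator into filter into envelope."""
    return linear_decay(one_pole_lowpass(sawtooth(frequency, sample_rate, n), coefficient))
```

Nothing in this chain corresponds to a particular place on a circuit board. The 'filter' is just the few lines inside `one_pole_lowpass`.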

Of course, many of today's DSP‑based modelling synths look almost more like analogue synths than analogue synths do! The Roland JP8000 modelling synth lovingly creates the front panel of the analogue Jupiter 8 (JP8), even down to the sliders I never liked the first time around. Clavia's Nord Leads (I & II) give you a knob for every parameter, so you can twist again like you did last decade but two (if you were born then), and even a synth like the Korg Z1, which doesn't have room on its entire surface to provide a knob for every parameter (it takes 17 Mac windows to fit them all in), offers you the most commonly tweaked ones as dedicated hardware knobs.

Dedicated these knobs may be (and should be, in my opinion — there's nothing worse than a knob with an identity crisis when you're stood performing in front of a crowd of people), but don't think this means that there's any physical connection with the parameter they control. The pot is simply read, and the value derived applied to the appropriate software parameter. A new operating system could very quickly make nonsense of the parameters printed on the front panel (perhaps this is how DSP engineers play practical jokes on mere mortals, who see a label and believe it). The Yamaha AN1x makes this point pretty forcefully, as you need to check which of the colour‑coded modes is selected and then look for the parameter name in the matching colour. This does enormously reduce the number of knobs required, but it makes use on a darkened stage a bit exciting!
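
The 'read the pot, apply the value' step can be sketched in a few lines of Python. The knob numbers, parameter names, ranges and the 0 to 127 reading are all hypothetical; the point is only that the link between a knob and its parameter is an entry in a table rather than a wire.

```python
def pot_to_parameter(raw, raw_max, lo, hi):
    """Scale a raw pot reading (0..raw_max) onto a parameter range lo..hi."""
    return lo + (hi - lo) * raw / raw_max


# Hypothetical panel assignment: a new operating system could ship a different table.
KNOB_ASSIGNMENTS = {
    0: ("filter_cutoff_hz", 20.0, 20000.0),
    1: ("resonance", 0.0, 1.0),
}


def apply_knob(parameters, knob, raw, raw_max=127):
    """Look up which parameter the knob currently controls and update it."""
    name, lo, hi = KNOB_ASSIGNMENTS[knob]
    parameters[name] = pot_to_parameter(raw, raw_max, lo, hi)
    return parameters
```

Change one entry in `KNOB_ASSIGNMENTS` and the knob marked 'resonance' on the panel controls something else entirely, whatever the printed label says.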

Unique Attributes

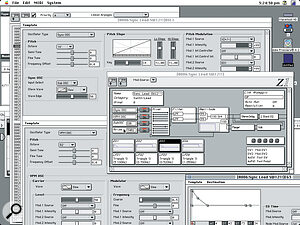

Mac editing software for Korg's Z1, showing the sub‑oscillator being used as the sync source.

Apart from the return of knobs, what other advantage does the modelling of analogue synths offer, especially over the original machines, which tended to have knobs aplenty? Well, let's start with the mundane but very important quality which digital synths have been offering for a while: stability, especially in tuning and in the accurate recreation and recall of sounds. The younger members of my audience may not value this very highly, but they've probably never played a Minimoog or Prophet 5 in a hot club. With modelling, you could emulate the tuning inconsistencies of analogue machines, though there's little artistic use for such things (unless you are going for the nostalgia vote), but there are some modelling systems which can introduce the slight random changes in pitch which fatten up a multi‑oscillator analogue synth sound.

The exact matching of filter settings is something else which digital technology has brought, and physical modelling makes the exact polyphonic reproduction of the same sound more precise, as well as allowing the recall of timbres and their porting from instrument to instrument. Again, though, this is old news for anyone who owns a synth made in the last 10 years.

So what are the advantages of physical modelling of analogue which we have never seen (or should that be heard?) before? Put simply, the synthesizer can be reconfigured for different ways of combining components in a way which compares to a modular synth, although new components can be created with less cost than in a modular system. The most obvious example is on the Korg Z1. Instead of having one model for analogue synthesis, it actually has six different models, with different configurations.

Let's look at the Sync Osc model as a case in point. This model runs on a single oscillator (so, for example, you can have a different model on the Z1's second oscillator). Those of you who were paying attention to the instalment of Synth School which covered oscillator sync (SOS August '97) should be raising one eyebrow, Spock‑like, by now, wondering how you can sync an oscillator if you haven't got a second oscillator to sync it to. Well, fear not. The Z1's designers have made it so that you can use the sub‑oscillator as the sync source (see the accompanying screen dump from the Mac Z1 software). You then don't need to actually listen to what this oscillator is doing. In fact, the other oscillator can have a completely different model on it (in the patch shown in the screen dump, the second oscillator is set to the VPM model, Korg's equivalent to FM). The real advantage of this is that when you take the pitch of the sub‑oscillator which is controlling the sync right up, the sound of the sync'ed oscillator, while very interesting, becomes very thin. Because the second oscillator is still available for playing another waveform, you can keep a solid basis to the sound, even when you are making the sync'ed oscillator squeal by taking the control oscillator pitch right up.
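
For anyone who wants to see oscillator sync in code, the following Python sketch shows generic hard sync with a silent sync source. It is not Korg's implementation: the function name, the sawtooth waveform and the simple phase reset are assumptions chosen to show the principle that the audible oscillator restarts every time the unheard one completes a cycle.

```python
def hard_sync(source_hz, synced_hz, sample_rate, num_samples):
    """Sawtooth whose phase is reset whenever the (unheard) sync source wraps."""
    source_phase = 0.0
    synced_phase = 0.0
    out = []
    for _ in range(num_samples):
        out.append(2.0 * synced_phase - 1.0)   # only the synced oscillator is heard
        source_phase += source_hz / sample_rate
        synced_phase = (synced_phase + synced_hz / sample_rate) % 1.0
        if source_phase >= 1.0:                # sync source has finished a cycle
            source_phase -= 1.0
            synced_phase = 0.0                 # force the synced oscillator to restart
    return out
```

Because the sync source only contributes its timing, it never needs to reach the output, which is why a sub‑oscillator can do the job and leave the other oscillator free.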

The Z1 has different models for Cross Modulation and Ring Modulation, which (as I pointed out when we originally covered these techniques in the August 1997 issue of SOS, as above) was previously often only possible on modular synths which allowed you to patch any source to any control point — techniques developed from what the original designer might have seen as 'mis‑patching.' Of course, there were analogue synth keyboards which started to bring in these routings as standard, but they offered nothing like the complexity of reconfiguration of the standard analogue setup which is possible with Korg's MOSS physical modelling system. As the 'components' are only DSP software routines linked by more software, re‑routing is not as difficult as when one had to switch control voltages coming from one part of the system to a completely different part of the system, often where the original designer had not expected them to go. Back then, a physical wire was needed to connect the source to the destination, but in the virtual world of physical modelling the designer only has to think it there, change a line of code for the address a datastream is sent to, and hey presto, it's done. Of course, you're still limited by the imagination of the designer and his fixed ideas on what you might want to do (the machines with a knob for every parameter tend to limit you in much the same way as the original synths did), but with the advent of software editors for machines like the Z1 and the AN1x, the possibilities really open up.
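
The idea that re‑patching is just a change of address can be sketched as a small routing table in Python. The source and destination names here are hypothetical and the structure is an assumption for illustration, not a description of how MOSS is actually written.

```python
def apply_routing(routing, source_values):
    """Sum each source's contribution into whatever destination it is patched to."""
    totals = {}
    for source, destination, amount in routing:
        totals[destination] = totals.get(destination, 0.0) + amount * source_values[source]
    return totals


# Hypothetical patch: the LFO modulates oscillator pitch.
routing = [("lfo", "osc1_pitch", 0.5)]

# 'Moving the patch cord' is a one-line edit: the same LFO now drives the filter.
routing[0] = ("lfo", "filter_cutoff", 0.5)
```

On a modular synth the equivalent change means unplugging a cable and finding a socket that accepts it. Here the destination is just a name in a list.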

Modelling The Future

Seer Systems Reality software synth.

I've only really scratched the surface of physical modelling in the imitation of analogue here: while analogue imitation may be the most widespread use of modelling at the moment, and brings certain advantages even over the original classics, it is but the visible tenth of the modelling iceberg. As time goes by and DSP chips become cheaper than the cholesterol‑filled variety, we'll see physical modelling expand to take over some of the market share currently dominated by S&S machines. As I said earlier, the acoustic grand piano may be some way off, but there are already great woodwind and brass, bowed and plucked string, electric piano and organ models out there. Currently it's Yamaha and Korg who have the 'virtual' monopoly, and it is their models of these instruments which we'll be looking at next time, as well as an early 'close but no cigar' attempt by Technics, whose WSA1 synth is very close to this writer's heart. But who knows: at any moment, any of the other major manufacturers (or even a brand‑new name) may burst onto the market with a revolutionary modelling system which will replace the entire orchestra.

In the meantime, see if you can get access to one of the analogue modelling instruments described here, as they are particularly rewarding for those of an experimental frame of mind. I've gone from being a sceptic to a total convert in less than 18 months (but then my favourite 'analogue' synths all had well‑programmed digital oscillators anyway). The authenticity of the sound quality is, of course, purely a matter of opinion, but most people seem to find one of the current crop of analogue imitators which they can live with.

Computer Love

With the advent of the powerful CPU (Central Processing Unit) chips in personal computers, there are now also physical modelling programs which actually run on the main processors of Macs and PCs. The first of these that I became aware of a few years back was by Seer Systems, for the Pentium. Those of a Sherlock Holmes mentality may be able to guess the identity of the synth guru behind Seer (another word for Prophet). It was the brainchild of Dave Smith, who left Korg's R&D facility in San José some years back (where he had set them on the track that would lead to the Prophecy and Z1) to develop the same kind of technology as software‑only programs running on PCs. I'm afraid that my admiration for Dave, great though it is, was not enough to make me buy a PC to run his software on, but the demonstrations I heard (and played) at several NAMM shows in succession were enough to convince me of the validity of Seer's code, if not their choice of computer platform. Those of you who have the patience to install a soundcard (because, of course, you need its D‑A converters to listen to the sound created in the digital domain) into a PC (something for which my life is definitely not long enough) can check this software out.

I was cheered, on Apple's own stand at this year's NAMM show, to find a company called BitHeadz, who have produced a very similar product for the Macintosh, called the Retro AS1. I wasn't able to spend long with this program, but the authenticity of the analogue sound was certainly there, even through the basic Apple Sound Manager (greater fidelity is available through PCI digital audio cards). Have a look at this product on the company's web site (www.bitheadz.com) and see if they have any audio examples to download. In the course of writing this piece, I spoke with BitHeadz, who have been shipping Retro AS1 for a little while now. PC people (if they haven't stopped reading already, because of my partisan copy!) will be pleased to know that the PC version will ship this summer, although it will apparently need much faster Pentiums to produce the same results as on the PowerPC chips.

While no proper analysis of either of these programs is possible here, this is developing technology, and anyone really interested in synthesis should watch this space as it looks as though cyberspace is probably where the exciting development in physical modelling will come from. Something common to both the Seer and BitHeadz products is the fact that polyphony and numbers of oscillators per voice are fluid and entirely dependent on how much CPU power you have and how much of it you want to dedicate to synthesis. This means, with the accelerating pace of CPU speeds and MIPS capacities, that the 6‑oscillator‑per‑voice, 1000‑voice multitimbral analogue synth is only a matter of time. I can't wait!
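
The trade‑off between polyphony and oscillators per voice is simple arithmetic, sketched here in Python. Every figure in the usage note is made up for illustration, and the cost model (a fixed cost per oscillator plus a fixed overhead per voice) is an assumption, not a measurement of either program.

```python
def max_voices(cpu_mips, share_for_synth, mips_per_oscillator,
               oscillators_per_voice, mips_per_voice_overhead=0.0):
    """Estimate how many voices fit into the slice of CPU given over to synthesis."""
    budget = cpu_mips * share_for_synth
    cost_per_voice = (oscillators_per_voice * mips_per_oscillator
                      + mips_per_voice_overhead)
    return int(budget // cost_per_voice)
```

With these invented numbers, `max_voices(400, 0.5, 2.0, 6, 8.0)` gives 10 six‑oscillator voices, while dropping to two oscillators per voice gives 16. Double the processor speed and both figures roughly double, which is the sense in which polyphony becomes 'fluid'.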