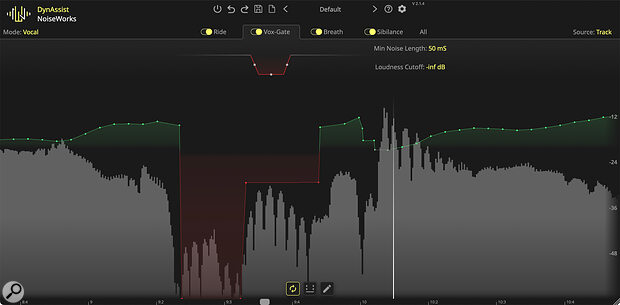

The default view, after the initial analysis has been performed, with the Ride tab open.

With ARA2 compatibility, this clever software slots right into your DAW workflow and promises to take much of the pain and time out of getting your vocals mix-ready.

Obviously, a great vocal recording starts with a decent mic in front of a great performance. But once that performance is captured, there can be a lot of effort involved in improving the recording before it’s time to actually start mixing. Such prep work was traditionally done by an assistant, but that’s now the case only for a select few in‑demand mix engineers, whom it allows to mix quickly and put their full focus on the more creative and musical side of mixing.

The rest of us must be our own assistants, but the tasks are the same: detailed clip‑gain work to make sure the vocal part has a broadly consistent level and every word and syllable is audible, as well as cleaning up undesirable sounds that could interact unhelpfully with your processing chain — things like bumps of the mic stand, plosive pops, loud breaths, sibilance, any hum on amps and pedals, and tape hiss. Get all your ducks lined up at this stage, and you’ll find that it’s so much easier to bring out the magic in your recordings using your bread‑and‑butter mixing tools, such as compressors, EQs, saturation, reverbs and delays, along with the usual fader and pan moves.

It’s a similar (albeit slightly different) story when working with dialogue, where you’ll typically want to aim for a nice, consistent level, with every word and syllable clearly heard, whether it’s a whisper or an excited exclamation, and with the breathing, popping and essing and so on firmly and transparently under control — sometimes eliminated, sometimes just lowered, for a more natural feel. A key difference with dialogue is that recordings are often much longer, so a lot of these issues are necessarily addressed on the fly, along with your edits and fades.

There have always been tools that can take some of the sweat and tears out of this prep stage, but some can cause you almost as much work as they save. Take breath control, for example: until recently, every real‑time or offline breath control plug‑in I’d tried was a case of ‘close but no cigar’, and while I might be able to correct any artefacts manually, doing that takes a lot of attention and time. That’s why I’ve tended to rely on a manual approach to breath control; it’s more effective, it’s quick to do for a 3‑4 minute song, and with experience it can be done reasonably swiftly as you audition and edit dialogue.

DynAssist

All of which preamble brings me to my first encounter with software developers Noiseworks, whose DynAssist software aims to perform much of this prep work for you, swiftly and, potentially, entirely automatically. In 2025, it almost goes without saying that DynAssist makes use of machine learning (ML), but I feel it important to highlight that it only employs this ‘AI’ to detect the signal levels and identify different content in the signal; the actual processing it applies is conventional level automation, and the user has full DAW‑automation‑style control over the result.

DynAssist is a VST3/AU/AAX plug‑in for 64‑bit macOS and Windows systems, and for most DAWs the best approach is to use it as an ARA plug‑in. In case you’re not yet familiar with ARA, this is an offline plug‑in format that most DAWs, including Studio One, Reaper, Cubase and Pro Tools, now support, and it enables third‑party software to function in much the same way as your DAW’s own offline tools such as pitch and time correction. There are other direct transfer systems for DAWs that don’t support ARA, though, and these are detailed in the PDF manual.

Different DAWs have slightly different ways of opening the ARA plug‑in but generally it’s a case of right‑clicking on a track or event and selecting the plug‑in, or selecting a track/event and navigating your DAW’s menu to locate and load it. Your audio will then open within DynAssist, typically at the bottom of the DAW’s GUI. Personally, I prefer to pop it out into its own floating window, as I can then resize to taste with a drag of the corner, and show/hide it as desired.

Within the DynAssist GUI are four main tabbed windows: Ride, Vox Gate, Breath and Sibilance, each providing control over a different function. At the bottom, whichever view you’ve selected, you’ll see a waveform with a node‑based automation line overlaid, and switching tabs updates this view to highlight the relevant data. A final fifth tab puts all the parameters in one place; obviously this looks busier, but it’s sometimes convenient to have direct access to everything from one screen.

As you become accustomed to using DynAssist, there’s a lot to be said for working in the All tab, where you have access to every parameter at once, but without the graphical control functions found in the other tabs.

At the top left is a toggle that lets you select between vocal and instrument modes. Essentially, instrument mode omits the breath control and sibilance tabs, and offers a different approach to gating, of which more below. On the other side, another toggle determines whether DynAssist operates in Track mode (whereby it analyses all clips on the DAW track on which you’ve inserted it) or Clip mode (it analyses only the currently selected clip). The latter can be really helpful in the initial tweaking stages, since you can leave DynAssist’s automatic re‑analysis feature switched on so you can audition the results quickly, without unhelpful pauses. There are also the usual global facilities, including bypass, undo/redo, a preset management section, a link to open the manual, and a basic settings pop‑up, where you can enable tooltips, change the default Track/Clip mode behaviour, and adjust the GUI scaling.

At the bottom of the GUI are a number of useful features, not least buttons to engage auto‑analysis and for loop selection. Not only does the latter sync with your DAW to facilitate loop selection for playback, but again, if auto‑analyse is on, it will only analyse that section, making it much quicker to audition different settings. It’s a good idea, and especially so for long dialogue recordings, as you can refine settings on a shorter selection before applying them to the full part, which will likely be very similar. The auto‑analysis can also be switched off, by the way. Again, this allows you to make changes more quickly, since DynAssist won’t have to recalculate its settings after every tweak, though obviously you won’t hear the result until the analysis has been performed.

A pen button lets you manually adjust the automation points (that are created automatically according to the settings in the main tabs) with the mouse cursor. As well as directly editing individual points in this way, you can make marquee selections and pull all the contained points up/down simultaneously, which is useful. You can navigate around the waveform using the mouse wheel to zoom in/out, with Cmd (Mac) or the Windows key acting as a modifier to scroll horizontally. It was a breeze with my MacBook Pro’s trackpad too. All in all, it’s intuitive and works well — very much like editing in your DAW’s own automation lanes.

Ticket To Ride

Starting in Ride view, the aim here is to level the dynamics of your vocal part. Conceptually, it’s not dissimilar to using two‑stage compression, with a parallel compressor bringing up lower level details, and then a regular one pulling down the louder parts. A big difference, of course, is that it’s an offline process, not a real‑time one, and that has a couple of potential advantages.

For one thing, DynAssist’s analysis means it ‘already knows’ where the signal level rises and falls, so it can be more accurate and transparent, and less ‘reactive’, side‑stepping common issues such as pumping or overreactions to brief peaks.

Another key difference is that its output is editable level automation, so it’s super‑easy to control any issues thrown up by that ‘compression’. And since DynAssist’s level changes are all applied at the start of the signal chain, before your processors and effects, it’s arguably more akin to an automatic clip‑gain envelope process than either compression or fader riding. You don’t really need to think about ‘make‑up gain’ in the way you would with a compressor either, since DynAssist lets you specify a target LUFS‑I loudness by dragging a tab on the left to the desired value, and to my mind, there are a couple of benefits to this approach. For example, you can assess any tweaks you make safe in the knowledge that loudness isn’t skewing your perception. Also, you can make the signal levels predictable for any further processing. For instance, you might have a go‑to chain for processing vocals, and within it various threshold‑dependent tools such as compressors. You may even have this feeding into a similarly structured bus‑processing chain, with thresholds calibrated to specific levels. If you already know how loud your source must be to hit those chains in their sweet spot, you can make it so — increasing your chances of a decent ‘faders up’ mix, and thus making mixing quicker and easier.

Sibilance is indicated in light grey on the waveform. In some places, other processes have applied gain such that the overall automation curve shows a boost (green) even where one of two esses (light grey) is being attenuated.

It’s also worth noting that although it must take the time to perform an analysis when you update settings, DynAssist introduces no latency on playback in the way an insert plug‑in might. So if you’re one of those types who tends to add more virtual instrument parts at all stages of your production, DynAssist won’t get in the way.

There are just a handful of user controls, and I don’t think you really need more. A Speed slider determines how detailed the level correction will be, with fewer automation points being created if you drag the slider over to the right, and very detailed automation when it’s fully left. Beneath, a Smooth slider effectively scales the range of gain/cut being applied, so you can decide just how assertive your vocal riding will be, while Max and Min parameters set the threshold boundaries within which the vocal riding will be performed; these can be linked so that both are adjusted at once, or unlinked, as you prefer. Finally, an Avoid Clipping toggle prevents the level of the part being boosted above 0dB true peak when engaged.
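To make the interplay of those controls concrete, here is a minimal Python sketch of one way such a ride could be calculated. It is not Noiseworks’ algorithm, which isn’t documented here: the function name `ride_gains`, the per‑segment level and peak lists, the `amount` scale (standing in for Smooth) and the treatment of Min/Max as gain limits in dB are all assumptions for illustration, and a simple peak check stands in for true‑peak detection.

```python
def ride_gains(segment_levels_db, segment_peaks_db, target_db,
               amount=1.0, min_gain_db=-12.0, max_gain_db=12.0,
               avoid_clipping=True):
    """Return one gain value in dB per segment (hypothetical sketch).

    segment_levels_db: measured level of each segment, in dB
    segment_peaks_db:  peak of each segment, in dBFS
    target_db:         level every segment is nudged toward
    amount:            0..1 scale on the correction (assumed 'Smooth' stand-in)
    """
    gains = []
    for level, peak in zip(segment_levels_db, segment_peaks_db):
        # Move the segment toward the target, scaled by 'amount'.
        gain = (target_db - level) * amount
        # Keep the correction inside the allowed cut/boost range.
        gain = max(min_gain_db, min(max_gain_db, gain))
        if avoid_clipping:
            # Never boost so far that the segment peak would exceed 0 dBFS.
            gain = min(gain, -peak)
        gains.append(gain)
    return gains
```

Halving `amount` halves every correction, which is roughly the effect described for a gentler ride, while the limits and the clipping check only ever reduce the size of a move.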

I should note that performing the analysis function, whether automatic or not, will override any manual automation edits that you’ve made. And because DynAssist needs to re‑analyse the audio whenever you make a change to any parameter, in any tab, you must for now either leave your manual edits until last, or render them before any re‑analysis. Helpfully, you do get a pop‑up warning before losing any changes. Better still, when I suggested to Noiseworks that it might be good to allow the user to ‘protect’ areas from analysis, in much the same way I can in Synchro Arts’ Revoice Pro (another ARA plug‑in), I was told they’d had the same thought, and that this is already very much in their development plans.

Gate Expectations

The next tab lets you engage or bypass the Vocal Gate and, courtesy of ‘AI’, this is a content‑aware process: it knows which parts of the recording are vocals and which aren’t, and you don’t need to set a threshold. As I mentioned already in passing, Instrument mode works slightly differently: this does give you a conventional threshold control, the idea being that you can manually set up the gating to suit any other instrument/sound source you might want to process.

The Vox Gate uses machine learning to identify non‑vocal/dialogue areas in a file, and lets you decide how much they’re attenuated.

Essentially, this gate automatically detects any ‘silence’ between vocal phrases and attenuates it, to prevent unwanted noise coming through that might otherwise be brought up by any compression and limiting downstream in your signal chain. On the left, there’s a graphical control resembling automation points, and this lets you set the gate close and open speeds, and the amount of attenuation (ie. the ‘floor’). At minus infinity, it gives you silence when the gate is closed, while other values attenuate the noise without fully muting it; useful for continuity where a noise (like tape hiss) is audible during the vocal and not just in between phrases.

To the right are a couple of directly editable parameters. Min Noise Length prevents the gate kicking in overzealously during short breaks between words. This control is located away from the graphical one, but the latter is updated to indicate your changes. Loudness cutoff lets you prevent quieter sounds such as spill from making it through the gate, and this seems to work pretty well.
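The logic behind the floor and Min Noise Length controls can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the detected non‑vocal regions are taken as given (the ML detection itself isn’t modelled), and the names `gate_regions` and `db_to_linear`, along with the default values, are hypothetical rather than anything taken from DynAssist.

```python
def gate_regions(noise_regions, min_noise_length=0.3, floor_db=float("-inf")):
    """Pick the regions the gate should close on (hypothetical sketch).

    noise_regions:    list of (start_s, end_s) pairs flagged as non-vocal
    min_noise_length: gaps shorter than this (seconds) are left untouched
    floor_db:         attenuation applied while the gate is closed
    Returns a list of (start_s, end_s, gain_db) tuples.
    """
    return [(start, end, floor_db)
            for start, end in noise_regions
            if end - start >= min_noise_length]


def db_to_linear(db):
    """Convert a gain in dB to a linear multiplier; -inf dB means silence."""
    return 0.0 if db == float("-inf") else 10 ** (db / 20)
```

With a finite floor such as -24dB the noise between phrases is turned down rather than muted, which is the continuity benefit described above for sources like tape hiss.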

Take My Breath Away

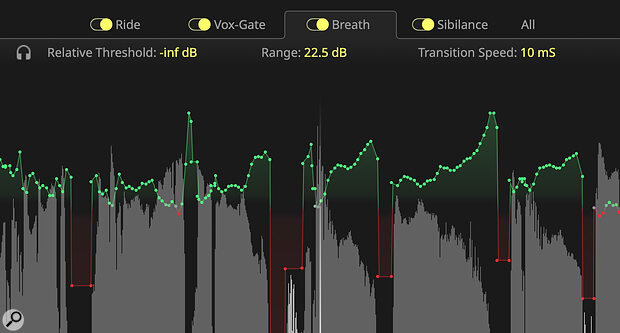

The next two tabs are almost identical to each other, but one targets breaths and the other sibilance. Again, DynAssist’s analysis identifies these sounds for you and changes the level according to the parameters you specify in the relevant tab. And I have to say it largely does a grand job of the detection, without you having to do anything...

This might just be one of the most effective automatic breath‑control tools out there!

A Relative Threshold control determines how loud a breath/ess must be to be affected by your settings. At minus infinity, all instances will be targeted, and typically this is how I wanted things set up for breaths. That said, I can conceive of some situations where you might want to leave quieter breaths alone, and target only louder ones. You can also specify by how much the breaths/esses should be attenuated, again from nothing to minus infinity. And, lastly, there’s a Transition Speed parameter, broadly equivalent to a combined attack/release control.
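As a rough illustration of how a relative threshold and an attenuation amount might combine, consider the Python sketch below. It assumes each detected breath or ess arrives with a level measured relative to some reference (what that reference is in DynAssist isn’t stated here), and the function name `attenuate_events` and the `level_rel_db` field are hypothetical. Transition Speed is not modelled.

```python
def attenuate_events(events, relative_threshold_db=float("-inf"),
                     attenuation_db=-12.0):
    """Assign a gain to each detected breath/ess event (hypothetical sketch).

    events: list of dicts with 'start', 'end' (seconds) and 'level_rel_db',
            the event's level relative to an assumed reference level.
    Events at or above the threshold get 'attenuation_db'; quieter ones
    are left at 0 dB. A threshold of -inf therefore targets every event.
    """
    result = []
    for event in events:
        targeted = event["level_rel_db"] >= relative_threshold_db
        gain_db = attenuation_db if targeted else 0.0
        # Copy the event so the caller's data is not modified.
        result.append({**event, "gain_db": gain_db})
    return result
```

Raising the threshold from minus infinity is what would leave the quieter breaths alone while still pulling down the louder ones.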

As I mentioned, the final tab, called All, shows all of the other tabs’ settings as numeric/text parameters that you can click and drag to increase/decrease. While I did find the sliders and graphics of the other tabs useful for learning how to use this tool, as I grew more familiar with it, I spent more time here.

User Experience

In general, DynAssist works superbly. I tested it as an ARA plug‑in in Reaper on an M1 MacBook Pro running macOS 12.4. The tests involved several male and female vocal tracks and a couple of long dialogue parts for a podcast I was producing at the time. The vocals included some recordings of my own voice where I deliberately sang more dynamically (erratically?) than I normally would, just to see how DynAssist could cope.

On the sung parts, the analysis was pretty speedy, but naturally it took a good while longer to perform the initial analysis for the 50‑minute‑long dialogue parts. It’s worth the short wait, though, as generally you’re going to need to do less by way of tweaking with simple dialogue than with singing. However, after the initial analysis, you may want to turn off auto‑analyse while making adjustments to the settings, or to limit the analysis to a short loop so that you can hear the results without delay. In all cases, bar none, the initial levelling was truly impressive, and I found it super‑easy to hone the results by juggling the Speed and Smooth parameters to taste, only occasionally feeling a need to set minimum/maximum gain limits.

Much to my pleasant surprise the breath control was, for the most part, absolutely spot on, with breath onsets and endings detected beautifully, and none of the unacceptable artefacts I’ve endured with every other tool that’s got my hopes up with promises of perfect de‑breathing! There were just a few instances in a full track where the very start of the breath wasn’t identified, but these were few and far between, and as each breath is obviously highlighted you can quickly drag a single automation point left to solve that. I loved that I was able to turn all the breaths up/down to taste — with a vocal processing chain inserted after DynAssist, where a singer was using breaths rhythmically, I could even judge how they interacted with the overall groove.

Though it was effective, I found the visual feedback of the de‑essing tab a little more confusing. While the detected esses are highlighted, esses are briefer than breaths, and depending on your zoom settings they can be harder to see. Also, it’s not always immediately obvious what the de‑ess contribution is to the overall automation — it’s possible to have the audio being turned up for other reasons, so that even when de‑essing the net effect is indicated in green (for gain) on the automation curve. I’d quite like the option of dedicated lines to indicate the de‑essing and de‑breathing gain reduction — and again, when I put that to Noiseworks, they said I was not the first to suggest that, and it’s in their plans. The audible results are good, by the way.

It does what it does in a way that leaves the user free to make manual adjustments, so they have complete control over the end result.

On The Level

When you consider that, for all the clever machine‑learning‑driven content recognition, all DynAssist is actually doing to your audio signal is turning it up and down, it really is an incredibly impressive achievement. But it’s a very practical one too, which could well save you a lot of effort and frustration — and time. It can be your clip gain, your compressor, a de‑esser and, I have to say, perhaps the most effective de‑breather I’ve yet encountered, all in one. I will be using it for my dialogue and music work going forward, and reckon it will save me lots of time without obvious compromise — I love that, unlike many AI tools, it does what it does in a way that leaves me free to make manual adjustments, giving me complete control over the end result.

Of course, DynAssist doesn’t do everything that I might need to do to prep a vocal or dialogue part: there’s no de‑noising, and you can’t apply temporary filters to counter plosive pops, for example. Also, like all ARA plug‑ins, it must be placed at the start of the chain, and you can’t instantiate other ARA plug‑ins simultaneously on the same track. So if you wish to use other ARA plug‑ins such as Melodyne for pitch‑correction or iZotope RX to address some of the jobs listed at the top of this paragraph, then you’re going to have to spend a little time planning the most efficient workflow. For example, if I were to want to use all three of those apps, I might look to use RX first for spectral de‑noising, perhaps plosive control and a spot of mouth de‑clicking, before rendering that result and loading DynAssist, and I might do pitch correction after that.

But in the grand scheme these are tiny niggles. And again, without giving away too many details, when I discussed this side of things with Noiseworks, I was told that they’re very conscious of these limitations of ARA, and that they have ambitious plans to address them in future versions. Already, though, I’ve found DynAssist most impressive. I’d heartily recommend that anyone working regularly with dialogue or vocals at least tries the demo. For me, it’s probably worth the price of entry for the breath control alone — and that’s not even the main selling point!

Pros

- Simple idea, brilliantly executed.

- A genuine labour‑saver.

- AI used only for detection, not for the actual processing.

- User has complete control over the end result.

- One of the best breath controllers out there.

Cons

- Can’t protect manual edits if you need to adjust other settings.

Summary

A superb labour‑saving plug‑in, DynAssist can help you prep your vocals and dialogue for mixing in next to no time, and includes one of the best breath control facilities out there. A company worth keeping an eye on.