The evolution of convolution takes several steps forward with Inspired Acoustics’ awe‑inspiring immersive reverb.

It’s amazing how a technology that seems mature can turn out to be anything but. Not so long ago, it seemed, we were all pretty happy as long as our plug‑ins could do a passable impression of a Lexicon, or implement convolution without melting our CPUs. Yet over the last few years, plug‑in reverb has evolved beyond recognition. Only last month, for example, Hugh Robjohns reviewed Nugen Audio’s Paragon, the first IR‑based reverb to use resampling technology. And this month it falls to me to tell you about another breakthrough.

The name Inspired Acoustics may be familiar to SOS readers from their sampled pipe organs, available for the Hauptwerk, GigaStudio and Kontakt platforms. Their latest product is so ambitious that it’s actually unfair to use the word ‘breakthrough’ in the singular, because Inspirata embodies a number of new developments. Like Paragon, or HOFA’s IQ‑Reverb 2, it represents an attempt to make convolution reverb more controllable and more versatile, but the similarities end there.

Impulse Purchase

Conventional convolution reverbs stand or fall on the strength of the supplied IR collection. At first glance, Inspirata’s factory library might sound small, containing as it does only 37 ‘sampled’ spaces (albeit with the promise of more to come). But if you wanted a clue that this is not a conventional convolution reverb, you’d find it in the size of the download: this apparently modest collection comprises well over 100GB of data!

Why is so much data required? Recording an impulse response in a space is like recording any other source. A starting‑pistol shot, sine sweep or balloon pop is emitted from a particular location within the room, and captured by a mic array at another location. So it’s an exaggeration to say that a single impulse response ‘samples the room’. It samples the behaviour of sound emitted from one point within a space, in one direction, as heard from another point, at one point in time.

When used within a convolution plug‑in, that can be a more or less good approximation to the actual experience of hearing music within the space. In principle, a single IR should do a reasonably effective job of recreating how a small source such as a solo piano sounds at a particular point within the hall. What it can’t do, however, is simulate the effect of hearing multiple sources at different positions within the hall, such as the members of a symphony orchestra or choir. Nor can it recreate natural modulation, rotation or movement on the part of either source or listener, or variations in directivity of the source, or the way the acoustic changes in different parts of the hall.
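For readers who want to see what 'applying' an impulse response means in practice, here is a minimal sketch of plain convolution in Python. It is a toy illustration, not how any commercial plug-in is implemented: real products use much faster FFT-based methods, and the function name `convolve` and the sample values are made up for the example.

```python
def convolve(dry, ir):
    """Direct-form convolution: every input sample triggers a scaled copy of the IR."""
    out = [0.0] * (len(dry) + len(ir) - 1)
    for n, x in enumerate(dry):
        for k, h in enumerate(ir):
            out[n + k] += x * h
    return out


# Hypothetical toy data: a two-sample 'dry' signal and a three-tap impulse response
dry = [1.0, 0.5]
ir = [1.0, 0.0, 0.25]  # direct sound, then a quieter reflection two samples later

wet = convolve(dry, ir)
print(wet)  # [1.0, 0.5, 0.25, 0.125]
```

Because the IR is a fixed list of numbers, the result is equally fixed: one source position, one listener position, one moment in time.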

This, in turn, affects the way that mix engineers have to think about reverb. When we’re actually recording in a reverberant hall, we attempt to set the right balance of direct and reflected sound, and to capture an appropriate ambience without unpleasant coloration, by varying the placement of the mics and the performers. When we’re applying convolution reverb after the fact, we’re limited to varying the pre‑delay and choosing how much reverb to add.

Count The Ways

Inspirata’s massive sound library represents an attempt to overcome these limitations. Inspired Acoustics haven’t just ‘sampled’ each space once, or even a handful of times. Instead, they’ve repeated the process for thousands of different combinations of source and capture position. In effect, what they’ve done is to map out performer and listener areas within each space as grids, and then record a separate IR for each combination of performer and listener location. Especially in the larger halls, each grid can contain 100 or more points, so there can be upwards of 100 IRs for each listener position; multiply that figure by the number of listener positions and you’ll see how the total number of IRs for each space could easily end up in five figures. That’s right: a single room or hall in the Inspirata sound library can be represented by up to 10,000 separate impulse responses!
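The arithmetic is easy to check. The sketch below assumes, purely for illustration, a hall with 100 points in each grid; the function name `ir_count` is hypothetical, and actual grid sizes vary from space to space.

```python
def ir_count(source_points, listener_points):
    """One impulse response per (source position, listener position) pair."""
    return source_points * listener_points


# Assumed round numbers: 100 stage positions and 100 audience positions
print(ir_count(100, 100))  # 10000
```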

There are further innovations behind the scenes, too. Inspired Acoustics spent two years developing proprietary de‑noising techniques to overcome problems with ambient noise, and used custom sine sweeps that were precisely matched to the loudspeakers and the conditions in the room. Lasers were used to align and position the mics and speakers. Oh, and the results weren’t just captured in stereo, but in first‑ and fourth(!)‑order Ambisonics.

It should be noted, too, that this huge collection of impulse response data isn’t just applied using straightforward convolution. Rather, it’s the raw material for what Inspired Acoustics call a “time‑variant algorithm”. The details of this are proprietary and highly technical, but again, the aim is to overcome some of the limitations of conventional convolution. Simple IRs are static in both a temporal and a spatial sense. They can’t recreate the way the reverberation of a real space varies from moment to moment, and there’s no way to smoothly move from one IR to the next to recreate a sense of motion within the space. Inspirata’s technology allows both kinds of movement to be reproduced. It also permits the early reflections and reverb tail to be adjusted and treated independently, and for the decay times in the tail to be modified both globally and in a frequency‑dependent fashion using a graphic EQ‑like interface. An editable volume envelope can be applied to the tail.
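To make the 'static' point concrete, the sketch below shows the most naive way one might try to move between two measured positions: a straight linear blend of two IRs. This is emphatically not Inspired Acoustics' time-variant algorithm, whose details are proprietary; `blend_irs` and the toy values are assumptions for illustration only. A blend like this tends to sound like two rooms mixed together rather than one source in motion, which is part of why smooth movement needs something more sophisticated.

```python
def blend_irs(ir_a, ir_b, t):
    """Naive linear blend of two impulse responses; t=0 gives ir_a, t=1 gives ir_b."""
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must be between 0 and 1")
    # Zero-pad the shorter IR so both have the same length
    n = max(len(ir_a), len(ir_b))
    a = list(ir_a) + [0.0] * (n - len(ir_a))
    b = list(ir_b) + [0.0] * (n - len(ir_b))
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


# Hypothetical two-tap IRs measured at neighbouring positions
print(blend_irs([1.0, 0.0], [0.0, 1.0], 0.5))  # [0.5, 0.5]
```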

Another relatively novel feature of Inspirata is that the most expensive Immersive edition supports surround configurations all the way up to 22.2. The basic Lite and Personal editions are stereo‑only, and the Professional edition offers surround support up to 7.1.2. Personal and Professional users also enjoy support for sample rates up to 96kHz, while the full Immersive version will go as high as 384kHz if you can find a host that supports it.

Choose the best seat in the house: Inspirata allows you to freely position Sources on the stage area and a Receiver in the auditorium.

The multi‑channel capability isn’t just there to cater for surround output, though, and multi‑channel inputs are important even if you’re working in stereo. That’s because each mono input path can be treated as an independent Source with its own position, directivity, apparent width and other qualities. These Sources are then ‘heard’ by a single Receiver, which recreates a mono, stereo or surround capture at a specific point within the hall. So, for example, if you had dry recordings of four actors’ voices and wanted to recreate the sound of them moving around in a room or hall, you could use a quad‑to‑stereo instance of Inspirata, route each voice to a separate input path and then use automation to move the resulting Sources. It’s also possible to have multiple inputs contributing to different Sources to a user‑variable extent, if you wish. (Of course, this all means that if you’re using a DAW that doesn’t support multichannel tracks or buses, there’s probably not much point in opting for the Professional or Immersive versions of Inspirata.)

Finally, I should mention another of Inspired Acoustics’ design goals, which is to replace the parameters commonly used in artificial reverbs with ones that more closely reflect real‑world characteristics and those used by acousticians. Their idea is that instead of having to translate the audible effect we want to hear into changes in diffusion or pre‑delay or EQ, we should be able to adjust that effect directly. So, for example, if you want dialogue to be more intelligible, you can simply adjust a parameter labelled Clarity rather than mucking about with wet/dry balances or diffusion.

Just Browsing

Inspirata and its sound library are installed from a separate Inspired Acoustics Connect application. This, thankfully, allows you to download the library in stages. Once you’ve installed the plug‑in and told it where to find the library, you can then visit the Browser page to select from the 37 rooms, halls and churches on offer.

The selection is an interesting one. It includes a number of fine theatres and concert halls, such as the Great Hall of the Liszt Academy of Music, Amsterdam’s Royal Concertgebouw, the Hungarian State Opera and the Berliner Philharmonie. Churches too are well represented and chosen for their acoustic merits, from the lavish Szeged Votive Church to the austere and clear‑sounding Scots Church in Melbourne. Other spaces that have potential for music mixing include orchestral and choral rehearsal halls and a very nice Hungarian radio studio. Several small and medium‑sized live music venues are also featured, as is a Basement Rehearsal Room, but like the bedrooms, bathroom, car park, airport departure lounge and office spaces, these are probably more useful for post‑production.

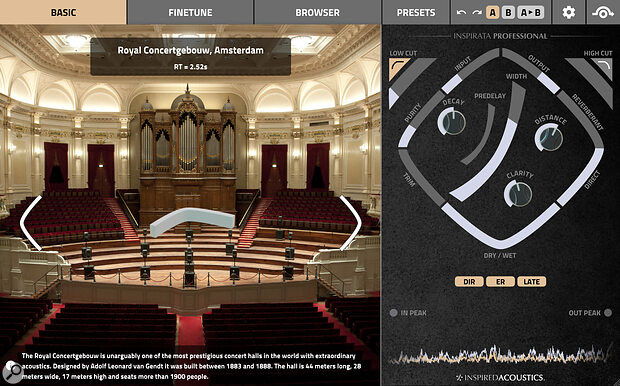

A Basic page brings up a large photo of your chosen venue along with a brief description and a boomerang‑like control that allows you to adjust which direction the Receiver faces. The realism with which the recreated space rotates around you as you do so is pretty uncanny, and will whet your appetite for the more detailed control available in the Finetune page and in the panel to the right.

Fine & Dandy

Select Finetune and you’ll see a plan view of your chosen space, with the Receiver and all the Sources clearly visible. Clicking on one of these ‘greys out’ any part of the space where it can’t be moved. In most of the music‑oriented rooms, for example, Sources can be positioned anywhere on stage and the Receiver anywhere in the audience, but not vice versa. In some of the post‑production spaces, by contrast, there’s no distinction between the locations available to the Sources and the Receiver.

The Professional and Immersive editions support not only surround output but also multichannel input, so you can have multiple Sources in different positions.

There are numerous sound‑shaping controls available, most of which can be made to operate on a per‑Source basis if you wish, but the most fundamental way of shaping the reverb to your requirements is simply to move the Sources and Receiver around. Want the piano to be a bit more up‑front? Simply drag it nearer the front of the stage. Timbre of the reverb not quite right? Try moving both Source and Receiver to different parts of the hall. Aiming to create the effect of a source behind you? Rotate the Receiver to point the other way.

Other controls are mostly unfamiliar from existing reverbs, but the majority, including Apparent Source Width, early reflections Clarity and Listener Envelopment, are intuitive. Some, such as Crosstalk Cancellation and Purity, are less obvious, but there are not so many as to be bewildering, and you can just dive in and try them out. Two very important settings are Directivity and Speaker Setup: the Receiver is conceptualised as a virtual array of more or less directional loudspeakers around an idealised listener, and these two controls allow you to make rapid and radical adjustments to the stereo or surround presentation of the reverb.

One thing worth mentioning is that there are various ways of adjusting the apparent balance of reverb and direct sound. Once again, the design goal is for the controls to reflect real life, so although Inspired Acoustics have grudgingly included a conventional wet/dry mix control, they encourage you to keep this 100 percent wet and use the Direct/Reverberant slider instead, or vary the distance of the Sources from the Receiver.

Inspirational

We are used to thinking of convolution reverb as ‘realistic’, but a few minutes spent auditioning Inspirata reveals how one‑dimensional basic convolution is in comparison. This isn’t reverb that you paste onto a dry source like a sort of halo. It’s reverb that makes it sound as though you are in the room with your source. And as you drag the Sources and Receiver around, you quickly realise that having ‘only’ 37 spaces to work with is not the limitation you might think. There’s an infinite amount of variation within each space, even before you get down to altering parameters such as the tail envelope or the relative decay times of the 1 and 2 kHz bands.

And one of the many things Inspirata can do that most convolution reverbs can’t is respond smoothly to parameter changes. Drag a Source from left to right and it really sounds as though the instrument or voice is moving in the hall. Rotate the Receiver and it’s as though you’ve turned around to look at something behind you. Almost every parameter can be adjusted in real time without glitching — and, consequently, can be automated.

Automation in Inspirata is best written by putting your DAW into Write mode and moving parameters with the mouse, rather than by drawing in curves. This is partly because finding the right parameters among the enormously long list that it presents to your DAW can be hard, and partly because it’s otherwise possible to draw in position values that aren’t used in a particular preset. The obvious parameters to automate are the positions of Sources and Receiver, and the rotation of the latter. In practice this is mostly seamless, and the spaces have been sampled in sufficient detail that movement sounds continuous rather than stepped. I occasionally ran across noticeable tonal or amplitude transitions as the Source moved from one measurement point to another, but these were few and far between, and when you consider how many measurements the sound library contains, its general consistency is impressive.

Unsurprisingly, Inspirata is resource‑intensive. Quite apart from the need to find 100GB+ of drive space, it sometimes provoked warnings that physical memory was running low on my (32GB) machine, and it needs a relatively modern computer to be used to full effect. And with its focus on real spaces, it won’t replace plates, chambers, springs or abstract digital reverbs in rock and pop mixing.

The other side of that coin is that if you work in post‑production, or on acoustic music, film soundtracks and so on, there is quite simply nothing else that can do what Inspirata does. If your need is to place sources within an acoustic environment in an utterly convincing fashion, and especially if you need to be able to move them around in real time, this will be like manna from heaven. For turning a collection of sampled instruments into an orchestra, for embedding a close‑miked singer into a real hall, or for creating audio and video drama that requires no suspension of disbelief, this represents a real step forward. In its Professional and Immersive versions, in fact, Inspirata isn’t ‘just’ a reverb plug‑in at all. It’s a surround panner and immersive audio engine along the lines of Flux’s Spat Revolution, except that the environment in which we’re immersed is not synthesized but real.

Pros

- Extremely realistic recreations of real spaces, in which sources and listener can be moved about at will.

- Almost all parameters can be automated smoothly.

- Allows reverb to be shaped using parameters familiar from real‑world acoustics.

Cons

- A huge download, and the plug‑in itself is quite resource‑intensive.

- Position automation throws up occasional small discontinuities.

Summary

Inspirata is a quite extraordinary plug‑in. Drawing on exhaustive measurements of real spaces, Inspired Acoustics have created a truly immersive convolution‑based reverb that allows sources to be freely positioned and moved within a recreated real‑world space.

Information

Inspirata Immersive €1599; Professional €499; Personal €169; Lite €79. Prices include VAT.